Clear Sky Science

Brain-inspired warm-up training with random noise for uncertainty calibration

Why smarter AI needs to know when it might be wrong

Modern artificial intelligence systems can recognize images, drive cars and converse in natural language with impressive skill. Yet they often have a dangerous blind spot: they may be completely wrong while being extremely sure of themselves. This mismatch between confidence and correctness can lead to bad medical decisions, unsafe self-driving behavior or chatbots that convincingly invent facts. The paper "Brain-inspired warm-up training with random noise for uncertainty calibration" explores a surprisingly simple, biologically inspired way to teach AI models a crucial human-like habit: to express uncertainty when they truly do not know.

When confidence and reality drift apart

The authors begin by examining how today’s neural networks report confidence. A classifier trained on natural images not only predicts what it sees but also reports a probability that its answer is correct. Ideally, if a model says it is 80% confident, it should be right about 80% of the time. In practice, the researchers show that as networks grow deeper and are trained on limited data, their confidence becomes inflated: they frequently assign very high certainty to wrong answers. The problem gets even worse when the model encounters images drawn from a different distribution than its training data (so-called out-of-distribution data), where it still acts overly sure instead of admitting uncertainty.
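Calibration in this sense is commonly summarized by the expected calibration error (ECE): predictions are grouped into confidence bins, and the gap between average confidence and accuracy in each bin is averaged, weighted by how many predictions fall in that bin. The sketch below is a minimal NumPy version assuming ten equal-width bins; the function name and binning choice are illustrative, and the article does not say which calibration metric or settings the study used.

```python
import numpy as np

def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average |mean confidence - accuracy| over equal-width confidence bins.

    Illustrative sketch; the ten-bin setting is an assumption, not the study's.
    """
    conf = np.asarray(confidences, dtype=float)
    hit = np.asarray(correct, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    ece = 0.0
    for lo, hi in zip(edges[:-1], edges[1:]):
        # Bins are (lo, hi]; the first bin also catches a confidence of exactly 0.
        in_bin = (conf > lo) & (conf <= hi)
        if lo == 0.0:
            in_bin |= conf == 0.0
        if in_bin.any():
            gap = abs(conf[in_bin].mean() - hit[in_bin].mean())
            ece += in_bin.mean() * gap  # weight by the fraction of samples in this bin
    return float(ece)

# Well calibrated: says 80%, right 8 times out of 10.
print(expected_calibration_error([0.8] * 10, [1] * 8 + [0] * 2))
# Overconfident: says 95%, right only half the time.
print(expected_calibration_error([0.95] * 10, [1] * 5 + [0] * 5))
```

The first case scores an ECE of essentially zero, while the overconfident case scores about 0.45, the size of the gap between stated confidence and actual accuracy.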

A warm-up borrowed from the developing brain

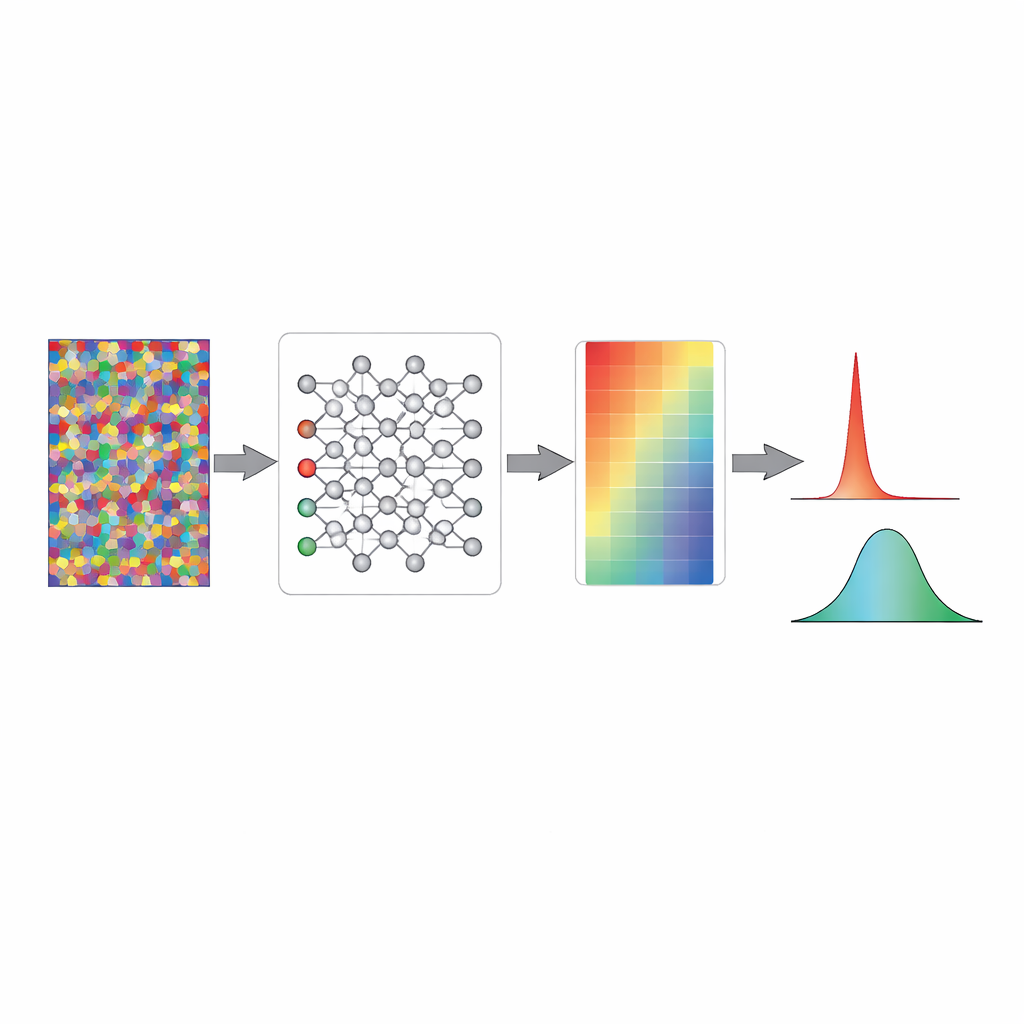

To tackle this, the study looks to biology. Before birth, animal brains are already active, firing spontaneous patterns of electrical activity long before meaningful sights and sounds arrive. Neuroscientists think this early "noise" helps wire up circuits so they are ready for real sensory experience. Inspired by this, the authors propose a warm-up phase for artificial networks: before seeing any real images or labels, the model is briefly trained on completely random input patterns paired with random, meaningless labels. At first glance, this seems pointless, since the network cannot learn anything useful from pure noise. Yet during this short phase, its internal responses settle into a state where its output probabilities are spread evenly across all classes, corresponding to chance level rather than overconfidence.
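The warm-up phase can be sketched in a few lines. The example below is a deliberately tiny stand-in rather than the authors' setup: a single linear softmax layer (hypothetical `noise_warmup`, 32-dimensional inputs, 10 classes) is initialized with an assumed large weight scale so that it starts out overconfident, then trained by plain gradient descent on Gaussian-noise inputs paired with uniformly random labels. Because the labels carry no information about the inputs, the cross-entropy is lowest when every class receives equal probability, so the average top-class probability on fresh noise should fall from near certainty toward chance (0.1).

```python
import numpy as np

def softmax(z):
    # Subtract the row-wise max for numerical stability before exponentiating.
    z = z - z.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def mean_max_confidence(W, rng, n=2000):
    # Average top-class probability on fresh Gaussian-noise inputs.
    x = rng.standard_normal((n, W.shape[0]))
    return softmax(x @ W).max(axis=1).mean()

def noise_warmup(W, rng, steps=500, batch=2000, lr=1.0):
    """Hypothetical warm-up: gradient descent on cross-entropy where inputs
    are Gaussian noise and labels are drawn uniformly at random."""
    d, k = W.shape
    W = W.copy()
    for _ in range(steps):
        x = rng.standard_normal((batch, d))            # random input patterns
        y = np.eye(k)[rng.integers(0, k, size=batch)]  # random one-hot labels
        p = softmax(x @ W)
        grad = x.T @ (p - y) / batch  # gradient of mean cross-entropy for a linear softmax layer
        W -= lr * grad
    return W

rng = np.random.default_rng(0)
d, k = 32, 10
W0 = rng.normal(0.0, 2.0, size=(d, k))  # assumed large init scale -> wide logit swings
W1 = noise_warmup(W0, rng)
before = mean_max_confidence(W0, rng)
after = mean_max_confidence(W1, rng)
print(f"mean top-class probability: before {before:.2f}, after {after:.2f}, chance {1 / k:.2f}")
```

In this toy model the weights shrink substantially, so it illustrates only the drift toward chance-level outputs, not the study's observation that the weights of full-size networks barely change.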

How random noise makes confidence honest

Using simplified toy models and full image classifiers, the researchers show what this warm-up does under the hood. In a standard randomly initialized network, the raw scores (logits) passed to the final softmax often vary widely in magnitude, and the softmax converts such spread-out scores into extreme probabilities near zero or one. This means an untrained model already behaves as if it is very sure, even though it knows nothing. Training on random noise gently rescales those scores, pulling them into a range where the output probabilities cluster near the chance level. The weights themselves barely change; instead, the overall pattern of activity is "pre-calibrated" so that, before real learning starts, the model naturally expresses maximum uncertainty about unfamiliar inputs.
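The effect of rescaling can be seen directly in the softmax. In the sketch below, one arbitrary logit pattern (a made-up five-class example) is multiplied by different scale factors: a large scale yields a near one-hot output, while a small scale yields probabilities close to uniform, with entropy approaching its maximum of ln 5. The scale factors stand in, by assumption, for the shrinkage the noise warm-up produces; they are not values from the study.

```python
import numpy as np

def softmax(z):
    z = np.asarray(z, dtype=float)
    e = np.exp(z - z.max())  # shift by the max for numerical stability
    return e / e.sum()

def entropy(p):
    # Shannon entropy in nats; zero-probability entries contribute nothing.
    p = p[p > 0]
    return float(-(p * np.log(p)).sum())

pattern = np.array([1.0, -0.5, 0.3, -1.2, 0.8])  # arbitrary illustrative logit pattern
for scale in (20.0, 1.0, 0.05):
    p = softmax(scale * pattern)
    print(f"scale {scale:>5}: max prob {p.max():.3f}, "
          f"entropy {entropy(p):.3f} (uniform = {np.log(len(p)):.3f})")
```

The ranking of the classes never changes across scales; only the sharpness of the probabilities does, which is why shrinking the logits can make a model less confident without altering which class it would pick.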

Better behavior on real and unknown data

Once this brain-inspired warm-up is complete, the same networks are trained on real image datasets using standard methods. Across many architectures—from simple feed-forward networks to popular ResNet, DenseNet and vision transformer models—the noise-trained networks maintain a closer match between confidence and actual accuracy throughout learning. They perform as well as or slightly better than conventionally initialized networks on standard test sets, but their confidence scores are much more trustworthy. Crucially, when shown truly unfamiliar images, these pre-calibrated models assign low confidence that hovers near chance, instead of confidently mislabeling them. This simple property dramatically improves their ability to detect when an input is "unknown"—a key requirement for safe deployment in the wild.
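One common way to turn confidence into an unknown-input detector is to threshold the maximum softmax probability (MSP) and to measure how well it separates familiar from unfamiliar inputs with the area under the ROC curve (AUROC). The sketch below assumes this MSP baseline, which the article does not name as the study's detector, and uses synthetic logits (hypothetical values, not results from the paper): in-distribution inputs have one clearly dominant class, while out-of-distribution inputs get near-flat logits, as a pre-calibrated model is described as producing.

```python
import numpy as np

def max_softmax_probability(logits):
    # Row-wise softmax (max-shifted for stability), then the top-class probability.
    z = logits - logits.max(axis=1, keepdims=True)
    p = np.exp(z)
    p /= p.sum(axis=1, keepdims=True)
    return p.max(axis=1)

def flag_unknown(logits, threshold):
    # True where the top-class probability falls below the (assumed) threshold.
    return max_softmax_probability(logits) < threshold

def auroc(scores_in, scores_out):
    # Probability that a random in-distribution score beats a random OOD score,
    # with ties counted as half: the area under the ROC curve.
    s_in = np.asarray(scores_in)[:, None]
    s_out = np.asarray(scores_out)[None, :]
    return float((s_in > s_out).mean() + 0.5 * (s_in == s_out).mean())

rng = np.random.default_rng(0)
k = 10
logits_in = rng.normal(0.0, 0.3, size=(500, k))
logits_in[:, 0] += 4.0                             # one dominant class per familiar input
logits_out = rng.normal(0.0, 0.3, size=(500, k))   # near-flat logits on unfamiliar inputs

msp_in = max_softmax_probability(logits_in)
msp_out = max_softmax_probability(logits_out)
print(f"mean MSP in-dist {msp_in.mean():.2f}, OOD {msp_out.mean():.2f}")
print(f"AUROC {auroc(msp_in, msp_out):.3f}")
print(f"OOD flagged at threshold 0.5: {flag_unknown(logits_out, 0.5).mean():.0%}")
```

If a model instead produced confident, peaked logits on unfamiliar inputs, their MSP scores would overlap with those of familiar inputs and the AUROC would drop, which is the failure mode the warm-up is meant to avoid.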

Foundations for more trustworthy AI

In plain terms, the study shows that a very short, inexpensive warm-up on random noise can teach neural networks a kind of humility from the outset. Rather than starting life as overconfident guessers, models begin in a cautious state where they admit they do not know, and then adjust their confidence upward only as they accumulate real evidence. This approach avoids extra post-processing steps, works across many model types and data sizes, and echoes how biological brains seem to prepare themselves before birth. If adopted widely, such brain-inspired warm-up training could help AI systems become not just accurate, but also appropriately aware of their own limits—an essential step toward making them safer and more reliable partners in everyday decision-making.

Citation: Cheon, J., Paik, SB. Brain-inspired warm-up training with random noise for uncertainty calibration. Nat Mach Intell 8, 602–613 (2026). https://doi.org/10.1038/s42256-026-01215-x

Keywords: uncertainty calibration, neural networks, random noise warm-up, out-of-distribution detection, brain-inspired AI