Clear Sky Science

Computational framework to predict and shape human–machine interactions in closed-loop, co-adaptive neural interfaces

Teaching Machines to Learn With Us

As brain–computer interfaces and advanced prosthetic limbs move from the lab to everyday life, a central question emerges: how can we design devices that learn from us at the same time we are learning to use them? This study tackles that challenge by building a mathematical and experimental framework for "co-adaptive" neural interfaces—systems in which both the human user and the decoding algorithm continually adjust to each other so that control feels smoother, more natural, and more reliable over time.

A New Kind of Human–Machine Partnership

Many neural interfaces work by translating high-dimensional biological signals, such as muscle or brain activity, into simple commands that can move a cursor or robotic limb. Traditionally, designers have tried to optimize either the human side (training the user to adapt to a fixed decoder) or the machine side (letting the decoder update while assuming a mostly fixed user). In reality, both sides are constantly learning. This two-learner setup can unlock better performance and personalization, but it is also harder to design: if the algorithm adapts too quickly or in the wrong way, it can confuse the user rather than help. The authors bring together tools from control theory and game theory to describe, predict, and ultimately shape these intertwined learning processes.

Building a Safe Testbed for Co-Adaptive Interfaces

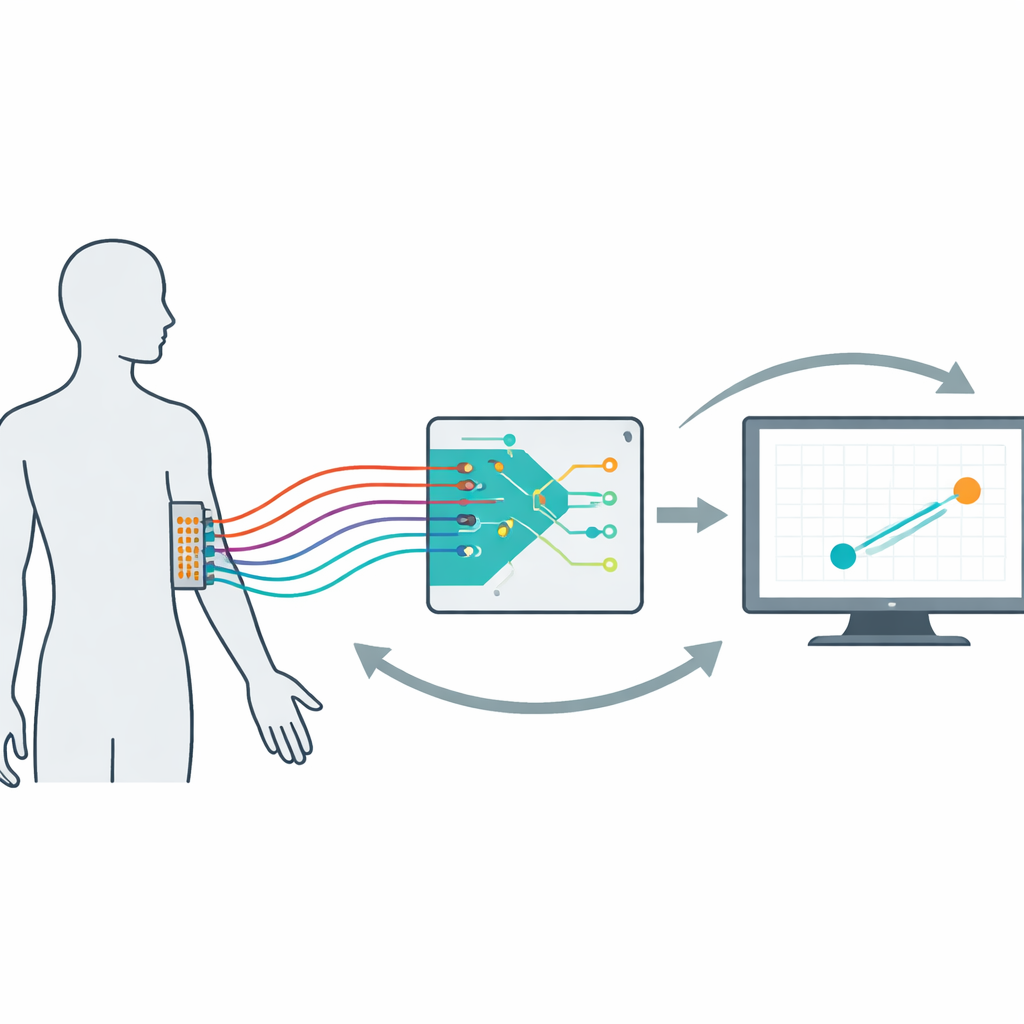

To study these dynamics in a controlled way, the researchers created a non-invasive myoelectric interface. Fourteen volunteers wore a 64-electrode grid on their dominant forearm. Their muscle activity drove a computer cursor that had to follow a wandering target on the screen. Under the hood, a decoder converted the muscle signals into cursor velocity and updated itself every 20 seconds based on recent performance. Across 16 five-minute trials per person, the team systematically varied how fast the decoder learned, how strongly it was penalized for using large signal gains (its "effort"), and how it was initialized. By examining both behavior and muscle patterns, they confirmed that users did not merely wait for the algorithm to improve; they actively changed how they used their muscles within and across trials, creating a genuine co-adaptive human–machine loop.
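The summary above does not spell out the decoder's update rule, so the Python sketch below is only an illustration under stated assumptions: a linear decoder, a ridge-style penalty standing in for the "effort" term, and a `retain` weight standing in for the learning rate (values near 1 mean slow learning). The function names, array shapes, and synthetic data are all hypothetical.

```python
import numpy as np

def ridge_fit(emg, intended_vel, penalty):
    """Fit a linear decoder D minimizing
    ||intended_vel - D @ emg||^2 + penalty * ||D||^2.

    emg: (n_channels, n_samples) muscle features
    intended_vel: (2, n_samples) velocity the user presumably wanted
    """
    n_channels = emg.shape[0]
    gram = emg @ emg.T + penalty * np.eye(n_channels)
    # Closed form: D = V X^T (X X^T + penalty * I)^-1, with gram symmetric.
    return np.linalg.solve(gram, emg @ intended_vel.T).T

def update_decoder(decoder, emg, intended_vel, penalty, retain):
    """Blend the previous decoder with a fresh fit on the latest batch."""
    fitted = ridge_fit(emg, intended_vel, penalty)
    return retain * decoder + (1.0 - retain) * fitted

# Synthetic stand-in for one 20-second batch (assumed sizes, made-up data).
rng = np.random.default_rng(0)
emg_batch = np.abs(rng.standard_normal((64, 400)))
true_map = rng.standard_normal((2, 64)) * 0.1
vel_batch = true_map @ emg_batch

decoder = np.zeros((2, 64))
slow = update_decoder(decoder, emg_batch, vel_batch, penalty=1.0, retain=0.9)
fast = update_decoder(decoder, emg_batch, vel_batch, penalty=1.0, retain=0.1)
print(np.linalg.norm(slow), np.linalg.norm(fast))
```

In this sketch, a larger `penalty` shrinks the decoder gains, and a larger `retain` makes each 20-second update move the decoder less. These two parameters loosely correspond to the effort penalty and learning rate that the study varied.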

Using Control Theory to See Inside the Loop

The next step was to turn this messy interaction into a model that could be measured and tested. Using ideas from control theory, the authors estimated a compact mathematical description of each user’s strategy—an "encoder" that maps target and cursor information to patterns of muscle activity. This encoder could be separated into a predictive, feedforward part and a corrective, feedback part. By combining the estimated encoder with the known decoder, the team could assess whether the whole system was on track for accurate and stable control. They found that, over time, the combined user–decoder pair tended to move toward values predicted to give good tracking and stability, even though neither the user nor the decoder ever stopped changing completely. Users also showed signs of longer-term learning, with their muscle mappings shifting less from trial to trial as they became more proficient.
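To make the feedforward and feedback split concrete, here is a toy closed-loop simulation in Python. It is not the authors' model. It assumes a cursor whose velocity is a linear readout of muscle activity, a user whose activity is a feedforward term (driven by target velocity) plus a feedback term (driven by position error), and a made-up two-sinusoid target. All names and numbers are hypothetical.

```python
import numpy as np

def simulate_tracking(decoder, feedforward, feedback, dt=0.01, duration=20.0):
    """Return mean squared tracking error for a toy user-decoder loop.

    decoder:     (n_channels,) maps muscle activity to cursor velocity
    feedforward: (n_channels,) user's response to target velocity
    feedback:    (n_channels,) user's response to position error
    """
    t = np.arange(0.0, duration, dt)
    target = np.sin(0.5 * t) + 0.5 * np.sin(1.3 * t)
    target_vel = 0.5 * np.cos(0.5 * t) + 0.65 * np.cos(1.3 * t)
    cursor = np.zeros_like(t)
    for k in range(len(t) - 1):
        # Encoder: predictive term plus corrective term.
        emg = feedforward * target_vel[k] + feedback * (target[k] - cursor[k])
        # Decoder: muscle activity becomes cursor velocity (Euler step).
        cursor[k + 1] = cursor[k] + dt * float(decoder @ emg)
    return float(np.mean((target - cursor) ** 2))

decoder = np.array([0.5, 0.5])
matched = simulate_tracking(decoder, np.array([1.0, 1.0]), np.array([2.0, 2.0]))
mismatched = simulate_tracking(decoder, np.array([0.5, 0.5]), np.array([2.0, 2.0]))
unstable = simulate_tracking(decoder, np.array([1.0, 1.0]), np.array([-2.0, -2.0]))
print(matched, mismatched, unstable)
```

Under these assumed dynamics, the error obeys a simple first-order equation. Tracking is close to exact when `decoder @ feedforward` equals 1, and errors die out when `decoder @ feedback` is positive and the time step is small. This is one way to picture how a combined encoder and decoder pair can be checked for accuracy and stability even though neither half is judged alone.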

Game Theory Reveals How Learning Rules Shape Behavior

To go beyond description and actually predict how design choices would affect co-adaptation, the authors turned to game theory, which studies how multiple decision-makers interact when each is trying to minimize its own cost. They modeled the user and decoder as two "players" who both care about reducing tracking error but also pay a price for effort—muscle activation for the user and large gains for the decoder. In this framework, the joint system can settle into one of several stable states, or stationary points, depending on learning rates, effort penalties, and starting conditions. The model made concrete predictions: decoder learning rate should strongly affect how quickly and how well the system converges; adjusting the decoder’s effort penalty should shift how much effort the user must invest without necessarily changing accuracy; and initial decoder settings could subtly bias the user’s eventual strategy.
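The two-player picture can be illustrated with a deliberately tiny game, sketched below in Python. This is an assumption-laden toy, not the model from the paper: the user and decoder each control a single scalar gain, tracking error is taken to be `1 - decoder_gain * user_gain`, and each player takes gradient steps on its own cost (squared error plus a penalty on its own gain). All names and values are hypothetical.

```python
def gradient_play(user_gain, decoder_gain, user_penalty, decoder_penalty,
                  user_rate=0.05, decoder_rate=0.05, steps=10000):
    """Simultaneous gradient descent by two players on their own costs.

    user cost:    (1 - d*m)^2 + user_penalty * m^2
    decoder cost: (1 - d*m)^2 + decoder_penalty * d^2
    """
    for _ in range(steps):
        error = 1.0 - decoder_gain * user_gain
        # Each player differentiates only its own cost with respect to its own gain.
        user_grad = -2.0 * decoder_gain * error + 2.0 * user_penalty * user_gain
        decoder_grad = -2.0 * user_gain * error + 2.0 * decoder_penalty * decoder_gain
        user_gain -= user_rate * user_grad
        decoder_gain -= decoder_rate * decoder_grad
    return user_gain, decoder_gain

baseline = gradient_play(0.1, 0.1, user_penalty=0.01, decoder_penalty=0.01)
penalized = gradient_play(0.1, 0.1, user_penalty=0.01, decoder_penalty=0.04)
mirrored = gradient_play(-0.1, -0.1, user_penalty=0.01, decoder_penalty=0.01)
print(baseline, penalized, mirrored)
```

Setting both gradients to zero in this toy gives, at the positive stationary point, a gain product of `1 - sqrt(user_penalty * decoder_penalty)` and a user-to-decoder gain ratio of `sqrt(decoder_penalty / user_penalty)`. Quadrupling the decoder penalty therefore doubles that ratio, shifting effort onto the user, while the product (a proxy for accuracy) moves only from 0.99 to 0.98. Starting from negative gains leads to a mirror-image stationary point, a simple example of initialization selecting among several possible outcomes.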

Testing How Algorithms Steer Human Learning

Experiments largely confirmed these predictions. When the decoder learned slowly, users improved more within a trial, and the combined encoder–decoder pair moved closer to the theoretically ideal regime for accurate and stable cursor control. When the decoder adapted too quickly, performance suffered and users changed their muscle strategies less, suggesting that the machine was moving faster than people could follow. Changing the decoder’s effort penalty provided another lever: higher penalties pushed the decoder to work less hard, which in turn led many users to increase their own muscle effort to maintain accuracy. Interestingly, participants differed in how they balanced effort and cursor speed—some chose to work harder to keep the cursor fast, while others accepted slower motion to save effort—hinting at individual preferences that future interfaces could personalize.

What This Means for Future Neural Interfaces

In plain terms, this work shows that the training rules built into neural interface algorithms do more than clean up noisy signals; they actively shape how people learn to use the device. By combining rigorous modeling with human experiments, the authors provide a toolkit for choosing decoder learning rates, effort penalties, and initializations in a principled way, rather than by trial and error. Their framework could guide the design of next-generation prosthetics, rehabilitation tools, and brain–computer interfaces that are not just accurate, but also stable, comfortable, and tailored to each user’s learning style.

Citation: Madduri, M.M., Yamagami, M., Li, S.J. et al. Computational framework to predict and shape human–machine interactions in closed-loop, co-adaptive neural interfaces. Nat Mach Intell 8, 372–387 (2026). https://doi.org/10.1038/s42256-026-01194-z

Keywords: neural interfaces, human–machine interaction, co-adaptive control, myoelectric cursor control, brain–computer interface design