Clear Sky Science

Predictive robot eyes shape visual attention, performance, and trust in interaction with an industrial CoBot

Why Robot Eyes Matter at Work

In many modern factories, people now share their workstations with collaborative robots, or “CoBots.” These machines are designed to work side by side with humans, handing over parts or tools instead of sitting in cages behind safety fences. But for this partnership to feel safe and efficient, workers must be able to tell what the robot will do next. This study asks a simple question with big practical consequences: can a robot’s “eyes” or simple arrows help people quickly understand its next move, and what happens to performance and trust when those visual hints occasionally turn out to be wrong?

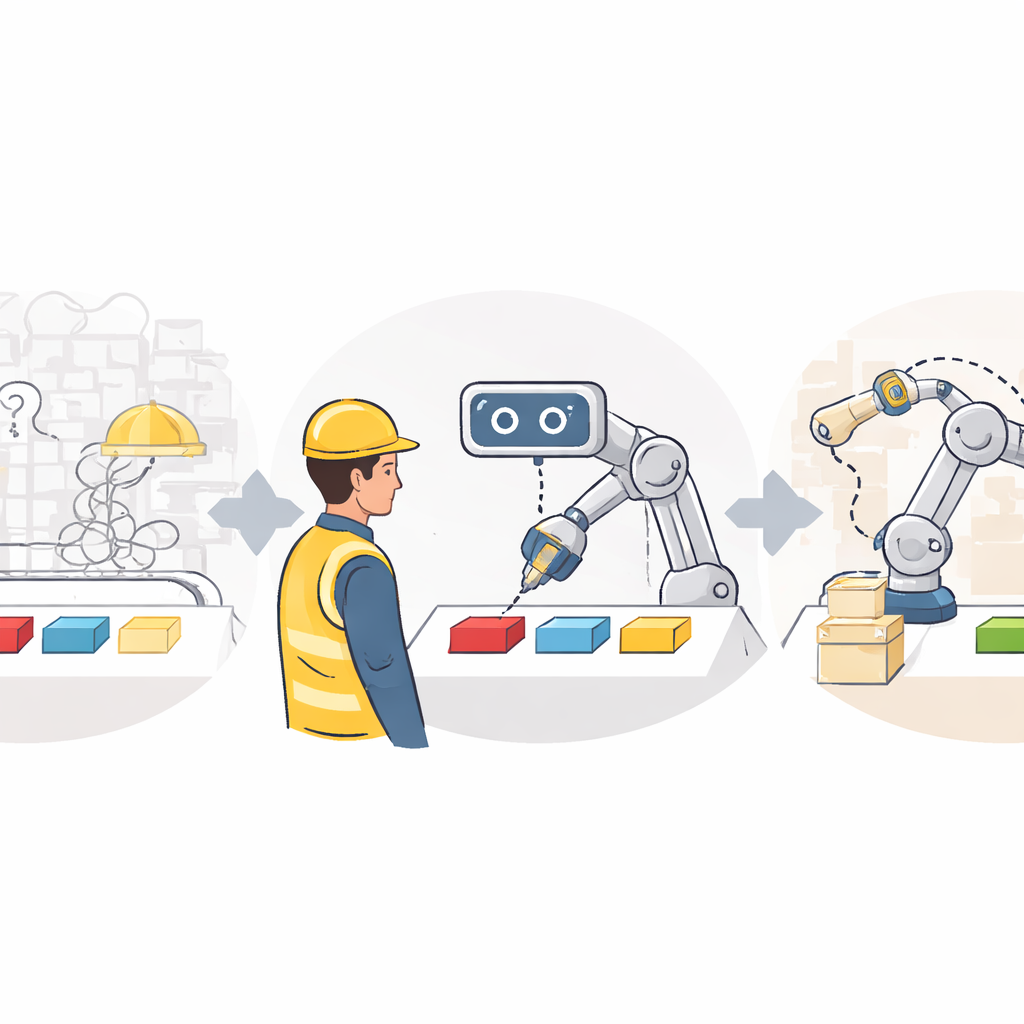

Setting Up a Shared Robot Workspace

The researchers brought volunteers into a lab with an industrial collaborative robot arm called Sawyer. Between the person and the robot lay a table with six colored squares that served as possible movement targets for the arm. On a tablet in front of them, participants saw the same six colors and had to tap the square they thought the robot would reach for next, as quickly and accurately as possible. Right after each prediction, they completed a brief, timed memory-and-search task on the tablet. The faster they guessed the robot’s move, the more time they had left for this second task, mimicking the multitasking demands of real factory work.

Robot Eyes, Arrows, or No Hints at All

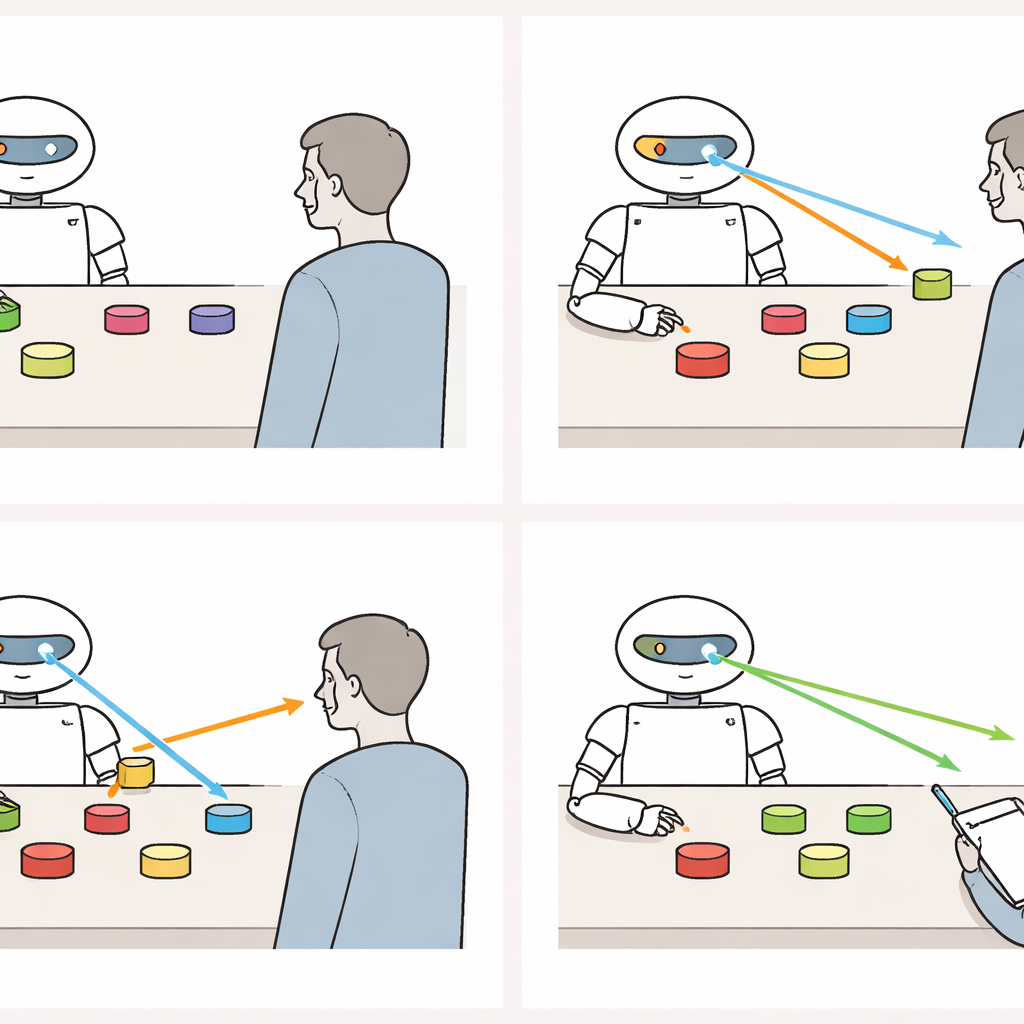

Across two separate but nearly identical studies, people were randomly assigned to interact with one of three robot display types: a robot with abstract eye-like shapes on its screen, a robot that used directional arrows, or a robot with a blank screen offering no visual hints. In the cue conditions, the eyes or arrows turned toward the correct colored square one second before the arm started to move and then held that direction. Participants were clearly told that these cues were predictive, and a demonstration phase showed several examples before the real trials began. In the middle block of trials, the researchers secretly introduced two “error” events in the cue conditions, where the eyes or arrows pointed to one square but the arm moved to a different one. Eye-tracking glasses recorded where and when participants looked during each trial.

Guiding Attention and Speeding Up Decisions

When the robot’s cues were reliable, the eye-like display had a clear advantage. Participants who saw robot eyes shifted their gaze to the correct target more quickly than those who had no cues, and generally faster than those who saw arrows. They also confirmed their predictions on the tablet sooner, sometimes nearly a full second earlier than people working with a cue-less robot. Arrows helped too, but their benefits were smaller and less consistent across the two studies. Importantly, accuracy stayed high in all conditions: using the cues let people decide faster without making more mistakes. The eye-tracking data showed that participants really did look at the robot’s display in the cue conditions, and those who looked at it more often tended to predict the robot’s moves more quickly and perform better on the demanding memory task that followed.

What Happens When the Robot Misleads You

The picture changed as soon as the robot broke its promise. When the eyes or arrows pointed one way and the arm reached somewhere else, participants slowed down. In the trials that followed, they took longer to fixate on the target and longer to make their predictions, erasing the earlier speed advantage over the no-cue group. Their gaze behavior shifted as well: people reduced how much they looked at the robot’s display and instead watched the moving robot arm more closely and for longer, as if double-checking its actions. Despite this disruption, the damage was not permanent. In the final block of error-free trials, attention and prediction speed partially recovered, and the eye condition again tended to outperform the no-cue condition, though not always as strongly as before the errors.

Trusting a Helpful but Fallible Partner

Alongside performance, the researchers measured how much people reported trusting the robot at four points: before any interaction, after an initial stretch of flawless behavior, immediately after the cue errors, and after a final error-free block. Trust followed a familiar wave. It rose somewhat with smooth, predictable interaction, dipped sharply when the cues misled the user, and then climbed again once the robot behaved reliably. These rises and falls appeared only in the conditions with predictive cues, where people actually had expectations to be violated; trust in the no-cue robot stayed comparatively steady because that robot never gave misleading signals. Interestingly, workload ratings did not show a clear pattern, suggesting that adding predictive eyes or arrows did not reliably make the task feel harder or easier.

What This Means for People Working with Robots

To a lay observer, the takeaway is straightforward: giving a factory robot expressive “eyes” or simple arrows can make it much easier to see what it will do next, helping people react more quickly without sacrificing accuracy. These gains matter in busy, noisy workplaces where spoken instructions are limited. But the study also shows a trade-off. When the robot’s visual hints occasionally point in the wrong direction, people slow down, look away from the display, and trust the system less, at least for a while. With continued reliable behavior, both performance and trust can rebound. For designers of future human-robot workstations, the message is that predictive visual cues are powerful tools for attention and coordination, but their value depends critically on keeping them honest, explaining their meaning clearly, and planning for how to recover when rare mistakes inevitably occur.

Citation: Naendrup-Poell, L., Onnasch, L. Predictive robot eyes shape visual attention, performance, and trust in interaction with an industrial CoBot. Sci Rep 16, 14171 (2026). https://doi.org/10.1038/s41598-026-50476-4

Keywords: human-robot collaboration, visual attention, predictive cues, trust in automation, industrial cobots