Clear Sky Science

Review of large YOLOv8 and RT-DETR energy efficiency on edge devices for real-time detection

Smart cameras at the edge

From delivery drones to traffic-monitoring cameras, more and more machines need to recognize people and objects on their own, far away from power-hungry data centers. This paper asks a practical question behind that trend: can today’s large, high-accuracy object detection models run fast and efficiently on tiny computers like a Raspberry Pi or compact AI boards used in robots, without draining their batteries?

Two rival brains for spotting objects

The authors focus on two modern object detectors that have become workhorses in computer vision. One, called YOLOv8, is a streamlined evolution of classic convolutional neural networks, long favored for their mix of speed and accuracy. The other, RT-DETR, blends these convolutions with transformer blocks, a newer kind of network borrowed from language models that excels at capturing long-range patterns. The study uses the large versions of both models, roughly comparable in size, and tests how well they can spot everyday objects in the popular COCO image collection.

Tiny computers, many software paths

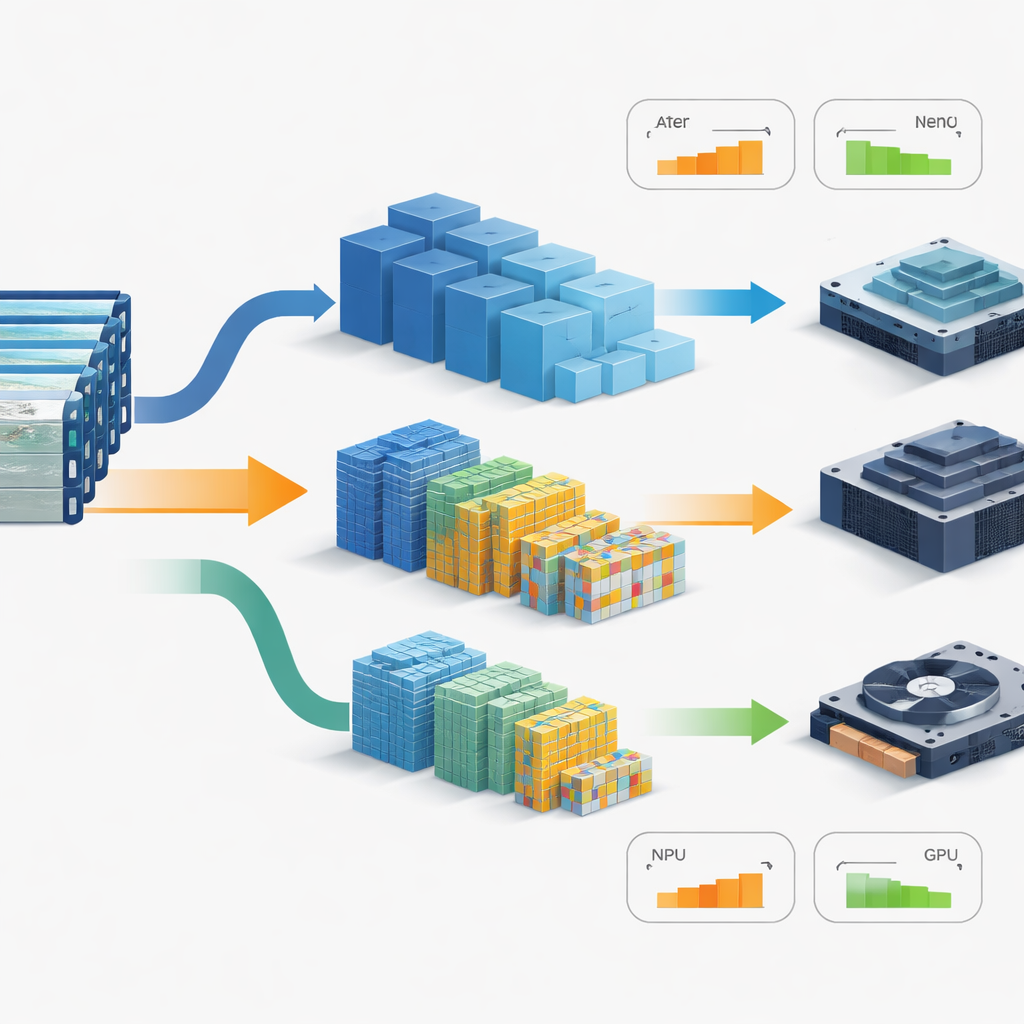

Instead of running these models on a big desktop GPU, the team turns to two edge platforms that resemble the brains of drones and small robots: a Raspberry Pi 5 and an Nvidia Jetson Orin NX. On the Raspberry Pi, they test plain CPU execution and add-on neural chips such as Google’s Edge TPU and the Hailo-8-based Raspberry Pi AI HAT+. On the Jetson board, they lean on its built-in GPU. Each model is run through multiple software engines, from research-oriented frameworks like PyTorch to highly tuned deployment tools like TensorRT, NCNN, MNN, Paddle Lite, and TensorFlow Lite, to see how software choices reshape speed, power draw, and accuracy.

Measuring speed, power, and precision together

To mimic real-world use, the authors do not just time the core network. They feed in a full high-definition video stream and measure the whole pipeline: decoding the frames, preparing them for the model, running detection, and tidying up the results. They define “real-time” as at least 25 processed frames per second, the rate of standard video. While the models’ raw detection quality stays high across many runtimes, the overall frame rate and energy use vary widely. On the Raspberry Pi, running large models on the CPU alone leads to multi-second delays per frame and extremely poor energy efficiency. Dedicated neural chips change the picture: the Hailo-8 path gives YOLOv8 both high energy efficiency and strong accuracy, while the Edge TPU runs quickly but forces lower input resolution and aggressive quantization (rounding numbers to low precision), which cuts detection quality far below practical levels.
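The difference between timing only the network and timing the whole pipeline can be made concrete with a small sketch. It is not the authors' benchmark code: the stage functions passed in (`decode`, `preprocess`, `infer`, `postprocess`) are hypothetical placeholders, and only the four-stage split and the 25 frames-per-second threshold come from the text.

```python
import time

REAL_TIME_FPS = 25.0  # real-time threshold stated in the article


def run_pipeline(frames, decode, preprocess, infer, postprocess):
    """Run every frame through all four stages and time each stage.

    The four callables are hypothetical stand-ins for a real video decoder,
    input preparation, detector, and result clean-up.
    Returns (seconds spent per stage, number of frames processed).
    """
    stages = (
        ("decode", decode),
        ("preprocess", preprocess),
        ("infer", infer),
        ("postprocess", postprocess),
    )
    totals = {name: 0.0 for name, _ in stages}
    count = 0
    for item in frames:
        for name, stage in stages:
            start = time.perf_counter()
            item = stage(item)  # each stage consumes the previous stage's output
            totals[name] += time.perf_counter() - start
        count += 1
    return totals, count


def end_to_end_fps(totals, count):
    """Frames per second over the full pipeline, not just inference."""
    total_seconds = sum(totals.values())
    return count / total_seconds if total_seconds > 0 else float("inf")


def is_real_time(fps, threshold=REAL_TIME_FPS):
    """True when the pipeline meets or exceeds the real-time threshold."""
    return fps >= threshold
```

Calling `end_to_end_fps` on the returned totals gives the figure the article treats as decisive. Dividing the frame count by `totals["infer"]` alone would give the more flattering inference-only rate.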

GPU tuning flips the winner

The Jetson Orin NX, with its more powerful GPU, allows a closer look at the tug-of-war between model design and deployment software. Here, TensorRT, a toolchain that compiles and compresses models for Nvidia hardware, shrinks inference times dramatically and boosts frames per second per watt for both detectors. Under the default research setup, YOLOv8 appears faster. After full TensorRT optimization and low-precision arithmetic are applied, RT-DETR catches up and even overtakes YOLOv8 in raw throughput for large models. Yet when the authors normalize the results by each model’s nominal operation count (the amount of math it performs on paper), YOLOv8 still uses less time and less energy per unit of nominal work, while RT-DETR proves more sensitive to conversion steps between toolchains.
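The efficiency figures mentioned here reduce to a few ratios, sketched below. The function names are illustrative and no measured values from the paper are reproduced. The sketch assumes average power is measured in watts over the same interval as the frame rate, and that the nominal operation count is expressed in GFLOPs per frame.

```python
def fps_per_watt(fps, avg_power_w):
    """Throughput per unit of power: frames per second per watt."""
    return fps / avg_power_w


def energy_per_frame_j(fps, avg_power_w):
    """Energy per processed frame in joules (watts are joules per second)."""
    return avg_power_w / fps


def per_nominal_gflop(latency_s, energy_j, nominal_gflops):
    """Normalize per-frame latency and energy by the model's nominal work.

    Returns (seconds per GFLOP, joules per GFLOP). A model can win on raw
    throughput and still lose on these ratios if its nominal operation
    count is lower.
    """
    return latency_s / nominal_gflops, energy_j / nominal_gflops
```

This makes the two rankings in the paragraph easy to tell apart: raw throughput compares `fps` directly, while the normalized comparison divides each model's latency and energy by its own nominal workload.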

Why raw numbers do not tell the whole story

To unpack these findings, the paper separates three ingredients of performance: the basic amount of computation each model seems to require on paper, the way its building blocks actually move data through memory, and the overhead added by the runtime software. Transformers like those in RT-DETR rely on attention layers that connect many image locations to one another, producing large intermediate data structures that can tax memory and scheduling even if their nominal operation counts look modest. Convolution-heavy designs like YOLOv8, by contrast, lend themselves more easily to fused kernels and local data reuse on embedded GPUs. The authors also show that part of the accuracy loss blamed on low-precision arithmetic actually arises earlier, during conversion from the original training framework into a hardware-optimized engine.
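A rough calculation shows why attention can strain memory even when operation counts look modest. The sketch assumes plain global self-attention over a feature map with an explicitly stored score matrix, which is a simplification and not RT-DETR's actual layer configuration. The head count, channel count, and 16-bit storage are illustrative assumptions.

```python
def attention_scores_bytes(height, width, heads=8, bytes_per_value=2):
    """Size of the attention score matrices for one image.

    Every location attends to every other location, so each head stores
    tokens * tokens values: growth is quadratic in the number of locations.
    """
    tokens = height * width
    return heads * tokens * tokens * bytes_per_value


def conv_output_bytes(height, width, channels=256, bytes_per_value=2):
    """Size of one convolution output map: linear in the number of locations."""
    return height * width * channels * bytes_per_value


small = attention_scores_bytes(20, 20)   # 400 locations
large = attention_scores_bytes(40, 40)   # 1600 locations
print(large // small)                    # 16: four times the locations, 16 times the memory
print(conv_output_bytes(40, 40) // conv_output_bytes(20, 20))  # 4
```

Under these assumptions, doubling the feature-map side length quadruples the convolution output but multiplies the attention scores by sixteen. That matches the kind of memory pressure the paragraph describes.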

What this means for real-world devices

In the end, none of the large-model setups on either device reaches the strict 25-frames-per-second goal for the full video pipeline. The study’s takeaway for engineers is that choosing an “edge-ready” detector is not as simple as reading off parameter counts or theoretical operation numbers. Real success depends on how the model’s structure interacts with the specific chip, how well the runtime software compiles and schedules its operations, and how much accuracy survives export and quantization. For now, achieving true real-time performance on small, power-limited platforms will still require hardware-aware tuning and, in many cases, smaller versions of these models rather than the largest and most accurate ones.

Citation: Suchý, I., Turčaník, M. Review of large YOLOv8 and RT-DETR energy efficiency on edge devices for real-time detection. Sci Rep 16, 10908 (2026). https://doi.org/10.1038/s41598-026-46453-6

Keywords: edge AI, object detection, energy efficiency, embedded GPU, model quantization