Clear Sky Science

Multi-scale adaptive fusion network for retinal layer and fluid segmentation in optical coherence tomography B-scans

Sharper Eye Scans for Everyday Vision Care

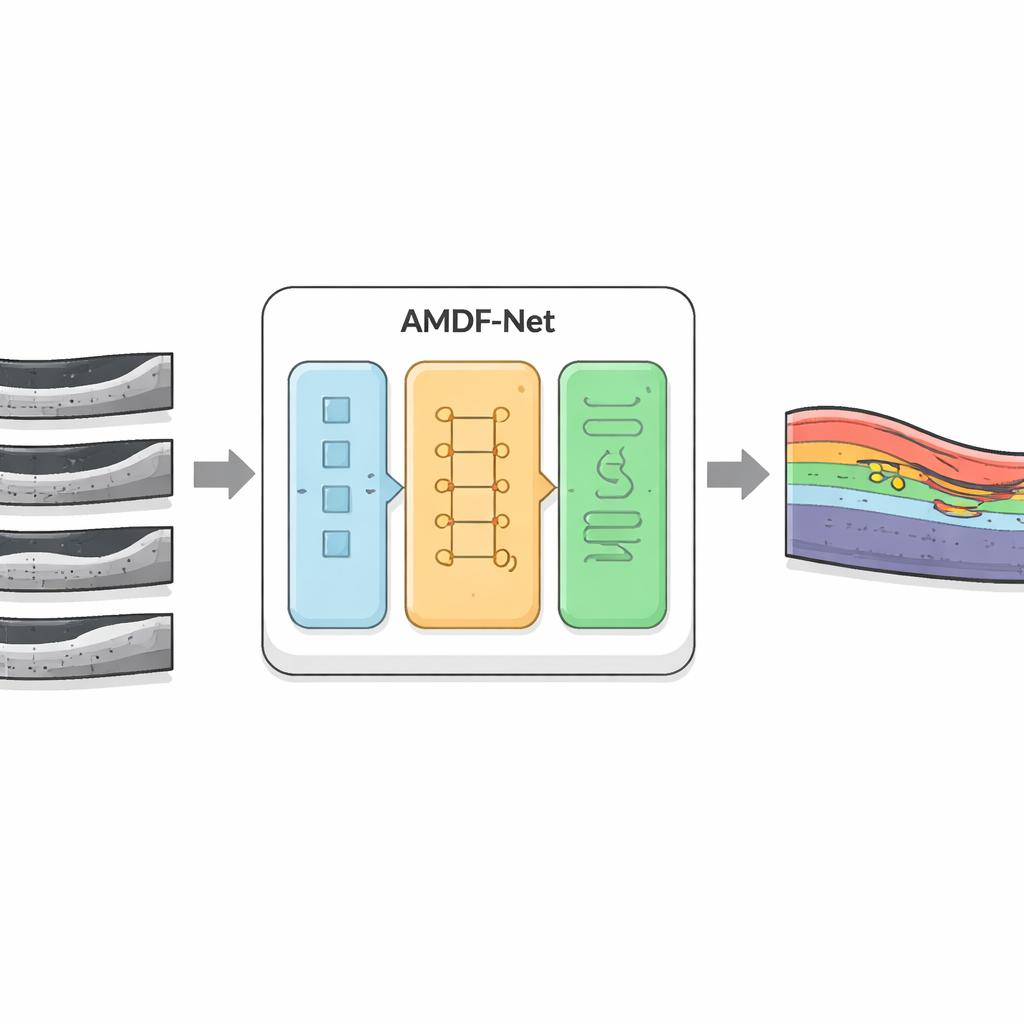

Diseases like diabetes and age-related macular degeneration can quietly damage the retina, the light-sensitive tissue at the back of the eye, long before we notice changes in vision. Modern scanners based on optical coherence tomography (OCT) already give doctors detailed cross‑section views of the retina, but interpreting these images by hand is slow and can miss subtle warning signs. This study introduces an advanced computer system, AMDF‑Net, designed to automatically trace the retina’s delicate layers and pockets of fluid with high precision, potentially allowing faster, more reliable diagnosis and monitoring of blinding eye diseases.

Why Eye Fluid Matters So Much

In several common eye conditions—including diabetic macular edema, age‑related macular degeneration and retinal vein or nerve problems—tiny pools of fluid form within or beneath the retina. Their size, shape and exact location tell doctors whether a treatment is working or a disease is getting worse. OCT images capture these features in great detail, but the retina is made of many paper‑thin layers that curve and overlap. Fluid can blur boundaries and appear in irregular blobs. Traditional computer programs, and even earlier deep‑learning tools, often struggle to separate these layers and fluids correctly when images are noisy, the contrast is low or the disease pattern is unusual.

A New Digital Assistant for OCT Images

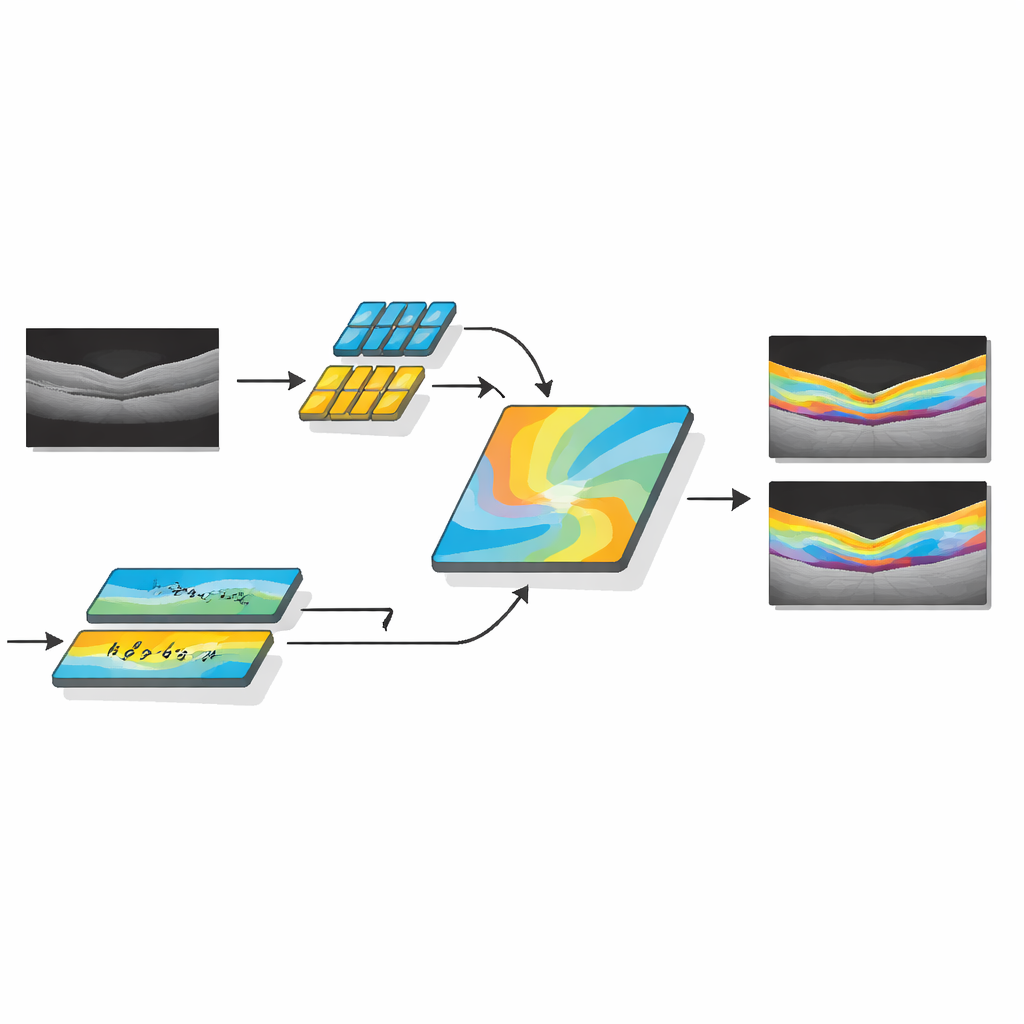

The researchers developed AMDF‑Net (Adaptive Multi‑Domain Fusion Network) to tackle these challenges head‑on. The system takes raw OCT eye scans and first cleans them up using edge enhancement, noise reduction and brightness matching so that subtle structures stand out and images from different scanners look more alike. Then it passes the data through two complementary pathways: one focuses on small local details such as thin boundaries between layers, while the other analyzes broader patterns across the whole scan, capturing how layers bend and where fluid tends to collect. A special fusion block combines these two views and uses an attention mechanism—essentially a way of automatically “paying more attention” to informative regions—to highlight features that are especially likely to represent diseased tissue.
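The summary does not give the network's equations, so the Python sketch below is only an illustration under stated assumptions. It puts two of the ideas above into plain code: a z‑score rescaling as a stand‑in for "brightness matching", and an attention gate that mixes a local and a global feature map channel by channel. The gate follows the selective‑kernel pattern (add the two branches, average each channel, turn one score per branch into softmax weights). That pattern, the function names and the fixed weights `w_local` and `w_global` are hypothetical choices, not AMDF‑Net's published design; a real network would learn those weights.

```python
import math

# A feature map here is a list of channels, each a flattened list of pixel values.
Feature = list[list[float]]


def zscore(scan: list[float]) -> list[float]:
    """Stand-in for 'brightness matching': rescale one flattened B-scan to mean 0, std 1."""
    mean = sum(scan) / len(scan)
    std = math.sqrt(sum((v - mean) ** 2 for v in scan) / len(scan)) or 1.0
    return [(v - mean) / std for v in scan]


def softmax2(x: float, y: float) -> tuple[float, float]:
    """Numerically stable softmax over two scores."""
    m = max(x, y)
    ex, ey = math.exp(x - m), math.exp(y - m)
    return ex / (ex + ey), ey / (ex + ey)


def attention_fuse(local: Feature, global_: Feature,
                   w_local: list[float], w_global: list[float]) -> tuple[Feature, list[float]]:
    """Selective-kernel-style fusion of a local (fine-detail) and a global (context) branch.

    w_local and w_global are hypothetical per-channel weights; a trained network
    would learn them. Returns the fused maps and the share given to the local branch.
    """
    fused, local_share = [], []
    for c, (lc, gc) in enumerate(zip(local, global_)):
        # Squeeze: average activation of the summed branches in this channel.
        s = sum(a + b for a, b in zip(lc, gc)) / len(lc)
        # Excite: one score per branch, normalized so the two weights sum to 1.
        a, b = softmax2(w_local[c] * s, w_global[c] * s)
        fused.append([a * x + b * y for x, y in zip(lc, gc)])
        local_share.append(a)
    return fused, local_share


local = [[1.0, 2.0], [0.0, 4.0]]  # channels 0 and 1 from the local branch
glob = [[3.0, 2.0], [2.0, 0.0]]   # the same channels from the global branch
fused, share = attention_fuse(local, glob, w_local=[1.0, 0.0], w_global=[0.0, 0.0])
```

With both weights at zero (channel 1) the gate splits evenly and the fused channel is the plain average of the two branches; a nonzero weight (channel 0) shifts the mix toward one branch in proportion to that channel's average activation.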

Seeing Both Anatomy and Disease Together

Beyond simply outlining healthy anatomy, AMDF‑Net includes a disease‑inclusive module that is tuned to patterns seen in real patients, such as pockets of intraretinal and subretinal fluid or areas where the supporting tissue has lifted away. During training, this part of the network learns to emphasize image patterns that commonly signal disease while still keeping track of the normal layer structure. Training is guided by a carefully designed loss function, a mathematical score of how far the output is from the expert tracing, which the network tries to minimize. The loss is built to favor both accurately filled regions and crisp boundaries. By combining region‑based and edge‑focused feedback during learning, the model is pushed to produce segmentations that not only overlap well with expert markings but also have smooth, clinically meaningful contours.
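The summary says only that the loss mixes a region term and an edge term. One common way to build such a loss, assumed here rather than taken from the paper, is soft Dice (rewarding overlap) plus a cross‑entropy in which pixels on a label boundary count extra. The Python sketch below implements that combination on a tiny 2‑D mask; `edge_weight`, `alpha` and all function names are hypothetical.

```python
import math

Grid = list[list[float]]  # a tiny 2-D mask or probability map


def soft_dice_loss(pred: Grid, target: Grid, eps: float = 1e-6) -> float:
    """Region term: 1 minus the soft Dice overlap of probabilities and a 0/1 mask."""
    inter = sum(p * t for pr, tr in zip(pred, target) for p, t in zip(pr, tr))
    total = sum(map(sum, pred)) + sum(map(sum, target))
    return 1.0 - (2.0 * inter + eps) / (total + eps)


def boundary_mask(target: Grid) -> list[list[bool]]:
    """True where a pixel's label differs from at least one 4-connected neighbour."""
    h, w = len(target), len(target[0])
    steps = ((-1, 0), (1, 0), (0, -1), (0, 1))
    return [[any(target[y + dy][x + dx] != target[y][x]
                 for dy, dx in steps
                 if 0 <= y + dy < h and 0 <= x + dx < w)
             for x in range(w)]
            for y in range(h)]


def boundary_weighted_bce(pred: Grid, target: Grid,
                          edge_weight: float = 5.0, eps: float = 1e-7) -> float:
    """Edge term: binary cross-entropy where boundary pixels count edge_weight times."""
    edges = boundary_mask(target)
    total = norm = 0.0
    for pr, tr, er in zip(pred, target, edges):
        for p, t, on_edge in zip(pr, tr, er):
            p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
            wgt = edge_weight if on_edge else 1.0
            total += -wgt * (t * math.log(p) + (1 - t) * math.log(1 - p))
            norm += wgt
    return total / norm


def combined_loss(pred: Grid, target: Grid, alpha: float = 0.5) -> float:
    """Hypothetical total loss: region (Dice) term plus alpha times the edge term."""
    return soft_dice_loss(pred, target) + alpha * boundary_weighted_bce(pred, target)


target = [[0, 0, 1, 1],
          [0, 0, 1, 1]]
sharp = [[0.02, 0.05, 0.95, 0.98],
         [0.02, 0.05, 0.95, 0.98]]
blurred = [[0.02, 0.40, 0.60, 0.98],
           [0.02, 0.40, 0.60, 0.98]]
print(combined_loss(sharp, target), combined_loss(blurred, target))
```

Both toy predictions contain the same total amount of foreground, yet the blurred map scores worse because its uncertainty sits on the boundary pixels that the edge term up‑weights. Raising `edge_weight` makes soft or broken contours costlier still.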

Tested Across Many Patients and Scanners

To judge how well AMDF‑Net works in practice, the authors tested it on three well‑known public OCT collections and a real‑world clinical dataset from a busy eye hospital. These sets cover different diseases, scanner brands and image qualities, including especially tricky regions around the optic nerve and tiny fluid pockets that many algorithms miss. Across all of them, AMDF‑Net consistently matched or outperformed leading methods, improving common accuracy scores by roughly three to five percentage points and reducing visible errors such as broken layer lines or misidentified fluid blobs. Importantly, the system handled images from different manufacturers with only small drops in performance, a key requirement for eventual use in everyday clinics.
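The summary does not name the "common accuracy scores", but segmentation studies in OCT usually report the Dice coefficient and intersection‑over‑union (IoU), so the sketch below assumes those two. It computes both from binary masks flattened into lists of 0s and 1s; the function name and the toy masks are illustrative only.

```python
def dice_and_iou(pred: list[int], truth: list[int]) -> tuple[float, float]:
    """Dice = 2TP / (2TP + FP + FN); IoU = TP / (TP + FP + FN)."""
    tp = sum(1 for p, t in zip(pred, truth) if p and t)      # correctly marked pixels
    fp = sum(1 for p, t in zip(pred, truth) if p and not t)  # false alarms
    fn = sum(1 for p, t in zip(pred, truth) if t and not p)  # missed pixels
    if tp + fp + fn == 0:
        return 1.0, 1.0  # both masks empty: count as perfect agreement
    return 2 * tp / (2 * tp + fp + fn), tp / (tp + fp + fn)


truth = [1, 1, 1, 1, 0, 0, 0, 0]  # toy fluid mask from an expert
pred = [1, 1, 1, 0, 1, 0, 0, 0]   # one missed pixel, one false alarm
dice, iou = dice_and_iou(pred, truth)  # → 0.75, 0.6
```

Scores like these are usually reported as percentages, so a gain of "three to five percentage points" is an absolute change: a hypothetical Dice rise from 0.80 to 0.84 is four points, or a 5 % relative improvement.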

What This Means for Patients and Clinics

For non‑specialists, the core message is that AMDF‑Net acts like a highly trained digital assistant that can quickly and reliably trace the retina’s structure and problem spots in OCT scans. By doing this across large numbers of images, it could help eye doctors spot disease earlier, track subtle changes between visits and tailor treatments more precisely, even in busy or resource‑limited settings. While the method currently demands more computing power than simpler models, the authors note that future refinements and compression techniques could make it fast enough for routine chair‑side use. In the long run, tools like AMDF‑Net may help preserve sight by turning complex eye scans into clear, actionable maps of retinal health.

Citation: Mani, P., Ramachandran, N., Sowmya, V. et al. Multi-scale adaptive fusion network for retinal layer and fluid segmentation in optical coherence tomography B-scans. Sci Rep 16, 10600 (2026). https://doi.org/10.1038/s41598-026-44006-5

Keywords: optical coherence tomography, retinal disease, deep learning segmentation, medical imaging AI, macular edema