Clear Sky Science

A hybrid convolution and attention-based framework with visual explanation for fruit disease identification

Why Smarter Fruit Checks Matter

From breakfast bananas to strawberries in smoothies, fruit is a daily staple—and a major source of income for farmers worldwide. But hidden spots, rot, and fungal infections can quietly wipe out harvests, shorten shelf life, and drive up prices. This study explores how an advanced yet lightweight artificial intelligence system can automatically spot diseases on common fruits from simple photos, while also showing humans exactly what it "looked at" to make each decision.

The Problem with Sick Fruit

Fruit crops such as bananas, grapes, lemons, mangos, and strawberries are vulnerable to a wide range of diseases caused by fungi, bacteria, viruses, and nutrient problems. These issues often show up as small leaf spots, discolored patches, or areas of rot on the fruit surface. If caught late, infections spread quickly across orchards, cutting yields and forcing farmers to spend more on chemicals and labor-intensive inspections. Traditional diagnosis relies on trained experts walking the fields and visually examining plants—a process that is slow, subjective, and hard to scale to large farms. Early or subtle symptoms are easily missed, especially under changing light, cluttered backgrounds, or when fruits look similar across varieties.

Teaching Computers to See Fruit Flaws

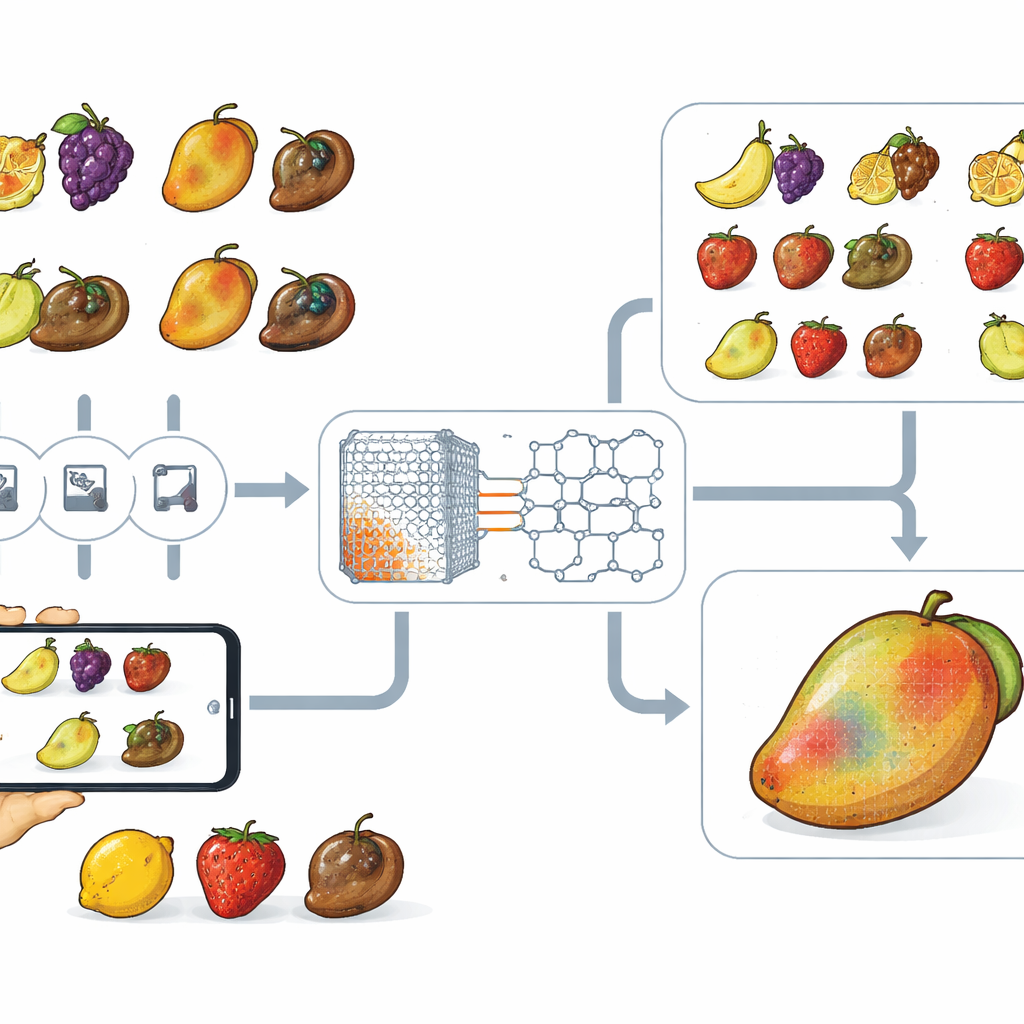

The researchers turned to deep learning, a form of artificial intelligence that learns patterns directly from images. They used a public dataset of 22,457 photos covering five fruit types, with each photo labeled as either healthy or rotten. The images were resized, color-corrected, and augmented with rotations, flips, and noise to mimic real-world variation and reduce overfitting to a narrow set of conditions. Four established models served as benchmarks: two based on convolutional neural networks (which excel at picking up local textures and edges) and two based on transformer architectures (which are good at capturing long-range relationships across an image). Each model was retrained on the fruit dataset and evaluated for accuracy, robustness under cross-validation, and computational cost.
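To make the augmentation step concrete, here is a minimal sketch of flips, rotations, and noise applied to a tiny grayscale image stored as a nested list. The function names, the 90-degree rotation, and the noise level `sigma` are illustrative assumptions; the summary does not say which tools or settings the authors used.

```python
import random

def flip_horizontal(image):
    """Mirror each row left to right."""
    return [row[::-1] for row in image]

def rotate_90(image):
    """Rotate the pixel grid 90 degrees clockwise."""
    return [list(col) for col in zip(*image[::-1])]

def add_noise(image, sigma, rng):
    """Add Gaussian noise to every pixel, then clamp to the 0-255 range."""
    return [
        [min(255, max(0, round(p + rng.gauss(0, sigma)))) for p in row]
        for row in image
    ]

def augment(image, rng, sigma=5.0):
    """Return the original image plus three altered copies (hypothetical recipe)."""
    return [
        image,
        flip_horizontal(image),
        rotate_90(image),
        add_noise(image, sigma, rng),
    ]

# A 2x2 stand-in for a photo; a seeded generator keeps runs repeatable.
rng = random.Random(0)
photo = [[10, 200], [30, 250]]
variants = augment(photo, rng)
print(len(variants))  # one original plus three augmented copies
```

Each training photo then contributes several slightly different views, which is how augmentation exposes a model to more variation than the raw dataset contains.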

A Hybrid Model That Sees Both Detail and Big Picture

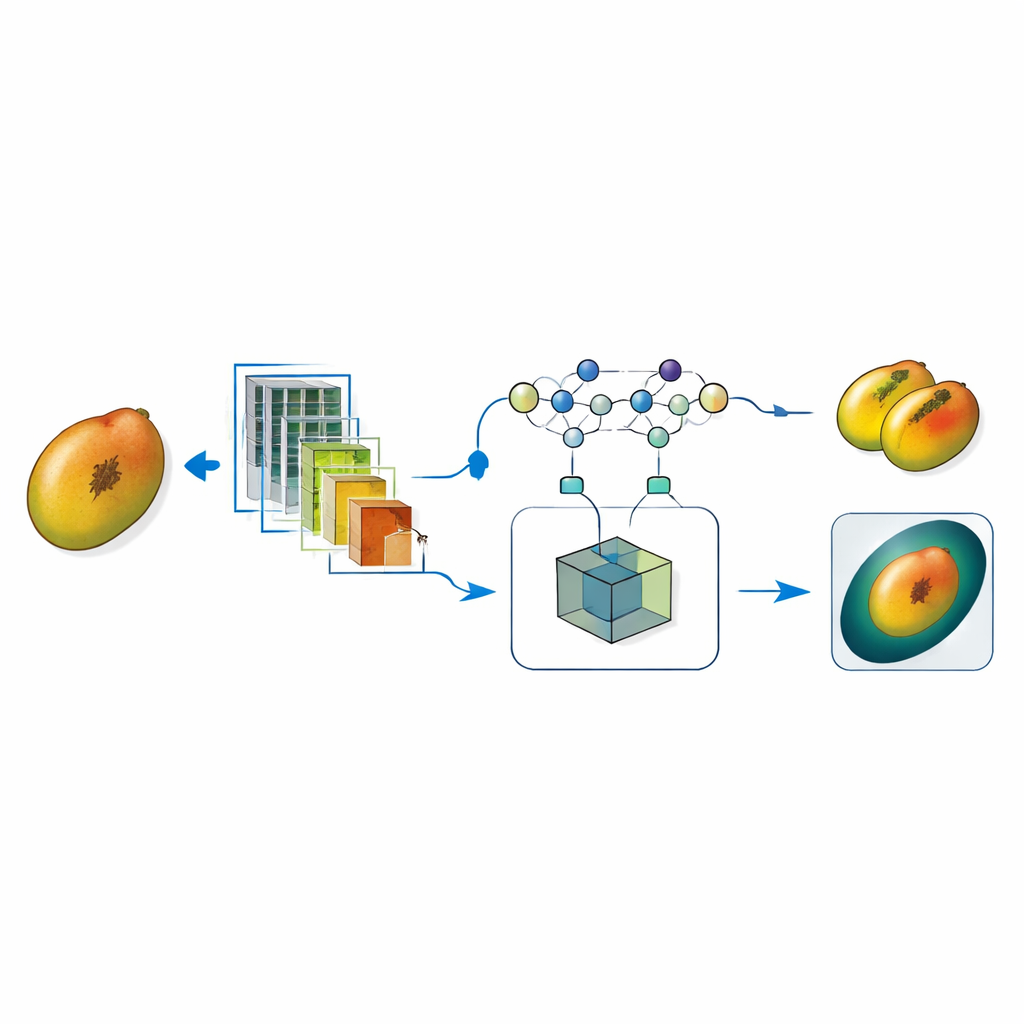

Building on the strengths and weaknesses of these baselines, the authors designed a new hybrid model called CoAT‑AgriLite. It combines a convolutional "stem" that focuses on fine spatial detail—such as tiny lesions or surface roughness—with attention-based blocks borrowed from transformer networks that capture how different regions of a fruit relate to one another across the whole image. Instead of simply stacking a convolutional network and a transformer one after the other, CoAT‑AgriLite fuses their features midway, allowing local and global information to interact directly. The design is intentionally lightweight: it uses fewer parameters and fewer floating‑point operations than typical transformer models, making it suitable for real-time use on modest hardware like mobile phones, drones, or edge devices in packing houses.
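The idea of fusing local and global features can be illustrated with a toy example. The sketch below is not the CoAT‑AgriLite architecture, whose layers are not described in this summary. It assumes two fixed edge filters for the convolutional part, scaled dot-product self-attention with no learned weights for the global part, and simple concatenation as the fusion step. All names are hypothetical.

```python
import math

# Fixed 3x3 edge filters standing in for a learned convolutional stem.
HORIZONTAL_EDGE = [[-1, -1, -1], [0, 0, 0], [1, 1, 1]]
VERTICAL_EDGE = [[-1, 0, 1], [-1, 0, 1], [-1, 0, 1]]

def conv2d_valid(image, kernel):
    """Slide a kernel over a 2-D grid (no padding) to extract local features."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Scaled dot-product attention with queries = keys = values = tokens."""
    dim = len(tokens[0])
    mixed = []
    for query in tokens:
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in tokens
        ]
        weights = softmax(scores)
        mixed.append([
            sum(w * value[i] for w, value in zip(weights, tokens))
            for i in range(dim)
        ])
    return mixed

def fuse(local_tokens, global_tokens):
    """Concatenate local and global features at each position."""
    return [loc + glob for loc, glob in zip(local_tokens, global_tokens)]

def hybrid_features(image):
    """Local edge features, mixed globally by attention, then fused."""
    maps = [conv2d_valid(image, k) for k in (HORIZONTAL_EDGE, VERTICAL_EDGE)]
    local_tokens = [
        [m[r][c] for m in maps]
        for r in range(len(maps[0]))
        for c in range(len(maps[0][0]))
    ]
    return fuse(local_tokens, self_attention(local_tokens))

# A 4x4 "fruit" with one bright blemish in the lower-right corner.
image = [[0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 1, 1], [0, 0, 1, 1]]
fused = hybrid_features(image)
print(len(fused), len(fused[0]))  # 4 positions, each with 2 local + 2 global values
```

In this toy version every position ends up carrying both its own edge response and an attention-weighted blend of the responses everywhere else, which is the sense in which local and global information interact directly.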

Seeing How the AI Makes Its Decisions

Accuracy alone is not enough in agriculture, where a wrong call can mean wasted fruit or unchecked disease spread. To build trust, the team integrated an explainability tool known as Grad‑CAM. For each prediction, Grad‑CAM produces a heatmap highlighting areas of the fruit image that contributed most strongly to the model’s decision. In healthy fruits, attention spreads evenly or remains low, while in diseased fruits it concentrates around dark spots, discolored patches, or softened regions. Experiments showed that over 90 percent of the model’s activation energy fell on true lesion areas rather than on background clutter, and even its rare mistakes tended to involve images with extremely subtle or partially hidden damage rather than random noise.
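The core Grad‑CAM calculation is short enough to sketch directly. In the standard method, each feature map is weighted by the average gradient of the class score with respect to that map, the weighted maps are summed, and negative values are clipped to zero. The sketch below takes the feature maps and gradients as ready-made inputs rather than computing them from a network. The `energy_in_mask` helper is one possible reading of "activation energy on lesion areas" and is an assumption, not the authors' stated definition.

```python
def grad_cam(activations, gradients):
    """Build a heatmap from K feature maps and their matching gradient maps.

    Both arguments are lists of K grids with the same height and width.
    """
    # One weight per feature map: the mean of its gradients.
    weights = [
        sum(sum(row) for row in grad) / (len(grad) * len(grad[0]))
        for grad in gradients
    ]
    height, width = len(activations[0]), len(activations[0][0])
    # Weighted sum across maps, keeping only positive evidence (ReLU).
    cam = [
        [
            max(0.0, sum(w * act[r][c] for w, act in zip(weights, activations)))
            for c in range(width)
        ]
        for r in range(height)
    ]
    # Scale to the 0-1 range so heatmaps are comparable across images.
    peak = max(max(row) for row in cam)
    if peak > 0:
        cam = [[v / peak for v in row] for row in cam]
    return cam

def energy_in_mask(cam, mask):
    """Share of total heatmap intensity that falls inside a lesion mask."""
    total = sum(sum(row) for row in cam)
    if total == 0:
        return 0.0
    inside = sum(
        v for cam_row, mask_row in zip(cam, mask)
        for v, m in zip(cam_row, mask_row) if m
    )
    return inside / total

# Two toy 2x2 feature maps: the first supports the class, the second opposes it.
activations = [[[1, 0], [0, 0]], [[0, 0], [0, 1]]]
gradients = [[[1, 1], [1, 1]], [[-1, -1], [-1, -1]]]
heatmap = grad_cam(activations, gradients)
lesion_mask = [[1, 0], [0, 0]]
print(energy_in_mask(heatmap, lesion_mask))
```

A score near 1 from `energy_in_mask` would mean the heatmap sits almost entirely on the marked lesion, while a low score would suggest the model is leaning on background.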

How Well It Works and Why It Matters

Across extensive tests, CoAT‑AgriLite clearly outperformed all four benchmark models and a range of previously published systems. On unseen test images, it achieved 99.37 percent overall accuracy, with similarly high precision, recall, and F1 scores for each fruit type, indicating that it rarely missed diseased samples and seldom raised false alarms. It also matched or beat more complex, heavier models while requiring less computation, confirming that careful hybrid design can be both powerful and efficient. For non-specialists, the key message is that a compact, explainable AI can now look at ordinary photos of fruit and reliably flag disease, while visually justifying its choices. Such systems could support farmers, agronomists, and supply‑chain managers in monitoring crop health, automating sorting lines, and reducing waste—helping to deliver healthier fruit from orchard to table with greater transparency and lower cost.
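For readers unfamiliar with the scores mentioned above, the sketch below shows how accuracy, precision, recall, and F1 are computed from a list of true and predicted labels. The labels and predictions are made up for illustration and are not the study's data. The function name `binary_metrics` is hypothetical.

```python
def binary_metrics(y_true, y_pred, positive="rotten"):
    """Compute accuracy, precision, recall, and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = len(pairs) - tp - fp - fn

    accuracy = (tp + tn) / len(pairs) if pairs else 0.0
    # Precision: of everything flagged as rotten, how much really was.
    precision = tp / (tp + fp) if tp + fp else 0.0
    # Recall: of everything truly rotten, how much was caught.
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1: harmonic mean of precision and recall.
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}

# Invented example: eight fruits, one missed rotten fruit, one false alarm.
truth = ["rotten"] * 4 + ["healthy"] * 4
guess = ["rotten", "rotten", "rotten", "healthy",
         "healthy", "healthy", "healthy", "rotten"]
print(binary_metrics(truth, guess))
```

High recall corresponds to rarely missing diseased samples, and high precision corresponds to seldom raising false alarms, which is why the article reports both alongside overall accuracy.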

Citation: Kothandaraman, R., Srinivasan, S., Mathivanan, S. et al. A hybrid convolution and attention-based framework with visual explanation for fruit disease identification. Sci Rep 16, 12771 (2026). https://doi.org/10.1038/s41598-026-42135-5

Keywords: fruit disease detection, explainable AI, deep learning, computer vision, precision agriculture