Clear Sky Science

An enhanced diabetic retinopathy detection approach using optimized deep learning technique

Why spotting eye damage early matters

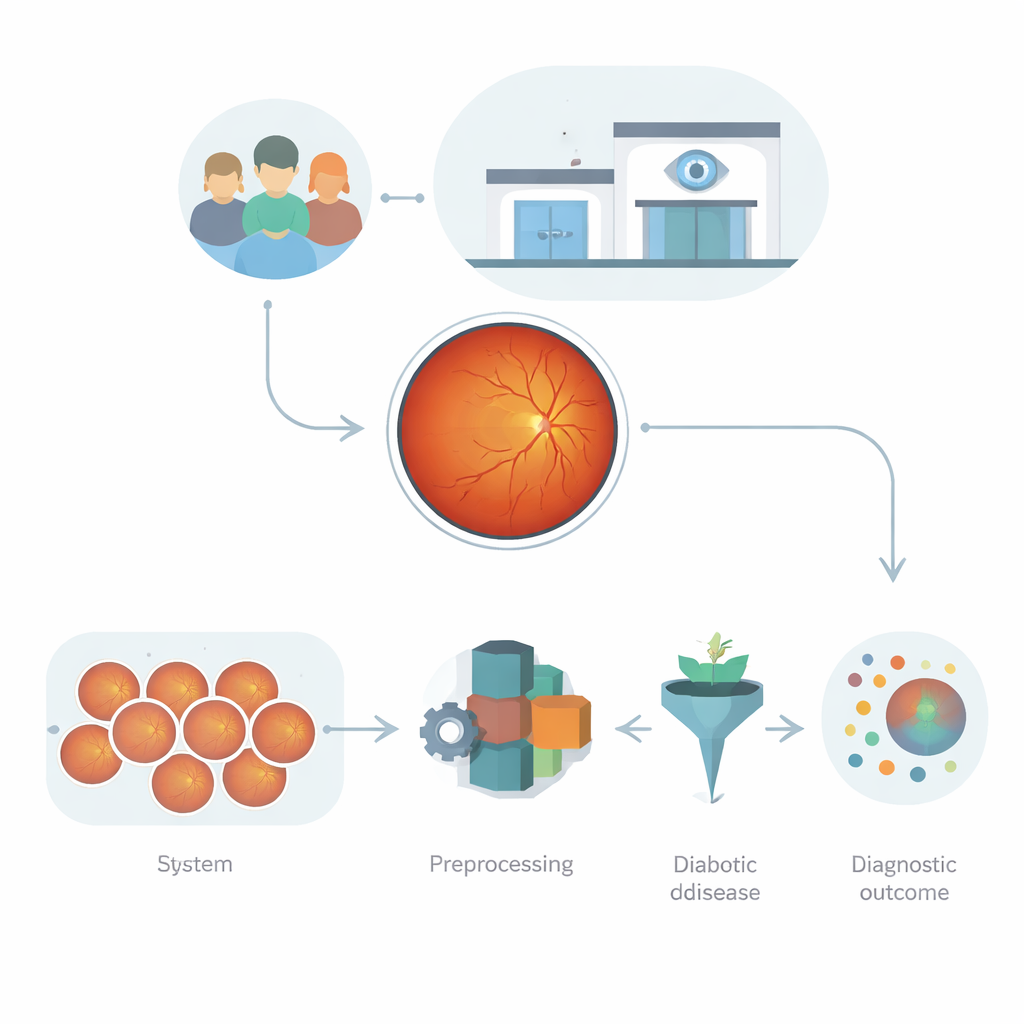

Diabetes can quietly damage the tiny blood vessels at the back of the eye, eventually leading to blurred vision or even blindness. Eye doctors can catch this condition—called diabetic retinopathy—by examining detailed photographs of the retina. But with millions of people at risk and too few specialists, many patients are never screened in time. This study explores how a carefully designed artificial intelligence system can read retinal photos more accurately and reliably, helping detect trouble early enough to save sight.

Pictures of the eye as a data gold mine

Retinal photographs, known as fundus images, are far more than simple snapshots. They capture how light is absorbed, reflected, and scattered by the layers of the eye, revealing blood vessels, tiny leaks, and scars. These patterns are rich with clues about diabetic damage, but they are also complex: images vary in brightness, focus, camera type, and patient background. Earlier computer programs either relied on hand-crafted measurements, which miss subtle changes, or on deep learning networks that can be powerful but prone to overfitting, especially when image quality or clinical settings differ. The challenge is to build an automated system that can learn from this messy, high‑dimensional data without becoming brittle or unpredictable.

Teaching a neural network to see key warning signs

The authors first use a modern deep learning model, EfficientNet‑B0, as a pre-trained “feature extractor” for each retinal image. Rather than having doctors manually mark every hemorrhage or fatty deposit, the network learns abstract visual patterns that consistently distinguish diseased from healthy eyes. To make the system more robust, all images are cleaned and standardized: they are resized, converted to grayscale to focus on structure instead of color, and enhanced to sharpen tiny spots and vessel details. The grayscale images are then converted into a format the pre-trained network can handle (networks of this kind typically expect three-channel color input), and the final layers of the network are lightly fine‑tuned so its internal filters respond strongly to retinal structures instead of the everyday objects it was originally trained on.
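The preprocessing steps described above can be illustrated with a minimal NumPy sketch. Several choices here are assumptions rather than details from the study: the 224-pixel target size, the nearest-neighbour resize, the percentile-based contrast stretch standing in for the enhancement step, and the channel replication used to make a grayscale image acceptable to a network pre-trained on color photos. The function name `preprocess_fundus` is hypothetical.

```python
import numpy as np

def preprocess_fundus(rgb, size=224):
    """Resize, convert to grayscale, stretch contrast, and replicate to 3 channels."""
    rgb = np.asarray(rgb, dtype=np.float32)
    h, w, _ = rgb.shape

    # Nearest-neighbour resize (assumption: a simple stand-in for interpolation).
    rows = np.arange(size) * h // size
    cols = np.arange(size) * w // size
    resized = rgb[rows][:, cols]

    # Luminance-weighted grayscale using the ITU-R BT.601 weights.
    gray = resized @ np.array([0.299, 0.587, 0.114], dtype=np.float32)

    # Percentile contrast stretch (assumption: the study's enhancement may differ).
    lo, hi = np.percentile(gray, [1, 99])
    gray = np.clip((gray - lo) / max(hi - lo, 1e-6), 0.0, 1.0)

    # Copy the single channel three times so an RGB-pretrained network accepts it.
    return np.repeat(gray[..., None], 3, axis=-1).astype(np.float32)

# Usage on a random stand-in image (not real retinal data).
rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(300, 400, 3))
prepared = preprocess_fundus(image)
print(prepared.shape)
```

Replicating the channel keeps the pre-trained first-layer filters usable without retraining them from scratch, which is one common way to feed grayscale images to such a network.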

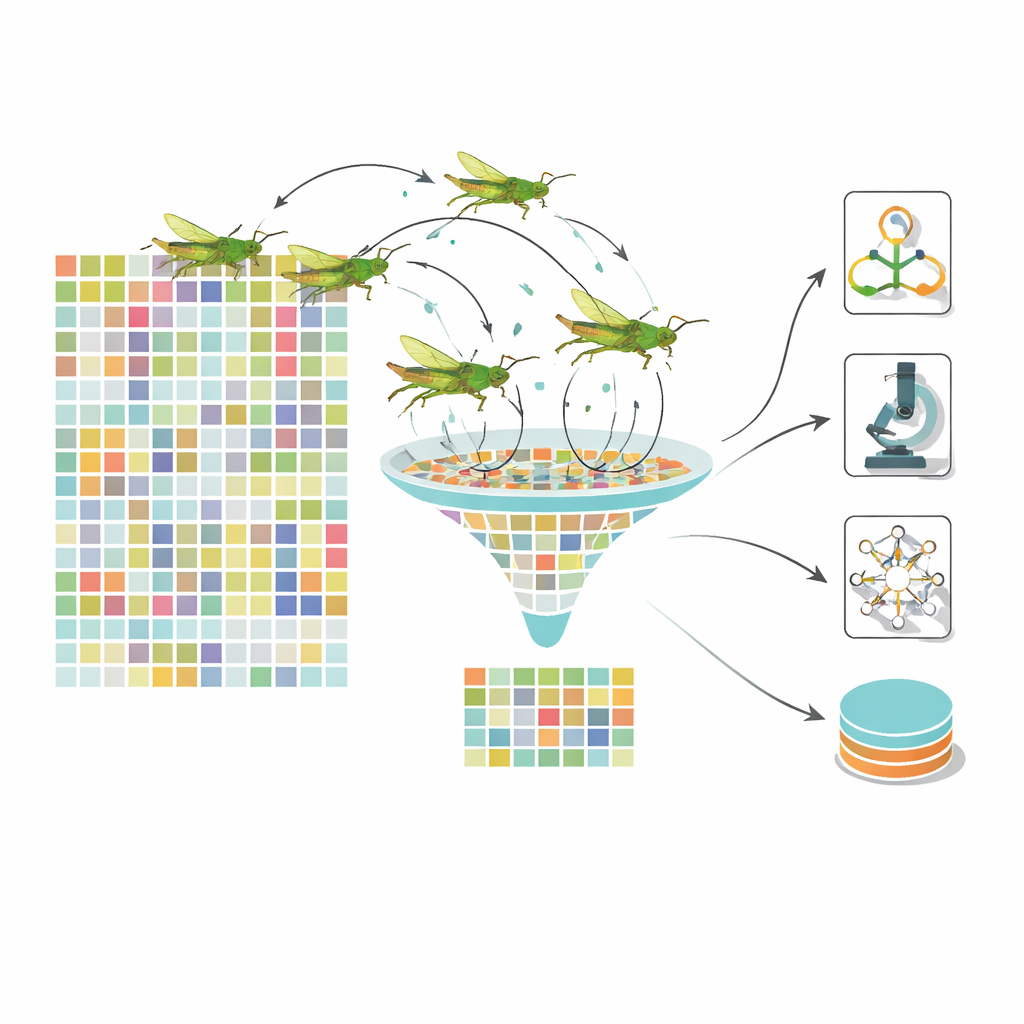

Letting a virtual swarm pick the most telling clues

Even after deep learning, each image is represented by 1,280 numerical features, many of them redundant. Feeding all of these into a classifier slows learning and can blur the distinction between disease stages. To tackle this, the team turns to a nature‑inspired optimizer modeled after the movement of grasshoppers. In their dynamic grasshopper optimization algorithm, each “grasshopper” represents a different subset of features. Over many iterations, the swarm explores combinations, guided by a balance between roaming widely and closing in on promising regions. Crucially, the control parameters change over time, keeping the swarm from getting stuck too early. The result is a much smaller set of highly informative features (about one hundred instead of the original 1,280) that still encodes important signs such as clusters of microaneurysms, patches of exudates, or patterns of vessel swelling.
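To make the idea of a swarm searching over feature subsets concrete, here is a simplified sketch based on the standard grasshopper optimization algorithm, in which a coefficient `c` shrinks linearly so the swarm moves from exploring to refining. It is not the authors' dynamic variant, whose parameter schedule is not described here. The objective (`fitness`, a nearest-centroid error plus a size penalty), the 0.5 threshold that turns positions into feature masks, and the synthetic data are all assumptions chosen to keep the example small.

```python
import numpy as np

def s_func(r, f=0.5, l=1.5):
    """Social force of the standard algorithm: attraction minus repulsion."""
    return f * np.exp(-r / l) - np.exp(-r)

def fitness(mask, X, y, alpha=0.05):
    """Hypothetical objective: nearest-centroid error plus a subset-size penalty."""
    if not mask.any():
        return 1.0 + alpha  # an empty subset is treated as the worst case
    Xs = X[:, mask]
    mu0, mu1 = Xs[y == 0].mean(axis=0), Xs[y == 1].mean(axis=0)
    pred = np.linalg.norm(Xs - mu1, axis=1) < np.linalg.norm(Xs - mu0, axis=1)
    return np.mean(pred != (y == 1)) + alpha * mask.mean()

def goa_select(X, y, n_agents=12, n_iter=30, c_max=1.0, c_min=1e-4, seed=0):
    rng = np.random.default_rng(seed)
    pos = rng.random((n_agents, X.shape[1]))  # continuous positions in [0, 1]
    scores = np.array([fitness(p > 0.5, X, y) for p in pos])
    best, best_score = pos[scores.argmin()].copy(), scores.min()
    for t in range(n_iter):
        # Shrinking coefficient: wide roaming early, fine adjustment late.
        c = c_max - t * (c_max - c_min) / n_iter
        new_pos = np.empty_like(pos)
        for i in range(n_agents):
            diff = pos - pos[i]  # the row for agent i is zero, so it adds nothing
            dist = np.linalg.norm(diff, axis=1, keepdims=True) + 1e-12
            social = c * 0.5 * s_func(np.abs(diff)) * diff / dist
            new_pos[i] = np.clip(c * social.sum(axis=0) + best, 0.0, 1.0)
        pos = new_pos
        scores = np.array([fitness(p > 0.5, X, y) for p in pos])
        if scores.min() < best_score:
            best, best_score = pos[scores.argmin()].copy(), scores.min()
    return best > 0.5, float(best_score)

# Synthetic stand-in data: only the first 5 of 40 features carry signal.
rng = np.random.default_rng(1)
y = rng.integers(0, 2, size=200)
X = rng.normal(size=(200, 40))
X[:, :5] += 1.5 * y[:, None]

mask, score = goa_select(X, y)
print(int(mask.sum()), "features kept, fitness", round(score, 3))
```

In this simplified form the swarm stays fairly close to the current best subset, which is exactly the premature-convergence risk that time-varying control parameters are meant to reduce.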

Many simple opinions make a stronger diagnosis

Rather than trusting a single model, the system uses a stacked ensemble of several different classifiers. A support vector machine, a Bayesian network, and a decision tree each receive the optimized feature set and output their own probability that an eye belongs in a given disease stage. These “opinions” are then combined by a fast gradient‑boosting method called LightGBM, which learns how to weight each base model depending on the situation. This layered design reduces the chance that any one model’s blind spots will dominate. The authors test their framework on large public datasets of retinal images, including the widely used EyePACS and APTOS collections, and compare it with leading deep learning pipelines and other bio‑inspired optimizers.
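The layered design can be sketched with scikit-learn's `StackingClassifier`. This is an illustration under stated assumptions, not the authors' pipeline: `GaussianNB` (the simplest form of Bayesian network) stands in for the Bayesian network, scikit-learn's `GradientBoostingClassifier` stands in for LightGBM so the example needs no extra package, and the data are synthetic with 100 features to mirror the reduced feature set.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, StackingClassifier
from sklearn.model_selection import train_test_split
from sklearn.naive_bayes import GaussianNB
from sklearn.svm import SVC
from sklearn.tree import DecisionTreeClassifier

# Synthetic stand-in for the optimized feature set (not retinal data).
X, y = make_classification(
    n_samples=600, n_features=100, n_informative=20, n_classes=3, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Base models: each produces class probabilities from the same features.
base_models = [
    ("svm", SVC(probability=True, random_state=0)),
    ("bayes", GaussianNB()),  # assumption: stand-in for a Bayesian network
    ("tree", DecisionTreeClassifier(max_depth=5, random_state=0)),
]

# Meta-learner: gradient boosting trained on the base models' probabilities.
stack = StackingClassifier(
    estimators=base_models,
    final_estimator=GradientBoostingClassifier(random_state=0),  # stand-in for LightGBM
    stack_method="predict_proba",
    cv=5,  # out-of-fold predictions keep the meta-learner from seeing leaked labels
)
stack.fit(X_train, y_train)

proba = stack.predict_proba(X_test)
accuracy = stack.score(X_test, y_test)
print(proba.shape, round(accuracy, 3))
```

Training the meta-learner on out-of-fold predictions matters here: if it saw predictions the base models made on their own training data, it would learn to trust them more than it should.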

How well the system performs under realistic test conditions

Across experiments, the dynamic grasshopper–ensemble framework consistently beats competing methods. On key benchmarks it reaches about 94–95% accuracy, a high F1‑score (which balances missed cases and false alarms), and an area under the ROC curve of 0.96, indicating strong separation between healthy and diseased eyes. It also generalizes well across different train–test splits and additional datasets, and maintains most of its performance when images are artificially made noisier or distorted, conditions meant to mimic the variability of real clinics. By contrast, earlier swarm algorithms with fixed settings tend to converge too soon, keep more redundant features, and yield lower sensitivity and specificity, especially on challenging or imbalanced data.
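For readers unfamiliar with these metrics, the short sketch below computes an F1‑score and an area under the ROC curve by hand for a binary healthy-versus-diseased case. The eight labels and scores are made up for illustration and are unrelated to the study's data; the function names are likewise only illustrative.

```python
def f1_score(y_true, y_pred):
    """Harmonic mean of precision (few false alarms) and recall (few missed cases)."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

def roc_auc(y_true, scores):
    """Probability that a random diseased eye scores higher than a random healthy one."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Made-up example: 1 = diseased, 0 = healthy.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
scores = [0.9, 0.8, 0.4, 0.3, 0.6, 0.2, 0.1, 0.7]
y_pred = [int(s >= 0.5) for s in scores]

f1 = f1_score(y_true, y_pred)
auc = roc_auc(y_true, scores)
print(f1, auc)  # prints 0.75 0.9375
```

Here one diseased eye is missed and one healthy eye is flagged, giving precision and recall of 0.75 each, while 15 of the 16 diseased-healthy pairs are ranked correctly, giving an AUC of 0.9375.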

What this means for patients and clinics

Translated into everyday terms, the study shows that combining a modern image‑analysis network with a smart feature‑picking swarm and a team of simple classifiers can produce a fast, accurate, and comparatively robust “second reader” for diabetic eye disease. Such a tool will not replace eye doctors, but it can help flag at‑risk patients sooner, especially in busy or under‑resourced clinics where specialists are scarce. The authors note that further validation on local hospital data and continued work on reducing computational cost are still needed, but their physics‑aware, optimization‑driven approach moves automated screening closer to trustworthy, real‑world deployment.

Citation: Darwish, S.M., Milad, K.G. & Ibrahim, R.E.ED. An enhanced diabetic retinopathy detection approach using optimized deep learning technique. Sci Rep 16, 9825 (2026). https://doi.org/10.1038/s41598-026-41998-y

Keywords: diabetic retinopathy, retinal imaging, deep learning, feature selection, medical AI