Clear Sky Science · en

MuGu: mutual guidance learning between pretrained SAM and lightweight model for medical image segmentation

Sharper Computer Vision for Medical Scans

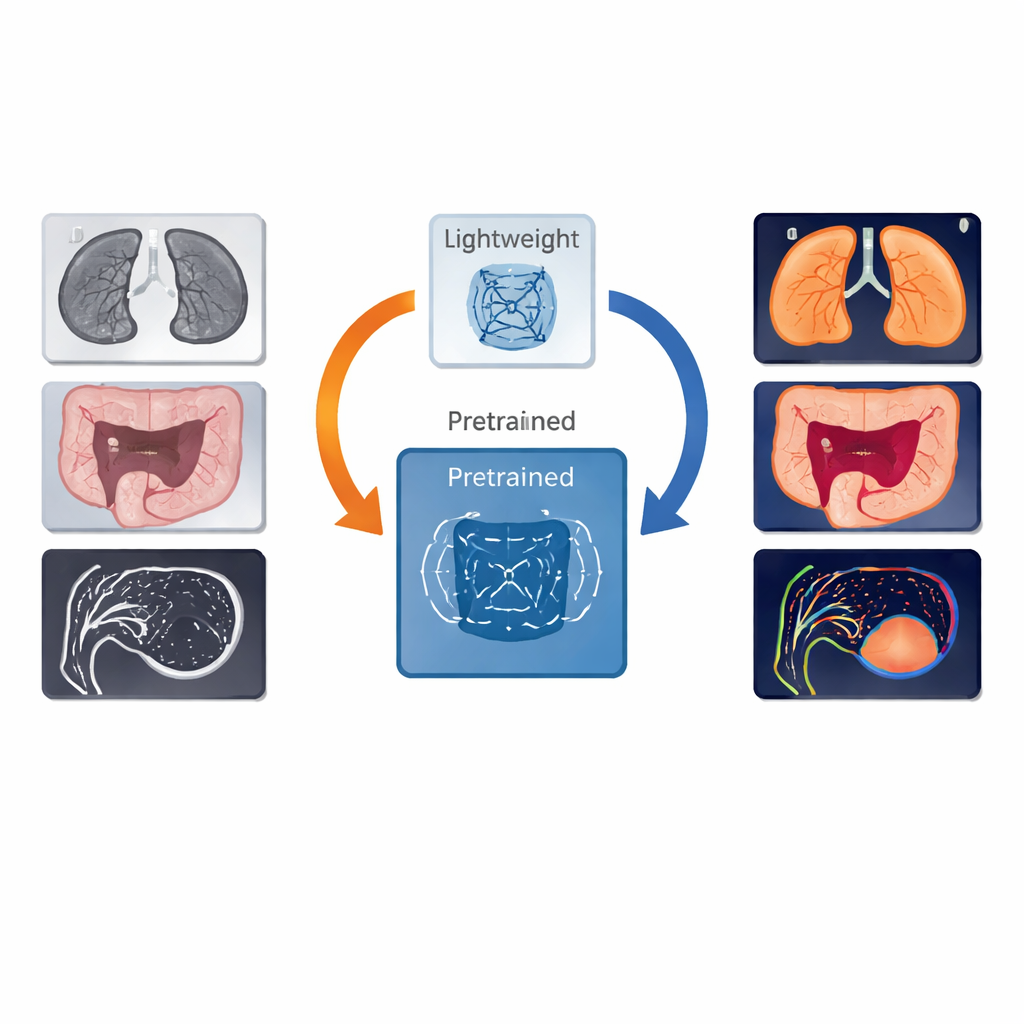

Doctors rely on computers to highlight suspicious spots in scans of the lungs, colon, brain, and liver, but today’s tools face a trade-off. Small models run quickly on hospital hardware but can miss subtle details, while large state-of-the-art models are more accurate yet too heavy and costly to deploy everywhere. This paper introduces a way for a powerful "foundation" model to teach a smaller model without holding it back, with the aim of bringing top-tier image understanding to everyday clinical settings.

The Challenge of Teaching Smaller Models

Medical image segmentation is the task of tracing the exact outline of organs, vessels, or lesions in scans. Traditional deep-learning systems are usually tailored to a specific task or organ and struggle when data or imaging conditions change. Newer foundation models, such as SAM-Med, can adapt to many tasks but require substantial computing power and memory. Existing attempts to combine the two typically let the large model supervise the small one throughout training and on every image. The authors show that this constant, uniform supervision can actually hurt the smaller model once it begins to catch up in performance, preventing it from fully developing its own strengths.

A Two-Way Conversation Between Models

The authors propose MuGu, short for "mutual guidance," a framework where the large and small models influence each other in a more selective and dynamic way. At its core is a streamlined segmentation network—the lightweight model—equipped with two heads that predict what is foreground (for example, a tumor) and what is background. This design helps the smaller network better judge its own certainty. MuGu then introduces a feedback loop between the lightweight network and SAM-Med, rather than a one-way flow of knowledge.

Letting Confidence Decide When Help Is Needed

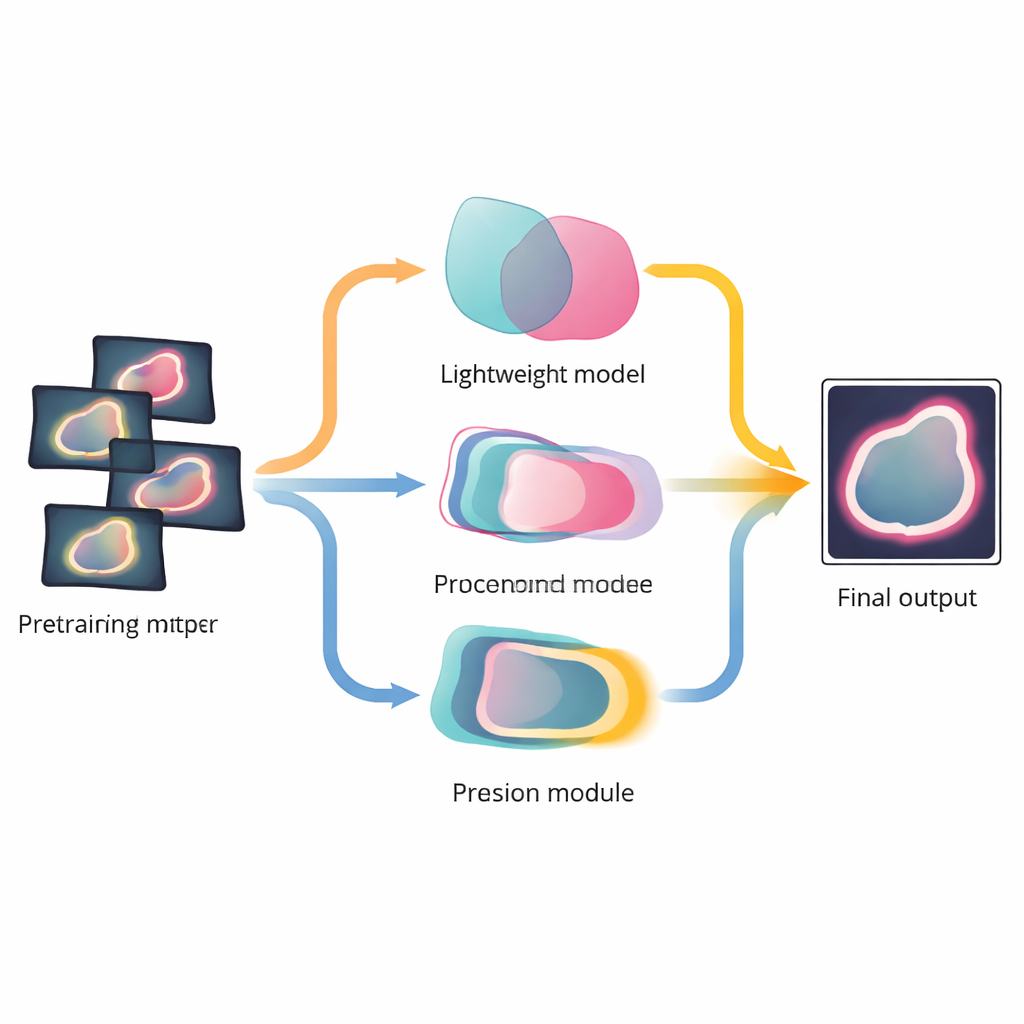

The first key idea, called Confidence Prompt Guidance, is to involve SAM-Med only where it is most useful. During training, MuGu measures how well the small model’s foreground and background predictions match SAM-Med’s output on each image. When there is strong disagreement—meaning the small model is unsure or wrong—its prediction is converted into a "prompt" that asks SAM-Med for more detailed guidance on that specific case. When the two models already agree, SAM-Med steps back. Over time, the number of images needing such intervention drops, so the powerful model focuses on the hardest examples instead of overshadowing the smaller one.
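The gating step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' implementation: the summary does not say how agreement is measured or what kind of prompt is built, so the Dice overlap score, the 0.8 threshold, the bounding-box prompt, and every function name here are hypothetical choices.

```python
from typing import List, Optional, Tuple

Mask = List[List[int]]           # binary mask: 1 = foreground, 0 = background
Box = Tuple[int, int, int, int]  # (row_min, col_min, row_max, col_max)


def dice(a: Mask, b: Mask) -> float:
    """Dice overlap of two binary masks; defined as 1.0 when both are empty."""
    inter = sum(x & y for ra, rb in zip(a, b) for x, y in zip(ra, rb))
    total = sum(map(sum, a)) + sum(map(sum, b))
    return 1.0 if total == 0 else 2.0 * inter / total


def invert(mask: Mask) -> Mask:
    """Flip a binary mask, e.g. to read a background map as foreground."""
    return [[1 - v for v in row] for row in mask]


def bounding_box(mask: Mask) -> Optional[Box]:
    """Tightest box around the foreground pixels, or None for an empty mask."""
    coords = [(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v]
    if not coords:
        return None
    rows, cols = zip(*coords)
    return min(rows), min(cols), max(rows), max(cols)


def confidence_prompt(fg: Mask, bg: Mask, sam: Mask,
                      threshold: float = 0.8) -> Tuple[bool, Optional[Box]]:
    """Return (needs_guidance, box_prompt) for one training image."""
    # Assumption: score agreement by the weaker of the two heads.
    agreement = min(dice(fg, sam), dice(invert(bg), sam))
    if agreement >= threshold:
        return False, None          # models agree: the large model steps back
    return True, bounding_box(fg)   # disagreement: turn the prediction into a prompt
```

In this sketch, an image whose small-model prediction overlaps the large model's mask with a Dice score of 0.25 would be flagged for guidance, while a perfect match would not. As the small model improves, fewer images fall below the threshold, which mirrors the drop in interventions described above.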

Sharpening Boundaries Through Cooperative Focus

The second innovation, Ensemble Structure Boundary Guidance, focuses on the most critical information in many medical tasks: the precise boundary between healthy and abnormal tissue. MuGu fuses three perspectives—the large model’s segmentation, the small model’s foreground prediction, and its background prediction—into a shared boundary signal. A specialized attention mechanism learns how much to trust each branch at every stage, and this fused boundary information is then used to refine the lightweight model’s internal features and training loss. Importantly, once training is complete, the system can produce final segmentations using only the efficient small model, without needing SAM-Med at all.
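As a rough illustration of the fusion idea, the sketch below extracts a boundary map from each of the three segmentations and blends them with softmax weights. It rests on several assumptions that are not in the source: the boundary is taken as foreground pixels touching background, the background head's output is assumed to have been inverted into a foreground mask beforehand, and the per-branch scores are passed in by hand, whereas in MuGu an attention mechanism learns how much to trust each branch. All names are hypothetical.

```python
import math
from typing import List

Mask = List[List[int]]  # binary mask: 1 = foreground, 0 = background


def boundary(mask: Mask) -> Mask:
    """Mark foreground pixels that touch background or the image edge (4-neighbourhood)."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            if not mask[r][c]:
                continue
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                rr, cc = r + dr, c + dc
                if not (0 <= rr < h and 0 <= cc < w) or not mask[rr][cc]:
                    out[r][c] = 1
                    break
    return out


def softmax(scores: List[float]) -> List[float]:
    """Turn raw trust scores into weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_boundaries(masks: List[Mask], scores: List[float]) -> List[List[float]]:
    """Weighted average of the boundary maps of several same-sized masks."""
    weights = softmax(scores)
    edges = [boundary(m) for m in masks]
    h, w = len(edges[0]), len(edges[0][0])
    return [[sum(wt * e[r][c] for wt, e in zip(weights, edges)) for c in range(w)]
            for r in range(h)]
```

A pixel that all three branches place on the boundary gets a fused value near 1, while a pixel flagged by only one branch gets that branch's weight. Raising a branch's score shifts the fused signal toward its view of the outline.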

Proven Gains Across Organs and Scan Types

The researchers tested MuGu on four public datasets that together cover lung lesions, colon polyps, brain arteries, and liver vessels, using both 2D images and full 3D volumes. Across all of them, MuGu outperformed widely used segmentation networks and also beat straightforward ways of pairing large and small models. It improved overall overlap scores and reduced boundary errors, while keeping computational demands much closer to those of a conventional lightweight model. Analysis of the training process showed that, as the lightweight network improved, MuGu automatically shifted reliance away from the foundation model and toward the smaller one’s own predictions.
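To make "boundary error" concrete, the sketch below computes the Hausdorff distance between two sets of boundary points, a common way to quantify how far a predicted outline strays from the reference. Which boundary metric the paper actually reports is not stated in this summary, so treat the choice of metric and the function names as assumptions.

```python
import math
from typing import List, Tuple

Point = Tuple[int, int]  # (row, col) of a boundary pixel


def directed_hausdorff(a: List[Point], b: List[Point]) -> float:
    """Largest distance from any point in a to its nearest point in b.

    Both lists must be non-empty.
    """
    return max(min(math.dist(p, q) for q in b) for p in a)


def hausdorff(a: List[Point], b: List[Point]) -> float:
    """Symmetric Hausdorff distance: the worse of the two directed distances."""
    return max(directed_hausdorff(a, b), directed_hausdorff(b, a))
```

A value of 0 means the two outlines coincide. Larger values mean that at least one point on one outline lies that many pixels from the other.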

Bringing Powerful AI Closer to the Clinic

In simple terms, this work shows how a big, expensive model can act as a smart tutor rather than a permanent crutch for a smaller model. By calling on the large model only when confidence is low and by jointly refining the outlines of anatomical structures, MuGu trains an efficient network that can rival or even surpass its teacher on key tasks. This approach could help hospitals and clinics deploy stronger AI assistants on modest hardware, bringing more reliable automated readings of medical scans to everyday practice.

Citation: Wang, C., Wang, Z., Chen, W. et al. MuGu: mutual guidance learning between pretrained SAM and lightweight model for medical image segmentation. Sci Rep 16, 12099 (2026). https://doi.org/10.1038/s41598-026-41924-2

Keywords: medical image segmentation, foundation models, deep learning, computer-aided diagnosis, model compression