Clear Sky Science · en

SAM2-ARAFNet: adapting SAM2 with an attention-enhanced residual ASPP fusion network for high-resolution remote sensing semantic segmentation

Sharper Eyes on Our Changing Planet

From tracking storm damage to guiding city planning, aerial and satellite images have become one of humanity’s most powerful tools for understanding the world. But turning these detailed pictures into clear maps of buildings, roads, trees and cars is still surprisingly hard, especially when computers must work quickly on drones or small devices. This paper presents SAM2-ARAFNet, a new mapping system that builds on a powerful vision model and carefully trims it down, aiming to deliver highly accurate land-cover maps from high‑resolution images while using far less computing power than today’s leading methods.

Why Mapping Cities from Above Is So Difficult

High‑resolution aerial photos capture cities in remarkable detail: individual houses, tree crowns, parked cars and even narrow sidewalks are visible. Yet this richness brings challenges. Surfaces that belong to the same category, such as different types of pavement, can look very different, while distinct classes like low bushes and tree canopies may appear confusingly similar. Images can be blurred, partly hidden by shadows or clouds, and vary from one region to another. Traditional rule‑based approaches and earlier machine‑learning systems struggle to cope with this variety, and even modern deep networks often need large labeled datasets and powerful hardware, limiting their use on satellites, unmanned aerial vehicles and edge devices.

Adapting a General Vision Model to Remote Sensing

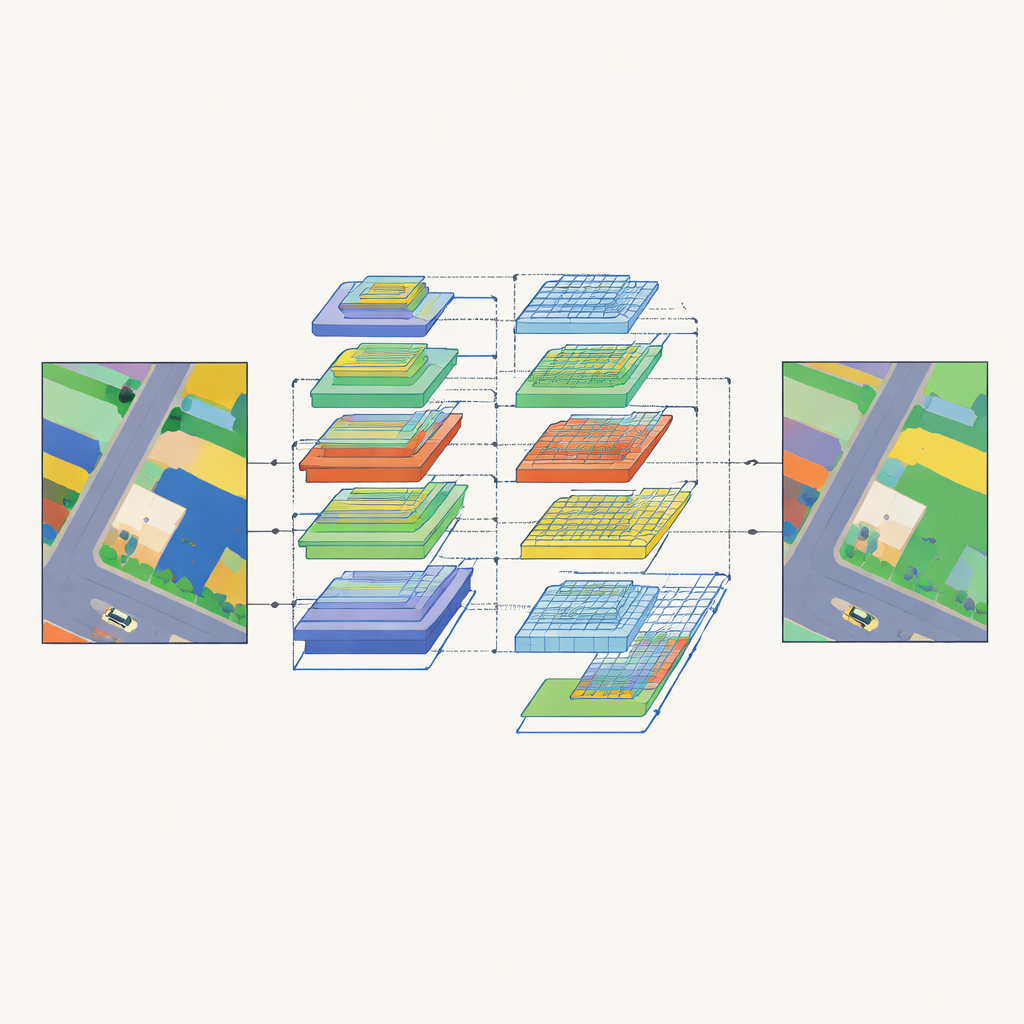

Recent “foundation models” for vision, trained on massive collections of everyday photos, have shown an impressive ability to segment almost anything in an image. One of the strongest of these is Segment Anything Model 2 (SAM2), which can draw object outlines without being told in advance what those objects are. However, SAM2 is tuned to natural images and produces class‑agnostic regions, making it less suited for remote sensing tasks that must assign a specific land‑cover label to every pixel. The authors therefore design SAM2‑ARAFNet, which keeps SAM2’s powerful encoder frozen and adds lightweight adapter modules that gently adjust its internal representations to match the unique look of aerial scenes. This avoids retraining the huge backbone from scratch while still tailoring it to the remote sensing domain.

Seeing the Big Picture and the Fine Details at Once

To turn encoded features into full land‑cover maps, SAM2‑ARAFNet uses a specially crafted decoder that combines information across many scales. At lower levels, it preserves sharp edges and small objects by fusing early feature maps through multiple branches and an attention module that emphasizes informative patterns and suppresses noise. At higher levels, it introduces an attention‑enhanced residual module that spreads its “receptive field” over larger and larger neighborhoods, helping the network understand wider context such as how buildings, roads and vegetation relate to each other. A bilateral fusion block then brings low‑level detail and high‑level meaning together so that, for example, car outlines remain crisp while being correctly distinguished from nearby roofs or asphalt.

Teaching a Smaller Network to Imitate a Bigger One

While the full SAM2‑ARAFNet model delivers strong accuracy, its size still makes it heavy for on‑board deployment. To address this, the authors train a compact “student” network, built on the EfficientNet‑b0 backbone, to mimic the predictions of the large “teacher” model. Instead of copying just the final labels, the student learns from the teacher’s richer output patterns, capturing how different classes relate to each other and how pixels within the same class behave across the scene. This knowledge‑distillation process shrinks the parameter count by about 97 percent—from roughly 223 million to 6.7 million—while preserving more than 99 percent of the teacher’s accuracy in overall terms. The result is a much lighter model that still produces high‑quality segmentations suitable for drones and other edge platforms.

How Well Does It Work in Real Cities?

The team evaluates both teacher and student models on two widely used benchmarks of urban aerial imagery: the ISPRS Vaihingen and Potsdam datasets. Compared with a broad range of strong competitors based on convolutional networks, Transformers and hybrid designs, SAM2‑ARAFNet achieves consistently higher scores on standard measures of segmentation quality. It is particularly effective at handling tricky situations such as vehicles partially hidden by buildings, or the subtle transitions between low vegetation, trees and clutter around building facades. Visual comparisons show that its outputs have cleaner object boundaries and fewer misclassified patches, underscoring the benefits of its multi‑scale attention and fusion design.

Smarter Maps for a Resource‑Limited World

In everyday terms, this work shows how a powerful but bulky vision model can be adapted and slimmed down to create accurate, efficient maps from aerial images. By reusing SAM2’s strong encoder, carefully designing multi‑scale attention modules, and then distilling this knowledge into a lightweight student, SAM2‑ARAFNet delivers detailed urban land‑cover maps with far less computational cost. This balance of precision and efficiency makes it a promising tool for environmental monitoring, disaster assessment and city management directly on satellites, drones or other devices that cannot rely on constant cloud connection.

Citation: Shi, W., Ding, J., Lei, J. et al. SAM2-ARAFNet: adapting SAM2 with an attention-enhanced residual ASPP fusion network for high-resolution remote sensing semantic segmentation. Sci Rep 16, 10225 (2026). https://doi.org/10.1038/s41598-026-38047-z

Keywords: remote sensing, semantic segmentation, satellite imagery, deep learning, knowledge distillation