Clear Sky Science

Deep neural network inference on an integrated, reconfigurable photonic tensor processor

Why Faster Thinking Machines Matter

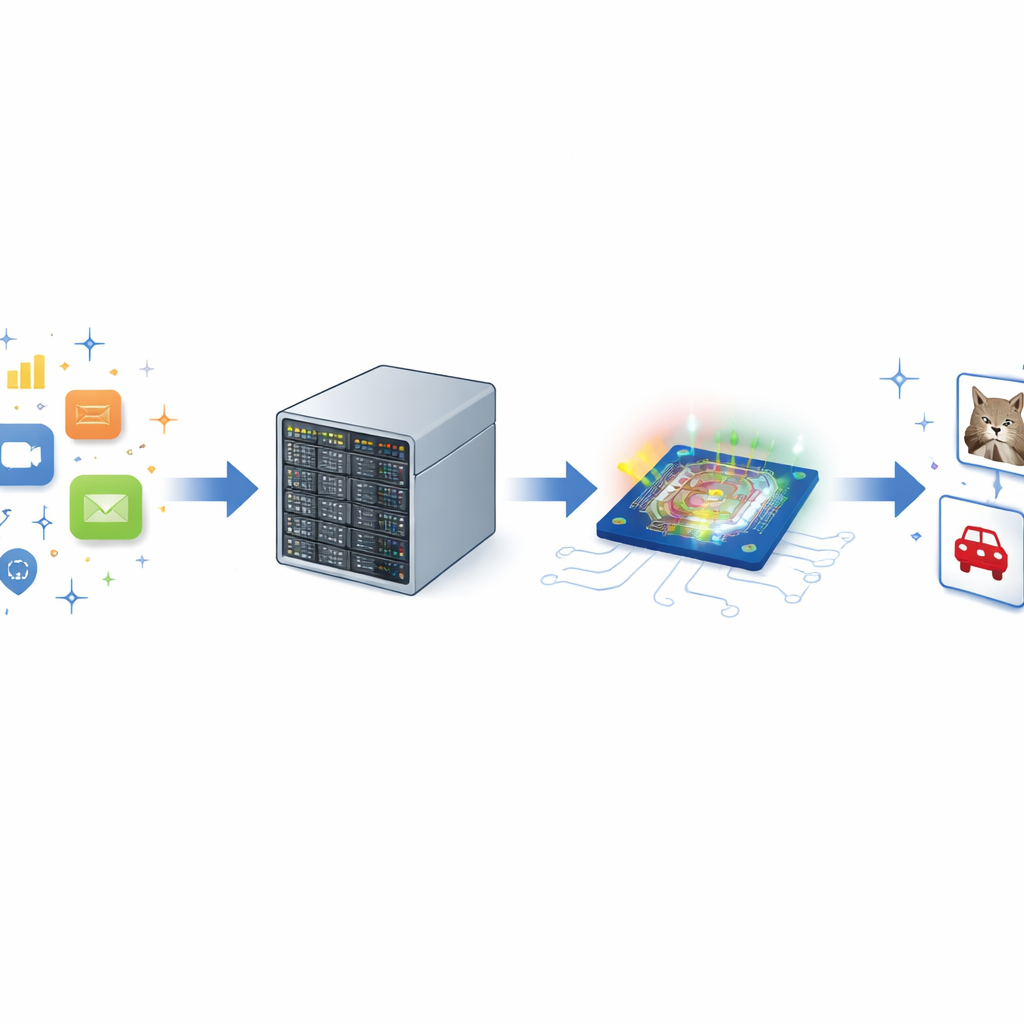

Every time you unlock your phone with your face, speak to a voice assistant, or see an AI system detect objects in a video, enormous amounts of number‑crunching happen behind the scenes. As these artificial intelligence models grow larger and more capable, they demand more energy, more hardware, and more time. This article explores a new kind of “optical brain” that uses light, rather than electricity alone, to carry out these heavy computations, with the aim of making future AI both faster and more energy‑efficient.

Light as a New Way to Compute

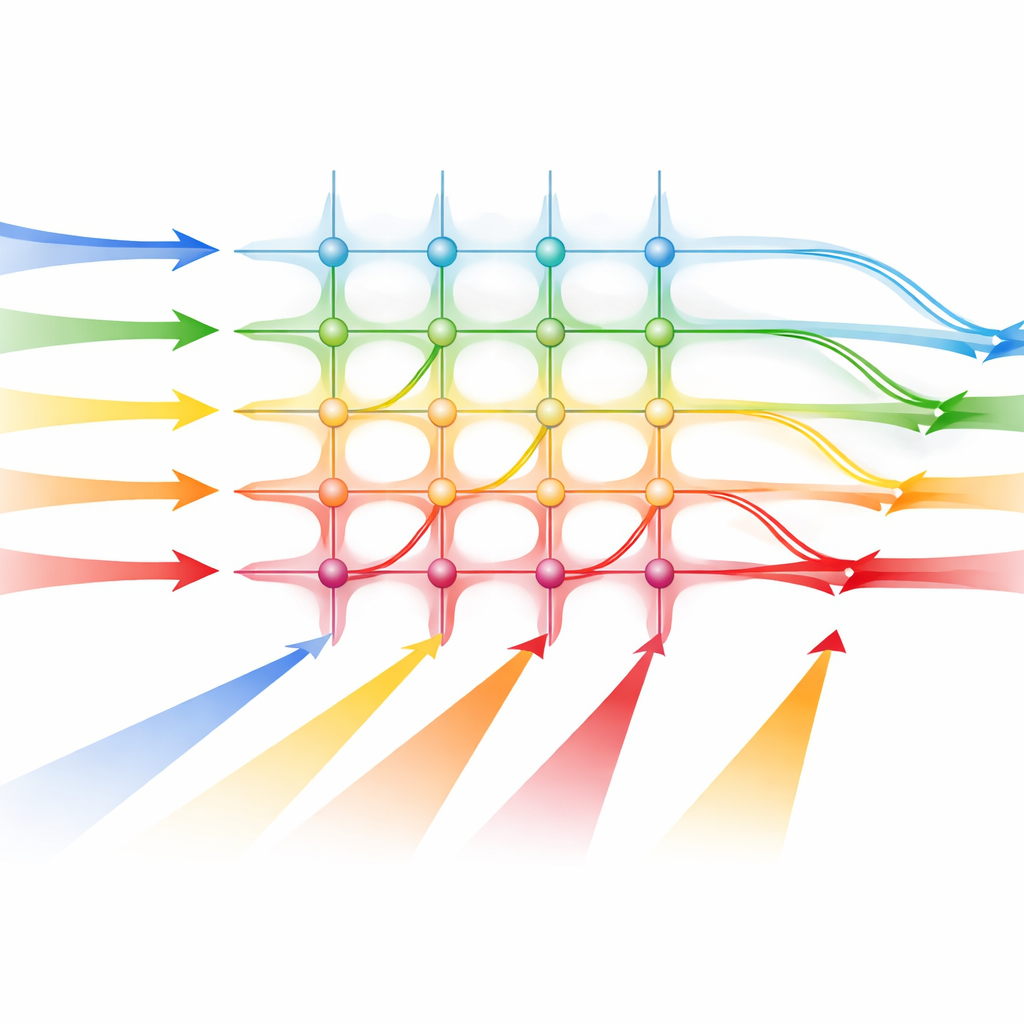

Modern AI is powered by deep neural networks, which boil down to repeated applications of similar math operations on large tables of numbers, called tensors. Today these operations run on electronic chips such as GPUs, which are bumping up against limits in speed and power consumption. The authors turn to photonics—the use of light in tiny on‑chip structures—to perform these same operations. Because light can travel quickly and in many channels at once, a photonic processor can, in principle, carry out many calculations in parallel with very low delay while avoiding some of the energy losses that plague conventional electronics.
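To make "repeated math on large tables of numbers" concrete, here is a minimal sketch of one fully connected neural‑network layer in plain Python. The function names are illustrative and not taken from the paper. Frameworks such as PyTorch perform the same arithmetic with heavily optimized matrix routines. The second helper counts the multiply‑accumulate steps that a tensor processor is built to speed up.

```python
# Minimal sketch of one fully connected layer: y = ReLU(W x + b).
# Illustrative helper names; plain Python, no frameworks.

def dense_layer(weights, bias, x):
    """Return ReLU(W x + b), with W given as a list of rows."""
    outputs = []
    for row, b in zip(weights, bias):
        # One dot product per output; each term is a multiply-accumulate (MAC).
        total = sum(w * xi for w, xi in zip(row, x)) + b
        outputs.append(max(0.0, total))  # ReLU nonlinearity
    return outputs

def mac_count(n_inputs, n_outputs):
    """Multiply-accumulate operations in one matrix-vector product."""
    return n_inputs * n_outputs

W = [[1.0, -2.0, 0.5],
     [0.0, 1.0, 1.0]]
b = [0.1, -0.5]
x = [2.0, 1.0, 4.0]
print(dense_layer(W, b, x))  # [2.1, 4.5]
print(mac_count(784, 100))   # 78400: a 28x28 image feeding 100 units
```

Even this small layer needs 78,400 multiply‑accumulates per MNIST‑sized image, and real networks stack many larger layers. That is why the matrix‑vector product is the operation worth moving into hardware.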

Building a Light‑Powered Math Engine

The team designed a photonic tensor processor that fits into a standard 19‑inch computer rack, so it can be installed alongside ordinary server hardware. At its heart is a silicon photonic chip made with an industrial manufacturing process, which improves the chances that such devices could eventually be produced at scale. On this chip, incoming electrical signals that represent data are converted into patterns of light by optical modulators. These light signals then travel through a grid of tiny waveguides that works like a matrix of adjustable connections, where each crossing sets how much of the passing light gets through. At the end of each column, built‑in light sensors convert the combined brightness back into electrical signals that correspond to the result of a matrix‑vector multiplication, the core operation in many neural network layers.
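The grid‑plus‑detector idea can be sketched as an idealized model. This is a hypothetical illustration, not the authors' design. It assumes that data are encoded as non‑negative light intensities, that each crossing passes a fraction of the light between 0 and 1, and that each column detector simply adds up whatever arrives. Noise, optical loss, and the way real hardware represents negative weights are all ignored.

```python
# Hypothetical, idealized model of an optical matrix-vector multiply.
# Assumptions (not from the paper): inputs are non-negative intensities,
# each crossing transmits a fraction t in [0, 1], and each column's
# photodetector sums the light from every row. No noise, loss, or sign handling.

def encode(values, max_power=1.0):
    """Modulator stage: map data in [0, 1] to optical powers (clamped)."""
    return [max_power * min(max(v, 0.0), 1.0) for v in values]

def crossbar(transmissions, powers):
    """Weight grid plus detectors: column j reads sum_i t[i][j] * p[i]."""
    n_cols = len(transmissions[0])
    currents = [0.0] * n_cols
    for p, row in zip(powers, transmissions):
        for j, t in enumerate(row):
            currents[j] += t * p  # light from this row adds up in column j
    return currents

T = [[1.0, 0.5],
     [0.25, 0.0],
     [0.5, 1.0]]
x = encode([1.0, 0.8, 0.2])
print(crossbar(T, x))  # approximately [1.3, 0.7]
```

All detectors read their columns at the same moment, so the whole product arrives in one pass of light rather than one multiplication at a time.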

Keeping the Light Stable and the Math Accurate

To feed the chip, the researchers use a single advanced light source called a microcomb, which produces many evenly spaced colors of light at once. Each color serves as a separate channel carrying part of the input data, allowing multiple calculations to proceed in parallel. However, working with analog light signals introduces noise and imperfections. The authors tackle this with careful calibration: they measure how each modulator and detector responds, and then adjust the control voltages and power levels so that a desired mathematical weight corresponds to a specific optical setting. They also compensate for crosstalk between channels and for distortions introduced by the optical packaging. By averaging repeated measurements when needed, they can trade a bit of extra time for higher accuracy.
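The calibration step, which finds the control voltage that produces a desired weight, can be illustrated as the inversion of a measured lookup table. This is a hypothetical sketch, not the authors' procedure. The sine‑squared curve stands in for real measured data, and every function name is made up. The last helper illustrates the time‑for‑accuracy trade: averaging N noisy reads reduces random error by roughly the square root of N.

```python
import math
import random

# Hypothetical calibration sketch (illustrative names, not the authors' code).
# Assumption: a weight element's transmission rises monotonically with its
# control voltage, so a measured voltage sweep can be inverted.

def measured_response(v):
    """Stand-in for a measured voltage-to-transmission curve: 0 at 0 V, 1 at 2 V."""
    return math.sin(math.pi * v / 4.0) ** 2

def build_table(n_points=41, v_max=2.0):
    """Sweep the control voltage and record (voltage, transmission) pairs."""
    volts = [v_max * k / (n_points - 1) for k in range(n_points)]
    return [(v, measured_response(v)) for v in volts]

def voltage_for_weight(table, target):
    """Invert the sweep by linear interpolation between neighbouring points."""
    for (v0, t0), (v1, t1) in zip(table, table[1:]):
        if t0 <= target <= t1:
            frac = 0.0 if t1 == t0 else (target - t0) / (t1 - t0)
            return v0 + frac * (v1 - v0)
    raise ValueError("target weight lies outside the calibrated range")

def averaged_readout(true_value, noise_std, n_shots, rng):
    """Average n_shots noisy detector reads; random error shrinks ~1/sqrt(n_shots)."""
    reads = [true_value + rng.gauss(0.0, noise_std) for _ in range(n_shots)]
    return sum(reads) / n_shots

table = build_table()
print(round(voltage_for_weight(table, 0.5), 3))  # 1.0 for this made-up curve

rng = random.Random(0)
for shots in (1, 16):
    errors = [abs(averaged_readout(0.5, 0.05, shots, rng) - 0.5) for _ in range(200)]
    print(shots, "shots, mean error:", sum(errors) / len(errors))
```

With 16 shots the mean error should come out roughly four times smaller than with one shot, at the cost of 16 times as many measurements.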

Running Real AI Tasks with Light

To show that their system is not just a lab curiosity, the team connects the photonic processor directly to PyTorch, a widely used AI software framework. They train two image‑recognition neural networks digitally and then fine‑tune them while modeling the kind of noise the hardware will introduce. Once trained, the same networks run on the photonic processor without being redesigned for the chip. On the simple handwritten‑digit dataset MNIST, the optical system achieves about 98.1% accuracy in a higher‑precision mode, close to the all‑digital baseline, and 91% in a low‑latency mode. On the more demanding CIFAR‑10 color image dataset, it reaches 72% accuracy in precision mode. That is lower than the digital version, but it still shows practical performance on a tougher task.
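The fine‑tuning idea, training while simulating the hardware's noise, can be sketched on a toy problem. This is a hypothetical illustration, not the authors' PyTorch setup. It assumes the chip's error can be modeled as Gaussian noise added to each linear‑layer output. To run without extra libraries, it uses a two‑feature logistic‑regression classifier in plain Python. In PyTorch, the same idea would typically be implemented inside a custom layer's forward pass during training.

```python
import math
import random

# Hypothetical sketch of noise-aware ("hardware-aware") training.
# Assumption, not from the paper: the photonic layer's error is modeled as
# Gaussian noise of fixed standard deviation added to each linear output.

def noisy_linear(w, b, x, noise_std, rng):
    """Compute w.x + b, then add simulated analog noise."""
    clean = sum(wi * xi for wi, xi in zip(w, x)) + b
    return clean + rng.gauss(0.0, noise_std)

def sigmoid(z):
    z = max(-60.0, min(60.0, z))  # clamp to avoid overflow in math.exp
    return 1.0 / (1.0 + math.exp(-z))

def make_data(n=100, spread=0.3, seed=1):
    """Two toy classes centred at (-1, -1) and (1, 1)."""
    rng = random.Random(seed)
    data = []
    for i in range(n):
        y = i % 2
        c = 1.0 if y == 1 else -1.0
        data.append(([c + rng.gauss(0.0, spread), c + rng.gauss(0.0, spread)], y))
    return data

def train(data, noise_std, epochs=50, lr=0.1, seed=0):
    """Logistic regression by SGD; every forward pass sees simulated noise."""
    rng = random.Random(seed)
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, y in data:
            p = sigmoid(noisy_linear(w, b, x, noise_std, rng))
            grad = p - y  # gradient of log loss with respect to the logit
            w = [wi - lr * grad * xi for wi, xi in zip(w, x)]
            b -= lr * grad
    return w, b

def accuracy(w, b, data, noise_std, rng):
    """Fraction of points classified correctly when inference is noisy too."""
    hits = sum((noisy_linear(w, b, x, noise_std, rng) > 0) == (y == 1) for x, y in data)
    return hits / len(data)

w, b = train(make_data(seed=1), noise_std=0.5)
print(accuracy(w, b, make_data(seed=3), noise_std=0.5, rng=random.Random(2)))
```

Calling `train(..., noise_std=0.0)` gives an ordinary noise‑free baseline for comparison. The toy shows where the noise enters during training; it is not meant to reproduce the paper's accuracy figures.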

What This Means for the Future of AI Hardware

Although the current prototype is less energy‑efficient than cutting‑edge electronic accelerators, most of its power is spent in supporting electronics, not in the optical core itself. The results show that a programmable, rack‑mountable photonic processor can carry out nearly all the heavy linear algebra in real neural networks, with stable performance over time and a tunable balance between speed and accuracy. As the technology scales to larger optical cores, more wavelengths, and better converters, such light‑based tensor processors could offer ultra‑fast, low‑latency AI engines that complement or, in some cases, offload work from conventional chips, helping future systems handle ever‑growing AI workloads more efficiently.

Citation: Meyer, L., Dijkstra, J., Tebeck, S. et al. Deep neural network inference on an integrated, reconfigurable photonic tensor processor. Nat Commun 17, 3396 (2026). https://doi.org/10.1038/s41467-026-71599-2

Keywords: photonic computing, optical AI accelerator, deep neural networks, silicon photonics, tensor processing