Clear Sky Science

A foundation model for multi-task cross-distribution restoration of fluorescence microscopy images

Sharper Views of the Hidden Cell World

Modern biology depends on microscopes to watch the bustling life inside cells, but the images we capture are often grainy, blurry, or missing fine details. This paper introduces FluoResFM, a new artificial intelligence (AI) model designed to clean up and sharpen fluorescence microscopy images across many different experiments, all within a single system. For scientists, this means clearer pictures with less trial-and-error; for patients and the public, it points toward faster, more reliable biological discoveries and medical insights built on higher-quality data.

Why Microscopy Images Are So Hard to Get Right

Fluorescence microscopes reveal proteins, membranes, and organelles by making them glow, but there is a trade-off: using gentle light to protect living cells often produces noisy, dim, and blurry images. Researchers have turned to deep learning to repair these images, teaching neural networks to remove noise, undo blur, or boost resolution using examples of low- and high-quality image pairs. However, most existing tools are narrow specialists. One model may work only for denoising a particular structure, another only for sharpening a certain microscope type. When such a model is applied to a new structure or imaging setup, it can fail badly, inventing fake details or distorting real ones—a serious problem when scientists rely on these images for precise measurements.

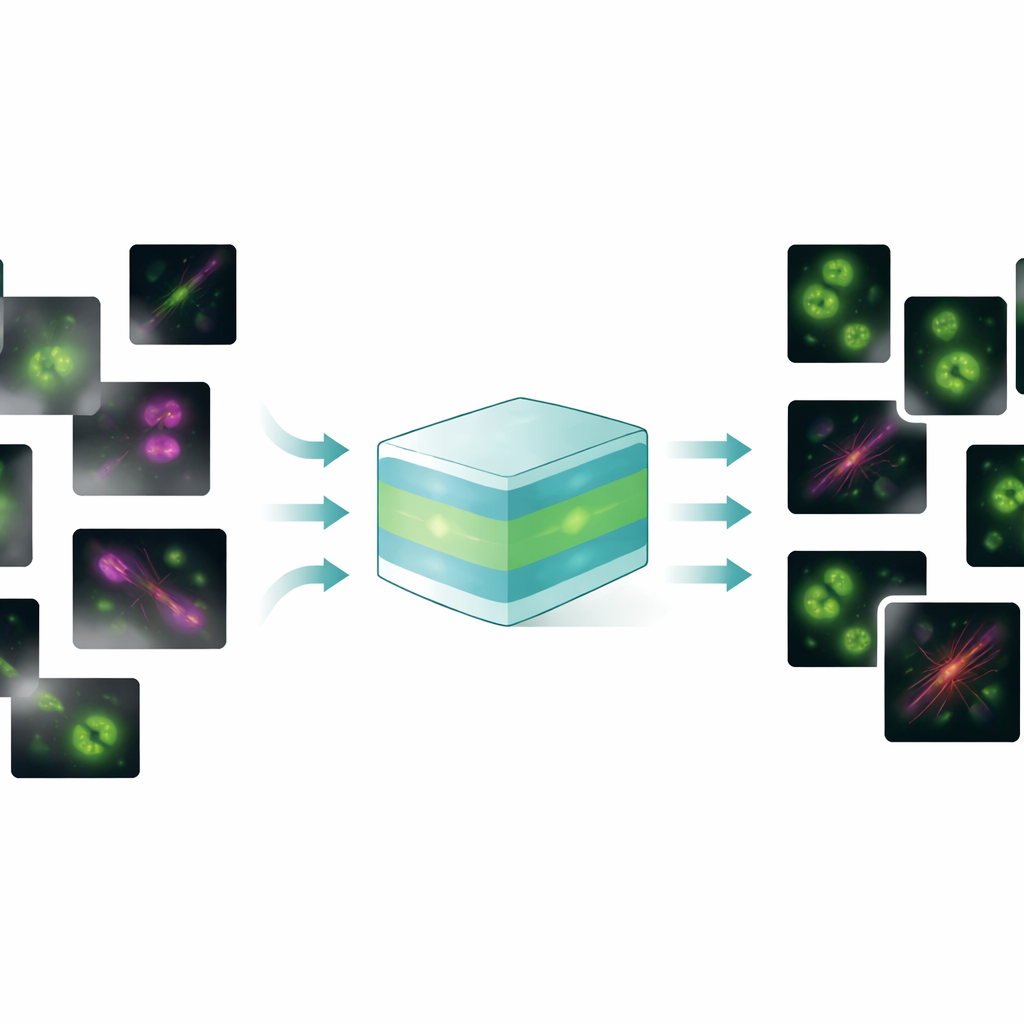

One Model to Handle Many Image Problems

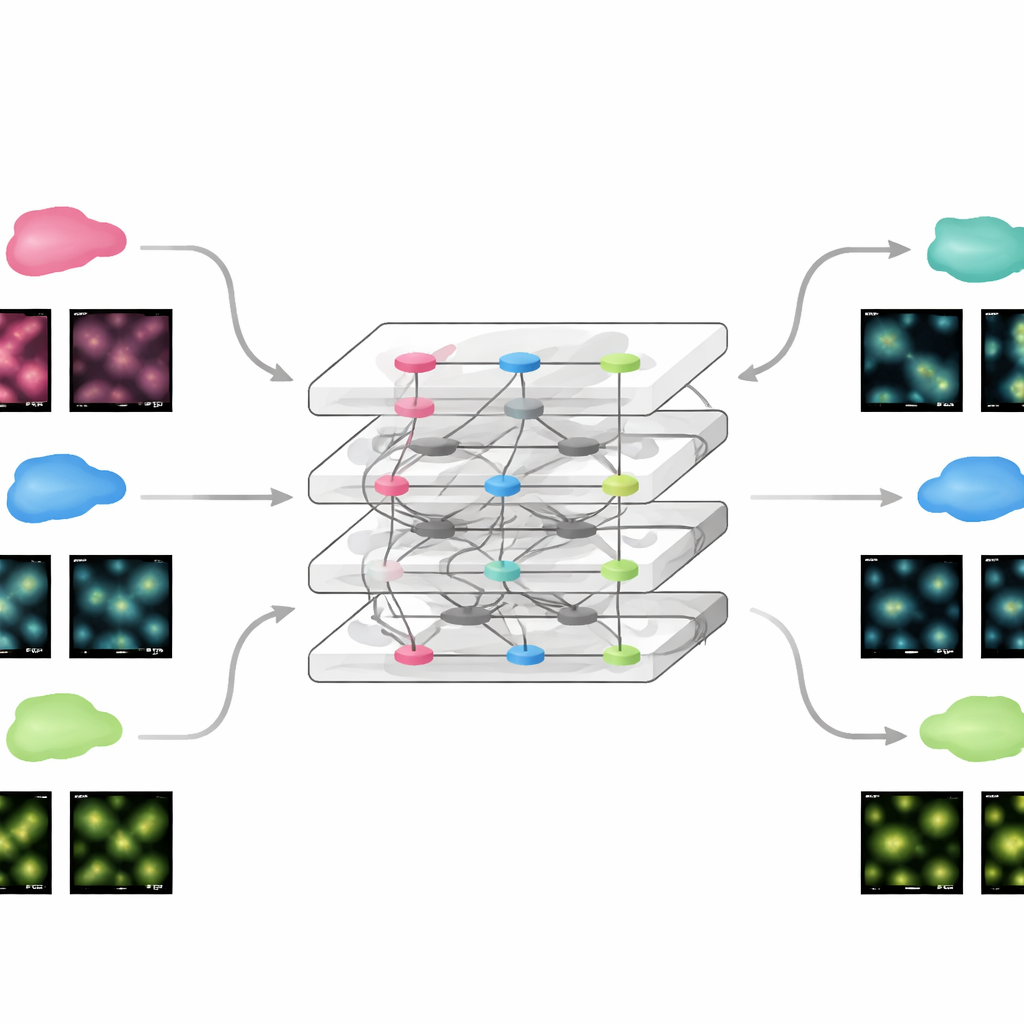

FluoResFM aims to be a generalist: a foundation model that can handle several restoration tasks—denoising, deblurring (deconvolution), and super-resolution—across many types of cell structures and microscopes in a single unified framework. The authors trained it on more than 4.3 million image patches covering over 20 biological structures, from clathrin-coated pits and microtubules to nuclei, lysosomes, and endoplasmic reticulum, collected from a wide variety of imaging conditions. At its core, FluoResFM uses a U‑Net-style architecture, a common design for biomedical image processing, but it is guided by extra information: short text descriptions that encode what task is being done, what structure is present, and how the images were acquired. These text prompts are converted into numerical features using a pre-trained biomedical language–vision model and then fused with image features inside the network through attention layers. In effect, the model is told not just “clean this picture” but “denoise a microtubule image from this kind of microscope toward that kind of target,” which helps it choose the right kind of correction.
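One way to picture the fusion step is as cross-attention, in which image features act as queries and the text-prompt features act as keys and values. The sketch below is a simplified illustration in plain Python, not the authors' implementation: the function names, the toy dimensions, the residual addition, and the omission of learned projection matrices are all assumptions made for clarity.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(image_feats, text_feats):
    """Fuse text features into image features (hypothetical sketch).

    image_feats: N vectors, one per image location, used as queries.
    text_feats:  M vectors, one per prompt token, used as keys and values.
    Returns N vectors: each image feature plus an attention-weighted
    mix of the text features.
    """
    dim = len(text_feats[0])
    scale = 1.0 / math.sqrt(dim)
    fused = []
    for q in image_feats:
        # Similarity between this image location and every prompt token.
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in text_feats]
        weights = softmax(scores)
        # Weighted average of the text features (the "context" vector).
        context = [sum(w * v[d] for w, v in zip(weights, text_feats)) for d in range(dim)]
        # Residual connection: keep the image feature and add the text context.
        fused.append([qi + ci for qi, ci in zip(q, context)])
    return fused

# Toy inputs: two image locations and three prompt tokens, all 2-dimensional.
image_feats = [[1.0, 0.0], [0.0, 1.0]]
text_feats = [[2.0, 0.0], [0.0, 2.0], [1.0, 1.0]]
fused = cross_attention(image_feats, text_feats)
```

In this toy setup, each image location draws most strongly on the prompt token it resembles, which is the intuition behind letting a description such as the task or structure name steer the correction.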

How Text Guidance Improves Image Quality

When the authors compared FluoResFM to a leading earlier foundation model and to a version of itself without text guidance, the text-aware model clearly came out ahead. Across hundreds of internal datasets and 51 previously unseen external datasets, FluoResFM produced images that were closer to the high-quality references by multiple measures of sharpness, similarity, and error. It was especially good at resolving closely spaced features such as ring-shaped pores in the nuclear envelope or tangled microtubule networks, and it avoided the tendency to smear together neighboring structures. The textual prompts also provided a powerful steering mechanism. Changing the task description from “denoising” to “super-resolution”, for example, led the same network to perform very different operations on identical input images. Likewise, specifying the wrong structure type caused the model to reconstruct misleading patterns, such as turning a reticular network into dot-like spots, underscoring both the model’s flexibility and the importance of correct prior knowledge.
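To make "closer to the high-quality references" concrete, the sketch below computes two common error-based measures, root-mean-square error (RMSE) and peak signal-to-noise ratio (PSNR). This summary does not name the specific metrics used in the paper, so the choice of RMSE and PSNR, the function names, and the toy pixel values are assumptions for illustration only.

```python
import math

def rmse(restored, reference):
    """Root-mean-square error between two equally sized 2D images (lists of rows)."""
    diffs = [
        (r - g) ** 2
        for row_r, row_g in zip(restored, reference)
        for r, g in zip(row_r, row_g)
    ]
    return math.sqrt(sum(diffs) / len(diffs))

def psnr(restored, reference, data_range=1.0):
    """Peak signal-to-noise ratio in decibels; higher means closer to the reference."""
    err = rmse(restored, reference)
    if err == 0:
        return math.inf  # identical images
    return 20.0 * math.log10(data_range / err)

# Toy 2x2 images with intensities in [0, 1] (made-up values).
reference = [[0.0, 1.0], [1.0, 0.0]]
noisy = [[0.2, 0.8], [0.8, 0.2]]
restored = [[0.05, 0.95], [0.95, 0.05]]

print("PSNR of noisy input:   ", round(psnr(noisy, reference), 2))
print("PSNR of restored image:", round(psnr(restored, reference), 2))
```

A restoration that moves pixel values toward the reference lowers the RMSE and raises the PSNR, which is the sense in which one model can be said to outperform another on an error measure.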

Adapting Quickly to New Experiments

Because FluoResFM starts from a broad base of experience, it can be fine-tuned to new data using remarkably little additional data. The team showed that by updating only a small part of the network with a single example image from a new dataset, the model reached performance comparable to conventional deep networks trained from scratch on hundreds of images. This held true for static images and for time-lapse movies of moving cellular structures, where the fine-tuned model improved both clarity and the stability of measurements over time. The same strategy allowed FluoResFM to be extended beyond its original training tasks to new ones such as restoring three-dimensional volumes, projecting surfaces from 3D to 2D, making resolution more even along different axes, and handling higher magnification factors. In all these cases, the model produced clearer structures and better quantitative agreement with reference data.
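The idea of updating only a small part of a network can be illustrated with a deliberately tiny stand-in. In the sketch below, a fixed smoothing filter plays the role of the frozen pretrained layers, and only two numbers (a gain and an offset) are fitted on a single image pair by gradient descent. Everything here is hypothetical: the filter, the parameter names, the learning rate, and the 1D "images" are assumptions, and the real model is far larger and nonlinear.

```python
def frozen_backbone(pixels):
    """Stand-in for pretrained layers that stay fixed: a 3-tap moving average."""
    n = len(pixels)
    return [
        (pixels[max(i - 1, 0)] + pixels[i] + pixels[min(i + 1, n - 1)]) / 3.0
        for i in range(n)
    ]

def fine_tune_head(low_quality, target, steps=2000, lr=0.5):
    """Fit only a gain and an offset (the 'small part') on one image pair."""
    feats = frozen_backbone(low_quality)  # backbone is never updated
    gain, offset = 1.0, 0.0
    n = len(feats)
    for _ in range(steps):
        preds = [gain * f + offset for f in feats]
        # Gradients of the mean squared error with respect to gain and offset.
        grad_gain = sum(2.0 * (p - t) * f for p, t, f in zip(preds, target, feats)) / n
        grad_offset = sum(2.0 * (p - t) for p, t in zip(preds, target)) / n
        gain -= lr * grad_gain
        offset -= lr * grad_offset
    return gain, offset

def mse(a, b):
    """Mean squared error between two equally long lists."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

# A single made-up training pair: a dim 1D "image" and its brighter target.
low_quality = [0.1, 0.2, 0.4, 0.2, 0.1]
target = [0.0, 0.3, 1.0, 0.3, 0.0]

feats = frozen_backbone(low_quality)
before = mse(feats, target)  # gain=1, offset=0: no fine-tuning
gain, offset = fine_tune_head(low_quality, target)
after = mse([gain * f + offset for f in feats], target)
print("error before:", round(before, 4), "error after:", round(after, 4))
```

Because only two parameters are trained, a single example is enough to fit them, which mirrors in miniature why restricting the update to a small part of a pretrained model reduces the amount of new data needed.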

Helping Other Tools See Cells More Clearly

FluoResFM is not just a way to make pretty pictures; it also strengthens downstream analysis tools that depend on image quality. When the authors fed restored images into popular automated segmentation programs used to outline nuclei, membranes, and organelles, those tools detected more objects, missed fewer, and produced shapes that agreed better with expert-derived ground truth. This improvement was seen across dozens of datasets featuring many cell types and structures. To lower the barrier to everyday use, the team packaged FluoResFM as a plugin for napari, a widely used interactive image viewer, so that biologists can restore images and fine-tune the model within their usual workflows without needing to write code.
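Statements such as "detected more objects, missed fewer, and produced shapes that agreed better" are typically backed by matching predicted objects against ground-truth objects. The sketch below shows one common approach, greedy matching by intersection over union (IoU). The summary does not describe the authors' exact evaluation protocol, so the matching rule, the 0.5 threshold, the function names, and the toy objects are assumptions.

```python
def iou(a, b):
    """Intersection over union of two objects given as sets of (row, col) pixels."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def score_segmentation(predicted, truth, threshold=0.5):
    """Greedy one-to-one matching of predicted objects to ground-truth objects."""
    unmatched = list(range(len(predicted)))
    matched_ious = []
    for gt in truth:
        best_idx, best_iou = None, 0.0
        for idx in unmatched:
            value = iou(predicted[idx], gt)
            if value > best_iou:
                best_idx, best_iou = idx, value
        # Count a detection only if the best overlap is large enough.
        if best_idx is not None and best_iou >= threshold:
            unmatched.remove(best_idx)
            matched_ious.append(best_iou)
    detected = len(matched_ious)
    return {
        "detected": detected,
        "missed": len(truth) - detected,
        "false_positives": len(unmatched),
        "mean_iou": sum(matched_ious) / detected if detected else 0.0,
    }

def square(row, col, size):
    """Helper: a filled square object as a set of pixel coordinates."""
    return {(row + r, col + c) for r in range(size) for c in range(size)}

# Made-up example: two true objects, segmented from a raw and a restored image.
truth = [square(0, 0, 2), square(5, 5, 2)]
from_raw = [square(0, 0, 1)]                                # one undersized object
from_restored = [square(0, 0, 2), {(5, 5), (5, 6), (6, 5)}]

raw_scores = score_segmentation(from_raw, truth)
restored_scores = score_segmentation(from_restored, truth)
print(raw_scores)
print(restored_scores)
```

In this toy case the segmentation of the restored image finds both objects with high overlap, while the segmentation of the raw image finds neither at the chosen threshold.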

What This Means for Future Microscopy

In simple terms, this work shows that a single, text-guided AI model can clean up and sharpen a wide range of fluorescence microscopy images, adapt quickly to new experiments, and boost the performance of other analysis tools. By weaving together knowledge about what is being imaged, how it was captured, and what kind of improvement is desired, FluoResFM produces more trustworthy images than task-specific networks trained in isolation. As more data and tasks are added, such foundation models could become standard companions to microscopes, turning imperfect raw snapshots into reliable windows on the hidden architecture and dynamics of living cells.

Citation: Lu, Q., Liu, X., Feng, Q. et al. A foundation model for multi-task cross-distribution restoration of fluorescence microscopy images. Nat Commun 17, 3729 (2026). https://doi.org/10.1038/s41467-026-70307-4

Keywords: fluorescence microscopy, image restoration, deep learning, foundation models, cell imaging