Clear Sky Science

Deep learning-based image compression for wireless communications: impacts on robustness, throughput, and latency

Why smart image delivery over the air matters

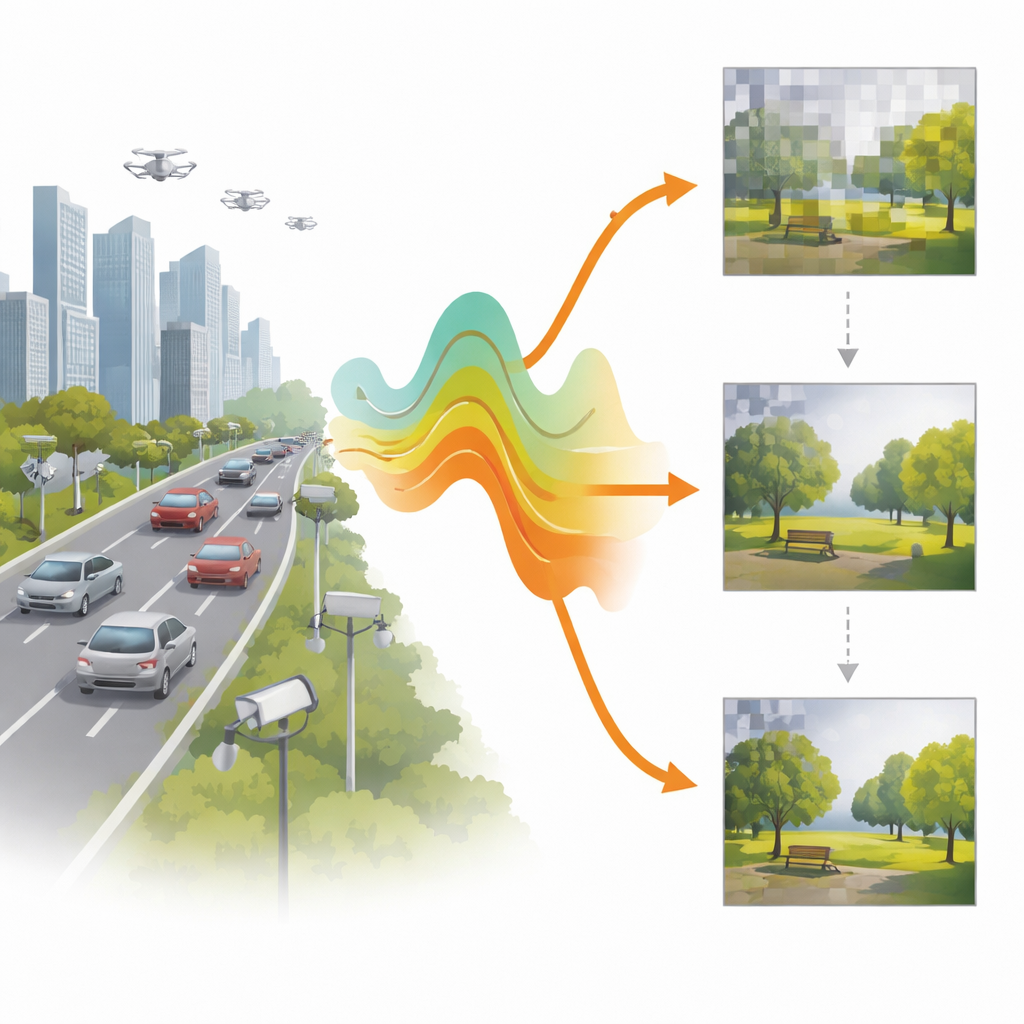

Every day, phones, cars, drones, and tiny sensors capture images that must travel wirelessly—sometimes from crowded city streets, sometimes from remote or harsh environments. When the radio link is weak or noisy, today’s image formats can stall, blur, or fail completely, which is dangerous for tasks like autonomous driving or remote monitoring. This paper explores how modern deep learning can redesign image compression so that pictures arrive faster and more reliably, even when the wireless channel is highly unpredictable.

The wireless bottleneck for pictures

Traditional formats such as JPEG and WebP, and video standards like HEVC, were built for stable wired or high‑quality links. They squeeze images into fewer bits but are fragile: a few flipped bits in the compressed stream can ruin the entire picture, forcing heavy error correction and retransmissions. In real wireless channels, especially those with strong fading and low signal‑to‑noise ratio (SNR), that fragility translates into long waits before any usable image appears. Yet many modern applications, from IoT cameras to self‑driving cars, need a quick, even if rough, view of the scene first, with refinements following only as the link allows.

Progressive pictures that adapt to the air

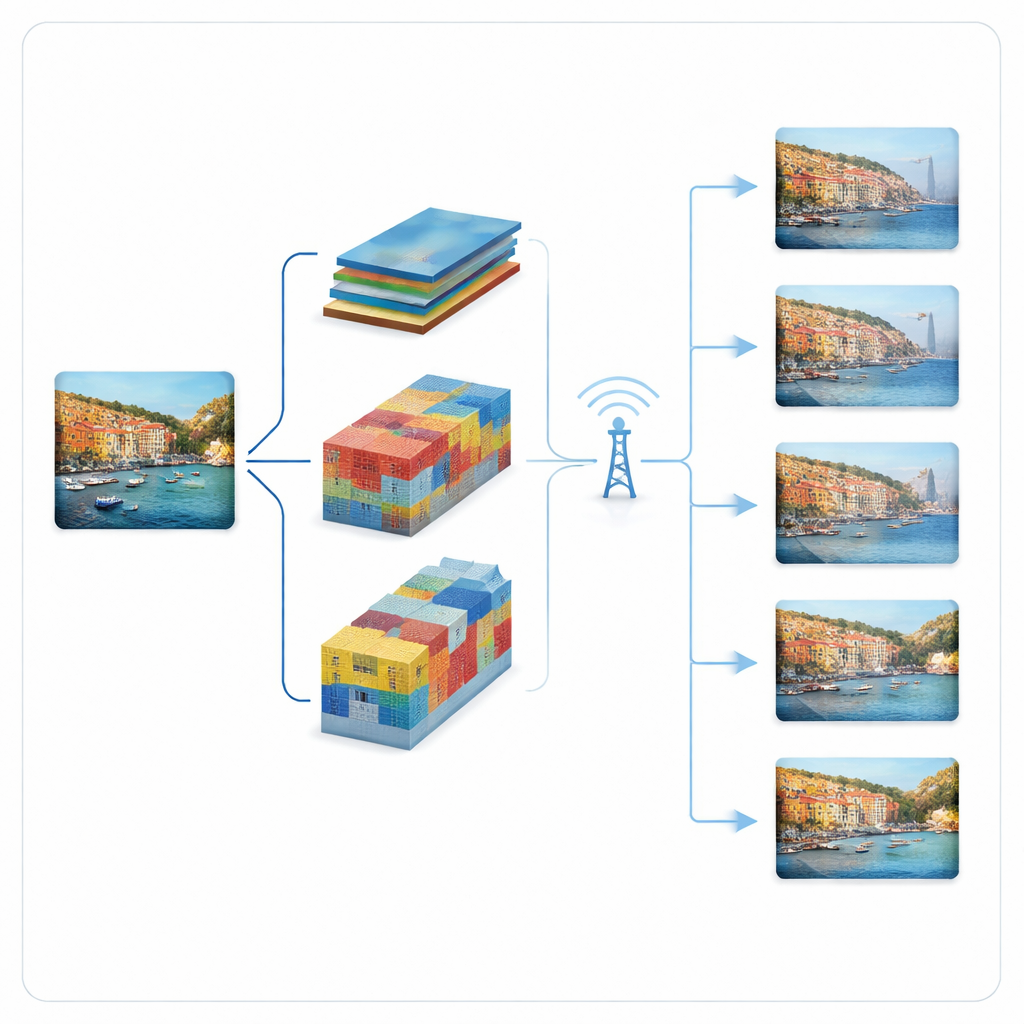

The authors build an adaptive, progressive transmission pipeline around two leading deep learning image compressors: a “hyperprior” model and a VQGAN model. Instead of sending one rigid bitstream per image, these systems break the compressed representation into ordered pieces. The most important pieces go first and already allow a coarse reconstruction; later pieces add detail when the channel improves or more bandwidth becomes available. The hyperprior model represents the picture as compact feature maps whose contribution to quality is ranked by importance. The VQGAN model represents the picture using codebook entries; it sends coarse codewords first and then residual refinements in stages. In both cases, the transmitter consults the current channel state and chooses how many pieces it can safely send in that time slot.
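The per-slot decision described above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function names, the chunk sizes, and the use of Shannon capacity as the bit budget are all assumptions made for illustration. The only idea taken from the text is that chunks are ordered by importance and the transmitter sends as long a prefix as the current channel state allows.

```python
import math
from bisect import bisect_right
from itertools import accumulate


def slot_budget_bits(snr_db: float, bandwidth_hz: float, slot_s: float) -> int:
    """Idealized bit budget for one time slot (Shannon capacity; an assumption)."""
    snr_linear = 10 ** (snr_db / 10)
    return int(bandwidth_hz * math.log2(1 + snr_linear) * slot_s)


def chunks_to_send(chunk_bits: list[int], budget_bits: int) -> int:
    """Count the leading chunks whose total size fits within the budget.

    chunk_bits must already be ordered from most to least important, so
    sending a prefix always includes the base layer first.
    """
    cumulative = list(accumulate(chunk_bits))
    return bisect_right(cumulative, budget_bits)


# Hypothetical chunk sizes in bits: base layer first, then refinements.
chunk_bits = [2_000, 3_000, 6_000, 12_000]
budget = slot_budget_bits(snr_db=0.0, bandwidth_hz=5_000, slot_s=1.0)
print(chunks_to_send(chunk_bits, budget))  # prints 2
```

With these made-up numbers the budget is 5,000 bits, so the base layer and the first refinement fit and the remaining chunks wait for a better slot. A real system would budget below capacity to leave room for coding overhead and channel-estimation error.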

Testing in harsh wireless conditions

To evaluate these ideas, the study simulates image transmission over a Rayleigh fading channel, a standard model where signal strength rises and falls unpredictably. Using the Kodak set of high‑quality test images, the authors compare their progressive hyperprior and progressive VQGAN against an adaptive WebP baseline that also adjusts its compression level to the channel. Crucially, they measure not only image quality but also throughput (how many pixels per second are delivered) and waiting time—the delay until an image is successfully received. This waiting time is often ignored in deep‑learning communication work, but it dominates user experience in delay‑sensitive applications.
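To make the two delay-oriented metrics concrete, here is a hedged Python sketch of a Rayleigh block-fading simulation. It relies on the standard property that the power gain of a Rayleigh channel is exponentially distributed with unit mean. Everything else is an assumption for illustration rather than the study's setup: the function names, the slot structure, and the idealized rule that each slot delivers exactly its Shannon-capacity worth of bits.

```python
import math
import random


def rayleigh_snr_samples(avg_snr_db: float, n_slots: int, seed: int = 0) -> list[float]:
    """Per-slot instantaneous SNR (linear scale) under Rayleigh block fading."""
    rng = random.Random(seed)
    avg_snr = 10 ** (avg_snr_db / 10)
    # |h|^2 is exponential with mean 1, so the SNR is the average scaled by it.
    return [avg_snr * rng.expovariate(1.0) for _ in range(n_slots)]


def waiting_slots(image_bits: int, snrs: list[float],
                  bandwidth_hz: float, slot_s: float):
    """Slots needed until all image bits are delivered, or None if never."""
    delivered = 0.0
    for slot, snr in enumerate(snrs, start=1):
        delivered += bandwidth_hz * math.log2(1 + snr) * slot_s
        if delivered >= image_bits:
            return slot
    return None


def throughput_pixels_per_s(n_pixels: int, slots: int, slot_s: float) -> float:
    """Pixels delivered per second of waiting time."""
    return n_pixels / (slots * slot_s)


# Hypothetical example: a 768x512 image compressed to 40,000 bits.
snrs = rayleigh_snr_samples(avg_snr_db=0.0, n_slots=200)
slots = waiting_slots(40_000, snrs, bandwidth_hz=5_000, slot_s=0.01)
if slots is not None:
    print(slots, throughput_pixels_per_s(768 * 512, slots, slot_s=0.01))
```

Because deep fades deliver almost no bits, the waiting time in this model is driven by the worst slots rather than the average SNR, which is why a smaller base layer shortens the time to a first usable image.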

Speed versus robustness: what wins where

The results show that in very noisy conditions, standard adaptive WebP essentially gives up: the channel cannot support even its lowest quality setting, so no complete image is delivered. In contrast, both progressive learned models still provide viewable images, because they can fall back to sending only a minimal base layer. Among them, the progressive hyperprior model achieves the lowest latency and highest throughput across most low‑SNR settings, thanks to its very compact, finely ordered feature maps. This makes it especially attractive when rapid response is vital, such as for interactive vision systems. The progressive VQGAN, while slightly less efficient, offers higher visual quality in the harshest conditions and can tolerate bit errors without relying on separate error‑correction codes, which reduces computational load and system complexity.
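The summary does not say why a codebook representation tolerates bit errors. One plausible contributing factor, assumed here and not confirmed by the source, is that codebook indices can be sent as fixed-length words, so a flipped bit corrupts a single index instead of desynchronizing the rest of the stream. The Python sketch below demonstrates only that assumed property; the helper names and the 10-bit index width are hypothetical.

```python
def pack_indices(indices: list[int], bits_per_index: int) -> list[int]:
    """Serialize codebook indices as fixed-length words, most significant bit first."""
    bits = []
    for idx in indices:
        bits.extend((idx >> k) & 1 for k in reversed(range(bits_per_index)))
    return bits


def unpack_indices(bits: list[int], bits_per_index: int) -> list[int]:
    """Rebuild indices from consecutive fixed-length words."""
    indices = []
    for start in range(0, len(bits), bits_per_index):
        value = 0
        for bit in bits[start:start + bits_per_index]:
            value = (value << 1) | bit
        indices.append(value)
    return indices


def flip_bit(bits: list[int], position: int) -> list[int]:
    """Return a copy of the bit list with one bit inverted (a channel error)."""
    corrupted = list(bits)
    corrupted[position] ^= 1
    return corrupted


# Hypothetical 10-bit indices into a 1024-entry codebook.
sent = [5, 200, 17, 1023]
received = unpack_indices(flip_bit(pack_indices(sent, 10), 13), 10)
damaged = [i for i, (a, b) in enumerate(zip(sent, received)) if a != b]
print(received, damaged)  # prints [5, 136, 17, 1023] [1]
```

Only the second index changes, so in a decoder built on such a layout the damage would stay confined to one image patch. A variable-length entropy-coded stream typically lacks this locality, since one wrong bit can shift the boundaries of every later symbol.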

What this means for future wireless imaging

In simple terms, the paper shows that teaching neural compressors to send images in smart, bite‑sized chunks transforms how pictures travel over unreliable wireless links. One design (hyperprior) is optimized for getting “good enough” images on screen with minimal delay, while the other (VQGAN) is tuned to keep images sharp even when the channel is very bad and extra protection codes are impractical. Together, they demonstrate that progressive, learned compression can keep cameras and vision systems operating smoothly where today’s codecs stumble, pointing toward future networks where the quality, speed, and robustness of image delivery can be flexibly balanced in real time.

Citation: Naseri, M., Ashtari, P., Seif, M. et al. Deep learning-based image compression for wireless communications: impacts on robustness, throughput, and latency. npj Wirel. Technol. 2, 14 (2026). https://doi.org/10.1038/s44459-025-00019-6

Keywords: wireless image transmission, deep learning compression, progressive coding, low latency communication, robust codecs