Clear Sky Science

Simulation-based inference at the theoretical limit for fast, robust microstructural MRI with minimal diffusion data

Why Faster Brain Scans Matter

Magnetic resonance imaging (MRI) can reveal fine details of brain tissue, but the most informative scans are often slow and noisy. Long scan times are uncomfortable for patients, hard to schedule in busy hospitals, and nearly impossible for children or very ill people. This study asks a simple question with big consequences: can we use smart computer simulations and artificial intelligence to squeeze the same rich information out of much shorter, messier diffusion MRI scans, without sacrificing reliability?

Looking Inside the Brain with Moving Water

Diffusion MRI tracks how water molecules jostle and wander through brain tissue. Because water moves differently along nerve fibers, through cell bodies, or around damaged regions, these patterns can act like a fingerprint of the brain’s microscopic structure. Over the years, scientists have built several families of models to translate diffusion signals into maps of tissue properties. Simpler approaches, such as diffusion tensor imaging, summarize how easily water moves and how directional that movement is. More advanced methods, such as diffusion kurtosis imaging and biophysical models like CHARMED and AxCaliber, aim to capture details like the density of fibers and even typical axon diameters. These maps could serve as “virtual biopsies,” offering clues about disease without surgery—but they usually demand many repeated measurements and long scans.

The Bottleneck of Traditional Fitting

Turning raw diffusion measurements into meaningful maps is a mathematical fitting problem: the model’s parameters are adjusted until the predicted signal matches what the scanner saw. The most common tools today, like non-linear least squares, do this by minimizing the squared difference between model and data. While simple and widely available, these methods work best when there are far more measurements than strictly needed—the scan-time equivalent of taking ten times as many photos just to be safe. They also struggle when noise is high or when starting guesses are poor, which is common in real clinical data. Newer statistical approaches can help but are often slow, sensitive to assumptions about noise, and rarely used outside research centers.
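To make the fitting idea concrete, here is a minimal Python sketch. It is not the pipeline used in the study. It assumes the simplest possible diffusion model, a single exponential decay S(b) = S0 · exp(−b · D), and fits it with a hand-written Gauss-Newton least-squares loop. The function names, b-values, starting guesses, and the noise-free toy signal are all illustrative assumptions.

```python
import math

def model(b, s0, d):
    """Mono-exponential decay; b in ms/um^2, d in um^2/ms (assumed units)."""
    return s0 * math.exp(-b * d)

def sse(bvals, signal, s0, d):
    """Sum of squared differences between measured and predicted signal."""
    return sum((y - model(b, s0, d)) ** 2 for b, y in zip(bvals, signal))

def fit_monoexp(bvals, signal, s0=0.8, d=0.5, n_iter=50):
    """Gauss-Newton least squares for (s0, d) with simple step halving."""
    for _ in range(n_iter):
        a11 = a12 = a22 = g1 = g2 = 0.0
        for b, y in zip(bvals, signal):
            e = math.exp(-b * d)
            j1, j2 = e, -b * s0 * e      # derivatives of the model w.r.t. s0 and d
            r = y - s0 * e               # residual: data minus prediction
            a11 += j1 * j1
            a12 += j1 * j2
            a22 += j2 * j2
            g1 += j1 * r
            g2 += j2 * r
        det = a11 * a22 - a12 * a12
        if det <= 1e-15:                 # normal equations are singular; stop
            break
        ds0 = (a22 * g1 - a12 * g2) / det
        dd = (a11 * g2 - a12 * g1) / det
        # Halve the step until the squared error no longer increases.
        step, current = 1.0, sse(bvals, signal, s0, d)
        while step > 1e-8 and sse(bvals, signal, s0 + step * ds0, d + step * dd) > current:
            step *= 0.5
        s0 += step * ds0
        d += step * dd
    return s0, d

# Toy example: five b-values and a noise-free signal with s0 = 1.0, d = 0.8.
bvals = [0.0, 0.5, 1.0, 2.0, 3.0]
signal = [model(b, 1.0, 0.8) for b in bvals]
s0_hat, d_hat = fit_monoexp(bvals, signal)
print(f"s0 = {s0_hat:.3f}, d = {d_hat:.3f}")
```

With clean data and a sensible starting guess, the loop recovers the true values. The difficulties described above appear once noise is added, the starting guess is far off, or the number of measurements shrinks toward the number of parameters.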

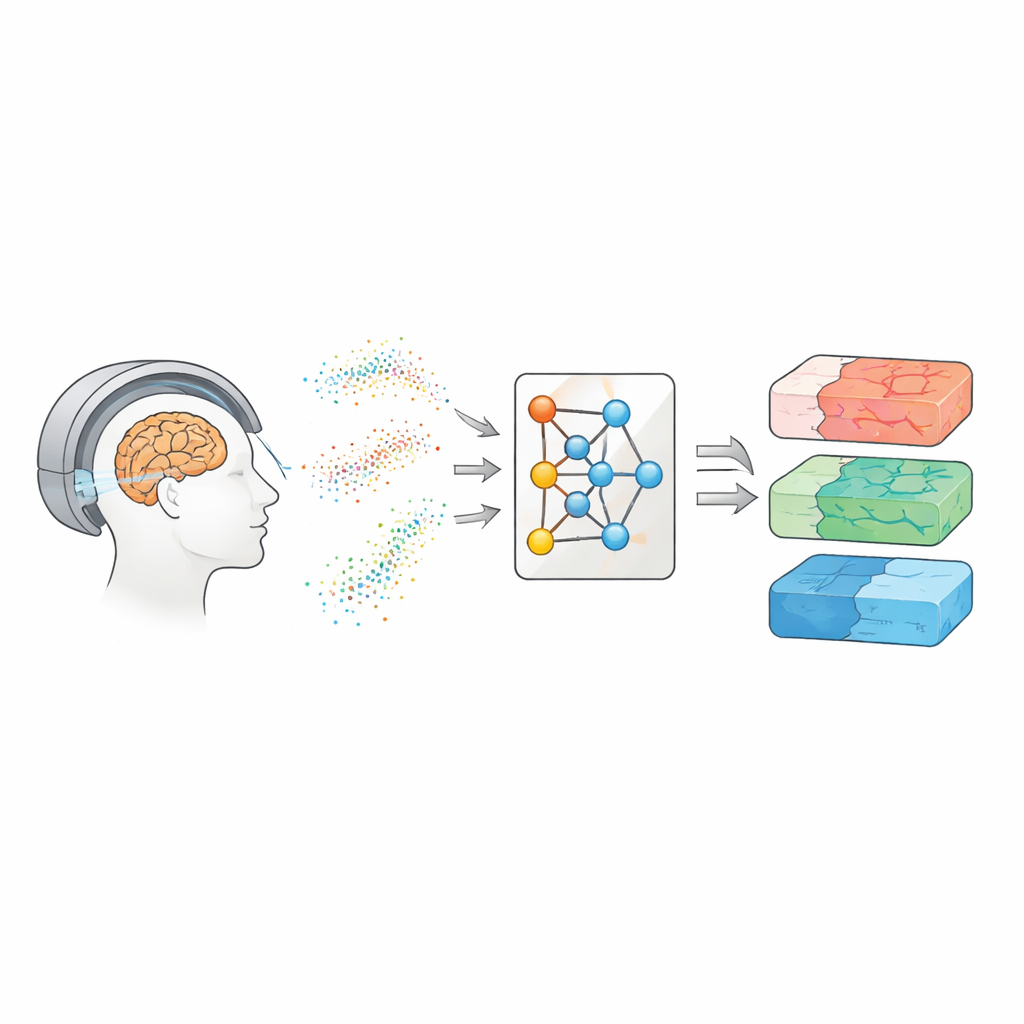

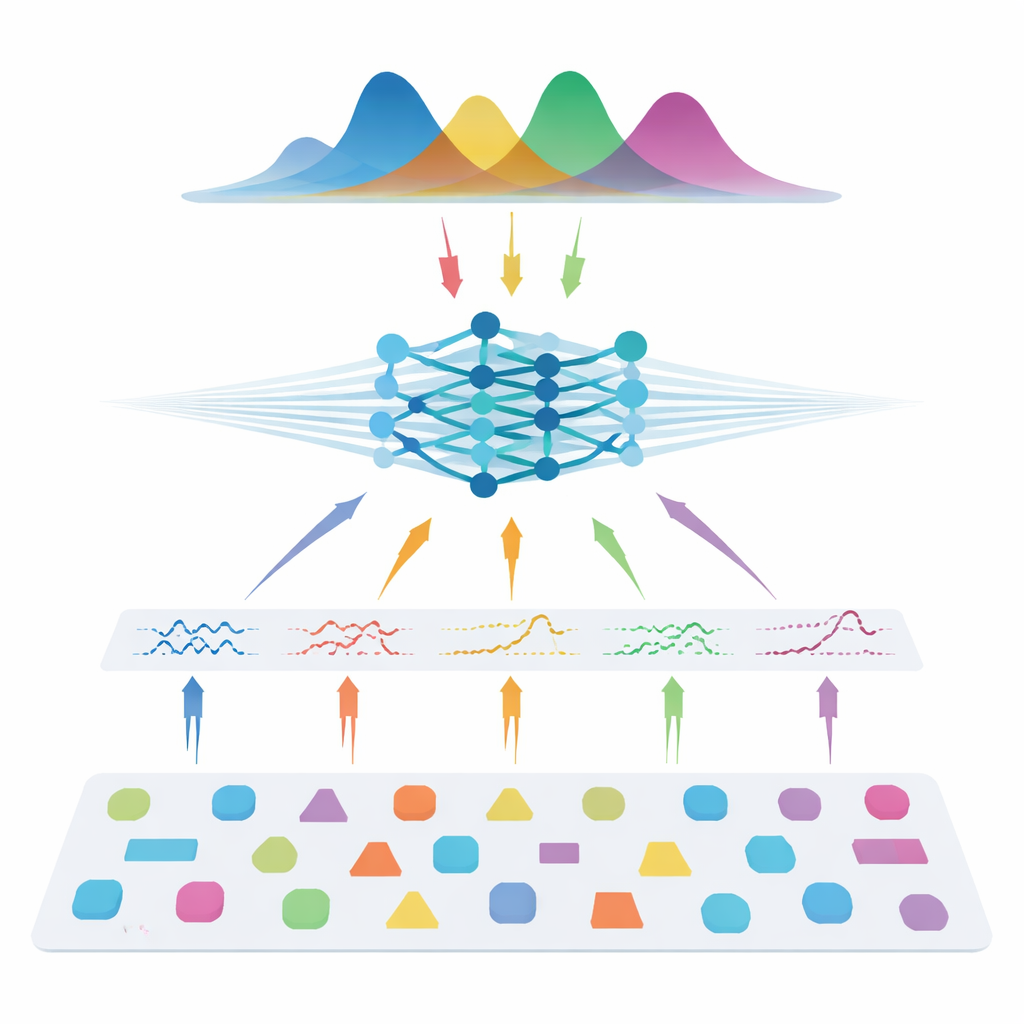

Learning from Simulations Instead of Patients

The authors take a different route: instead of learning directly from patient scans, they teach a neural network using entirely simulated data. They draw many possible combinations of model parameters, use the known physics of diffusion to generate synthetic signals, add realistic noise, and then train a so-called neural posterior estimator. This network learns to output a full probability distribution over the parameters that could have produced a given signal, naturally providing both a best guess and an uncertainty estimate. To make the method flexible across different scanner settings, the team does not feed in raw measurements. Instead, they compress each signal into compact, physics-informed features that summarize how it varies with gradient strength, direction, and diffusion time. These features capture the essence of the signal while remaining largely independent of the exact acquisition scheme.
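The data-generation half of this recipe can be sketched in a few lines of Python. This is a toy under stated assumptions, not the authors' code: it assumes a simple axially symmetric diffusion model, uniform parameter ranges, Rician noise (the usual description of noise in magnitude MRI images), and per-shell mean and variance as stand-ins for the paper's physics-informed features. All function names and numbers are hypothetical, and the neural network training step itself is left out.

```python
import math
import random

def random_unit_vector(rng):
    """Draw a direction uniformly on the sphere."""
    while True:
        v = [rng.gauss(0.0, 1.0) for _ in range(3)]
        norm = math.sqrt(sum(c * c for c in v))
        if norm > 1e-12:
            return [c / norm for c in v]

def simulate_signal(d_par, d_perp, fiber, scheme):
    """Noise-free signal of an axially symmetric tensor (assumed toy model)."""
    signal = []
    for b, g in scheme:
        cos2 = sum(gi * ni for gi, ni in zip(g, fiber)) ** 2
        adc = d_perp + (d_par - d_perp) * cos2   # apparent diffusivity along g
        signal.append(math.exp(-b * adc))
    return signal

def add_rician_noise(signal, sigma, rng):
    """Magnitude of the signal plus complex Gaussian noise."""
    return [math.hypot(s + rng.gauss(0.0, sigma), rng.gauss(0.0, sigma))
            for s in signal]

def shell_features(signal, scheme):
    """Per-shell mean and variance across directions (illustrative features)."""
    shells = {}
    for (b, _), s in zip(scheme, signal):
        shells.setdefault(b, []).append(s)
    feats = []
    for b in sorted(shells):
        vals = shells[b]
        mean = sum(vals) / len(vals)
        var = sum((v - mean) ** 2 for v in vals) / len(vals)
        feats.extend([mean, var])
    return feats

def make_training_set(n_samples, scheme, sigma=0.02, seed=0):
    """Sample parameters, simulate, add noise, compress into features."""
    rng = random.Random(seed)
    pairs = []
    for _ in range(n_samples):
        d_perp = rng.uniform(0.1, 1.0)      # um^2/ms, assumed prior range
        d_par = rng.uniform(d_perp, 3.0)    # um^2/ms, assumed prior range
        fiber = random_unit_vector(rng)
        clean = simulate_signal(d_par, d_perp, fiber, scheme)
        noisy = add_rician_noise(clean, sigma, rng)
        pairs.append(((d_par, d_perp), shell_features(noisy, scheme)))
    return pairs

# Hypothetical acquisition: two b-shells (ms/um^2) with six directions each.
scheme_rng = random.Random(1)
scheme = [(b, random_unit_vector(scheme_rng)) for b in (1.0, 2.0) for _ in range(6)]
training_pairs = make_training_set(1000, scheme)
print(training_pairs[0])
```

Each entry pairs the parameters that generated a signal with the features computed from it. A neural posterior estimator would then be trained on many such pairs to map features back to a probability distribution over parameters.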

Matching the Best with a Fraction of the Data

Once trained, the system is tested on synthetic data with known “ground truth” and on several human datasets, including healthy volunteers and people with multiple sclerosis. Across all three model families (diffusion tensor imaging, diffusion kurtosis imaging, and AxCaliber), the simulation-based approach recovers key microstructural metrics accurately even when using as few as 7–22 measurements instead of full protocols with 69–271 measurements. That can mean acquiring up to 90% fewer measurements. In noisy conditions or when the number of measurements is severely reduced, the new method consistently outperforms standard fitting, producing cleaner maps that preserve important structure. It also detects expected changes inside multiple sclerosis lesions and recovers known patterns of axon sizes across the corpus callosum, suggesting that it generalizes well to both healthy and diseased tissue.
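The size of the saving follows from simple arithmetic on the measurement counts. The short Python check below assumes that the smallest reduced protocol (7 measurements) pairs with the smallest full protocol (69) and the largest reduced protocol (22) with the largest full one (271). The text does not spell out this pairing, so treat it as an illustration only.

```python
def reduction_percent(reduced, full):
    """Percentage of measurements saved relative to the full protocol."""
    return 100.0 * (1.0 - reduced / full)

# Assumed pairings of reduced and full protocols (not stated in the text).
small_protocol = reduction_percent(7, 69)
large_protocol = reduction_percent(22, 271)

print(f"{small_protocol:.1f}% fewer measurements")  # 89.9% fewer measurements
print(f"{large_protocol:.1f}% fewer measurements")  # 91.9% fewer measurements
```

Under that assumption, both cases land close to the roughly 90% figure.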

What This Means for Patients and Clinics

The takeaway for non-specialists is that nearly the same microscopic view of brain tissue can be obtained from much shorter, and potentially lower-quality, diffusion MRI scans by leaning on simulations and advanced inference. Instead of asking the scanner for more data, the authors ask computers to make better use of less data. This could shorten exam times, broaden access to advanced imaging in routine hospitals, and even rescue older studies that were acquired with suboptimal settings. Because the method is trained entirely on simulated signals, it is also privacy-friendly and easier to share and scale. If widely adopted, this style of simulation-based inference could help close the gap between cutting-edge research protocols and everyday clinical MRI, bringing virtual tissue biopsies closer to standard care.

Citation: Eggl, M.F., De Santis, S. Simulation-based inference at the theoretical limit for fast, robust microstructural MRI with minimal diffusion data. Commun Med 6, 275 (2026). https://doi.org/10.1038/s43856-026-01614-6

Keywords: diffusion MRI, brain microstructure, simulation-based inference, neural networks, scan time reduction