Clear Sky Science · en

Multimodal models for skin cancer classification using clinical freetext and dermatoscopic images

Why smarter skin checks matter

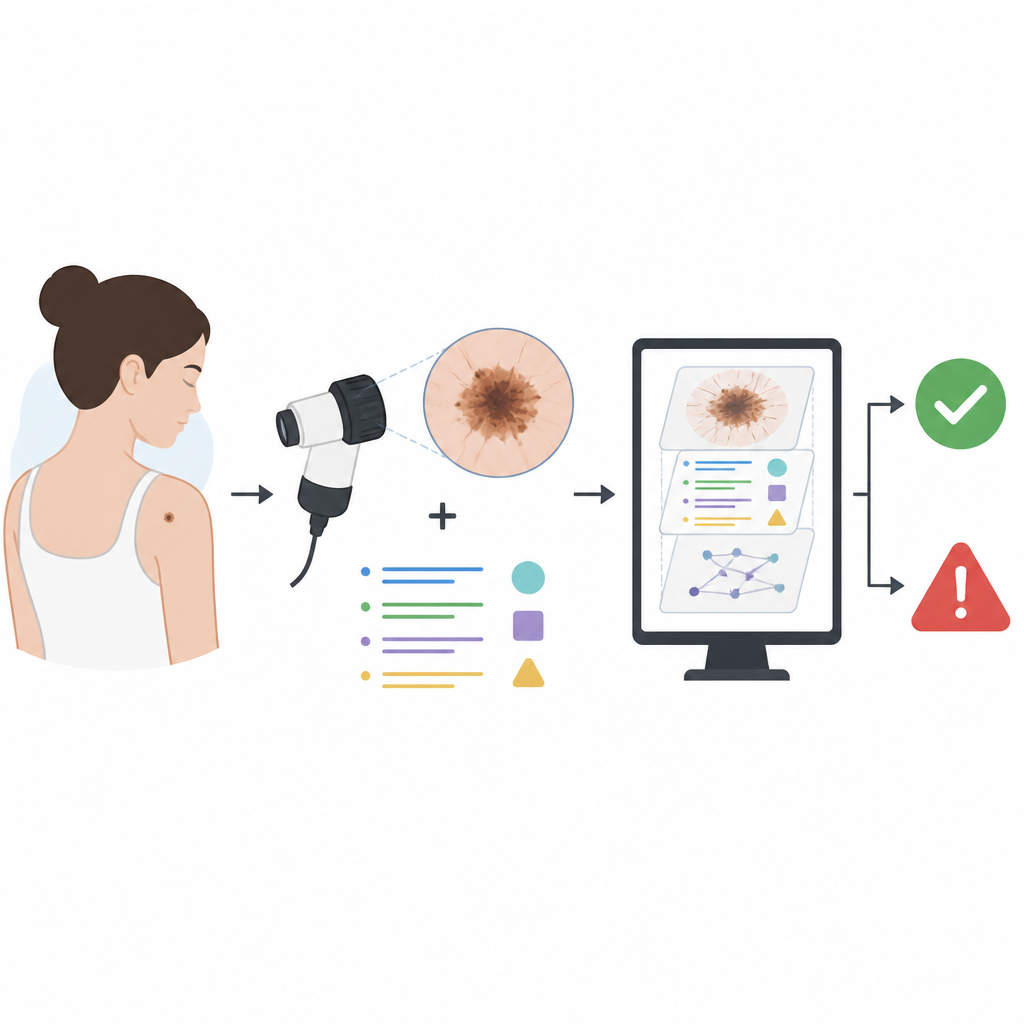

Skin cancer is common, but when it is caught early, people usually do very well. Doctors already use close-up photographs of moles to decide which ones look worrying. This study asks a simple question with big implications: if computers could also read the doctor’s notes about each mole, not just the pictures, could they spot skin cancers more accurately and more fairly?

Pictures plus words tell a fuller story

The researchers built a large dataset from routine dermatology clinics in the United Kingdom. It included 5481 close-up dermatoscopic images from 4538 adults, along with basic patient details such as age and skin type, and four kinds of clinical notes. These notes covered how the lesion looked and changed over time, whether skin cancer ran in the family, how much sun exposure the person had, and what the surgeon thought and planned to do. Each case was labelled as either benign or malignant, with malignant cases confirmed by biopsy whenever possible.

Hidden clues inside clinical notes

Unlike simple tick-box data, free text allows doctors to describe subtle features: a mole that has grown darker, a spot that bleeds, or a patient who worked outdoors for years. Such details can be highly informative, but they can also give away the answer. Many notes contain what the authors call leading language: phrases that state or strongly hint at the diagnosis or treatment, such as “basal cell carcinoma, refer for biopsy” or “no treatment required.” If a machine learning model simply latches onto these shortcuts, it may appear very accurate on past data while learning little about how to truly recognise cancer from the images or from patient-reported descriptions.

Teaching computers to ignore shortcuts

To tackle this problem, the team designed several levels of text cleaning. Simple rules first stripped out the explicit names of skin diseases and the words benign and malignant. They then used a large language model to perform more subtle filtering. In one setting, key diagnostic phrases and treatment plans were replaced with neutral tags so the authors could measure how much each type of statement boosted performance. In the strictest setting, only factual information that a patient could reasonably provide, such as how long a mole had been present or past sun habits, was kept. This approach aimed to move the text closer to what a patient-facing system might see rather than relying on inside clues from specialists.

What the models actually learned

When the computer model relied on images alone, it performed well, but adding unfiltered notes made it significantly better. The main accuracy measure, the area under the receiver operating characteristic curve (AUROC), rose from 0.909 with images only to 0.970 with images plus raw notes. Even when all obvious diagnostic language was removed, combining images with carefully filtered text still reached an AUROC of about 0.948, higher than either source on its own. Experiments with tagged phrases showed that simple actions like “refer to hospital” conveyed nearly as much information as an outright cancer label, confirming that many notes carry strong built-in bias. The authors also examined performance across age groups and skin tone categories and found relatively low levels of unfairness, both for the image-only and for the fully multimodal models.

What this means for future skin checks

For non-experts, the key takeaway is that doctor’s notes contain real, useful clues that can help computers support skin cancer decisions, but they must be handled with care. If models are allowed to read unfiltered notes, they may learn to mimic the wording of doctors rather than to recognise risky moles themselves. This study shows that by thoughtfully cleaning the text and blending it with images and basic patient data, it is possible to boost accuracy while reducing hidden bias. In time, such multimodal tools could help primary care clinicians make better referrals and shorten waits for specialist care, while also laying the groundwork for safe, text-aware systems that might one day assist patients directly.

Citation: Watson, M., Winterbottom, T., Hudson, T. et al. Multimodal models for skin cancer classification using clinical freetext and dermatoscopic images. Commun Med 6, 277 (2026). https://doi.org/10.1038/s43856-026-01456-2

Keywords: skin cancer, machine learning, dermatology, clinical notes, medical imaging