Clear Sky Science

The impact of tissue detection on diagnostic artificial intelligence algorithms in prostate digital pathology

Why the First Step in Cancer AI Matters

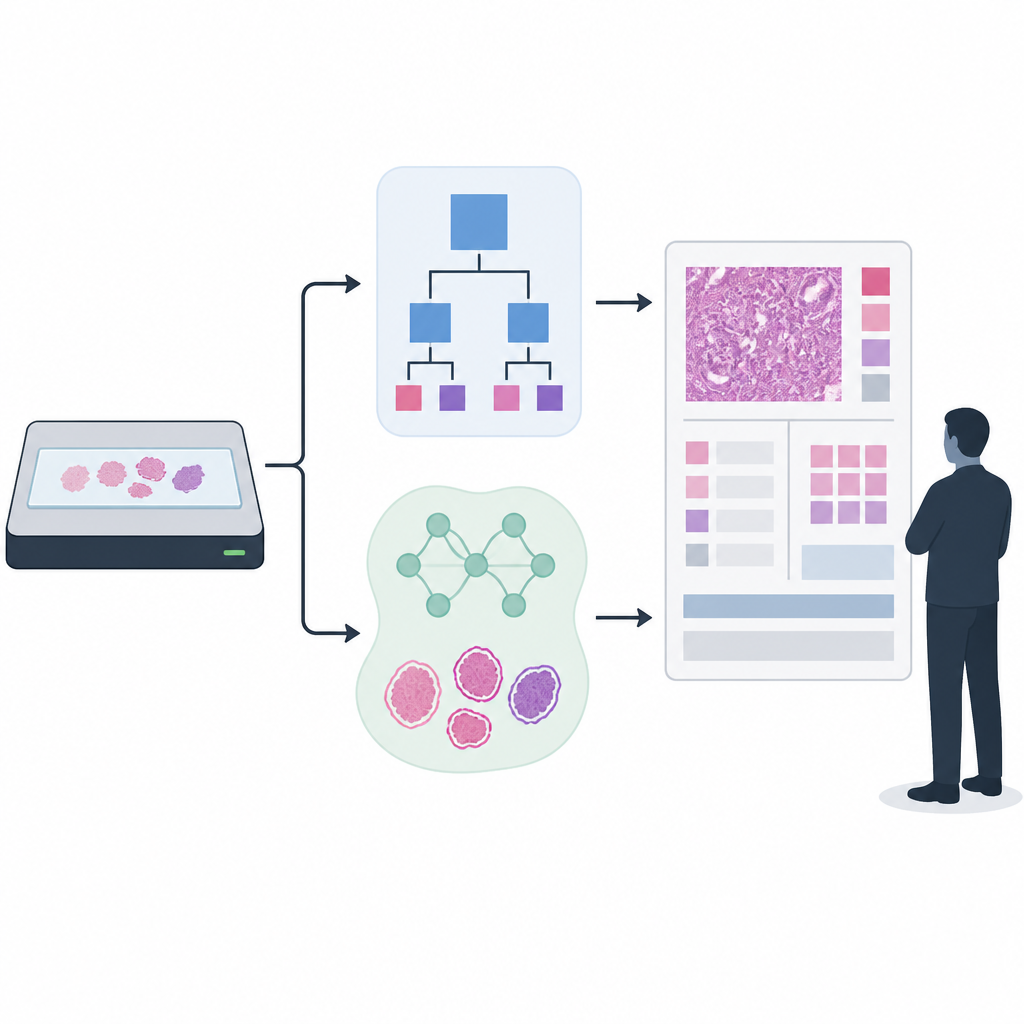

As hospitals increasingly use artificial intelligence to help read prostate biopsies, a quiet but crucial step often goes unnoticed: simply finding where the tissue is on a digital slide. If this first step fails and important pieces of tissue are missed, even the smartest cancer-detecting AI can be led astray. This study asks how much that basic step really matters for overall performance, and whether modern AI can do a safer job than older, rule-based methods at spotting tissue in the first place.

From Glass Slide to Digital Image

In digital pathology, thin slices of prostate tissue are scanned into high‑resolution images. Before any cancer‑grading algorithm can get to work, the system must separate tissue from empty background. Traditionally, this is done with simple rules based on color and brightness, like setting a cut‑off to decide which pixels are likely to be tissue. Newer systems instead train AI models to recognize tissue shapes and patterns, much as they do for more complex tasks. The question is whether this upgrade in the “plumbing” of the pipeline actually improves real‑world cancer grading, or mainly reduces rare but serious failures.
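To make the rule-based idea concrete, here is a minimal sketch of a color-and-brightness cut-off detector. It is not the detector used in the study: the function name `detect_tissue_by_threshold` and both cut-off values are assumptions chosen only for illustration.

```python
import numpy as np

def detect_tissue_by_threshold(rgb, brightness_cutoff=220, saturation_cutoff=0.05):
    """Return a boolean mask that is True where a pixel looks like tissue.

    rgb: uint8 array of shape (height, width, 3).
    Both cut-offs are illustrative assumptions, not values from the study.
    """
    rgb = rgb.astype(np.float64)
    brightness = rgb.mean(axis=2)
    max_channel = rgb.max(axis=2)
    min_channel = rgb.min(axis=2)
    # HSV-style saturation: near 0 for white or grey pixels, larger for stained ones.
    saturation = (max_channel - min_channel) / np.maximum(max_channel, 1.0)
    # Tissue is assumed to be both darker and more colorful than the glass background.
    return (brightness < brightness_cutoff) & (saturation > saturation_cutoff)

# Toy image: white background, one clearly stained pixel, one very pale pixel.
image = np.full((4, 4, 3), 255, dtype=np.uint8)
image[1, 1] = (200, 100, 150)   # strongly stained
image[2, 2] = (235, 225, 232)   # faintly stained
mask = detect_tissue_by_threshold(image)
print(mask[1, 1], mask[2, 2])
```

In this toy example the strongly stained pixel passes both cut-offs, while the faintly stained one is brighter than the brightness cut-off and is treated as background. That is one plausible way a fixed rule can drop pale tissue, and it is the kind of failure a trained segmentation model is meant to avoid.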

Building and Testing Two Detection Approaches

The researchers focused on prostate cancer grading, in which biopsies are assigned Gleason scores and ISUP grades that guide treatment decisions. They compared a long‑used rule‑based tissue detector with a newer AI model built on a UNet++ image segmentation network. To train and evaluate the tissue detector, they used more than 33,000 scanned slides from several hospitals and scanner types, combining automatically generated tissue outlines with smaller sets that had been laboriously checked and refined by experts. For the downstream cancer grading, they applied a state‑of‑the‑art AI that had been trained on over 55,000 slides and then tested across 13 clinical sites using 13 different scanners, mirroring the messy diversity of real clinical practice.

How Well Each Method Sees the Tissue

When they looked solely at how accurately each method marked tissue pixels, both performed well on average. The AI‑based detector captured slightly more true tissue overall, while the classical method was a bit better at avoiding background areas. The crucial difference appeared in rare outlier slides with unusual appearance. On these difficult cases, the rule‑based method sometimes missed large chunks of tissue entirely, while the AI detector generally preserved more of it. Across more than 27,000 evaluation slides, the number of complete failures where no tissue was detected at all fell from 118 with the classical method to 24 with the AI‑based detector, suggesting that AI may offer a safety advantage by reducing catastrophic misses.
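The pixel-level comparison described here can be sketched as follows, assuming each detector outputs a binary mask that is compared with an expert-checked reference mask. The function name `pixel_metrics` and the toy masks are hypothetical; the study's exact metric definitions are not given in this summary.

```python
import numpy as np

def pixel_metrics(predicted, reference):
    """Compare a predicted tissue mask with a reference mask, pixel by pixel."""
    predicted = np.asarray(predicted, dtype=bool)
    reference = np.asarray(reference, dtype=bool)
    true_pos = int(np.sum(predicted & reference))
    true_neg = int(np.sum(~predicted & ~reference))
    false_neg = int(np.sum(~predicted & reference))
    false_pos = int(np.sum(predicted & ~reference))
    # Sensitivity: share of true tissue pixels that were captured.
    sensitivity = true_pos / (true_pos + false_neg) if (true_pos + false_neg) else float("nan")
    # Specificity: share of background pixels correctly left out.
    specificity = true_neg / (true_neg + false_pos) if (true_neg + false_pos) else float("nan")
    # Complete failure: the slide contains tissue but none was detected.
    complete_failure = bool(reference.any() and not predicted.any())
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "complete_failure": complete_failure,
    }

# Toy masks (made up): the detector finds one of two tissue pixels.
reference = [[1, 1], [0, 0]]
predicted = [[1, 0], [0, 0]]
print(pixel_metrics(predicted, reference))
```

Capturing more true tissue corresponds to higher sensitivity, and avoiding background corresponds to higher specificity. The `complete_failure` flag marks the catastrophic case counted in the text, where a slide with tissue yields an empty mask.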

Does Better Tissue Detection Improve Cancer Grading?

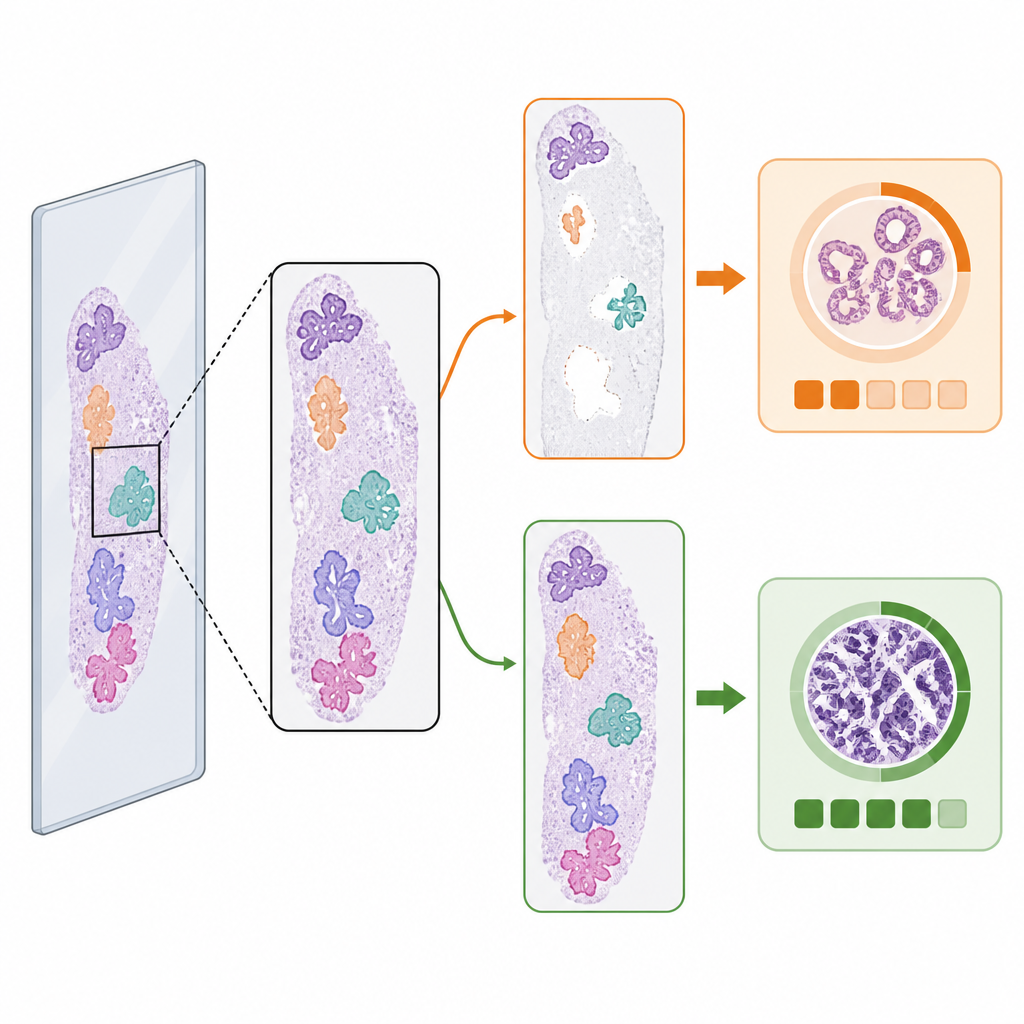

The team then asked whether these differences in tissue maps actually changed the final cancer grades produced by the downstream AI. For fairness, they only analyzed slides where both tissue detection methods found at least some tissue, and compared the agreement between AI‑predicted grades and those assigned by pathologists. On this large set of slides, the overall grading performance was very similar whether the system used rule‑based or AI tissue detection, with overlapping confidence intervals across all clinical cohorts. In other words, for typical slides, the initial detection step did not clearly limit the accuracy of a strong modern grading model, even when an older, simpler detector was used.
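This summary does not name the agreement statistic. A common choice for ordinal grades is quadratically weighted Cohen's kappa, which penalizes large grade disagreements more than small ones. The sketch below assumes that statistic and assumes grades are encoded as integers 0 to 5 (0 for benign, 1 to 5 for ISUP grades); both are illustrative assumptions, not details confirmed by the source.

```python
import numpy as np

def quadratic_weighted_kappa(rater_a, rater_b, n_categories=6):
    """Quadratically weighted Cohen's kappa for integer grades in [0, n_categories)."""
    a = np.asarray(rater_a, dtype=int)
    b = np.asarray(rater_b, dtype=int)
    # Observed joint distribution of the two sets of grades.
    observed = np.zeros((n_categories, n_categories))
    np.add.at(observed, (a, b), 1)
    observed /= observed.sum()
    # Joint distribution expected by chance from the two marginals.
    expected = np.outer(observed.sum(axis=1), observed.sum(axis=0))
    # Disagreement weights grow with the squared distance between grades.
    idx = np.arange(n_categories)
    weights = (idx[:, None] - idx[None, :]) ** 2 / (n_categories - 1) ** 2
    return 1.0 - (weights * observed).sum() / (weights * expected).sum()

# Toy grades (made up): the model is off by one grade on a single slide.
pathologist = [0, 1, 2, 3, 4, 5]
model = [0, 1, 2, 3, 5, 5]
print(quadratic_weighted_kappa(model, pathologist))
```

Running the same calculation once per tissue detector, with confidence intervals, gives the kind of side-by-side comparison described in the text.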

When the First Step Changes the Answer

Despite the similar averages, the choice of tissue detector did change the predicted cancer grade in a small but important fraction of cases. Among malignant slides with per‑slide reference grading, 3.5 percent had different ISUP grade predictions depending on which tissue detector was used. Within this group, the AI detector led to the correct grade where the classical method did not about as often as the reverse occurred. Visual examples showed why: if one detector missed a large piece of tumor‑bearing tissue or misidentified debris as tissue, the grading AI could be pushed toward a lower or higher risk category. These “edge cases” highlight how subtle changes in which image patches reach the grading model can alter its final decision.
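The bookkeeping behind such a comparison can be sketched as below: for each slide, take the grade predicted with each tissue detector plus the reference grade, then count the disagreements. The function name `compare_pipelines` and the toy grades are made up for illustration and do not reproduce the study's data.

```python
def compare_pipelines(grades_rule, grades_ai, reference):
    """Count slides where the two tissue detectors lead to different grades."""
    discordant = only_ai_correct = only_rule_correct = neither_correct = 0
    for rule, ai, ref in zip(grades_rule, grades_ai, reference):
        if rule == ai:
            continue  # same grade either way: the detector choice did not matter
        discordant += 1
        if ai == ref:
            only_ai_correct += 1
        elif rule == ref:
            only_rule_correct += 1
        else:
            neither_correct += 1
    return {
        "discordant_fraction": discordant / len(reference),
        "only_ai_correct": only_ai_correct,
        "only_rule_correct": only_rule_correct,
        "neither_correct": neither_correct,
    }

# Toy ISUP grades for five slides (made up).
rule_based = [1, 2, 3, 4, 2]
ai_based = [1, 2, 2, 5, 3]
reference = [1, 2, 2, 4, 5]
print(compare_pipelines(rule_based, ai_based, reference))
```

Separating "only AI correct" from "only rule-based correct" matters because two pipelines can score the same on average while still disagreeing on individual slides.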

What This Means for Future Cancer AI

For now, the study suggests that in high‑quality, multi‑site prostate biopsy datasets, the exact method used for tissue detection does not strongly change the average accuracy of advanced AI‑based grading. However, AI tissue detection sharply reduced the number of complete failures (24 versus 118 slides with no tissue detected), and the choice of detector changed the predicted grade in a small subset of malignant cases, where missing tissue might be most harmful. The authors argue that tissue detection should be treated as an integral, auditable part of diagnostic AI systems, with clear visualization for pathologists and continued research into robust, efficient models as downstream AIs become even more precise.

Citation: Boman, S.E., Mulliqi, N., Blilie, A. et al. The impact of tissue detection on diagnostic artificial intelligence algorithms in prostate digital pathology. Sci Rep 16, 14968 (2026). https://doi.org/10.1038/s41598-026-52148-9

Keywords: digital pathology, prostate cancer, tissue detection, Gleason grading, diagnostic AI