Clear Sky Science

Federated CT foundation models for multi-center detection of lymph node metastasis in pancreatic cancer

Why this matters for patients

Pancreatic cancer is one of the deadliest cancers, in part because it is often discovered late, after it has already spread to nearby lymph nodes. Knowing before surgery whether this spread has happened is crucial: it guides how aggressive the operation should be and whether patients should receive chemotherapy first. Yet today’s CT scans, even when interpreted by experts, miss many of these hidden metastases. This study explores how a new generation of large medical AI models, trained collaboratively across hospitals without sharing raw patient data, could make these life‑shaping decisions more accurate and more equitable.

A tough cancer and a blind spot in imaging

Most pancreatic cancers are of a type called pancreatic ductal adenocarcinoma, which is notorious for its poor survival. A key sign that the disease is serious is spread to lymph nodes near the pancreas. Radiologists try to spot this spread on CT scans, usually by looking at the size and shape of lymph nodes. Unfortunately, these visual clues are unreliable: many patients with microscopic spread look “normal” on CT, and different experts often disagree. As a result, the extent of the disease may be underestimated, and some patients may not receive the intensive treatment they actually need.

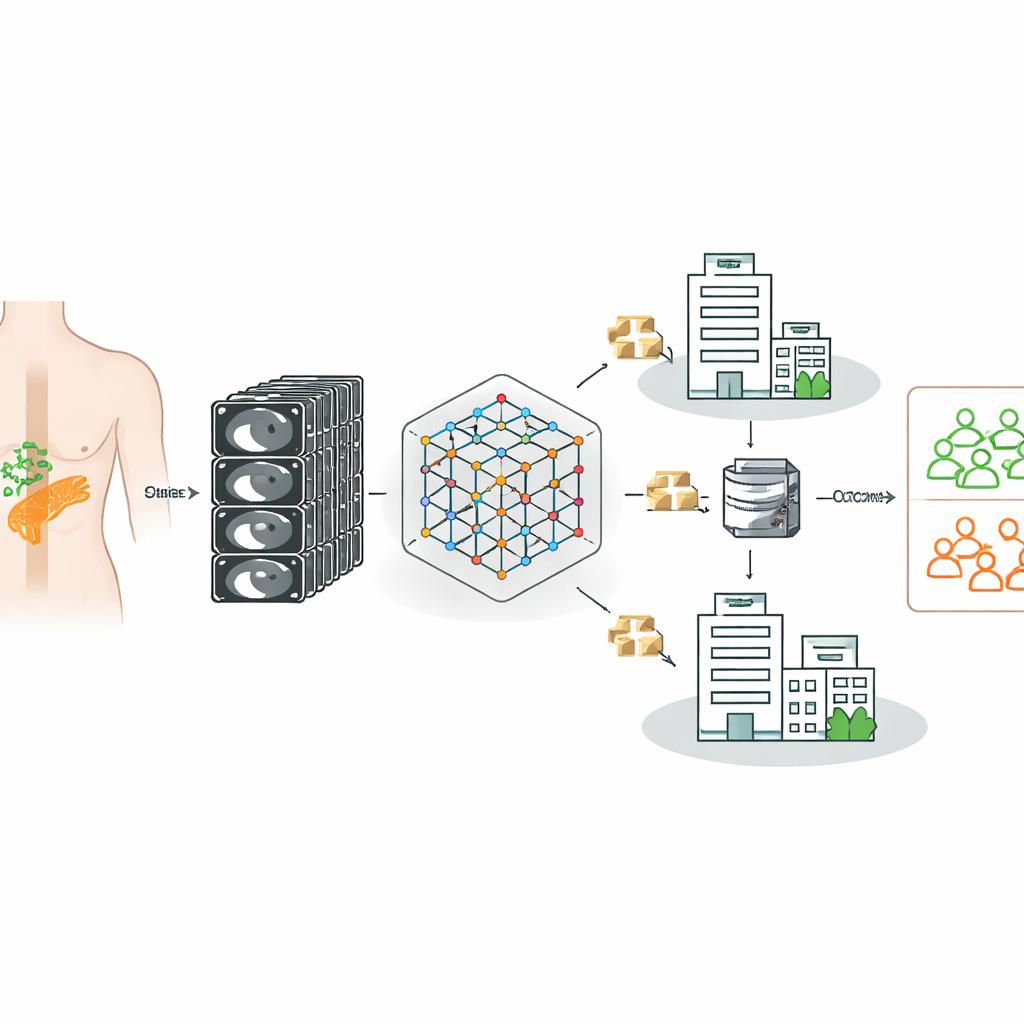

Teaching a CT model to learn from many hospitals

The researchers built on a powerful “foundation model” for CT imaging—an AI system first trained on 148,000 CT scans to recognize general patterns in three‑dimensional anatomy. They then fine‑tuned this model to decide, for each patient with pancreatic cancer, whether lymph nodes were truly metastatic, using confirmed surgical and pathology results as ground truth. Importantly, the data came from three German hospitals with different scanners, imaging protocols, and patient populations, reflecting the messy reality of clinical practice rather than a single, carefully curated dataset.

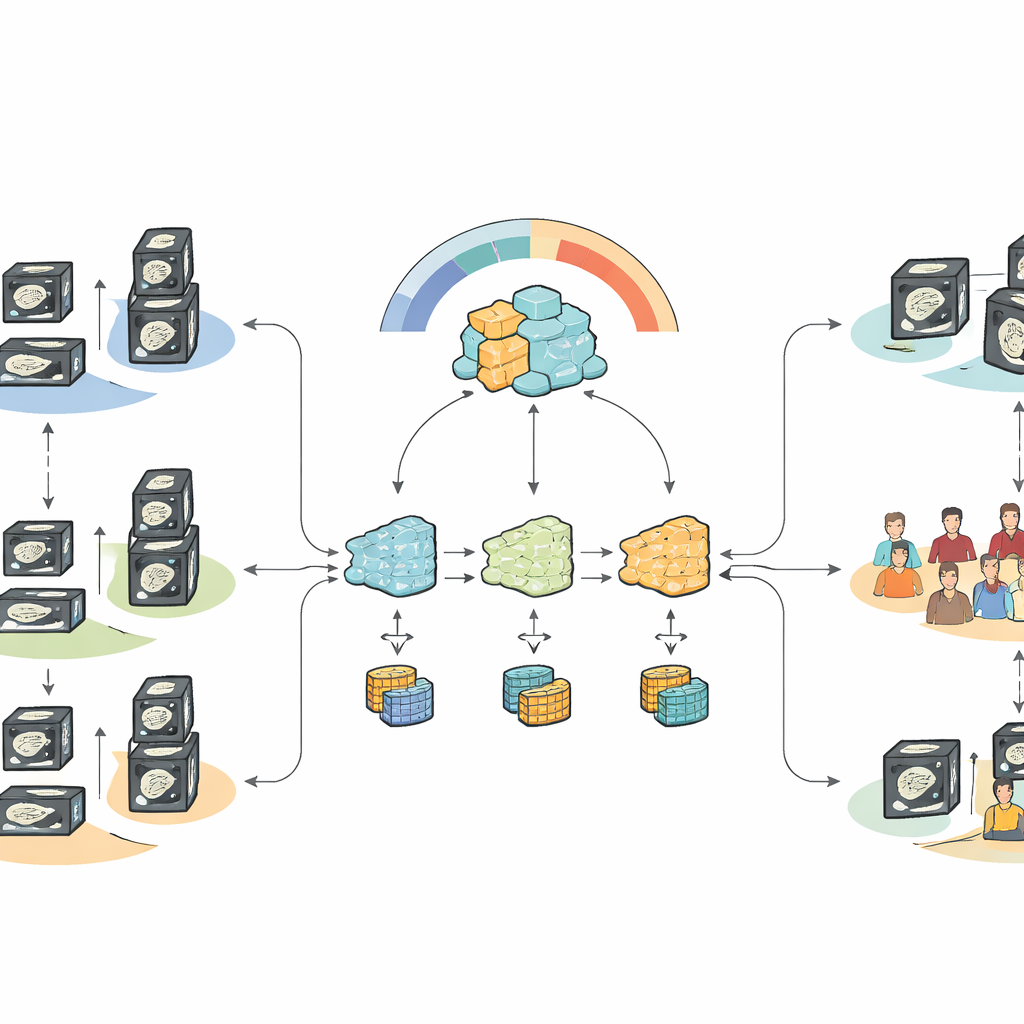

Collaborating without sharing patient data

Because strict privacy rules prevent hospitals from freely pooling patient scans, the team turned to federated learning. In this approach, a common model is sent to each hospital, trained locally on that hospital’s data, and then updated centrally using only the model’s learned parameters, never the images themselves. Standard federated methods, however, assume that all sites look similar. In medicine this is rarely true: differences in machines, contrast timing, and patient mix can pull local models in conflicting directions, degrading performance when their updates are simply averaged.
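The simple averaging step described above can be sketched in a few lines of Python. This is a minimal illustration of standard federated averaging, weighted by how many cases each site holds; the article does not say exactly which standard methods were compared, and the function name, parameter layout, and numbers below are all hypothetical.

```python
def federated_average(site_params, site_sizes):
    """Blend per-hospital parameter lists into one shared list.

    site_params: one list of floats per hospital (hypothetical layout).
    site_sizes:  number of local training cases at each hospital.
    Only parameters are combined here; no images ever leave a site.
    """
    total = sum(site_sizes)
    n_params = len(site_params[0])
    return [
        # Weighted mean of parameter i, with larger sites counting more.
        sum(p[i] * n / total for p, n in zip(site_params, site_sizes))
        for i in range(n_params)
    ]


# Toy example: three hospitals, two parameters each (made-up numbers).
params = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
sizes = [100, 100, 200]
global_params = federated_average(params, sizes)
```

Because the weights depend only on site size, a hospital whose local model has been pulled in an unusual direction still contributes in full, which is the weakness the next section addresses.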

A smarter way to combine what hospitals learn

To tackle this, the authors designed a “heterogeneity‑aware” way of combining updates from each hospital. Their method looks not only at how imbalanced each site’s labels are (how many patients do or do not have metastases) but also at how different each hospital’s learned decision boundary is from the common model. Hospitals whose models drift too far from the shared pattern are down‑weighted when the updates are merged. This strategy stabilizes training while still letting the system learn from each hospital’s unique experience, yielding a global model that better separates patients with and without lymph node spread.
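To make the idea of down‑weighting concrete, here is a rough Python sketch. It is not the authors' formula, which this summary does not give. As stated assumptions, it measures drift as the plain distance between a hospital's parameters and the shared ones (a stand‑in for comparing decision boundaries), scores label balance from the fraction of metastasis‑positive cases, and multiplies the two. The function names and the `tau` setting are hypothetical.

```python
import math


def heterogeneity_weights(local_params, global_params, pos_fractions, tau=1.0):
    """Return one normalized blending weight per hospital.

    ASSUMPTION: illustrative scoring rule, not the published method.
    """
    raw = []
    for params, pos_frac in zip(local_params, pos_fractions):
        # Drift: distance between this site's parameters and the shared model.
        drift = math.dist(params, global_params)
        # Balance: 1.0 for a 50/50 label split, 0.0 if only one class is present.
        balance = 1.0 - abs(2.0 * pos_frac - 1.0)
        # Larger drift shrinks the weight exponentially; tau sets how fast.
        raw.append(balance * math.exp(-drift / tau))
    total = sum(raw)
    if total == 0.0:
        # Fall back to equal weights if every site scored zero.
        return [1.0 / len(raw)] * len(raw)
    return [r / total for r in raw]


def aggregate(local_params, weights):
    """Weighted blend of the hospitals' parameters."""
    n_params = len(local_params[0])
    return [
        sum(w * p[i] for w, p in zip(weights, local_params))
        for i in range(n_params)
    ]


# Toy example: two sites stay close to the shared model, one drifts far away.
shared = [0.0, 0.0]
local = [[0.1, 0.0], [0.0, 0.1], [3.0, 3.0]]
weights = heterogeneity_weights(local, shared, pos_fractions=[0.5, 0.5, 0.5])
new_shared = aggregate(local, weights)
```

In this toy run the third site receives a much smaller weight than the other two, so the blended model stays near the pattern the majority of sites agree on while still taking a small contribution from the outlier.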

What the results show for real‑world care

When all data were pooled centrally, a scenario that privacy regulations would typically block, the fine‑tuned foundation model clearly beat traditional machine‑learning techniques and earlier pancreatic models at distinguishing metastatic from non‑metastatic cases. Under federated, privacy‑preserving conditions, the new heterogeneity‑aware approach recovered most of that performance and outperformed standard federated methods, which tended either to over‑predict or to under‑predict metastasis. The system’s main strength was sensitivity (catching patients who do have lymph node spread), while its specificity was moderate but improved. That trade‑off matches the clinical priority of avoiding missed disease, even at the cost of some false alarms.
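For readers unfamiliar with the two measures, the short Python sketch below shows how sensitivity and specificity are computed from a list of true and predicted labels. The labels are invented for illustration and are not results from the study; the function name is hypothetical.

```python
def sensitivity_specificity(y_true, y_pred):
    """Compute sensitivity and specificity for binary labels.

    1 = lymph node metastasis present, 0 = absent.
    """
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    sensitivity = tp / (tp + fn)  # share of true metastases that were caught
    specificity = tn / (tn + fp)  # share of disease-free cases correctly cleared
    return sensitivity, specificity


# Made-up example: 4 patients with spread, 4 without.
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 1, 0, 0]
sens, spec = sensitivity_specificity(y_true, y_pred)  # 0.75 and 0.5
```

Here three of four true metastases are caught, but two of four disease‑free patients are flagged: the same kind of trade‑off, favoring fewer missed cases over fewer false alarms, that the paragraph above describes.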

What this means going forward

For a lay reader, the key message is that powerful AI can now be trained on sensitive scans scattered across many hospitals without moving the data, and still improve how doctors judge whether pancreatic cancer has spread to lymph nodes. This work shows that starting from a broad CT foundation model and using a smarter way of blending knowledge from diverse hospitals yields more reliable, clinically meaningful predictions than older methods. While the tool is not yet perfect—particularly in avoiding false positives—it represents a promising step toward safer, more consistent decision support for surgeons and oncologists facing one of the most challenging cancers.

Citation: Bhalla, P., Gaviria, D.D., Kupczyk, P. et al. Federated CT foundation models for multi-center detection of lymph node metastasis in pancreatic cancer. Sci Rep 16, 12051 (2026). https://doi.org/10.1038/s41598-026-47631-2

Keywords: pancreatic cancer, lymph node metastasis, CT imaging, medical AI, federated learning