Clear Sky Science · en

The explainability of radiomic-based machine learning models for brain glioma grading on amide proton transfer-weighted images

Why this research matters

Brain tumors called gliomas can range from relatively slow‑growing to highly aggressive. Traditionally, doctors determine how dangerous a glioma is by taking a piece of the tumor during surgery or biopsy and examining it under a microscope. That approach is invasive, carries medical risks, and can miss the most aggressive parts of a very uneven tumor. This study explores whether advanced MRI scans combined with artificial intelligence can grade gliomas non‑invasively. Crucially, it also explains how the computer models make their decisions, so that doctors can trust and interpret the results.

Looking inside the brain without surgery

The researchers focused on a special MRI method called amide proton transfer‑weighted (APTw) imaging, which is sensitive to the chemical environment of brain tissue. Earlier work had shown that APTw images can distinguish between high‑ and low‑grade gliomas, but the reasons for this success were not fully understood. In this study, 102 patients with untreated brain gliomas underwent MRI scans, including APTw images, before their tumors were surgically removed and graded by pathologists. The team then used these scans to train computer models to separate the most aggressive tumors (grade 4) from all others, aiming to mimic the pathologist’s judgment using only imaging data.

Turning pictures into measurable patterns

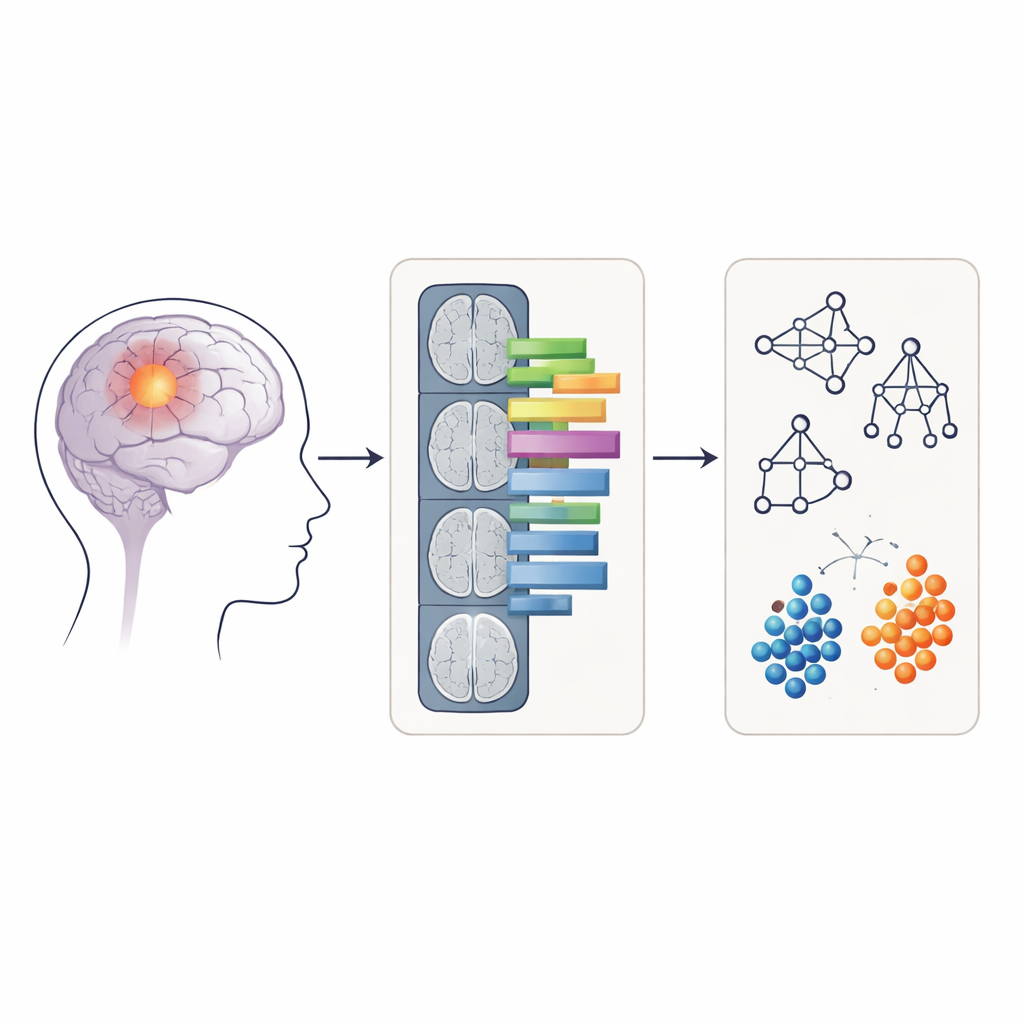

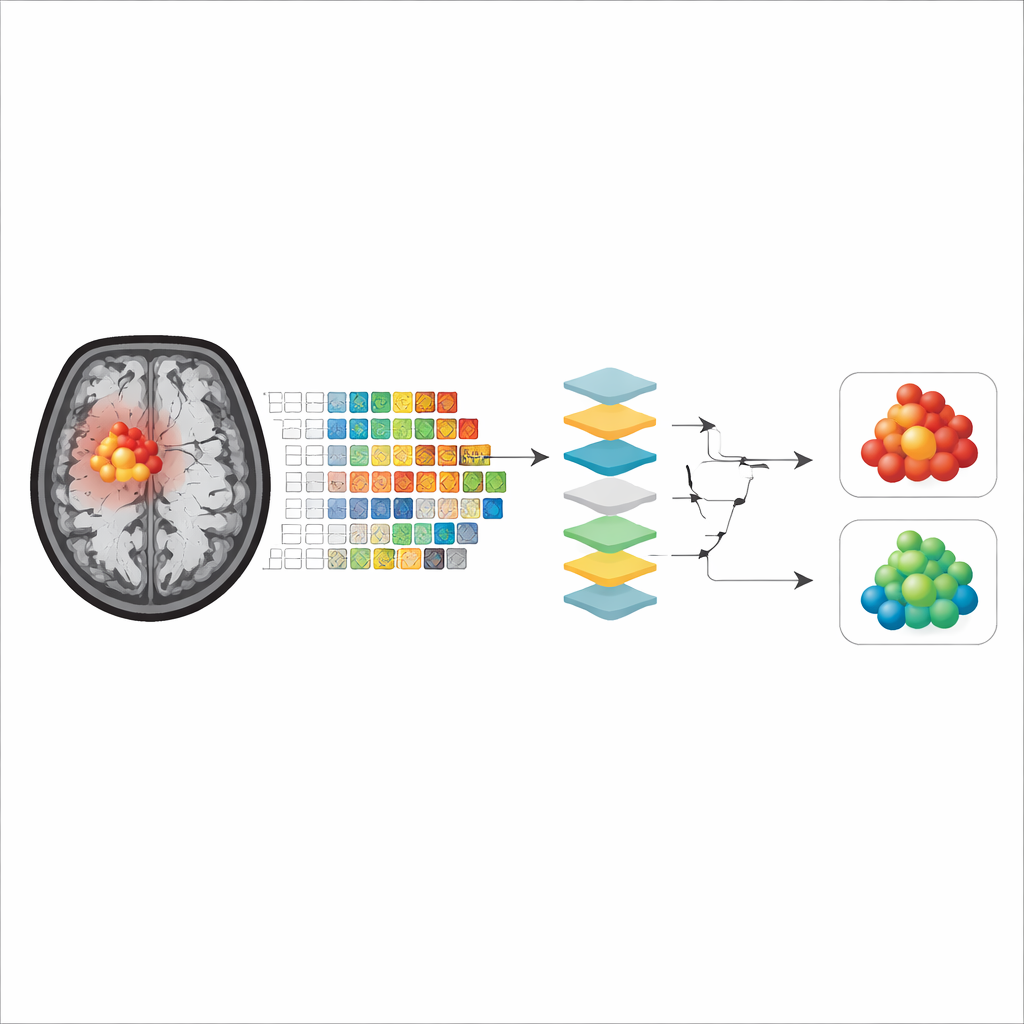

Rather than feeding raw MRI images directly into the computer, the researchers used a technique called radiomics, which converts medical images into hundreds of numerical features. These features describe things like how round or irregular the tumor looks and how evenly or unevenly the signal intensity is distributed within it. For each patient, radiomic features were extracted from two kinds of regions on the APTw images: only the contrast‑enhancing core of the tumor, and a larger area including both the enhancing core and the surrounding swollen tissue (edema). Four common machine‑learning methods—random forest, support vector machine, naïve Bayes, and logistic regression—were trained and tested on these features to see how accurately they could classify tumors as grade 4 or not.
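
To make the two kinds of features concrete, here is a minimal Python sketch of one shape feature (sphericity) and two signal-distribution features (entropy and uniformity of the intensity histogram). The formulas follow commonly used radiomics definitions, but the function names, the bin width, and the sample values are illustrative assumptions; this summary does not list the exact features or software the authors used.

```python
import math
from collections import Counter


def sphericity(volume_mm3, surface_area_mm2):
    """Shape feature: 1.0 for a perfect sphere, lower for irregular shapes."""
    return (math.pi ** (1 / 3)) * (6 * volume_mm3) ** (2 / 3) / surface_area_mm2


def histogram_probabilities(intensities, bin_width):
    """Group intensities into fixed-width bins and return each bin's share."""
    bins = Counter(math.floor(v / bin_width) for v in intensities)
    return [count / len(intensities) for count in bins.values()]


def first_order_entropy(intensities, bin_width=0.5):
    """Higher values mean a more varied (patchier) signal."""
    probs = histogram_probabilities(intensities, bin_width)
    return sum(-p * math.log2(p) for p in probs)


def first_order_uniformity(intensities, bin_width=0.5):
    """1.0 when every voxel falls in the same bin, lower when the signal is mixed."""
    probs = histogram_probabilities(intensities, bin_width)
    return sum(p * p for p in probs)


# Hypothetical signal values (made up for illustration, not study data).
homogeneous = [2.0] * 8
patchy = [0.2, 0.9, 1.6, 2.4, 3.1, 3.8, 4.4, 5.0]

print(sphericity(1000.0, 600.0))        # a 10 mm cube: below 1.0
print(first_order_entropy(homogeneous), first_order_entropy(patchy))
print(first_order_uniformity(homogeneous), first_order_uniformity(patchy))
```

In this toy example the patchy region has higher entropy and lower uniformity than the homogeneous one, which is the kind of contrast the "evenly or unevenly distributed" features are meant to capture.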

Opening the black box of artificial intelligence

Many powerful machine‑learning models act like “black boxes,” making correct predictions without revealing how they arrived at them. To address this, the authors applied three explainability tools. Shapley values estimate how much each feature contributes to a given prediction, permutation importance measures how much performance drops when a feature is scrambled, and a method called anchors finds simple, rule‑like combinations of features that are sufficient to support a model’s decision. Together, these tools allowed the team to identify which aspects of tumor appearance on APTw images the models relied on most when grading gliomas.
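
Two of these ideas can be sketched in a few lines of plain Python. The example below is an illustration under stated assumptions, not the authors' pipeline: the toy linear model, its weights, the three feature names, and the data are all made up, and the Shapley computation replaces "absent" features with a fixed baseline value, which is one common simplification. Anchors are not sketched here.

```python
import random
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley values for one sample (feasible only for few features)."""
    n = len(x)

    def value(coalition):
        # Features outside the coalition take their baseline value.
        mixed = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(mixed)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi


def permutation_importance(predict, X, y, n_repeats=20, seed=0):
    """Mean drop in accuracy when one feature column is shuffled."""
    rng = random.Random(seed)

    def accuracy(rows):
        return sum(predict(r) == t for r, t in zip(rows, y)) / len(y)

    base = accuracy(X)
    drops = []
    for col in range(len(X[0])):
        total = 0.0
        for _ in range(n_repeats):
            shuffled = [row[col] for row in X]
            rng.shuffle(shuffled)
            permuted = [row[:col] + [v] + row[col + 1:]
                        for row, v in zip(X, shuffled)]
            total += base - accuracy(permuted)
        drops.append(total / n_repeats)
    return drops


# Hypothetical model over [shape irregularity, signal entropy, unused feature].
def score(f):
    return 1.5 * f[0] + 0.5 * f[1] - 1.0  # the third feature is ignored


def predict(f):
    return int(score(f) > 0)  # 1 = "grade 4", 0 = "other" in this toy setup


X = [[0.1, 0.8, 4.0], [0.2, 1.0, 1.0], [0.1, 1.2, 3.0],
     [0.9, 2.0, 2.0], [0.8, 2.4, 5.0], [0.9, 1.8, 0.5]]
y = [predict(row) for row in X]

x, baseline = [0.8, 2.0, 5.0], [0.2, 1.0, 3.0]
phi = shapley_values(score, x, baseline)
drops = permutation_importance(predict, X, y)
print(phi, drops)
```

Because the toy model is linear, each Shapley value reduces to the weight times the feature's distance from its baseline (about 0.9, 0.5, and 0 here), and the values sum to the difference between the model's output for the sample and for the baseline. The ignored third feature gets a permutation importance of exactly zero, since scrambling it cannot change any prediction.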

What the models actually look at

Across all models and explainability methods, two main groups of features emerged as most important. The first described tumor shape, especially how close the tumor outline was to a smooth, regular form versus being irregular and uneven. The second captured how patchy or uniform the APTw signal was inside the tumor, reflecting the internal mix of tissue types. Interestingly, the balance between the two groups shifted with the region analyzed, and the direction of the shift depended on the algorithm: for some models, shape features dominated when training used only the contrast‑enhancing core and measures of internal signal unevenness became more influential once the surrounding swollen tissue was included, while for other models the pattern was reversed. Overall, models that focused on the enhancing tumor core showed slightly better accuracy, suggesting that this region better represents the true tumor tissue with fewer confounding effects from necrosis or edema.

Limits and future directions

The study has several practical constraints. The patient group contained relatively few low‑grade tumors, so the models were designed to distinguish grade 4 tumors from all others combined rather than to separate every grade individually. The data also came from a single hospital, and the APTw images had relatively thick slices, which can limit how precisely some shape features are measured. Despite these limitations, the findings were consistent across different explainability methods, supporting the idea that irregular tumor boundaries and uneven internal signal patterns are key imaging clues that the models use to recognize highly aggressive gliomas.

What this means for patients and doctors

In simple terms, this work shows that computer models can use detailed patterns hidden in advanced MRI scans to tell aggressive brain tumors from less dangerous ones, and it clarifies what visual cues in the images drive those decisions. By highlighting the importance of tumor shape and internal patchiness on APTw scans, and by demonstrating that these clues can be transparently explained, the study moves non‑invasive tumor grading closer to clinical reality. If validated in larger, multi‑center studies, such explainable AI tools could help doctors plan treatment more safely and quickly, sometimes reducing the need for risky biopsies and giving patients clearer information based on scans alone.

Citation: Gao, X., Wang, J. The explainability of radiomic-based machine learning models for brain glioma grading on amide proton transfer-weighted images. Sci Rep 16, 14539 (2026). https://doi.org/10.1038/s41598-026-44963-x

Keywords: brain glioma imaging, radiomics, explainable AI, MRI biomarkers, tumor grading