Clear Sky Science

Design of an in-pipe inspection robotic system (IPIRS) with YOLOv8–LSTM integration for real-time in-pipe navigation

Robots Inside the Pipes

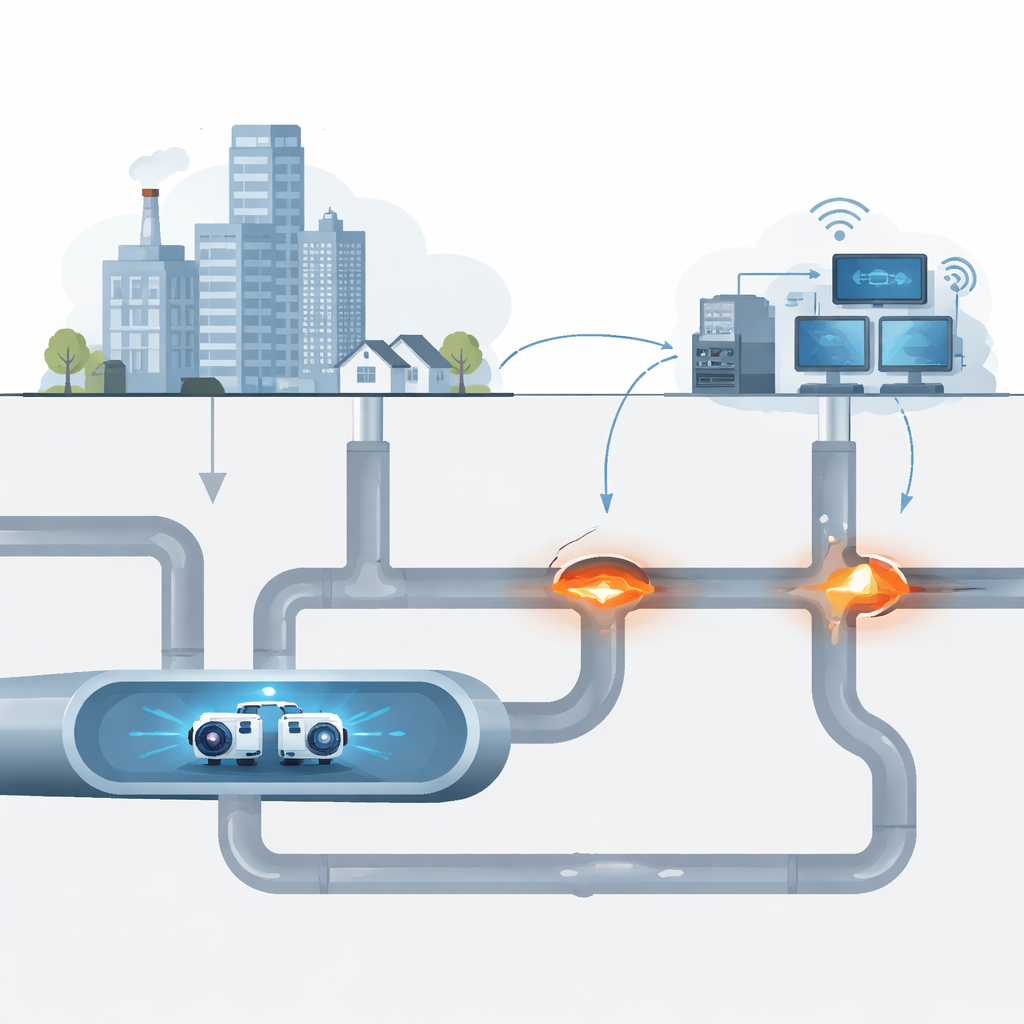

Every day, vast networks of buried pipes quietly deliver the oil, gas, and water that modern life depends on. Many of these pipelines are aging, hard to reach, and dangerous to inspect by hand. This study introduces a new kind of robot—small enough to crawl inside pipes—that uses advanced camera vision and predictive software to guide itself through twists, bends, and junctions while spotting trouble before it becomes a disaster.

Why Looking Inside Pipes Is So Hard

Hidden pipelines run for hundreds of kilometers under streets, fields, and factories. Over time they can corrode, crack, or clog, leading to leaks, bursts, or blockages. Traditional checks rely on people lowering cameras or devices into manholes, a slow and risky job that still misses many faults. Earlier pipe robots improved safety but struggled with tight curves and changing pipe diameters. Their wheels could slip, they jammed at junctions, and their simple cameras could not interpret what they were seeing well enough to guide themselves or warn operators early about emerging problems.

A Flexible Robot Built for Tight Spaces

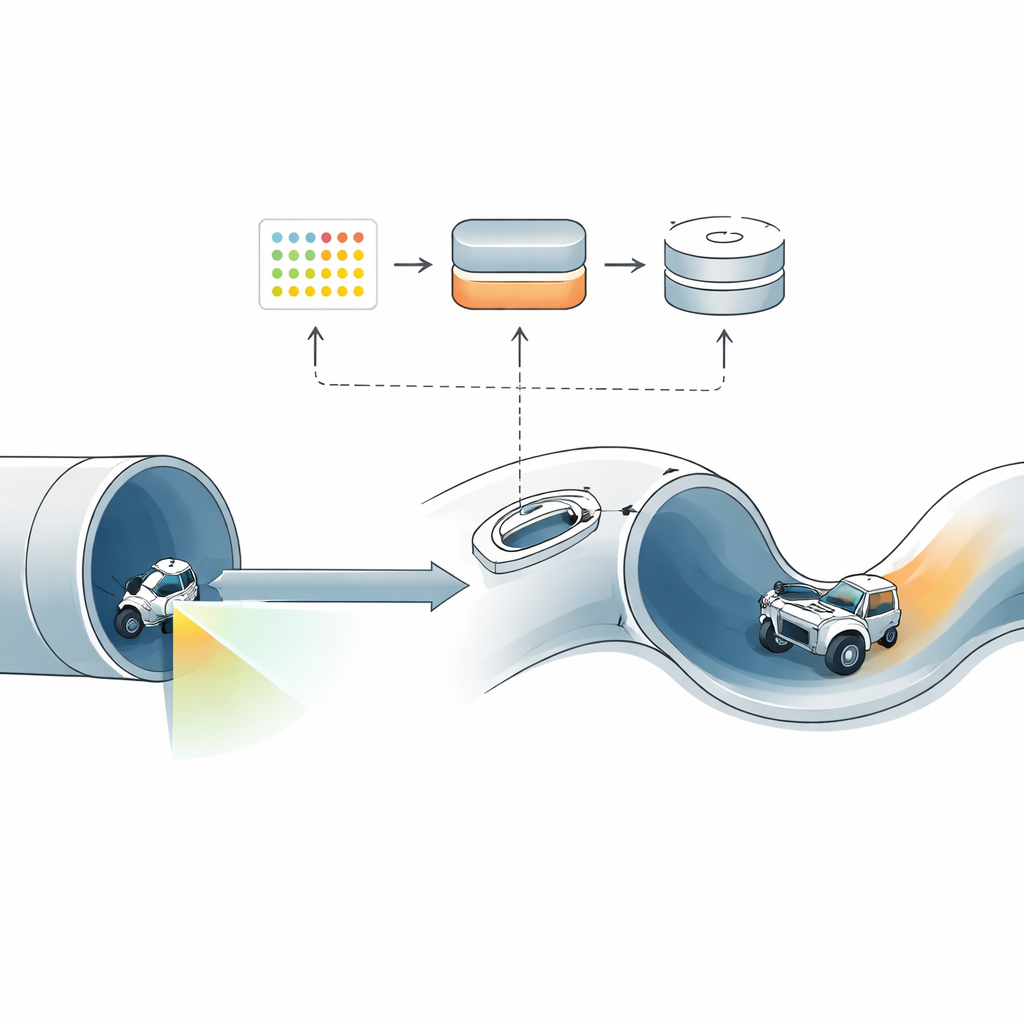

The researchers designed an in‑pipe inspection robotic system, or IPIRS, that is purpose‑built for narrow pipes 10 to 15 centimeters in diameter. The robot body is made of four short modules connected by spring‑loaded joints. These joints press the wheels gently against the pipe walls, helping the robot grip as the pipe bends or its diameter changes. Front and rear modules contain small motors that steer and adjust the robot’s posture, allowing it to handle sharp corners and T‑junctions. Onboard, a depth‑sensing camera looks ahead while a motion sensor tracks how the robot tilts, turns, and accelerates, providing the raw data for its “digital brain.”

Teaching the Robot to See and Think Ahead

To turn raw camera images into useful understanding, the team used a modern object-detection method known as YOLOv8. Trained on thousands of labeled images from realistic computer simulations, this software can spot key pipe features such as ends, exits, and different junction shapes in real time as the robot moves. In tests, it identified these structures with very high accuracy, scoring about 98% on a standard measure of detection quality and achieving an F1 score of 0.95, a single number that balances false alarms against missed detections. This means that most of the time, the robot knows what kind of pipe feature lies ahead and where it is within the image.
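For readers who want to see how an F1 score is put together, here is a minimal Python sketch. It computes precision (how many reported detections were right) and recall (how many real features were found) and combines them with a harmonic mean. The counts are made up for illustration and are not taken from the study; the function name `f1_score` is likewise just a label chosen here.

```python
def f1_score(true_positives: int, false_positives: int, false_negatives: int) -> float:
    """Harmonic mean of precision and recall for one set of detections."""
    predicted = true_positives + false_positives   # everything the detector reported
    actual = true_positives + false_negatives      # everything that was really there
    if predicted == 0 or actual == 0:
        return 0.0
    precision = true_positives / predicted
    recall = true_positives / actual
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical counts, chosen only so the result lands near 0.95.
score = f1_score(true_positives=95, false_positives=5, false_negatives=5)
print(round(score, 2))
```

Because the harmonic mean is pulled toward the smaller of the two values, a detector cannot reach a high F1 score by doing well on precision alone or recall alone.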

Predicting the Effort Needed to Stay Stable

Seeing alone is not enough: when the robot enters a curve or a branching section, the forces on its joints and wheels change quickly. If the motors react too late, the robot can wobble, slip, or stall. To address this, the researchers added a second learning model, a long short-term memory (LSTM) network, that looks at short histories of motion‑sensor readings and motor efforts to predict how much motor effort will be needed in the next instant. This predicted effort is then blended with a standard control loop that positions the joints, allowing the robot to adjust torque before trouble starts. In simulations, the prediction errors were extremely small, indicating that the model could anticipate how hard the motors should push to keep movement smooth.
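One common way to blend a predicted effort with a feedback loop is to add it as a feedforward term. The Python sketch below illustrates that idea under stated assumptions: the article does not say which control law, gains, or blending weight the authors used, so the proportional-derivative (PD) controller, the `weight` parameter, and the torque `limit` are all hypothetical. The predicted effort is passed in as a plain number standing in for the LSTM output.

```python
from dataclasses import dataclass


@dataclass
class PDController:
    """Simple proportional-derivative joint position loop (an assumption)."""
    kp: float
    kd: float
    prev_error: float = 0.0

    def update(self, target: float, measured: float, dt: float) -> float:
        error = target - measured
        derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.kd * derivative


def blended_torque(feedback: float, predicted: float, weight: float, limit: float) -> float:
    """Add a weighted predicted effort to the feedback torque, then saturate."""
    command = feedback + weight * predicted
    return max(-limit, min(limit, command))


# Hypothetical values for a single control step.
controller = PDController(kp=2.0, kd=0.1)
feedback = controller.update(target=0.5, measured=0.3, dt=0.01)
command = blended_torque(feedback, predicted=0.8, weight=0.5, limit=1.5)
print(command)
```

The feedback term only reacts once an error has appeared, while the predicted term can raise the torque just before the robot enters a bend. The final clamp keeps the combined command inside the motor's allowed range.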

Putting the System to the Test in Virtual Pipes

Before building hardware, the team created a detailed digital twin of a pipe network using the Robot Operating System (ROS) and physics‑based simulators. The virtual environment included straight sections, bends, vertical climbs, and several types of junctions. Here they could safely collect camera and sensor data, train their models, and then run full‑system trials. The combined perception–prediction setup allowed the robot to recognize upcoming structures, plan how to pass them, and adjust its posture and motor effort in real time, all while respecting limits on wheel speed and torque. Statistical checks showed that both the vision and prediction components behaved reliably across many test runs, despite some predictable confusion between the most visually similar junction types.
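The recognize-then-plan step described above can be pictured as a small lookup from the most confident detection to a maneuver, followed by a speed limit check. The Python sketch below is illustrative only: the class labels, maneuver names, confidence threshold, and fallback behavior are hypothetical and are not taken from the study.

```python
from typing import List, NamedTuple


class Detection(NamedTuple):
    label: str         # hypothetical class name from the vision model
    confidence: float  # detector score between 0 and 1


# Hypothetical mapping from detected pipe feature to a maneuver.
MANEUVERS = {
    "straight": "cruise",
    "elbow": "slow_and_steer",
    "t_junction": "slow_and_select_branch",
    "pipe_end": "stop",
}


def choose_maneuver(detections: List[Detection], min_confidence: float = 0.5) -> str:
    """Pick a maneuver for the most confident detection above the threshold."""
    confident = [d for d in detections if d.confidence >= min_confidence]
    if not confident:
        return "cruise"  # assumption: keep going when nothing is clearly seen
    best = max(confident, key=lambda d: d.confidence)
    return MANEUVERS.get(best.label, "stop")  # unknown labels stop the robot


def limit_wheel_speed(requested: float, max_speed: float) -> float:
    """Clamp a requested wheel speed to the allowed range."""
    return max(-max_speed, min(max_speed, requested))


frame = [Detection("elbow", 0.9), Detection("t_junction", 0.6)]
print(choose_maneuver(frame), limit_wheel_speed(2.0, max_speed=0.5))
```

Stopping on an unrecognized label is a deliberately cautious choice for this sketch. A real system would likely weigh detections over several frames before committing to a maneuver.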

What This Means for Safer Pipelines

The study shows that pairing a flexible mechanical design with smart vision and predictive control can make in‑pipe robots far more capable than earlier designs. In simulation, IPIRS navigated complex pipe layouts while accurately recognizing structural features and forecasting how hard its motors should work, a combination that improves stability and sets the stage for true autonomy. The work so far is virtual, and real pipes will add extra challenges such as rust, water, and debris. Even so, the results suggest a clear path toward compact inspection robots that not only see problems inside buried pipelines but also think ahead to avoid getting stuck, helping to keep critical infrastructure safer.

Citation: Elkholy, H., Meligy, R., Bassiuny, A.M. et al. Design of an in-pipe inspection robotic system (IPIRS) with YOLOv8–LSTM integration for real-time in-pipe navigation. Sci Rep 16, 9658 (2026). https://doi.org/10.1038/s41598-026-42181-z

Keywords: pipeline inspection, in-pipe robot, autonomous navigation, computer vision, predictive control