Clear Sky Science

Evolutionary reinforcement learning framework for energy-efficient fault resilience and topological stability in WSNs

Why smart sensor networks matter

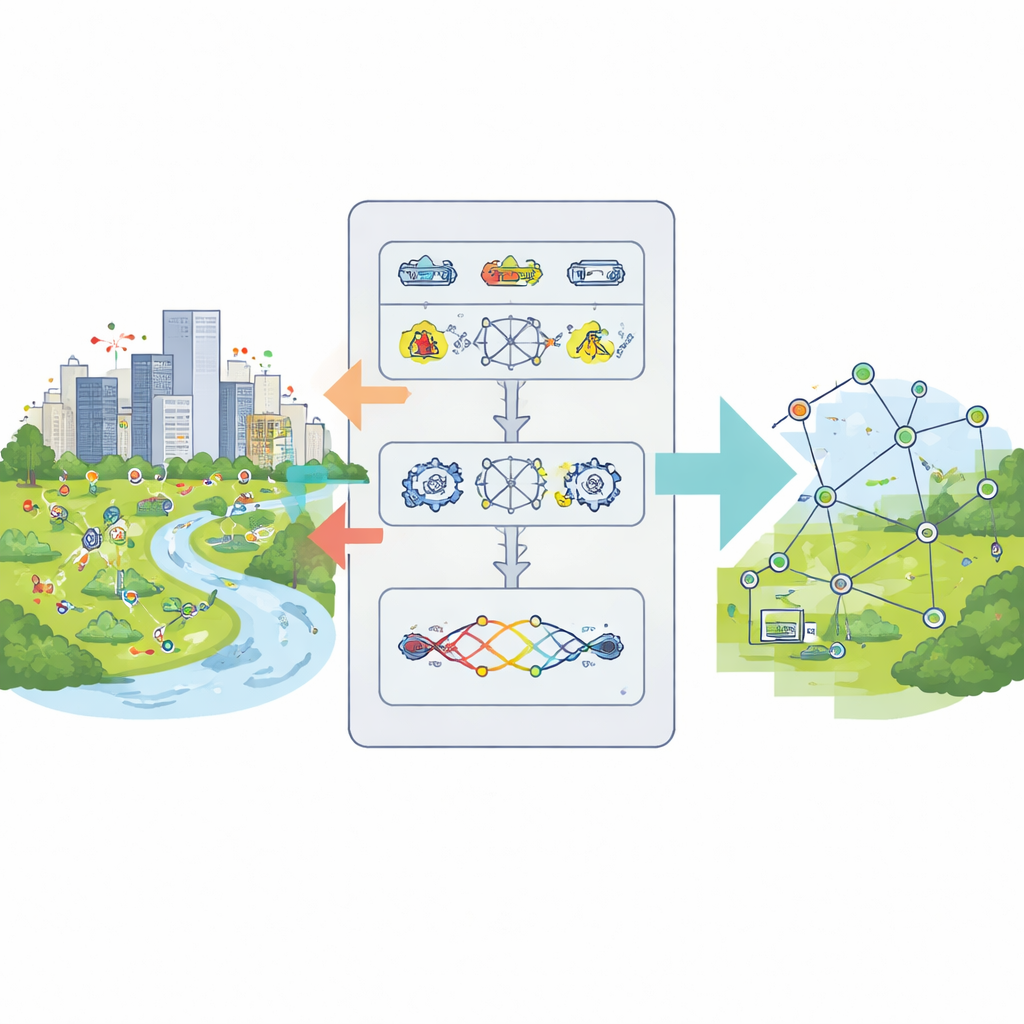

From precision farming to disaster warning systems, wireless sensor networks quietly watch over our world. Tiny battery-powered devices scattered across cities, factories, forests, and hospitals collect data and send it back for analysis. But because these sensors are cheap, remote, and hard to maintain, they fail often and run out of power quickly. This paper explores a new way to keep such networks running longer, more reliably, and with fewer human interventions, using an intelligent learning framework called EvoGenRL.

The challenge of tired and failing sensors

Wireless sensor networks must juggle several needs at once. Each node has very limited battery energy and computing power. Weather, physical damage, or interference can cause nodes or links to fail, breaking communication paths. Traffic loads and surrounding conditions also shift constantly, and these shifts become harder to manage as networks grow larger and more complex. Traditional methods usually tackle only one aspect, such as saving energy or improving routing, and are often designed for fixed, predictable situations. As a result, when faults pile up or conditions shift, these networks can suffer lost data, longer delays, and shortened lifetimes.

A learning brain for sensor networks

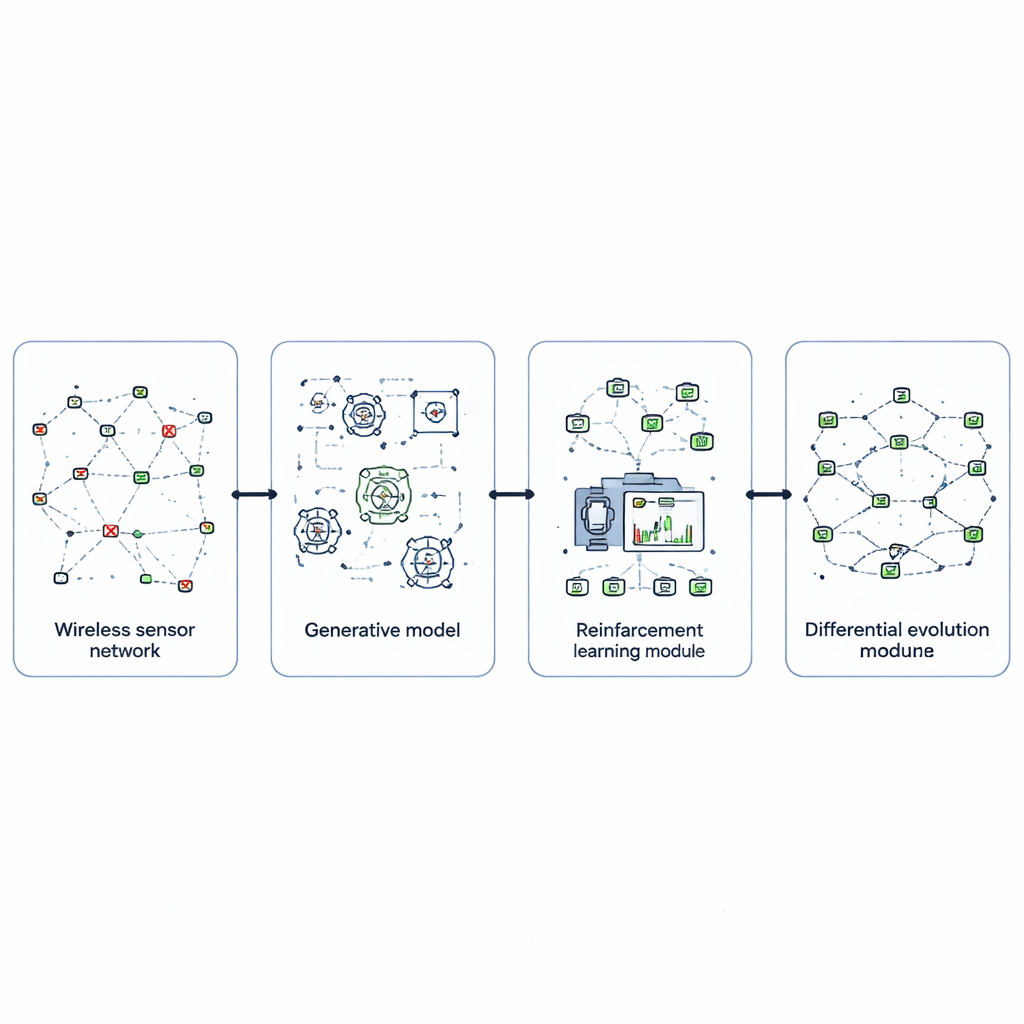

To address this, the authors design EvoGenRL, a hybrid learning framework whose controller learns from experience. At its core is reinforcement learning, a trial‑and‑error method in which an intelligent agent observes the network’s condition (such as battery levels, delivery success, and recent faults) and chooses actions like changing routes, letting some nodes sleep, or retransmitting data. Actions that save energy, deliver more packets, and recover faster from faults earn higher rewards, nudging the agent toward better long‑term behavior. This replaces fixed control rules with an adaptive policy that improves as it encounters new situations.
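To make the reward idea concrete, here is a minimal Python sketch. The summary does not say which reinforcement learning algorithm EvoGenRL uses, so this swaps in plain tabular Q-learning as a stand-in; the three action names, the reward weights, and the `reward` and `QAgent` helpers are hypothetical illustrations, not the authors' code.

```python
import random
from collections import defaultdict

# Hypothetical discrete action set, mirroring the examples in the text.
ACTIONS = ("reroute", "sleep", "retransmit")


def reward(energy_used: float, delivered_ratio: float, recovered: bool,
           w_energy: float = 1.0, w_delivery: float = 1.0,
           w_recovery: float = 0.5) -> float:
    """Hypothetical weighted reward: penalise energy, reward delivery and recovery."""
    bonus = w_recovery if recovered else 0.0
    return -w_energy * energy_used + w_delivery * delivered_ratio + bonus


class QAgent:
    """Tabular Q-learning agent with epsilon-greedy exploration (a stand-in)."""

    def __init__(self, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
        self.alpha = alpha      # learning rate
        self.gamma = gamma      # discount factor for future rewards
        self.epsilon = epsilon  # exploration probability
        self.q = defaultdict(float)  # (state, action) -> estimated return
        self.rng = random.Random(seed)

    def act(self, state):
        # Explore with probability epsilon, otherwise pick the best-known action.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(ACTIONS)
        return max(ACTIONS, key=lambda a: self.q[(state, a)])

    def update(self, state, action, r, next_state):
        # Q-learning target: r + gamma * max over a' of Q(next_state, a').
        best_next = max(self.q[(next_state, a)] for a in ACTIONS)
        td_error = r + self.gamma * best_next - self.q[(state, action)]
        self.q[(state, action)] += self.alpha * td_error


agent = QAgent(seed=42)
state = ("battery_low", "link_fault")  # hypothetical discretised observation
action = agent.act(state)
agent.update(state, action, reward(0.3, 0.9, recovered=True),
             ("battery_low", "link_ok"))
```

A controller would call `act` on the current network state, apply the chosen action in the simulator, score the outcome with `reward`, and feed the result back through `update`. The learning rate `alpha`, discount `gamma`, and exploration rate `epsilon` are exactly the kind of knobs the evolutionary layer is meant to tune.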

Imagining problems before they happen

A key difficulty with any learning system is training it on enough realistic situations, especially rare but damaging failures. EvoGenRL tackles this using generative adversarial networks (GANs), a class of models that learn to generate new data resembling real examples. Here, a generator network fabricates plausible patterns of sensor faults, such as clusters of failing nodes or bursts of interference, while a discriminator network judges whether these patterns resemble genuine recorded events. As the two networks compete, the generator learns to produce a rich variety of believable fault scenarios. These synthetic scenarios are mixed with real data and fed to the reinforcement learning agent, so it can practice coping with many kinds of trouble before the network faces them in the field.
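The mixing step can be sketched as follows. Training a real GAN is beyond a short example, so `toy_generator` below is a hypothetical stand-in that simply emits a random cluster of adjacent failing nodes; in EvoGenRL that role is played by the trained generator network. The batch-building helper `mixed_batch` and its `synthetic_frac` parameter are likewise assumptions, showing one simple way to blend synthetic and recorded fault scenarios before they reach the agent.

```python
import random
from typing import Callable, List, Sequence

# A fault scenario here is simply the set of node IDs that fail together.
Scenario = frozenset


def toy_generator(rng: random.Random, n_nodes: int = 50,
                  max_cluster: int = 5) -> Scenario:
    """Hypothetical stand-in for a trained GAN generator (NOT a GAN):
    returns a cluster of adjacent failing node IDs in [0, n_nodes)."""
    size = rng.randint(1, max_cluster)
    start = rng.randrange(n_nodes - size + 1)
    return frozenset(range(start, start + size))


def mixed_batch(real: Sequence[Scenario],
                generate: Callable[[random.Random], Scenario],
                batch_size: int, synthetic_frac: float,
                rng: random.Random) -> List[Scenario]:
    """Build a training batch with a fixed share of synthetic scenarios."""
    n_syn = round(batch_size * synthetic_frac)
    batch = [generate(rng) for _ in range(n_syn)]               # synthetic faults
    batch += [rng.choice(real) for _ in range(batch_size - n_syn)]  # recorded faults
    rng.shuffle(batch)  # avoid ordering bias between the two sources
    return batch


recorded = [frozenset({3}), frozenset({10, 11})]  # toy "real" scenarios
batch = mixed_batch(recorded, toy_generator, batch_size=8,
                    synthetic_frac=0.25, rng=random.Random(7))
```

Raising `synthetic_frac` exposes the agent to more rare, generated failures, while keeping some real scenarios in every batch anchors training to conditions actually observed in the field.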

Fine-tuning behavior through evolution

Even a clever learning agent depends heavily on its internal settings, or hyperparameters, such as how quickly it learns, how much it values future rewards, and how often it explores new actions. Instead of picking these knobs by hand, the authors use an evolutionary search method called differential evolution. Each candidate combination of settings is treated as an individual in a population, and the candidates “compete” based on how well the resulting agent controls the network in simulation. By repeatedly mutating, combining, and selecting the best candidates, the method converges toward hyperparameters that make learning faster, more stable, and better suited to changing network conditions. This evolutionary layer wraps around the learning agent, steadily sharpening its performance.
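The evolutionary layer can be sketched with the classic DE/rand/1/bin variant of differential evolution. The paper's exact variant, population size, and mutation settings are not given in this summary, so `F`, `CR`, and the bounds below are assumptions. Because scoring a real candidate would mean training and simulating an agent, `surrogate_loss` is a hypothetical placeholder that simply prefers a learning rate near 0.01, a discount near 0.95, and an exploration rate near 0.1.

```python
import random
from typing import Callable, List, Sequence, Tuple


def differential_evolution(fitness: Callable[[List[float]], float],
                           bounds: Sequence[Tuple[float, float]],
                           pop_size: int = 15, F: float = 0.8, CR: float = 0.9,
                           generations: int = 100,
                           seed: int = 0) -> Tuple[List[float], float]:
    """Minimise `fitness` with DE/rand/1/bin; returns (best vector, best score)."""
    rng = random.Random(seed)
    dim = len(bounds)
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    scores = [fitness(x) for x in pop]
    for _ in range(generations):
        for i in range(pop_size):
            # Mutation: three distinct individuals, all different from i.
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            j_rand = rng.randrange(dim)  # guarantees at least one mutated gene
            trial = []
            for d, (lo, hi) in enumerate(bounds):
                # Binomial crossover between the mutant and the current individual.
                if rng.random() < CR or d == j_rand:
                    v = pop[a][d] + F * (pop[b][d] - pop[c][d])
                    trial.append(min(max(v, lo), hi))  # clip to the search bounds
                else:
                    trial.append(pop[i][d])
            # Selection: the trial replaces i only if it is at least as good.
            s = fitness(trial)
            if s <= scores[i]:
                pop[i], scores[i] = trial, s
    best_i = min(range(pop_size), key=scores.__getitem__)
    return pop[best_i], scores[best_i]


def surrogate_loss(h: List[float]) -> float:
    """Hypothetical placeholder for 'how badly does an agent with h perform'."""
    lr, gamma, eps = h
    return (lr - 0.01) ** 2 + (gamma - 0.95) ** 2 + (eps - 0.1) ** 2


bounds = [(1e-4, 0.1), (0.8, 0.999), (0.01, 0.3)]  # learning rate, discount, exploration
best, score = differential_evolution(surrogate_loss, bounds, generations=200, seed=3)
```

In a real pipeline, `surrogate_loss` would be replaced by something like the negative reward an agent earns in simulation with those hyperparameters, which is far more expensive and noisier to evaluate. That cost is one reason the approach needs significant computing power during training.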

Putting the framework to the test

The researchers evaluate EvoGenRL using a publicly available dataset of wireless sensor activity and detailed network simulations. They compare it against several established routing and optimization schemes drawn from recent literature. Across repeated runs and varying network sizes and fault rates, the new framework consistently uses less energy, keeps more nodes alive for longer, and maintains more stable connections. In the reported results, EvoGenRL cuts energy consumption to about 2.2 joules per node, stretches network lifetime to 1700 cycles, and raises the share of successfully delivered data packets to 99.7 percent. It also reduces end-to-end delay, the time data takes to cross the network, to a few milliseconds and increases throughput, so the network stays responsive even as it conserves power.
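For readers who want to reproduce such comparisons, the headline metrics reduce to simple arithmetic over a simulation log. The sketch below is illustrative only: the `RunLog` structure is hypothetical, and because the summary does not define how lifetime is measured, it assumes the common first-node-dies convention (rounds until any node exhausts its battery).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RunLog:
    """Hypothetical per-run simulation log."""
    packets_sent: int
    packets_delivered: int
    energy_per_node: List[float]  # joules consumed by each node over the run
    death_round: List[int]        # round in which each node died; -1 if it survived


def packet_delivery_ratio(log: RunLog) -> float:
    """Delivered packets divided by sent packets (0.0 if nothing was sent)."""
    return log.packets_delivered / log.packets_sent if log.packets_sent else 0.0


def mean_energy_per_node(log: RunLog) -> float:
    """Average energy consumed per node, in joules."""
    return sum(log.energy_per_node) / len(log.energy_per_node)


def lifetime_first_node_dies(log: RunLog, total_rounds: int) -> int:
    """Assumed convention: rounds until the first node dies,
    or total_rounds if every node survived the whole run."""
    deaths = [r for r in log.death_round if r >= 0]
    return min(deaths) if deaths else total_rounds


# Toy numbers for illustration only, not results from the paper.
example = RunLog(packets_sent=200, packets_delivered=190,
                 energy_per_node=[2.0, 2.5, 1.5], death_round=[-1, 120, 80])
print(packet_delivery_ratio(example))          # → 0.95
print(mean_energy_per_node(example))           # → 2.0
print(lifetime_first_node_dies(example, 500))  # → 80
```

Other lifetime definitions (for example, rounds until half the nodes die) would change only `lifetime_first_node_dies`, which is why it is worth checking which convention a paper uses before comparing numbers across studies.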

What this means for everyday technology

In simple terms, EvoGenRL teaches a sensor network to look after itself. By simulating many types of failures, learning which responses work best, and continually fine‑tuning its own behavior, the system can keep batteries alive longer and keep data flowing despite faults and changing conditions. This makes it attractive for mission‑critical uses, such as medical monitoring, industrial control, and environmental surveillance, where maintenance visits are costly or dangerous and downtime is unacceptable. While the approach still demands significant computing power during training, it offers a promising blueprint for future self‑managing networks that are smarter, more robust, and kinder to their limited energy budgets.

Citation: Lakshmi, S., Aswath, S., Swaminathan, A. et al. Evolutionary reinforcement learning framework for energy-efficient fault resilience and topological stability in WSNs. Sci Rep 16, 11769 (2026). https://doi.org/10.1038/s41598-026-38518-3

Keywords: wireless sensor networks, energy-efficient networking, fault-tolerant systems, reinforcement learning, generative models