Clear Sky Science · en

Explainable drug side effect prediction in central neural system via biologically informed graph neural network

Why hidden drug risks matter

Many medicines that help our brains can also quietly harm them. Dizziness, confusion, seizures, or depression may appear only after a drug has been widely used. Traditional lab and clinical tests are too slow and expensive to catch every possible problem before approval, especially for the central nervous system, where side effects can be subtle yet life‑changing. This study introduces a new computer model that reads across vast biological datasets to flag likely brain‑related side effects early, and to show the chain of molecular events that might cause them.

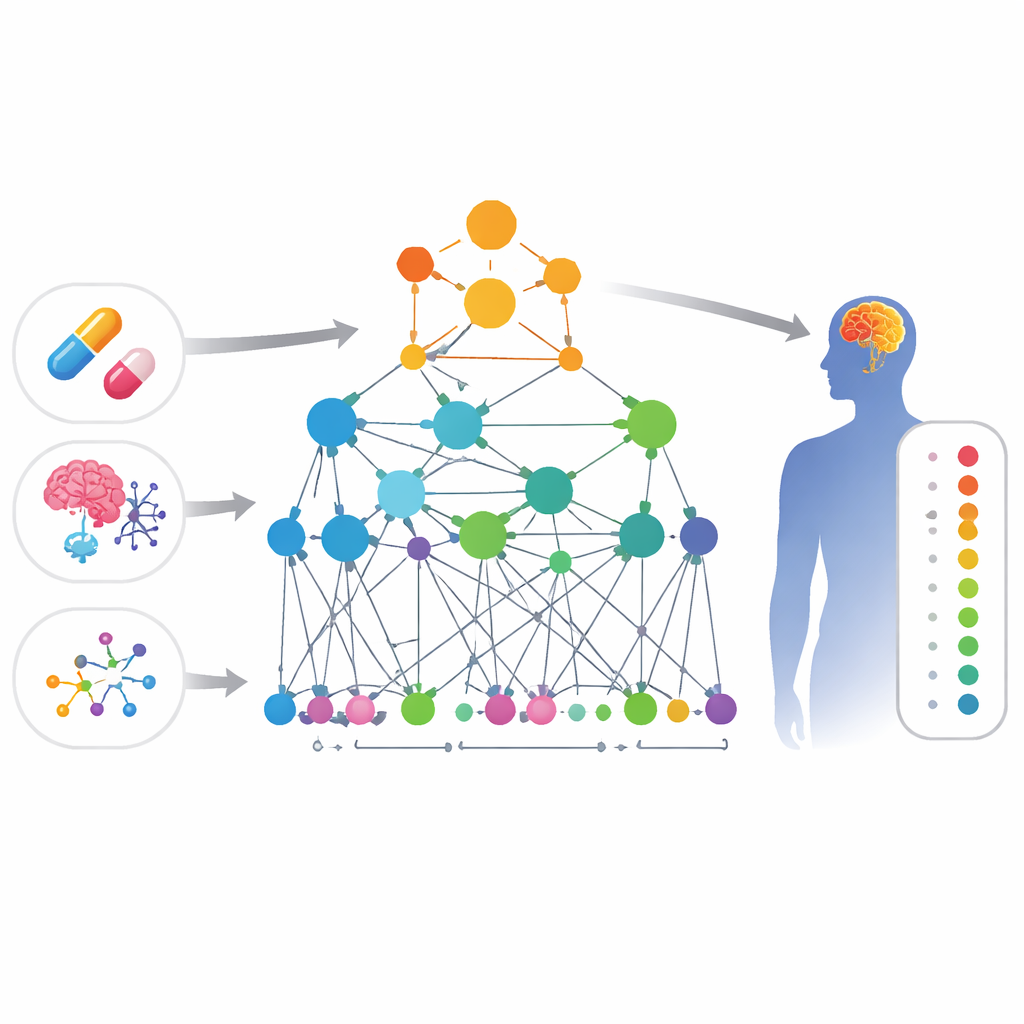

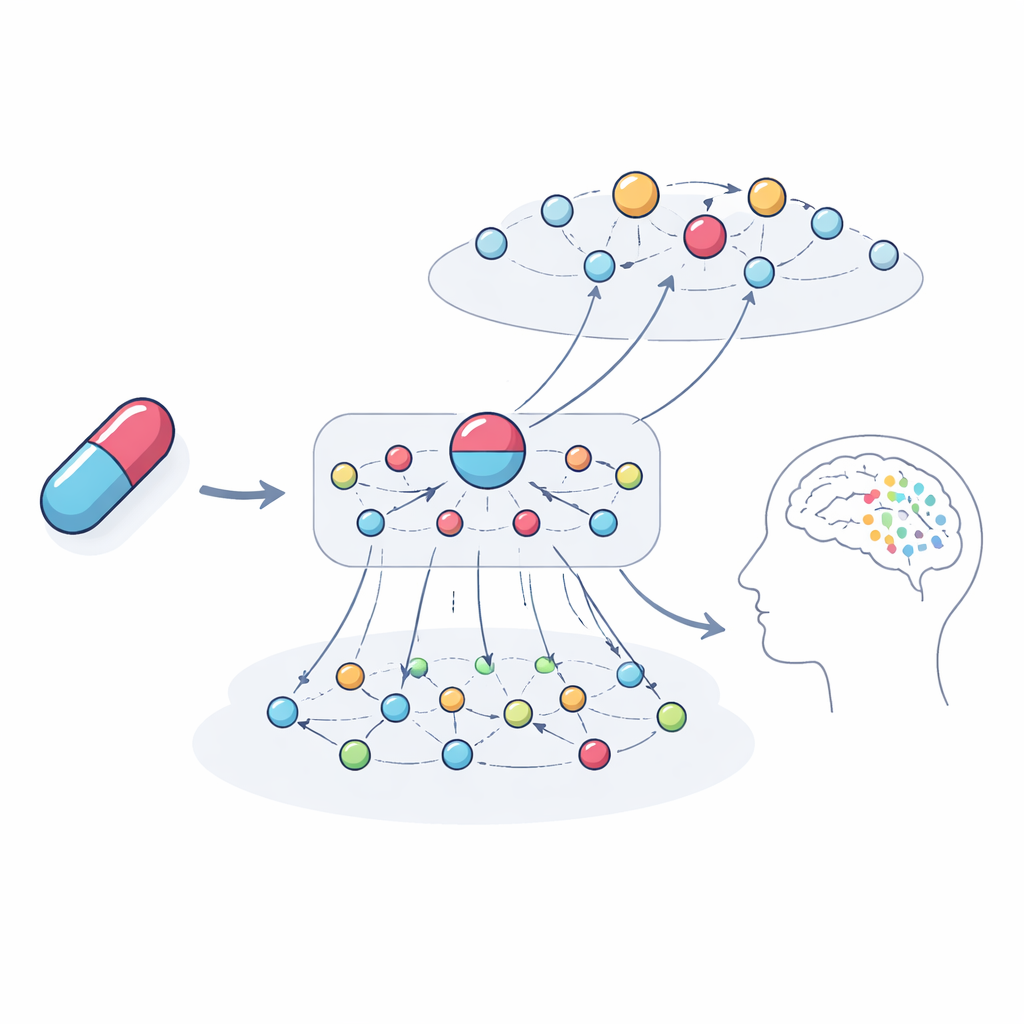

Connecting the dots between drugs and symptoms

The researchers built a large “map” of biological knowledge that links four kinds of things: drugs, side effects, genes, and gene functions. In this map, each item is a point, and lines between points represent evidence that they are related—for example, two genes that physically interact, two drugs with similar chemical structures, or a drug known to cause a certain side effect. Because central nervous system complaints are both common and often missed, the team focused this map on brain‑related problems such as mood changes, movement issues, and other neurological symptoms.
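For readers who want to see what such a map looks like as a data structure, here is a minimal Python sketch of a heterogeneous graph: every node has a type (drug, gene, gene function, side effect) and every edge has a relation label. The class name `HeteroGraph`, the relation labels, and all node names are hypothetical placeholders. This summary does not describe the study's actual data sources or storage format.

```python
from collections import defaultdict


class HeteroGraph:
    """A minimal typed graph: nodes carry a type, edges carry a relation label."""

    def __init__(self):
        self.node_type = {}            # node name -> node type
        self.adj = defaultdict(set)    # (node, relation) -> neighbouring nodes

    def add_node(self, name, ntype):
        self.node_type[name] = ntype

    def add_edge(self, u, relation, v):
        # Both endpoints must already exist; edges are stored in both directions
        # so a path can be walked from either end.
        if u not in self.node_type or v not in self.node_type:
            raise KeyError(f"unknown node in edge {u!r}-{v!r}")
        self.adj[(u, relation)].add(v)
        self.adj[(v, relation)].add(u)

    def neighbors(self, node, relation):
        # .get() avoids creating empty entries in the defaultdict.
        return sorted(self.adj.get((node, relation), set()))

    def count_by_type(self):
        counts = defaultdict(int)
        for ntype in self.node_type.values():
            counts[ntype] += 1
        return dict(counts)


def build_toy_graph():
    # Placeholder names only; these are not records from the study's data.
    g = HeteroGraph()
    for name, ntype in [("drugA", "drug"), ("drugB", "drug"),
                        ("GENE1", "gene"), ("GENE2", "gene"),
                        ("function1", "gene_function"),
                        ("dizziness", "side_effect")]:
        g.add_node(name, ntype)
    g.add_edge("GENE1", "interacts_with", "GENE2")          # gene-gene interaction
    g.add_edge("drugA", "targets", "GENE1")                 # drug-gene targeting
    g.add_edge("drugA", "structurally_similar", "drugB")    # drug-drug similarity
    g.add_edge("drugB", "causes", "dizziness")              # known drug-side-effect link
    g.add_edge("GENE1", "annotated_with", "function1")      # gene-function annotation
    return g


if __name__ == "__main__":
    g = build_toy_graph()
    print(g.count_by_type())
    print(g.neighbors("drugA", "targets"))
    print(g.neighbors("GENE1", "targets"))
```

Keeping the relation label on every edge is what later lets a model restrict itself to particular kinds of connections rather than treating all links as interchangeable.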

A smart network that learns biology

On top of this map, the authors designed a specialized graph neural network, a type of artificial intelligence built to learn from data organized as networks. Unlike a standard model that sees each drug in isolation, this approach looks at the web of connections around each drug and side effect. The model, called HHAN‑DSI, is “biologically informed”: it is only allowed to pass information along paths that make biological sense, for example from one gene to another it directly interacts with, from a gene to a drug that targets it, and then from that drug to side effects that have been seen with similar drugs. By giving more weight to the most informative paths, the system learns compact mathematical fingerprints for each drug and each side effect, and then scores how likely any given pair is to be linked.
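To make the idea of passing information only along biologically sensible paths more concrete, here is a toy Python sketch of path-restricted ("metapath") attention followed by pair scoring. It is loosely modeled on published metapath attention networks such as HAN and is not the authors' HHAN‑DSI code. The graph, the 2-dimensional embeddings, the relation names, and the dot-product attention rule are all hypothetical, and nothing here is trained.

```python
import math

# Hypothetical toy graph: (node, relation) -> neighbours. Not data from the study.
ADJ = {
    ("drugA", "targets"): ["GENE1"],
    ("GENE1", "interacts"): ["GENE2"],
    ("GENE2", "targeted_by"): ["drugB", "drugC"],
}

# Hypothetical 2-d "fingerprints" (embeddings) for each node.
EMB = {
    "drugA": [1.0, 0.0],
    "GENE1": [0.5, 0.5],
    "GENE2": [0.0, 1.0],
    "drugB": [0.9, 0.1],
    "drugC": [0.2, 0.8],
    "hallucination": [0.8, 0.3],
}


def metapath_endpoints(start, metapath):
    """Follow only the relations listed in `metapath`, in order, and return
    the nodes reached at the end (one entry per path instance)."""
    frontier = [start]
    for relation in metapath:
        frontier = [nbr for node in frontier for nbr in ADJ.get((node, relation), [])]
    return frontier


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def softmax(scores):
    m = max(scores)                      # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(start, metapath):
    """Attention-weighted average of the embeddings reached via `metapath`.
    Attention score = dot product with the start node's embedding
    (an assumed, deliberately simple scoring rule)."""
    ends = metapath_endpoints(start, metapath)
    if not ends:
        return EMB[start], {}
    weights = softmax([dot(EMB[start], EMB[n]) for n in ends])
    dim = len(EMB[start])
    agg = [sum(w * EMB[n][i] for w, n in zip(weights, ends)) for i in range(dim)]
    return agg, dict(zip(ends, weights))


def link_score(drug_vec, effect_vec):
    """Probability-like score for a drug-side-effect pair: sigmoid of a dot product."""
    return 1.0 / (1.0 + math.exp(-dot(drug_vec, effect_vec)))


if __name__ == "__main__":
    path = ["targets", "interacts", "targeted_by"]   # drug -> gene -> gene -> drug
    drug_vec, weights = attend("drugA", path)
    print(weights)
    print(link_score(drug_vec, EMB["hallucination"]))
```

In a real model the embeddings and attention parameters would be learned from known drug and side-effect pairs, and several path types would be combined. Here the attention weight simply favors reached drugs whose fingerprint resembles the starting drug's, and a path type that does not exist for a node (for example `["interacts"]` starting from a drug) contributes nothing.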

Putting the model to the test

To see whether these predictions line up with reality, the team trained the model on a curated database of side effects reported in official product information and then tested it against both this source and a separate set of post‑marketing safety reports. These external reports capture problems that surfaced only after drugs were in wide use. The model not only distinguished true drug–side‑effect pairs from false ones, but, more importantly, ranked the most worrisome effects near the top of its lists. It outperformed several existing machine‑learning methods at this ranking task, especially when data were sparse, as is typical for new medicines. In many cases where the model seemed to be “wrong” because a predicted side effect was missing from the curated database, the same drug–symptom link appeared in the real‑world safety reports, suggesting the model had found overlooked risks.
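The ranking test can be illustrated with two standard information-retrieval measures, precision at k and average precision, plus a check for apparent false positives that turn up in a second source. This is a sketch with invented side-effect lists. The summary does not say which ranking metrics the authors reported, so the metric choice and the function names are assumptions.

```python
def precision_at_k(ranked, positives, k):
    """Fraction of the top-k predictions that are known side effects."""
    if k <= 0:
        raise ValueError("k must be positive")
    return sum(1 for x in ranked[:k] if x in positives) / k


def average_precision(ranked, positives):
    """Mean of precision values taken at each rank where a known side effect appears.
    Rewards lists that place true side effects near the top."""
    hits, total = 0, 0.0
    for i, item in enumerate(ranked, start=1):
        if item in positives:
            hits += 1
            total += hits / i
    return total / len(positives) if positives else 0.0


def rescued_by_external(ranked, curated, external, k):
    """Top-k predictions missing from the curated labels but present in an
    external source such as post-marketing reports."""
    return [x for x in ranked[:k] if x not in curated and x in external]


if __name__ == "__main__":
    # Invented example: one drug's predicted side effects, best first.
    ranked = ["dizziness", "headache", "insomnia", "seizure", "tremor"]
    curated = {"dizziness", "seizure"}     # labels from the curated database
    external = {"headache", "tremor"}      # labels from real-world reports
    print(precision_at_k(ranked, curated, 3))
    print(average_precision(ranked, curated))
    print(rescued_by_external(ranked, curated, external, 3))
```

In this toy list, "headache" counts as an error against the curated labels but is confirmed by the external reports, which mirrors the kind of overlooked risk described above.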

Peering inside the black box

A key aim was not just to predict trouble but to explain it. The authors traced the most influential chains of connections the model used for specific examples. For the anesthetic and antidepressant ketamine, the system highlighted networks of genes and related drugs that point toward hallucinations and depression as potential outcomes. These paths involved gene families tied to dopamine and serotonin signaling, immune and hormone pathways, and drugs with similar targets that have case reports of similar problems. When the team analyzed the genes emphasized by the model, they found they were strongly enriched for processes already known to shape brain function and psychiatric symptoms, reinforcing that the explanations are biologically meaningful rather than arbitrary.
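The enrichment check asks whether the model's highlighted genes overlap a known biological process more than chance would predict. A common way to quantify this is a one-sided hypergeometric test (equivalent to a one-sided Fisher's exact test). The summary does not name the authors' tool, so this choice and the gene counts below are assumptions for illustration.

```python
from math import comb


def hypergeom_sf(k, N, K, n):
    """P(X >= k) for X ~ Hypergeometric: n genes drawn without replacement from a
    background of N genes, K of which belong to the process of interest."""
    if not (0 <= K <= N and 0 <= n <= N):
        raise ValueError("need 0 <= K <= N and 0 <= n <= N")
    upper = min(K, n)
    # Count the draws with at least k process genes; comb() returns 0 for
    # impossible combinations, so no extra bounds checks are needed.
    hits = sum(comb(K, i) * comb(N - K, n - i) for i in range(max(k, 0), upper + 1))
    return hits / comb(N, n)


def expected_overlap(N, K, n):
    """Overlap expected by chance alone."""
    return n * K / N


if __name__ == "__main__":
    # Hypothetical numbers, chosen only to illustrate the calculation.
    N, K, n, k = 20000, 200, 50, 10
    print(expected_overlap(N, K, n))
    print(hypergeom_sf(k, N, K, n))
```

With 50 highlighted genes and a 200-gene process in a 20,000-gene background, chance alone predicts an overlap of about half a gene, so an observed overlap of 10 gives a very small p-value. Because many processes are usually tested at once, such p-values are normally corrected for multiple testing.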

What this means for patients and future drugs

In plain terms, this work shows that it is possible to use existing biological and safety data to build an early‑warning system for brain‑related side effects—and to see why those warnings arise. HHAN‑DSI is not meant to replace doctors or clinical trials, but to help researchers and safety experts prioritize which potential side effects to investigate and monitor, especially for new drugs with little direct experience. By shining light on the molecular pathways that link medicines to unwanted symptoms, the approach could guide safer drug design, more targeted surveillance, and, ultimately, better protection for patients who rely on treatments that act on the brain.

Citation: Huang, T., Lin, KH., Machado-Vieira, R. et al. Explainable drug side effect prediction in central neural system via biologically informed graph neural network. Transl Psychiatry 16, 205 (2026). https://doi.org/10.1038/s41398-026-03971-1

Keywords: drug side effects, central nervous system, graph neural networks, pharmacovigilance, explainable AI