Clear Sky Science · en

On the precision models of fringe projection profilometry: unification, simplification and connection

Seeing Shape with Stripes of Light

From facial recognition on phones to inspecting the surface finish of jet-engine parts, many technologies rely on measuring 3D shapes with great precision. This paper looks inside one of the most accurate optical methods for doing this, called fringe projection profilometry, and shows how to predict and improve its precision in a clear and unified way.

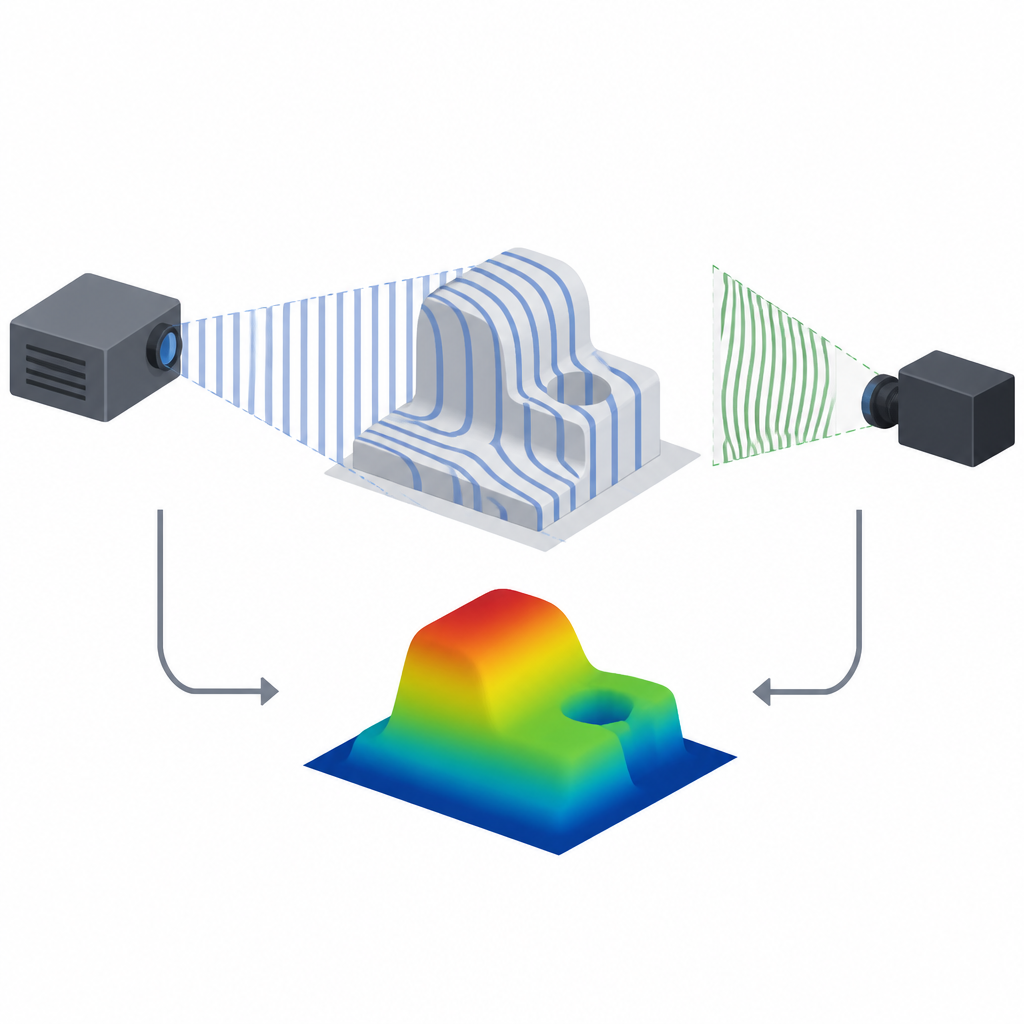

How Stripe Patterns Reveal 3D Shape

Fringe projection profilometry projects evenly spaced stripes onto an object and observes how those stripes bend. A projector sends out straight light patterns, while a camera records how the object’s surface distorts them. By matching each camera pixel to its corresponding projector pixel, a computer can use simple triangulation geometry to reconstruct the 3D position of points on the object. This turns light and shadow into a highly detailed depth map, often accurate to a few micrometers, for objects ranging from small components to larger mechanical parts.
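The pixel-matching step can be illustrated with a minimal Python sketch. It assumes an idealized, rectified camera-projector pair with equal focal lengths and parallel optical axes, which is a textbook simplification and not the calibrated model used in the paper. The function name and parameters are hypothetical.

```python
def depth_from_correspondence(x_cam_px, x_proj_px, focal_px, baseline_mm):
    """Depth of one point from a matched camera/projector pixel pair.

    Assumption (not from the paper): the camera and projector are rectified,
    share the same focal length in pixels, and are separated by baseline_mm
    along the x axis, so depth follows the standard relation
    z = f * B / disparity.
    """
    disparity = x_cam_px - x_proj_px
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of the rig")
    return focal_px * baseline_mm / disparity


# A 200-pixel offset with f = 2000 px and B = 100 mm gives a depth of 1000 mm.
print(depth_from_correspondence(700.0, 500.0, 2000.0, 100.0))  # 1000.0
```

Real systems replace this one-line relation with a full calibration of both devices, but the principle of turning a pixel correspondence into depth is the same.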

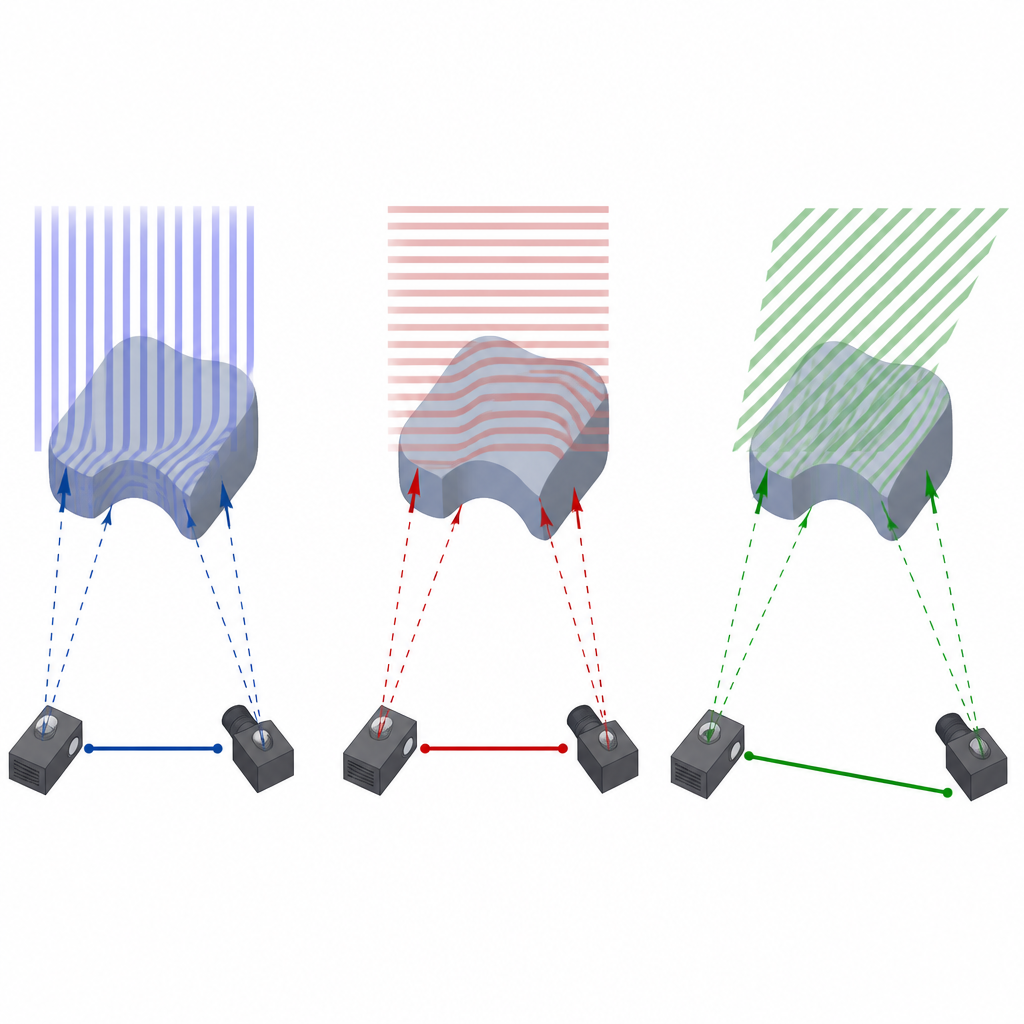

Three Ways to Look at the Same Object

Engineers can choose different directions for the projected stripes, and that choice affects how accurately depth can be measured. The paper focuses on three popular setups. In the first, vertical stripes are projected, and the system mainly uses information across the horizontal direction. In the second, horizontal stripes are used, relying more on vertical information. The third method uses stripes at a carefully chosen slanted angle that is linked to the geometry between the camera and projector. Although these methods look different in practice, the authors show that they can all be described by a single shared mathematical model of precision.

A Triangle that Explains Precision

By reworking earlier formulas, the authors find a neat right-triangle relationship between the three methods. When precision is expressed as the standard deviation of depth, the reciprocals of the standard deviations of the vertical and horizontal methods form the two short sides of a right triangle, while the reciprocal for the slanted-stripe method forms the hypotenuse. Because the hypotenuse is the longest side, the slanted-stripe method always gives the smallest depth standard deviation, and therefore the best precision, for a given system geometry. The vertical and horizontal versions can be viewed as simpler, slightly less precise special cases that use only part of the available geometric leverage between camera and projector.
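The triangle relationship described above can be checked numerically. The sketch below takes the description literally: the reciprocal of the slanted-stripe standard deviation is the hypotenuse of a right triangle whose legs are the reciprocals of the vertical and horizontal standard deviations. The function name and the sample values are illustrative assumptions, not figures from the paper.

```python
import math


def slanted_sigma(sigma_vertical, sigma_horizontal):
    """Depth standard deviation of the slanted-stripe method.

    Implements (1/sigma_s)^2 = (1/sigma_v)^2 + (1/sigma_h)^2, i.e. the
    reciprocals of the standard deviations form a right triangle.
    """
    if sigma_vertical <= 0 or sigma_horizontal <= 0:
        raise ValueError("standard deviations must be positive")
    inverse_slanted = math.hypot(1.0 / sigma_vertical, 1.0 / sigma_horizontal)
    return 1.0 / inverse_slanted


# Illustrative values only: 3 um and 4 um combine to roughly 2.4 um,
# which is smaller than either input.
sigma_s = slanted_sigma(3.0, 4.0)
print(round(sigma_s, 6))
```

Whatever the inputs, the result is never larger than the smaller of the two, which is the sense in which the slanted-stripe method is always the most precise.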

Turning Complex Camera Geometry into Simple Design Rules

The full precision model depends on many camera and projector parameters that are hard to reason about when building a real system. To make it practical, the authors simplify in two main steps. First, they consider a common layout in which the projector’s viewing direction is perpendicular to the line connecting camera and projector. In this case, an “effective baseline” can be defined that blends the physical spacing and focal lengths into a single length. The three methods then form another right triangle, this time in terms of these effective baselines: the longer the effective baseline, the better the depth precision. Second, they pull out the angle between the camera’s and projector’s viewing directions and show that the depth uncertainty scales mainly with the square of the distance to the object, and inversely with the effective baseline and a cosine of this angle. That gives system designers a direct geometric handle.
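These two design rules can be sketched in a few lines of Python. The code reads the scaling literally as distance squared divided by effective baseline times the cosine of the angle. The exact form of the cosine term, the `noise_scale` constant, and both function names are assumptions made for illustration; the paper's full formula is not reproduced here.

```python
import math


def slanted_effective_baseline(b_vertical, b_horizontal):
    """Effective baseline of the slanted-stripe method, taken as the
    hypotenuse of the two single-direction effective baselines."""
    return math.hypot(b_vertical, b_horizontal)


def depth_sigma_scaling(distance, effective_baseline, angle_rad, noise_scale=1.0):
    """Relative depth uncertainty: noise_scale * z^2 / (B_eff * cos(theta)).

    noise_scale is a hypothetical constant lumping together phase noise,
    stripe period, and focal length; it is not a quantity from the paper.
    """
    cos_theta = math.cos(angle_rad)
    if effective_baseline <= 0 or cos_theta <= 0:
        raise ValueError("need a positive baseline and an angle below 90 degrees")
    return noise_scale * distance**2 / (effective_baseline * cos_theta)


print(slanted_effective_baseline(3.0, 4.0))   # 5.0
print(depth_sigma_scaling(1.0, 1.0, 0.0))     # 1.0
print(depth_sigma_scaling(2.0, 1.0, 0.0))     # 4.0, doubling distance quadruples it
print(depth_sigma_scaling(1.0, 2.0, 0.0))     # 0.5, doubling baseline halves it
```

The printed ratios, not the absolute numbers, are the point: they show why working distance dominates the precision budget and why a longer effective baseline is the main lever for recovering it.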

Linking to Stereo Vision and Laser Range Finding

Because all these systems rely on triangulation, the authors compare their precision formulas to those used in stereo camera rigs and laser triangulation sensors. After appropriate simplifications, the expressions line up: fringe projection with optimally angled stripes behaves, at the precision level, just like two-camera stereo with the same baseline, and it shares the same dependence on viewing angle found in laser-based systems. This quantitative link supports the long-held view that these methods are different faces of the same geometric principle, differing mainly in how they find corresponding points and how noise enters the measurements.

Design Tools for Real-World Measurements

To move from theory to practice, the authors analyze how sensitive precision is to design choices such as working distance, baseline length, stripe period, and camera noise. They show how to set stricter design targets so that real systems still meet their required precision despite imperfections. Building on the simplified model, they create a software tool called FPP-Planner that lets engineers specify a desired precision and measurement distance, then suggests suitable camera–projector spacing, viewing angles, and pattern settings. Experiments with planes and spheres demonstrate that the predicted precision usually matches measured performance within a few percent, confirming that these models can reliably guide the design of high-precision 3D measurement systems.
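The idea of designing against a stricter target can be sketched as follows. This is not FPP-Planner and does not use its interface. It is a hypothetical helper that inverts the simplified scaling (depth uncertainty proportional to distance squared over effective baseline times the cosine of the viewing angle) to find the effective baseline needed for a target precision, after tightening the target by a safety margin. The `noise_scale` and `margin` parameters are assumptions chosen for illustration.

```python
import math


def required_effective_baseline(target_sigma, distance, angle_rad,
                                noise_scale, margin=0.8):
    """Effective baseline needed to reach target_sigma at a given distance.

    margin < 1 tightens the design target (e.g. 0.8 means designing for
    80% of the allowed uncertainty) so that imperfections in the built
    system still leave it within the required precision.
    """
    cos_theta = math.cos(angle_rad)
    if target_sigma <= 0 or not 0 < margin <= 1 or cos_theta <= 0:
        raise ValueError("invalid target, margin, or angle")
    design_sigma = margin * target_sigma
    return noise_scale * distance**2 / (design_sigma * cos_theta)


# Illustrative numbers only: a tighter margin demands a longer baseline.
nominal = required_effective_baseline(0.01, 500.0, 0.0, 1e-6, margin=1.0)
guarded = required_effective_baseline(0.01, 500.0, 0.0, 1e-6, margin=0.8)
print(round(nominal, 3), round(guarded, 3))
```

A real planning tool would also have to account for field of view, stripe period, and camera noise, which this sketch folds into a single constant.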

Why This Matters for High-Tech Manufacturing

In plain terms, this paper explains how to predict how “sharp” a 3D optical measuring system will be before it is built, and how to tune its layout to reach a desired level of detail. By unifying three common variants of stripe projection into one framework, and tying them to other triangulation methods, the authors provide a clear map for choosing between simplicity and maximum precision. For industries that demand ever tighter tolerances, from semiconductor fabrication to advanced manufacturing, these results offer a practical recipe for designing stripe-based 3D scanners that reliably meet a given precision budget.

Citation: Lv, S., Huang, N., Zou, Y. et al. On the precision models of fringe projection profilometry: unification, simplification and connection. Light Sci Appl 15, 232 (2026). https://doi.org/10.1038/s41377-026-02300-x

Keywords: fringe projection profilometry, 3D shape measurement, optical metrology, stereo vision, laser triangulation