Clear Sky Science

Large language model tools as catalysts for collective cognition in collaborative new-product development: a quasi-experimental study

Why this matters for everyday teamwork

Modern teams, from start-ups to school project groups, are increasingly turning to AI chatbots to help them think, plan and create. Yet we know surprisingly little about how these tools actually change the way people work together. This study examines how large language models (LLMs), the technology behind advanced chatbots, shape group thinking when teams design new products together, and where human judgment still leads.

How teams normally think together

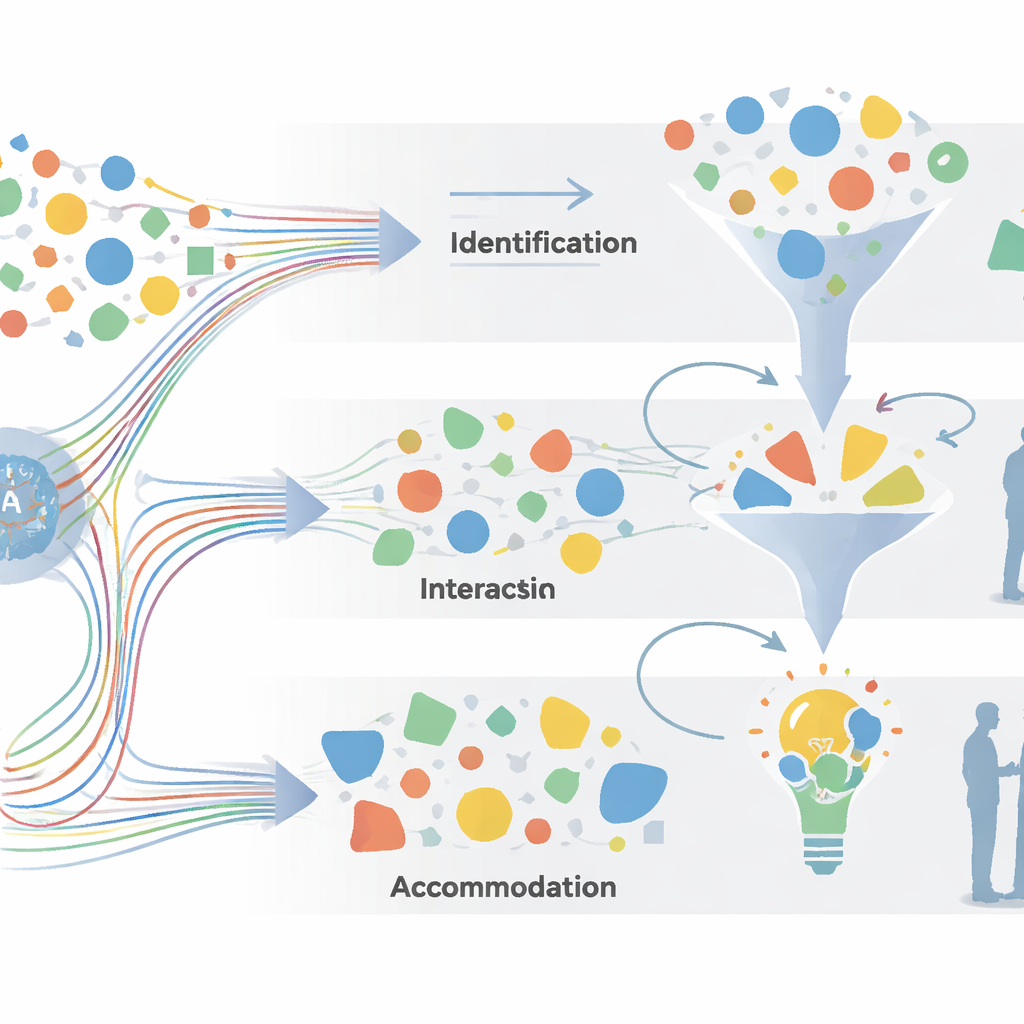

When people collaborate on a new product, they do more than divide up tasks; they gradually build a shared understanding of the problem and its possible solutions. The authors call this "collective cognition": the way individual ideas and viewpoints merge into a common mental picture. Earlier research showed that this shared thinking develops through cycles of gathering information, talking it through, weighing options and settling on solutions, but those cycles were usually described only in broad strokes. To study real conversations in detail, the researchers refined an older model into what they call the 2I2A framework, named for its four overlapping spaces: Identification (spotting information and cues), Interaction (organising ideas together), Analysis (weighing options and trade-offs) and Accommodation (turning insights into workable solutions).

A closer look at the experiment

The team recruited 44 advanced design and engineering students and paired them into 22 two-person groups. Each pair had 90 minutes to create an innovative product for children while thinking out loud. Half of the teams could freely use an LLM (ChatGPT-4 or a similar system) on a laptop; the other half had only paper and pencils. All spoken conversation was recorded and then carefully coded line by line using the 2I2A model. Each sentence or exchange was marked as belonging to one of the four spaces and to one of eight finer-grained communication types, such as taking in information, structuring questions, evaluating ideas or combining solutions. In addition, the LLM-using participants took part in semi-structured interviews, which the researchers analysed with grounded theory to uncover common themes in how people felt about working with AI.

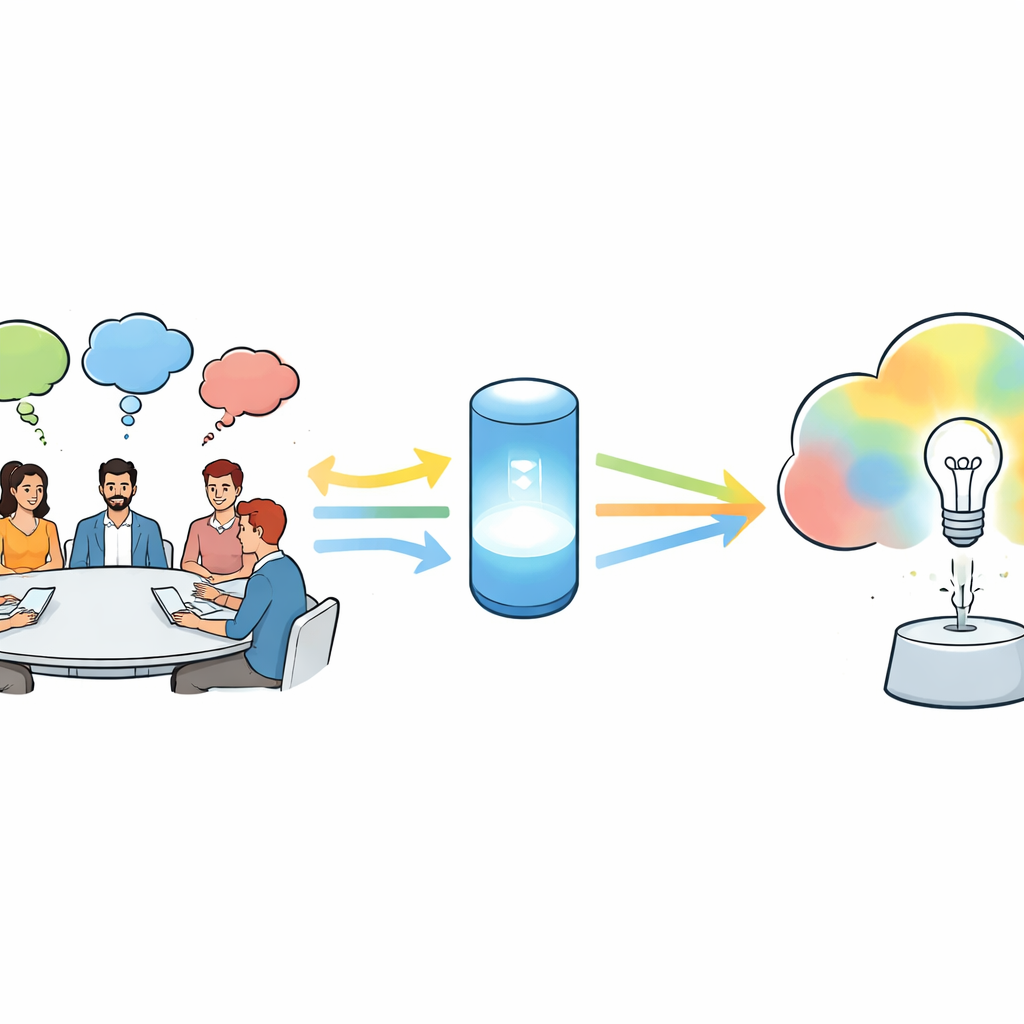

Where AI helps the most

The data show that LLMs strongly boost the early stages of group thinking. Compared with teams working without AI, LLM-assisted groups spent a larger share of their collaboration time in the Identification and Interaction spaces. They asked the LLM for background facts, examples and inspiration, then used its responses as raw material to frame and reframe design questions. As a result, they took in more relevant information, selected and accumulated more options, and reorganised ideas more actively. Interviews back this up: many participants said the LLM sped up their research, suggested directions they had not considered and acted like a quick "concept partner" that helped them break through creative blocks. In short, AI expanded the teams' cognitive boundaries and helped them reach a shared starting point more quickly.

Where human judgment still leads

In contrast, the LLM did not significantly change how often teams engaged in deeper reasoning or final synthesis. In the Analysis and Accommodation spaces, where people evaluate pros and cons, negotiate differences and weave ideas into a single design, LLM and non-LLM groups behaved similarly overall, and in some sub-areas the human-only teams were more active: without AI, collaborators spent more time critically assessing options and discussing trade-offs. Interviewees often mentioned that the chatbot's suggestions could be shallow, occasionally inaccurate and too agreeable, rarely pushing back as a critical teammate would. Some felt that the AI at times "replaced" their own thinking or nudged them to follow its ideas too closely, which could narrow rather than widen the range of solutions. This helps explain why, despite livelier early-stage brainstorming, the final creative output was not clearly better with AI.

Shifts in team dynamics and hidden risks

Looking at how teams moved between the four thinking spaces over time, the researchers found that LLM-assisted groups showed more fluid, back-and-forth transitions, especially between identifying information and interacting to structure it. The AI encouraged rapid cycles of asking, receiving and reshaping ideas, giving collaboration a more agile feel. Yet this flexibility came with trade-offs. Some participants reported that frequent shifts, fuelled by readily available AI responses, made it harder to slow down for deeper reflection. Others worried about growing dependence on the tool, the loss of "natural" human-to-human discussion and the need to double-check AI content for errors or missing context. The interviews also pointed to broader social effects: AI might automate routine work and free people for more creative tasks, but it could also shift power dynamics by giving certain roles, such as product managers who steer AI use, more influence within teams.
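For readers curious how coded conversations can be turned into the kind of "share of time" and "transition" patterns described above, here is a minimal Python sketch. It is an illustration under stated assumptions, not the authors' actual analysis: the session data are invented, the function names `space_shares` and `transition_counts` are hypothetical, and counting only moves between different spaces is one possible choice among several.

```python
from collections import Counter

# The four 2I2A spaces, named as in the summary.
SPACES = ("Identification", "Interaction", "Analysis", "Accommodation")


def space_shares(codes):
    """Return the fraction of coded utterances that fall in each space (hypothetical helper)."""
    counts = Counter(codes)
    total = len(codes)
    if total == 0:
        # An empty transcript has no utterances, so every share is zero.
        return {space: 0.0 for space in SPACES}
    return {space: counts[space] / total for space in SPACES}


def transition_counts(codes):
    """Count moves between consecutive utterances coded in different spaces (hypothetical helper)."""
    return Counter((prev, nxt) for prev, nxt in zip(codes, codes[1:]) if prev != nxt)


# Hypothetical coded transcript for one pair (illustrative data, not from the study).
session = [
    "Identification", "Interaction", "Identification", "Interaction",
    "Analysis", "Accommodation", "Analysis", "Accommodation",
]

shares = space_shares(session)
moves = transition_counts(session)
print(shares)
print(moves.most_common(2))
```

Comparing these shares and transition counts between LLM and non-LLM pairs is one simple way to express a pattern such as "more back-and-forth between Identification and Interaction"; the published study may have used different coding units and statistics.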

What this means for using AI in real teams

For non-specialists, the core message is straightforward: LLMs are very good at helping teams get started and stay mentally flexible, but they are not a shortcut to deep understanding or wise decisions. These tools act as powerful accelerators for gathering information, exploring ideas and building an initial shared picture. However, careful analysis, hard choices and the blending of many viewpoints into a solid, workable design still rely heavily on human expertise, critical thinking and negotiation. The authors argue that organisations should treat LLMs as early-stage thinking aids within a broader 2I2A view of collaboration, not as replacements for the human conversations and judgments that ultimately make new products succeed.

Citation: Zhang, D., Luo, S., Liu, Y. et al. Large language model tools as catalysts for collective cognition in collaborative new-product development: a quasi-experimental study. Humanit Soc Sci Commun 13, 382 (2026). https://doi.org/10.1057/s41599-026-06738-7

Keywords: large language models, collaborative design, collective cognition, new product development, human–AI teamwork