Clear Sky Science

ClinicRealm: Re-evaluating large language models with conventional machine learning for non-generative clinical prediction tasks

Why smarter hospital predictions matter

Every day, hospitals collect enormous amounts of digital information about their patients, from brief doctor notes to long lists of lab results and vital signs. Hidden in this data are clues about who is likely to get better, who might return to the hospital soon, and who is at serious risk. Choosing the right kind of artificial intelligence (AI) to read these clues is no longer a purely technical question; it can shape how quickly and fairly patients receive help. This study asks a timely question: can today’s powerful chat-style AI systems, known as large language models, actually rival or surpass the carefully tailored algorithms that have long been the workhorses of medical prediction?

New tests for new kinds of medical AI

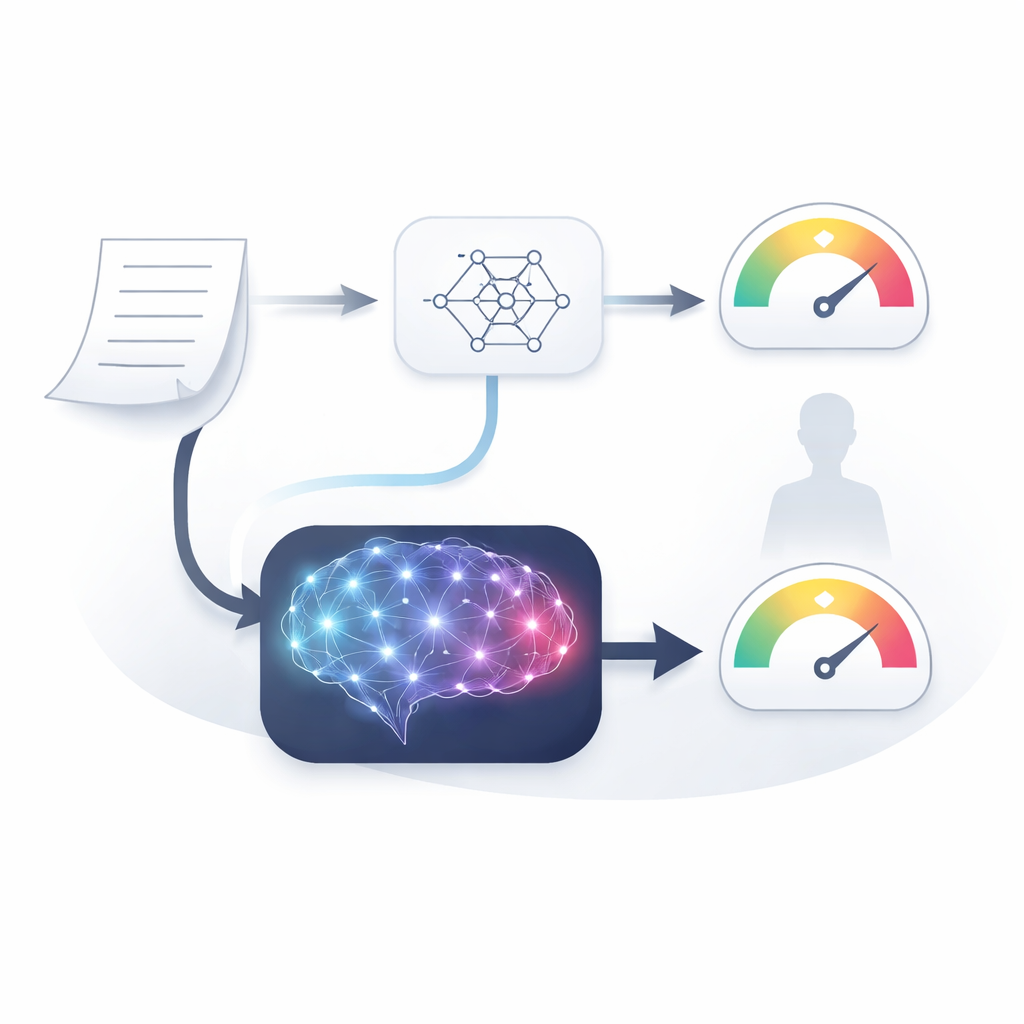

The researchers built a broad benchmark they call ClinicRealm to compare three families of models side by side: traditional machine-learning and deep-learning systems, earlier text-focused models, and modern large language models. They evaluated these tools on two main types of hospital data. One is unstructured text, such as admission and discharge notes written in everyday clinical language. The other is structured electronic health record tables, made up of numbers like lab values and time-stamped vital signs. The team focused on practical questions that matter to hospitals, including whether a patient would die during their stay, be readmitted within 30 days, or how long they might remain in the hospital.

When words beat numbers in prediction

A striking pattern emerged for tasks based on doctors’ and nurses’ notes. For years, specialized text models tuned on medical records were thought to be the best choice for predicting outcomes from such notes. Yet ClinicRealm shows that the latest large language models, used “zero-shot” without any extra training on the hospital data, now outperform these specialized systems by a wide margin. On both forward-looking risk predictions and after-the-fact document classification, advanced models like GPT-5 and DeepSeek variants achieved very high accuracy. This means that simply feeding them raw clinical text and asking for a prediction can work better than months of painstaking fine-tuning of older approaches. Remarkably, several open-source models matched or even exceeded the performance of proprietary ones, making strong tools more accessible to hospitals that must keep data in-house.

Numbers still reward classic tools, but not always

The story is more nuanced for structured electronic health records. Here, carefully trained traditional models and specialized deep-learning systems still come out on top when they can learn from large amounts of data. They are particularly good at spotting patterns in streams of lab values and vital signs over time. However, when only a small number of patient examples are available, as is often the case for rare diseases or new outbreaks, modern language models show surprising strength. In some tests, a large language model working from a cleverly designed prompt and a handful of examples matched or beat conventional models trained on the same limited data. Attempts to simply pour both tables and text into language models at once did not automatically improve performance, revealing that combining multiple data sources is still a delicate design problem rather than a free boost.
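For readers curious what "a prompt and a handful of examples" can look like in practice, here is a minimal sketch in Python. It is not the prompt used in the study: the function names, the field names (`heart_rate`, `lactate`), the example values, and the wording of the task are all illustrative assumptions. The sketch only shows the general idea of turning a few labeled patient records plus one new record into text a language model could complete.

```python
from typing import Dict, List, Tuple

# A patient record here is a hypothetical mapping from measurement name to value.
Record = Dict[str, float]


def format_record(record: Record) -> str:
    """Render one patient's structured values as a single line of text."""
    return ", ".join(f"{name}={value}" for name, value in sorted(record.items()))


def build_few_shot_prompt(examples: List[Tuple[Record, int]], query: Record) -> str:
    """Build a prompt from labeled examples followed by one unlabeled patient."""
    lines = [
        "Task: estimate whether the patient will die during the hospital stay.",
        "Answer with 1 (died) or 0 (survived).",
        "",
    ]
    for record, label in examples:
        lines.append(f"Patient: {format_record(record)}")
        lines.append(f"Outcome: {label}")
        lines.append("")
    # The final patient has no outcome; the model is expected to fill it in.
    lines.append(f"Patient: {format_record(query)}")
    lines.append("Outcome:")
    return "\n".join(lines)


# Made-up values for illustration only, not real patient data.
examples = [
    ({"heart_rate": 118.0, "lactate": 4.2}, 1),
    ({"heart_rate": 76.0, "lactate": 1.1}, 0),
]
prompt = build_few_shot_prompt(examples, {"heart_rate": 102.0, "lactate": 2.9})
print(prompt)
```

The resulting text would then be sent to whichever language model a hospital has chosen; that step is left out because it depends on the specific system.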

Peeking inside the AI’s medical reasoning

Because blind trust in a risk score is unsafe, the team also asked five clinicians to rate the explanations that language models produced alongside their predictions. Overall, experts found these narratives to be reasonably accurate, complete, and clinically useful, especially when models worked from rich narrative notes. Still, important weaknesses appeared. In some false alarms, models justified high risk by inventing or misreading details in the record. In missed-risk cases, they often recognized relevant findings but failed to weigh them properly, reflecting shallow judgment rather than simple data extraction errors. Even when predictions were correct, traces of flawed reasoning remained, underscoring that accuracy alone does not guarantee dependable clinical support.

Fairness, limits, and what comes next

The researchers also explored fairness across age, sex, and race. Encouragingly, state-of-the-art language models prompted carefully in zero-shot mode often showed better-balanced performance across groups than some heavily trained traditional systems, which could amplify existing data biases. Yet tuning models for specific tasks sometimes reintroduced disparities, and no method was perfectly fair. The authors stress that any deployment should include routine bias checks, robust prompt design, and safeguards for reliability, not just high accuracy on a single test set.
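A routine bias check of the kind the authors recommend can be quite simple. The sketch below, in Python, compares how often a model correctly flags at-risk patients in each demographic group and reports the largest gap. The choice of metric (recall per group), the function names, and the toy data are assumptions made for illustration; this summary does not specify which fairness measures the study itself used.

```python
from collections import defaultdict
from typing import Dict, List


def recall_by_group(y_true: List[int], y_pred: List[int], groups: List[str]) -> Dict[str, float]:
    """Share of truly at-risk patients (label 1) that the model flagged, per group."""
    hits: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        if truth == 1:
            positives[group] += 1
            if pred == 1:
                hits[group] += 1
    # Groups with no at-risk patients are skipped, since recall is undefined there.
    return {group: hits[group] / positives[group] for group in positives}


def recall_gap(per_group: Dict[str, float]) -> float:
    """Difference between the best-served and worst-served group."""
    return max(per_group.values()) - min(per_group.values())


# Toy labels and predictions for two hypothetical groups, "A" and "B".
y_true = [1, 1, 0, 1, 1, 0, 1, 1]
y_pred = [1, 1, 0, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

per_group = recall_by_group(y_true, y_pred, groups)
gap = recall_gap(per_group)
print(per_group, gap)
```

A large gap suggests the model misses at-risk patients more often in one group than another, which is a signal to investigate further and not a verdict on its own.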

What this means for future hospital care

ClinicRealm concludes that modern large language models are no longer just chatty assistants; they have matured into serious contenders for predicting patient outcomes, especially from written notes and in settings with little data. Classic machine-learning systems still shine when there is plenty of structured information and time to train them, but the gap is narrowing. For hospitals and health technologists, this means moving away from one-size-fits-all choices toward a more nuanced toolkit: using traditional models where they remain best, relying on large language models for free-form text and rapid startup, and combining both with careful attention to reasoning quality and fairness. Done thoughtfully, this balanced strategy could make predictive analytics more powerful, more widely available, and ultimately more supportive of safer, more personalized care.

Citation: Zhu, Y., Gao, J., Wang, Z. et al. ClinicRealm: Re-evaluating large language models with conventional machine learning for non-generative clinical prediction tasks. npj Digit. Med. 9, 319 (2026). https://doi.org/10.1038/s41746-026-02539-z

Keywords: clinical prediction, electronic health records, large language models, medical AI benchmarking, healthcare fairness