Clear Sky Science

A multicenter multifunctional assessment of large language models in pure-tone audiogram interpretation for patients

Why hearing test reports are so hard to understand

Many people leave a hearing test holding a chart full of dots and lines, with only a short note from the doctor. For non‑specialists, these pure‑tone audiogram reports are almost impossible to decode, yet they inform life‑changing choices about hearing aids, treatment and daily communication. This study asks whether modern artificial intelligence chatbots, powered by large language models, can turn those technical charts into clear, reassuring explanations for ordinary patients.

Turning complex ear charts into plain language

Pure‑tone audiograms are the gold‑standard test for measuring how well we hear different tones, from low rumbles to high pitches. The resulting report looks more like a physics experiment than a health summary. At the same time, trained hearing specialists are in short supply worldwide, especially in regions with limited medical resources. The researchers saw an opportunity: if chatbots could “read” these charts and explain the results in everyday language, they might help patients understand their hearing earlier and more fully, supporting the World Health Organization’s goal of “hearing health for all.”

Putting multiple chatbots to the test

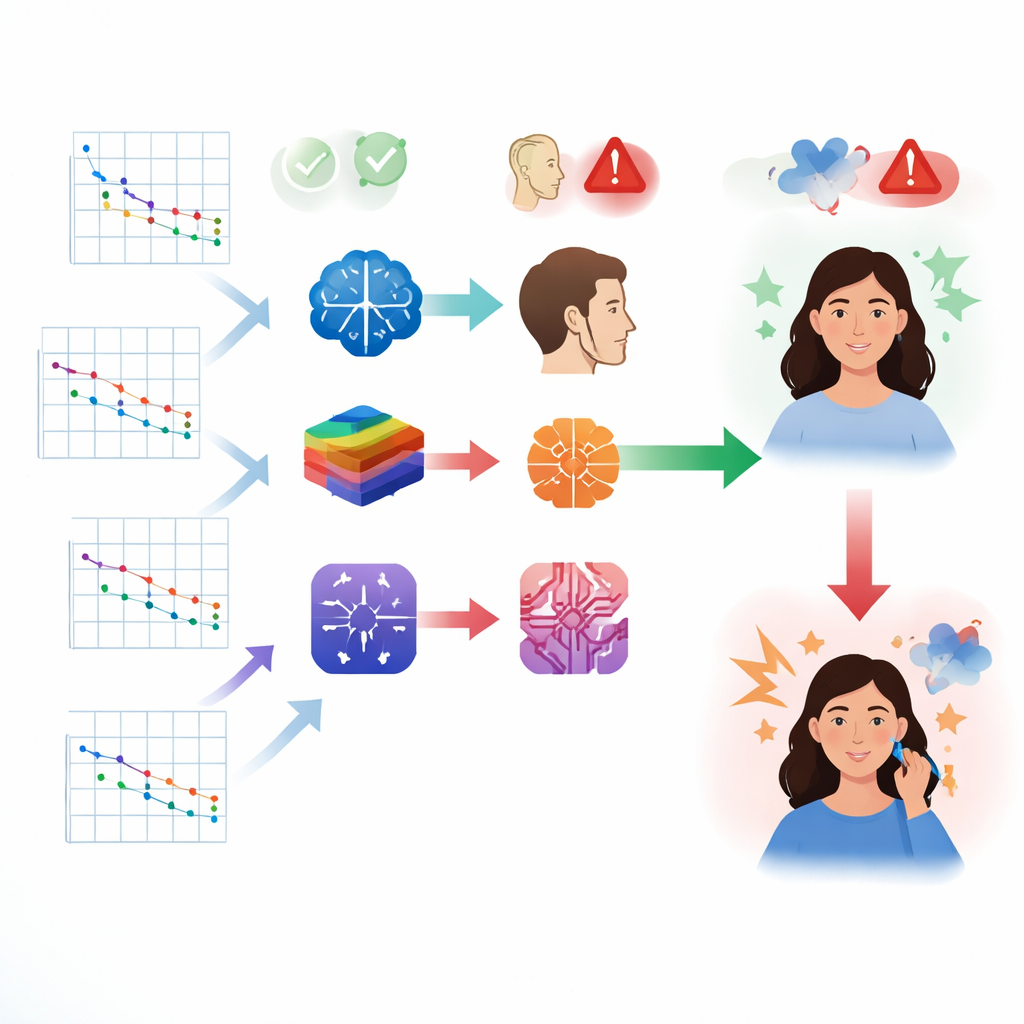

The team gathered 140 real hearing test reports from two centers in China, removed personal details, and regenerated standardized versions of the audiogram charts. They then asked eight different large language models, from companies in both China and the United States, to perform three tasks for each report: state how severe the hearing loss was and what type it was (for example, whether it arose in the inner ear or in the outer or middle ear), explain the findings in patient‑friendly language, and offer practical recommendations such as when to seek care or consider hearing aids. All model outputs were collected under controlled settings and were later judged by experienced clinicians and by separate lay volunteers, none of whom knew which model had produced which answer.

How well the machines diagnosed hearing loss

When it came to acting like a virtual hearing specialist, the models’ performance was mixed. The best‑performing system, DeepSeek‑V3, correctly judged the severity of hearing loss about two‑thirds of the time and identified the broad type of hearing loss just over half the time. Other models often did worse, and accuracy across the board remained far below what is expected from trained clinicians. The researchers also tested alternative ways of feeding information to the models, for example adding more structured numbers along with the chart images. These changes improved accuracy for most systems, suggesting that how information is presented can be just as important as how powerful the model is.
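For readers curious how a severity judgment can be scored, here is a minimal Python sketch. It rests on assumptions that this summary does not confirm: that severity is graded from the average of the hearing thresholds at 500, 1000, 2000 and 4000 Hz, and that the cut-offs follow the World Health Organization's 2021 grading. The study may have used different criteria, and the function names and sample numbers are purely illustrative.

```python
from statistics import mean

# Assumed cut-offs (lower bound in dB HL, label), following the WHO 2021 grades.
# The study's own grading criteria may differ.
WHO_GRADES = [
    (95, "complete"),
    (80, "profound"),
    (65, "severe"),
    (50, "moderately severe"),
    (35, "moderate"),
    (20, "mild"),
]

# Frequencies assumed for the pure-tone average.
PTA_FREQS_HZ = (500, 1000, 2000, 4000)


def pure_tone_average(thresholds_db):
    """Average the air-conduction thresholds (dB HL) at the four PTA frequencies."""
    return mean(thresholds_db[freq] for freq in PTA_FREQS_HZ)


def severity_grade(pta_db):
    """Map a pure-tone average to a severity label using the assumed cut-offs."""
    for lower_bound, label in WHO_GRADES:
        if pta_db >= lower_bound:
            return label
    return "normal"


def accuracy(predicted, reference):
    """Fraction of reports where the model's label matches the clinician's label."""
    if len(predicted) != len(reference) or not reference:
        raise ValueError("label lists must be non-empty and of equal length")
    matches = sum(p == r for p, r in zip(predicted, reference))
    return matches / len(reference)


# Hypothetical thresholds for one ear, keyed by frequency in Hz.
example_ear = {250: 30, 500: 35, 1000: 40, 2000: 45, 4000: 60, 8000: 65}
example_grade = severity_grade(pure_tone_average(example_ear))
```

With the made-up thresholds above, the four-frequency average is 45 dB HL, which falls in the "moderate" band under these assumed cut-offs. A model's severity accuracy is then simply the share of reports where its label agrees with the reference label.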

Helpful explanations, but troubling made‑up details

Beyond raw accuracy, the study probed how readable and trustworthy the chatbots’ explanations were. Some models produced long, wordy responses, while others were more concise. Only the DeepSeek models consistently wrote at a reading level roughly suited to someone with a middle‑school education, matching health‑literacy guidelines from major medical organizations. However, several systems showed a worrying tendency to hallucinate, inventing details that were not in the original reports. In roughly one in four responses from some models, the chatbot fabricated numbers, mis‑stated hearing thresholds, or recommended nonexistent devices and unrealistic treatment pathways. In contrast, one Gemini model had far fewer hallucinations, though its medical accuracy was not the highest.
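Reading level is usually estimated with a formula based on sentence length and word length. The sketch below uses the Flesch-Kincaid grade level as one common example. This summary does not say which readability measure the study applied, so the choice of formula is an assumption, and the syllable counter is a rough heuristic rather than a dictionary-accurate one.

```python
import re


def count_syllables(word):
    """Rough heuristic: count vowel groups and drop a silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_kincaid_grade(text):
    """Estimate the U.S. school grade needed to read the text comfortably."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 0.39 * words_per_sentence + 11.8 * syllables_per_word - 15.59


# Two hypothetical explanations of the same finding.
plain = "Your left ear hears soft sounds well. Your right ear needs louder sounds."
technical = (
    "Audiometric evaluation demonstrates bilateral moderate "
    "sensorineural impairment necessitating amplification."
)
plain_grade = flesch_kincaid_grade(plain)
technical_grade = flesch_kincaid_grade(technical)
```

Short sentences built from short words score a low grade, while a single long sentence packed with clinical terms scores far higher. That is why the plain wording here rates as much easier to read than the technical one.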

What experts and everyday users thought

Clinicians rated the models on how accurate, thorough, and practically useful their answers were. Here again, DeepSeek‑V3 and its sibling model generally ranked highest for professional quality, offering structured interpretations and focused recommendations aligned with clinical practice. Yet when members of the public rated the same responses, priorities shifted. Non‑experts favored models that were easier to follow, more conversational, and more emotionally supportive, even if these were not the most medically precise. The Gemini models scored especially well for clarity, empathy, and overall satisfaction, highlighting a tension between strict professional standards and patient‑centered communication needs.

Why this matters for people with hearing problems

Hearing loss is widespread, and many people never receive a clear explanation of their test results. This study shows that today’s chatbots are not ready to replace audiologists or make stand‑alone diagnoses from hearing charts. Their error rates and occasional invented details could mislead patients if used without oversight. At the same time, the models already have real strengths: turning dense charts into plain language, offering initial guidance, and easing anxiety for people who might otherwise have no one to ask. Used carefully, with strong warnings and under the supervision of hearing professionals, such tools could become valuable assistants that help bridge gaps in access to care, improve understanding, and support earlier action on hearing health.

Citation: Liang, J., Xing, M., Xiang, P. et al. A multicenter multifunctional assessment of large language models in pure-tone audiogram interpretation for patients. npj Digit. Med. 9, 348 (2026). https://doi.org/10.1038/s41746-026-02537-1

Keywords: hearing loss, pure-tone audiogram, large language models, patient communication, digital health