Clear Sky Science

Interpretable machine learning for thermoelectric materials design with Kolmogorov–Arnold networks

Turning Heat into Useful Power

Every day, enormous amounts of energy are lost as waste heat from car engines, factories, and even our own electronics. Thermoelectric materials offer a way to turn part of that waste heat directly into electricity, with no moving parts and no fuel. But finding new materials that do this efficiently is hard, because their performance depends on several tightly linked electronic properties. This study explores a new kind of artificial intelligence that can not only predict how good a material will be, but also explain why—opening a clearer path to designing better thermoelectric compounds.

Why Better Heat-to-Power Materials Are Hard to Find

Thermoelectric devices rely on materials that generate a voltage when one side is hot and the other is cold. Their efficiency is summarized by a dimensionless figure of merit called zT, which, at a given operating temperature, depends on three material properties: how much voltage develops per degree of temperature difference (the Seebeck coefficient), how easily charge carriers move (electrical conductivity), and how readily the material carries heat (thermal conductivity). A good thermoelectric needs the first two to be high and the third to be low, but improving one often harms another. For example, making a material conduct electricity better can also make it carry heat too well, since the mobile charges carry heat as well as current, and this reduces efficiency. Traditional quantum mechanical simulations, such as density functional theory, can predict these properties from the atomic structure, but they are so computationally costly that they cannot be applied to millions of candidate materials.
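The trade-off can be made concrete with the standard definition of the figure of merit, zT = S²σT/κ, where S is the Seebeck coefficient, σ the electrical conductivity, κ the total thermal conductivity, and T the absolute temperature. The short sketch below assumes the Wiedemann–Franz law (electronic heat conduction proportional to σT, using the textbook Lorenz number) and uses made-up round numbers, not values from the study.

```python
# Minimal sketch of the thermoelectric figure of merit zT = S^2 * sigma * T / kappa.
# All material values are hypothetical round numbers chosen for illustration.

LORENZ_NUMBER = 2.44e-8  # W·Ω/K², Sommerfeld value (assumed to apply to every material)


def figure_of_merit(seebeck, sigma, kappa_lattice, temperature):
    """Return zT for Seebeck coefficient S (V/K), electrical conductivity sigma (S/m),
    lattice thermal conductivity (W/(m·K)) and absolute temperature T (K).

    The electronic part of the thermal conductivity is estimated with the
    Wiedemann–Franz law, kappa_e = L * sigma * T, so raising sigma also raises kappa.
    """
    kappa_electronic = LORENZ_NUMBER * sigma * temperature
    kappa_total = kappa_lattice + kappa_electronic
    power_factor = seebeck**2 * sigma  # W/(m·K²)
    return power_factor * temperature / kappa_total


if __name__ == "__main__":
    base = figure_of_merit(seebeck=200e-6, sigma=1e5, kappa_lattice=1.0, temperature=300)
    doubled = figure_of_merit(seebeck=200e-6, sigma=2e5, kappa_lattice=1.0, temperature=300)
    print(f"zT at sigma = 1e5 S/m: {base:.2f}")
    print(f"zT at sigma = 2e5 S/m: {doubled:.2f}")
```

With these illustrative inputs, doubling σ while holding S fixed raises zT by only about 40 percent rather than doubling it, because the electronic part of κ doubles too. In real materials the Seebeck coefficient also tends to fall as the carrier concentration that boosts σ rises, which erodes the gain further.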

Black-Box Models and the Need for Insight

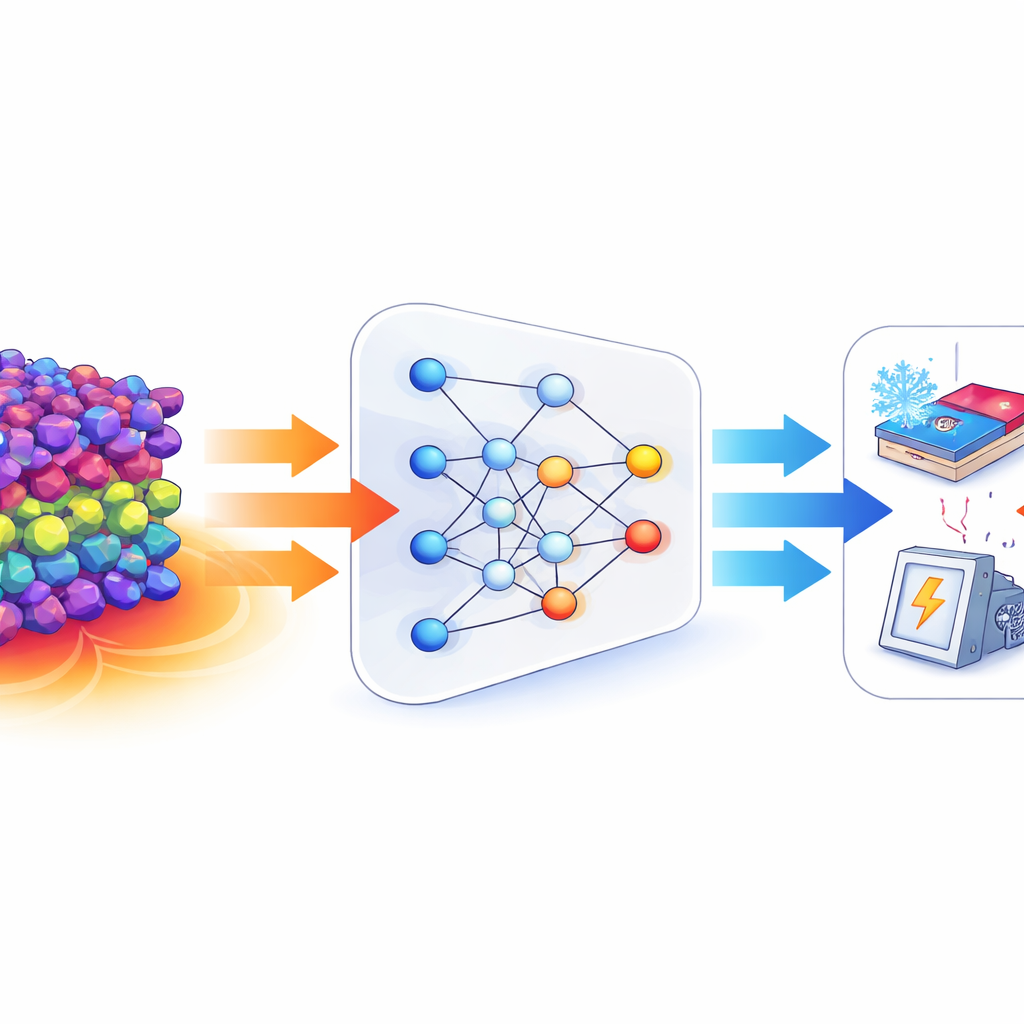

Machine learning models have recently become powerful tools for rapidly estimating material properties based on past simulations and experiments. In this work, the authors start from rich numerical descriptions of crystal structures produced by a specialized "Crystalformer" encoder, which captures how atoms are arranged and interact in a crystal. They first train a standard multilayer perceptron—a common deep-learning model—to predict two key quantities: the Seebeck coefficient and the electronic band gap, which influences how easily a material can host mobile charge carriers. This model reaches high accuracy on both tasks across a large dataset of about 15,000 compounds. However, like most deep networks, it behaves as a black box, offering little guidance about which structural features truly matter or how they combine to control the thermoelectric response.
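As a rough picture of this kind of baseline, the sketch below trains a one-hidden-layer perceptron in NumPy that maps a 128-number descriptor vector to two outputs, one per property. The layer sizes, learning rate, the choice of a single network with two outputs (rather than one network per property), and the random stand-in data are all assumptions for illustration; the study's actual architecture and training setup are not reproduced here.

```python
import numpy as np

# Assumed sizes: 128 input descriptors, 64 hidden units, 2 outputs (band gap, Seebeck).
N_FEATURES, N_HIDDEN, N_OUTPUTS = 128, 64, 2


def init_params(rng):
    """He-style initialisation for a one-hidden-layer ReLU network."""
    return {
        "W1": rng.normal(size=(N_FEATURES, N_HIDDEN)) * np.sqrt(2.0 / N_FEATURES),
        "b1": np.zeros(N_HIDDEN),
        "W2": rng.normal(size=(N_HIDDEN, N_OUTPUTS)) * np.sqrt(1.0 / N_HIDDEN),
        "b2": np.zeros(N_OUTPUTS),
    }


def forward(params, X):
    """Shared ReLU hidden layer followed by one linear output per property."""
    h_pre = X @ params["W1"] + params["b1"]
    h = np.maximum(h_pre, 0.0)
    return h_pre, h, h @ params["W2"] + params["b2"]


def mse(params, X, Y):
    """Mean squared error averaged over samples and both outputs."""
    return float(np.mean((forward(params, X)[2] - Y) ** 2))


def train(params, X, Y, lr=0.02, steps=500):
    """Full-batch gradient descent on the mean squared error of both outputs."""
    n = X.shape[0]
    for _ in range(steps):
        h_pre, h, out = forward(params, X)
        d_out = 2.0 * (out - Y) / (n * N_OUTPUTS)        # dLoss/dOutput
        d_hpre = (d_out @ params["W2"].T) * (h_pre > 0)  # back through the ReLU
        grads = {
            "W2": h.T @ d_out, "b2": d_out.sum(axis=0),
            "W1": X.T @ d_hpre, "b1": d_hpre.sum(axis=0),
        }
        for name, grad in grads.items():
            params[name] -= lr * grad
    return params


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Synthetic stand-ins: random "descriptors" and smooth made-up targets (not real data).
    X = rng.normal(size=(512, N_FEATURES))
    Y = np.tanh(X @ rng.normal(size=(N_FEATURES, N_OUTPUTS)) / np.sqrt(N_FEATURES))
    params = init_params(rng)
    print(f"training MSE before: {mse(params, X, Y):.3f}")
    train(params, X, Y)
    print(f"training MSE after:  {mse(params, X, Y):.3f}")
```

Every prediction passes through the shared hidden layer, so nothing in the trained weight matrices says directly which descriptors drive which property; that opacity is the limitation the rest of the study addresses.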

A Different Kind of Neural Network

The central idea of the paper is to replace opaque neural networks with Kolmogorov–Arnold Networks, or KANs. Instead of hiding all the complexity inside neuron activations, KANs attach flexible, one-dimensional curve-like functions to the connections between layers. Mathematically, these curves are smooth splines fitted to the data, and the whole model is built only from sums and compositions of such one-variable functions of the input descriptors. After training, the authors fit concise mathematical expressions, built from familiar functions like sines, cosines, and smooth saturation curves, to these learned splines. In this way, the model becomes an explicit symbolic formula linking structural descriptors to the band gap and Seebeck coefficient, rather than a tangle of opaque parameters. Although KANs are more computationally expensive to train, they achieve accuracy comparable to, and in some regimes better than, the multilayer perceptron and several other machine-learning baselines from the literature.
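To make the idea concrete, here is a simplified NumPy sketch of a single KAN-style layer, in which each connection carries its own learnable one-variable curve and each output is simply the sum of the curves feeding into it. It swaps the B-spline edges of the original KAN formulation for piecewise-linear interpolation on a fixed grid, and its symbolic step only fits an outer scale and offset, a*g(x) + b, against a small hypothetical library of candidate functions; fuller implementations typically also fit an inner scale and shift of x. Neither part is the study's code.

```python
import numpy as np


class PiecewiseLinearKANLayer:
    """One KAN-style layer: output_j = sum_i phi_ji(x_i).

    Each edge function phi_ji is stored as its values at fixed grid knots and
    evaluated by linear interpolation (np.interp holds the end values constant
    outside the grid). This is a simplified stand-in for B-spline edges.
    """

    def __init__(self, n_in, n_out, grid_min=-2.0, grid_max=2.0, n_knots=11, seed=0):
        rng = np.random.default_rng(seed)
        self.grid = np.linspace(grid_min, grid_max, n_knots)
        # coeffs[j, i, k] is the value of edge function phi_ji at knot k (trainable).
        self.coeffs = 0.1 * rng.normal(size=(n_out, n_in, n_knots))

    def edge(self, j, i, x):
        """Evaluate the single edge function phi_ji on an array of inputs."""
        return np.interp(x, self.grid, self.coeffs[j, i])

    def __call__(self, X):
        n_out, n_in, _ = self.coeffs.shape
        out = np.zeros((X.shape[0], n_out))
        for j in range(n_out):
            for i in range(n_in):
                out[:, j] += self.edge(j, i, X[:, i])  # no weights, no activation: just sums
        return out


# Hypothetical library of candidate closed forms for symbolic "snapping".
CANDIDATES = {
    "sin": np.sin,
    "cos": np.cos,
    "tanh": np.tanh,
    "x": lambda x: x,
    "x^2": lambda x: x**2,
}


def snap_to_symbolic(x, y, candidates=CANDIDATES):
    """Fit y ≈ a*g(x) + b for each candidate g by linear least squares.

    Returns (name, a, b, r2) for the candidate with the highest R^2.
    """
    ss_tot = np.sum((y - y.mean()) ** 2)
    best = None
    for name, g in candidates.items():
        A = np.column_stack([g(x), np.ones_like(x)])
        coef, *_ = np.linalg.lstsq(A, y, rcond=None)
        r2 = 1.0 - np.sum((y - A @ coef) ** 2) / ss_tot
        if best is None or r2 > best[3]:
            best = (name, float(coef[0]), float(coef[1]), float(r2))
    return best


if __name__ == "__main__":
    layer = PiecewiseLinearKANLayer(n_in=3, n_out=2, grid_min=-4.0, grid_max=4.0, n_knots=21)
    # Pretend training shaped edge (0, 0) into a sine-like curve.
    layer.coeffs[0, 0] = 1.5 * np.sin(layer.grid) + 0.3
    X = np.random.default_rng(1).uniform(-4.0, 4.0, size=(5, 3))
    print(layer(X).shape)  # (5, 2)
    xs = np.linspace(-4.0, 4.0, 200)
    print(snap_to_symbolic(xs, layer.edge(0, 0, xs)))
```

In the demonstration, one edge is set by hand to a sine-shaped curve standing in for a trained spline; the snapping step picks sin with a fitted scale close to 1.5 and an offset close to 0.3, turning that edge into an explicit term of a formula.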

What the Model Learns About Materials

By examining the KAN’s internal structure, the authors show that only a subset of the 128 structural descriptors strongly influences each property. They compute attribution scores to identify the most important descriptors and then prune weak connections, leaving a sparse, easier-to-interpret network that still predicts well. Using pairs of top-ranked descriptors, they build two-dimensional maps that show how the predicted band gap or Seebeck coefficient varies across descriptor space. These maps reveal smooth, cooperative effects, where oscillatory and saturating trends combine, rather than simple one-to-one rules. The model's largest errors occur in chemically complex systems with highly directional bonding or strongly interacting d- and f-electrons, but even there KANs produce more stable and physically sensible predictions than the black-box model, with fewer unphysical negative band gaps and fewer Seebeck coefficients of the wrong sign.
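The attribution-and-pruning workflow can be illustrated on a toy additive model with five hypothetical descriptors whose edge functions are invented. Scoring each edge by the spread (standard deviation) of its activation across the data is one simple choice of attribution score, assumed here; the study's exact attribution method may differ. Descriptors scoring below 10 percent of the top score (an arbitrary threshold) are pruned, and the pruned model is then evaluated on a grid over the two highest-ranked descriptors to produce a two-dimensional map.

```python
import numpy as np

# Invented edge functions for a one-layer additive toy model: y = sum_i phi_i(x_i).
# Descriptors 0 and 1 matter; 2 and 3 are weak; 4 is switched off.
EDGE_FUNCTIONS = [
    lambda x: 0.8 * np.sin(2.0 * x),  # oscillatory trend
    lambda x: 1.2 * np.tanh(x),       # saturating trend
    lambda x: 0.05 * x,
    lambda x: 0.02 * np.cos(x),
    lambda x: 0.0 * x,
]


def attribution_scores(X):
    """Spread (standard deviation) of each edge's activation over the data set."""
    acts = np.column_stack([phi(X[:, i]) for i, phi in enumerate(EDGE_FUNCTIONS)])
    return acts.std(axis=0)


def prune(scores, rel_threshold=0.1):
    """Indices of descriptors scoring at least rel_threshold times the top score."""
    return [i for i, s in enumerate(scores) if s >= rel_threshold * scores.max()]


def prediction_map(X, kept, i, j, n_grid=50):
    """Prediction of the pruned model on a grid over descriptors i and j.

    Other kept descriptors are fixed at their median; pruned ones are dropped.
    """
    gi = np.linspace(X[:, i].min(), X[:, i].max(), n_grid)
    gj = np.linspace(X[:, j].min(), X[:, j].max(), n_grid)
    GI, GJ = np.meshgrid(gi, gj, indexing="ij")
    medians = np.median(X, axis=0)
    Z = np.zeros_like(GI)
    for k in kept:
        if k == i:
            Z += EDGE_FUNCTIONS[k](GI)
        elif k == j:
            Z += EDGE_FUNCTIONS[k](GJ)
        else:
            Z += EDGE_FUNCTIONS[k](medians[k])
    return gi, gj, Z


if __name__ == "__main__":
    X = np.random.default_rng(0).normal(size=(2000, len(EDGE_FUNCTIONS)))
    scores = attribution_scores(X)
    kept = prune(scores)
    top_two = np.argsort(scores)[::-1][:2]
    print("scores:", np.round(scores, 3))
    print("kept descriptors:", kept)
    gi, gj, Z = prediction_map(X, kept, int(top_two[0]), int(top_two[1]))
    print("map shape:", Z.shape, "range:", round(float(Z.min()), 2), round(float(Z.max()), 2))
```

Because this toy model is a single additive layer, its map is just the sum of one oscillatory and one saturating curve. In a deeper KAN, edge functions are nested inside one another, which is what allows two descriptors to interact in the cooperative way the maps in the study reveal.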

From Prediction to Guided Design

Because KANs output explicit, smooth formulas rather than just numerical predictions, they can be used to explore how changing underlying descriptors would shift thermoelectric performance. While these descriptors are still abstract quantities derived from a deep structural encoder, they correlate with real features of atomic arrangements and bonding. This means researchers can use the analytic KAN model as a kind of map: by scanning it for regions associated with high Seebeck coefficient and suitable band gap, they can narrow down promising materials, then link those descriptor patterns back to candidate crystal structures in large databases or in generative design tools.
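Screening with an analytic surrogate can be sketched as a simple grid scan followed by a nearest-neighbour lookup. The two closed-form functions below are invented stand-ins for fitted KAN formulas, written in terms of two hypothetical descriptors d1 and d2; the targets (band gap between 0.5 and 1.5 eV, Seebeck coefficient of at least 180 µV/K) and the three-row descriptor table standing in for a materials database are likewise assumptions. Linking a real database would require descriptor vectors for its entries, for example from the same structural encoder.

```python
import numpy as np


# Invented closed-form surrogates standing in for fitted KAN formulas (not the study's).
def band_gap_ev(d1, d2):
    return 1.0 + 0.8 * np.tanh(d1) + 0.3 * np.sin(2.0 * d2)


def seebeck_uv_per_k(d1, d2):
    return 150.0 + 60.0 * np.sin(d1) * np.tanh(d2)


def scan_design_space(gap_window=(0.5, 1.5), min_seebeck=180.0, lo=-3.0, hi=3.0, n_grid=101):
    """Return (d1, d2) grid points whose predicted properties meet both targets."""
    axis = np.linspace(lo, hi, n_grid)
    d1, d2 = np.meshgrid(axis, axis, indexing="ij")
    gap = band_gap_ev(d1, d2)
    ok = (gap >= gap_window[0]) & (gap <= gap_window[1]) & (seebeck_uv_per_k(d1, d2) >= min_seebeck)
    return np.column_stack([d1[ok], d2[ok]])


def nearest_candidates(targets, database, k=1):
    """Indices of the k database rows closest (Euclidean distance) to any target point."""
    dists = np.linalg.norm(database[:, None, :] - targets[None, :, :], axis=-1).min(axis=1)
    return np.argsort(dists)[:k]


if __name__ == "__main__":
    targets = scan_design_space()
    print(f"{len(targets)} grid points meet both targets")
    # Hypothetical descriptor table: one row per database compound.
    database = np.array([[1.57, 2.30], [0.0, 0.0], [-3.0, 3.0]])
    print("closest compound index:", nearest_candidates(targets, database))
```

Here the scan flags descriptor combinations that meet both targets, and the lookup returns the database entry lying inside that region, the kind of shortlist that would then go on to detailed simulation or synthesis.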

What This Means for Future Materials

For a lay reader, the key message is that this work brings us closer to "glass box" artificial intelligence for materials discovery. Kolmogorov–Arnold Networks perform nearly as well as the best black-box predictors, but they also lay out, in mathematical form, how structural features of a crystal are tied to its ability to turn heat into electricity. That combination of speed, accuracy, and interpretability can help scientists make informed choices about which new compounds to synthesize and test, potentially shortening the path from raw data to real-world thermoelectric devices that harvest waste heat and improve energy efficiency.

Citation: Fronzi, M., Ford, M.J., Nayal, K.S. et al. Interpretable machine learning for thermoelectric materials design with Kolmogorov–Arnold networks. Sci Rep 16, 14146 (2026). https://doi.org/10.1038/s41598-026-44723-x

Keywords: thermoelectric materials, interpretable machine learning, Kolmogorov–Arnold networks, materials design, Seebeck coefficient