Clear Sky Science

Hair and artifact removal in dermoscopic images using deep learning for enhanced skin cancer detection

Clearer skin images to save lives

Spotting dangerous skin cancers early can mean the difference between a simple outpatient procedure and life‑threatening disease. Doctors increasingly rely on close‑up skin photographs, called dermoscopic images, to see subtle color patterns and borders in moles. But in real life these pictures are often cluttered with hair, glare from lights, and shadows. This study shows how a modern artificial‑intelligence system can automatically "clean" those images, removing visual clutter while preserving the fine details doctors and diagnostic software need to catch melanoma sooner.

The problem with messy skin photos

Although melanoma is less common than other skin cancers, it is far more deadly, so early and accurate diagnosis is critical. Dermoscopy lets clinicians see structures beneath the skin surface, but the images are rarely pristine. Hairs crossing the field of view, uneven lighting, and reflections from the skin or camera lens can blur lesion borders and hide pigment patterns. Traditional clean‑up methods rely on hand‑crafted rules and filters. They often work only on specific types of hair or lighting and may accidentally erase parts of the lesion itself. At the same time, many computer‑aided skin cancer systems quietly assume that the input images are already clean, which is seldom true in busy clinics.

Teaching a network what to remove and what to keep

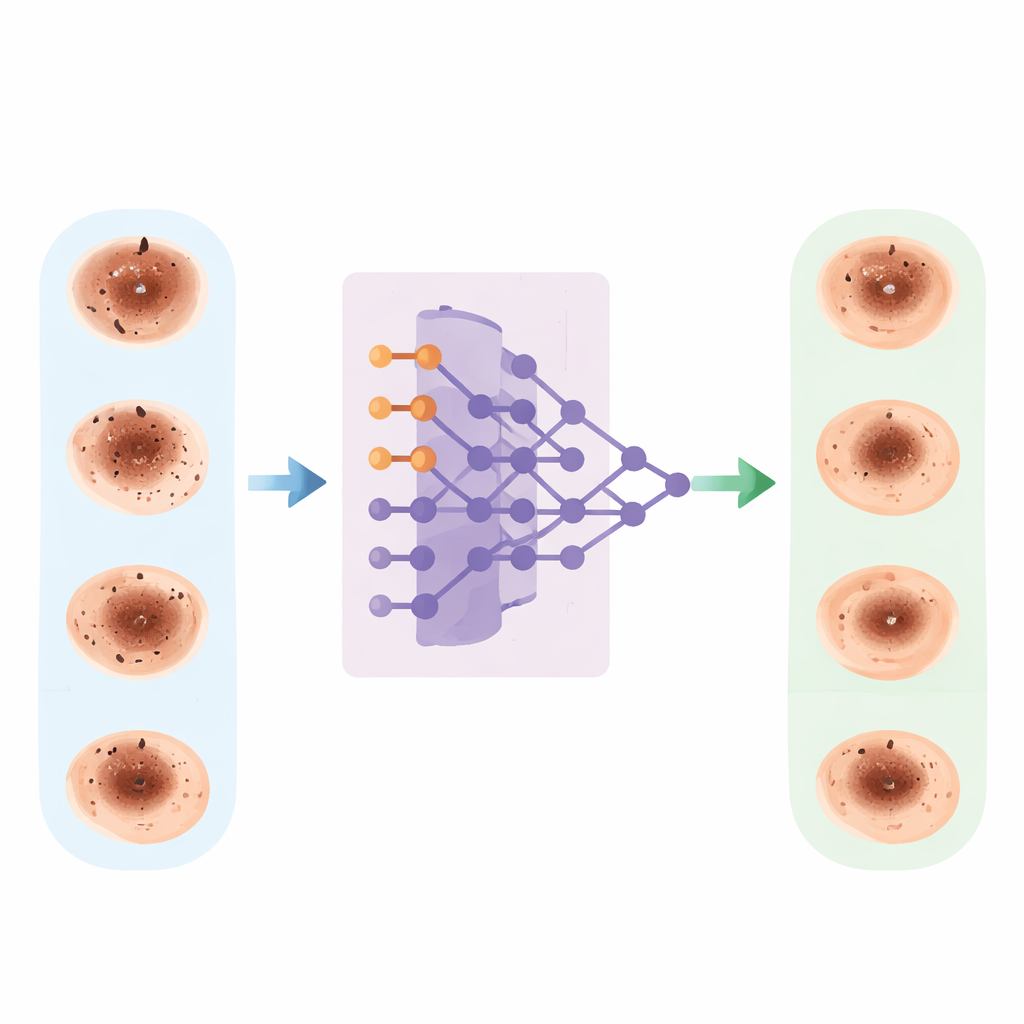

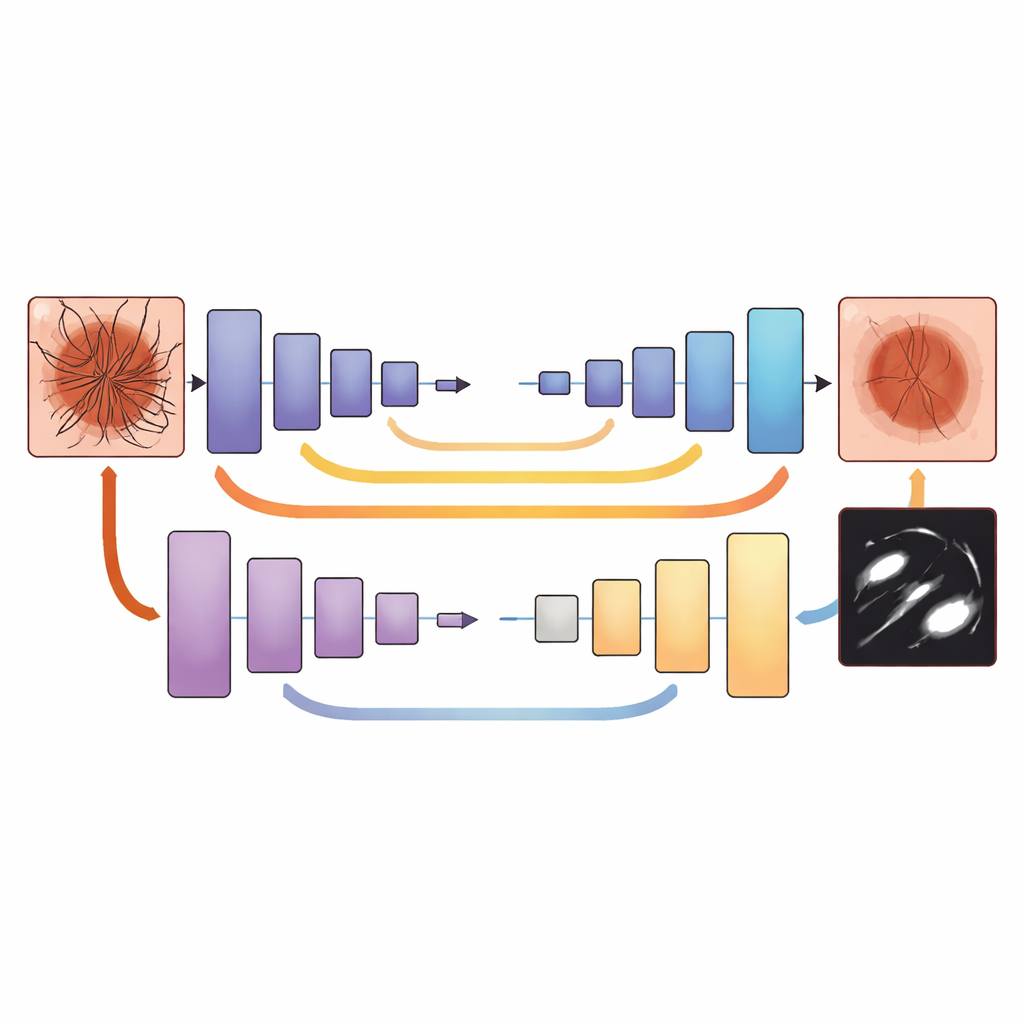

The researchers tackled this problem by training a deep learning model known as U‑Net to separate unwanted artifacts from the actual skin lesion at the level of individual pixels. They used two large, publicly available image collections of moles and other spots on the skin, together covering more than 20,000 dermoscopic images with both benign and cancerous lesions. Each image was carefully preprocessed: colors were normalized so pictures taken with different cameras or lighting became more comparable, and the dataset was expanded with flipped, rotated, and slightly recolored versions to mimic the variety seen in real practice.
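The preprocessing described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the summary does not say which color-normalization method the authors used, so a simple gray-world correction stands in for it, and the function names, the 90-degree rotation, and the 5% recoloring range are all hypothetical choices.

```python
import numpy as np

def gray_world_normalize(img: np.ndarray) -> np.ndarray:
    """Scale each RGB channel so its mean equals the overall mean.

    Gray-world correction is an assumed stand-in for the study's
    (unspecified) color normalization.
    """
    img = img.astype(np.float64)
    channel_means = img.reshape(-1, 3).mean(axis=0)
    target = channel_means.mean()
    scale = target / np.maximum(channel_means, 1e-8)
    return np.clip(img * scale, 0.0, 255.0)

def augment(img: np.ndarray, rng: np.random.Generator) -> list[np.ndarray]:
    """Return flipped, rotated, and slightly recolored copies of an H x W x 3 image."""
    variants = [
        np.flip(img, axis=1),             # horizontal flip
        np.flip(img, axis=0),             # vertical flip
        np.rot90(img, k=1, axes=(0, 1)),  # 90-degree rotation
    ]
    # Mild per-channel gain; the +/-5% range is an illustrative assumption.
    gains = rng.uniform(0.95, 1.05, size=3)
    variants.append(np.clip(img * gains, 0.0, 255.0))
    return variants

# Small synthetic image standing in for a dermoscopic photograph.
rng = np.random.default_rng(0)
image = rng.integers(60, 181, size=(4, 6, 3)).astype(np.float64)
normalized = gray_world_normalize(image)
augmented = augment(normalized, rng)
```

When training a segmentation network, any flip or rotation applied to an image would also have to be applied to its artifact mask so the two stay aligned; recoloring would be applied to the image only.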

Building precise “ground truth” for clutter

A key step was telling the network exactly which pixels were hair or other clutter and which belonged to the lesion or surrounding skin. Dermatology experts manually drew detailed masks on representative images, tracing thin hairs, ruler marks, glare spots, and shadows while avoiding important lesion structures. Classical image‑processing tools were then used to generate draft masks for many more images, which the experts reviewed and corrected. All images and masks were resized to a standard format so the network could learn consistent patterns. During training, a tailored loss function rewarded the model both for accurately outlining artifacts and for avoiding accidental damage to lesion details, especially very thin or small structures.
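The summary does not give the exact form of the tailored loss, so the following is a minimal sketch of one common way to build such a loss for thin structures: a Dice term (which is not swamped by the large number of background pixels) combined with a weighted binary cross-entropy term (which penalizes both missed artifact pixels and false alarms on lesion or skin pixels). The function names and the `alpha` and `pos_weight` values are assumptions for illustration, not the authors' settings.

```python
import numpy as np

def dice_loss(pred: np.ndarray, target: np.ndarray, eps: float = 1e-6) -> float:
    """1 - Dice overlap between predicted probabilities and a binary mask."""
    intersection = np.sum(pred * target)
    dice = (2.0 * intersection + eps) / (np.sum(pred) + np.sum(target) + eps)
    return float(1.0 - dice)

def weighted_bce(pred: np.ndarray, target: np.ndarray,
                 pos_weight: float = 5.0, eps: float = 1e-7) -> float:
    """Binary cross-entropy with extra weight on the rare artifact pixels."""
    pred = np.clip(pred, eps, 1.0 - eps)
    per_pixel = -(pos_weight * target * np.log(pred)
                  + (1.0 - target) * np.log(1.0 - pred))
    return float(per_pixel.mean())

def combined_loss(pred: np.ndarray, target: np.ndarray,
                  alpha: float = 0.5, pos_weight: float = 5.0) -> float:
    """Blend of Dice and weighted BCE; alpha and pos_weight are hypothetical."""
    return (alpha * dice_loss(pred, target)
            + (1.0 - alpha) * weighted_bce(pred, target, pos_weight))

# A one-pixel-wide vertical "hair" in an 8 x 8 mask.
target = np.zeros((8, 8))
target[:, 3] = 1.0
perfect = target.copy()          # prediction that matches the mask exactly
blank = np.zeros_like(target)    # prediction that misses the hair entirely

loss_perfect = combined_loss(perfect, target)
loss_blank = combined_loss(blank, target)
```

In this toy case the blank prediction is right on most pixels yet still receives a large loss, which is the behavior one wants when the target structures are only a few pixels wide.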

Sharper pictures and better cancer detection

Once trained, the system was tested on images it had not seen before. Measures of image quality improved markedly: the cleaned images were closer to ideal reference images, and their structure was better preserved. The network also learned to mark hairs and other artifacts with high precision, even when they were dense or irregular. To see whether this mattered clinically, the authors fed both the original and the cleaned images into an existing melanoma‑detection AI. With cleaned inputs, that classifier’s accuracy rose from about 84% to around 90–92%, and its F1‑score, a measure that balances missed cancers against false alarms, improved by nearly 10 percentage points. The model’s confidence in its decisions also increased, especially in difficult cases where clutter had previously obscured lesion borders.
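For readers unfamiliar with the F1-score, the short example below shows how it is computed from a classifier's counts of correct and incorrect decisions, and why it can differ noticeably from accuracy when melanomas are a minority of cases. The counts are made up for illustration and are not results from the study.

```python
def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> dict[str, float]:
    """Compute accuracy, precision, recall, and F1 from confusion-matrix counts."""
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}

# Hypothetical counts: 50 melanomas among 200 lesions.
metrics = classification_metrics(tp=30, fp=10, fn=20, tn=140)
```

With these invented counts, accuracy is 0.85 but F1 is only about 0.67, because 20 of the 50 melanomas were missed. This is why an improvement in F1 says more about cancer detection than accuracy alone.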

What this means for future skin checks

This work demonstrates that removing hair and other visual clutter is not just cosmetic editing, but a crucial first step for dependable computer‑aided skin cancer diagnosis. By embedding an artifact‑removal network in front of existing classifiers, clinics and telemedicine services could obtain clearer, more standardized dermoscopic images in real time, without adding work for doctors. The approach still needs testing on even more diverse skin tones, cameras, and clinic settings, but it offers a practical path toward more reliable AI‑assisted melanoma screening and, ultimately, earlier treatment for patients.
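The idea of placing an artifact-removal step in front of an existing classifier can be sketched as simple function composition. Everything named here is hypothetical: `toy_remover` and `toy_classifier` are crude placeholders that only show where a trained U-Net and a real melanoma classifier would plug in.

```python
from typing import Callable
import numpy as np

Image = np.ndarray
ArtifactRemover = Callable[[Image], Image]
Classifier = Callable[[Image], float]

def make_pipeline(remove_artifacts: ArtifactRemover,
                  classify: Classifier) -> Classifier:
    """Return a classifier that cleans each image before scoring it."""
    def pipeline(image: Image) -> float:
        return classify(remove_artifacts(image))
    return pipeline

def toy_remover(image: Image) -> Image:
    """Placeholder: replace very dark pixels (a crude proxy for hair)
    with the median of the remaining pixels. Not a trained model."""
    cleaned = image.copy()
    dark = cleaned < 40
    if dark.any() and (~dark).any():
        cleaned[dark] = np.median(cleaned[~dark])
    return cleaned

def toy_classifier(image: Image) -> float:
    """Placeholder score: mean intensity scaled to [0, 1]. Not a real classifier."""
    return float(image.mean() / 255.0)

# Uniform grayscale "skin" with one dark row standing in for a hair.
image = np.full((4, 4), 200.0)
image[1, :] = 10.0

cleaned_score = make_pipeline(toy_remover, toy_classifier)(image)
raw_score = toy_classifier(image)
```

Because the cleaning step is a separate function with the same input and output type, the downstream classifier would not need to be retrained or modified to benefit from it, which matches the deployment scenario described above.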

Citation: Hammouda, N.G., Shalaby, M., Alfilh, R.H.C. et al. Hair and artifact removal in dermoscopic images using deep learning for enhanced skin cancer detection. Sci Rep 16, 14452 (2026). https://doi.org/10.1038/s41598-026-44545-x

Keywords: melanoma, dermoscopy, deep learning, image preprocessing, skin cancer detection