Clear Sky Science

SCB-YOLO: a lightweight adaptive attention-enhanced network for student behavior detection in complex classroom settings

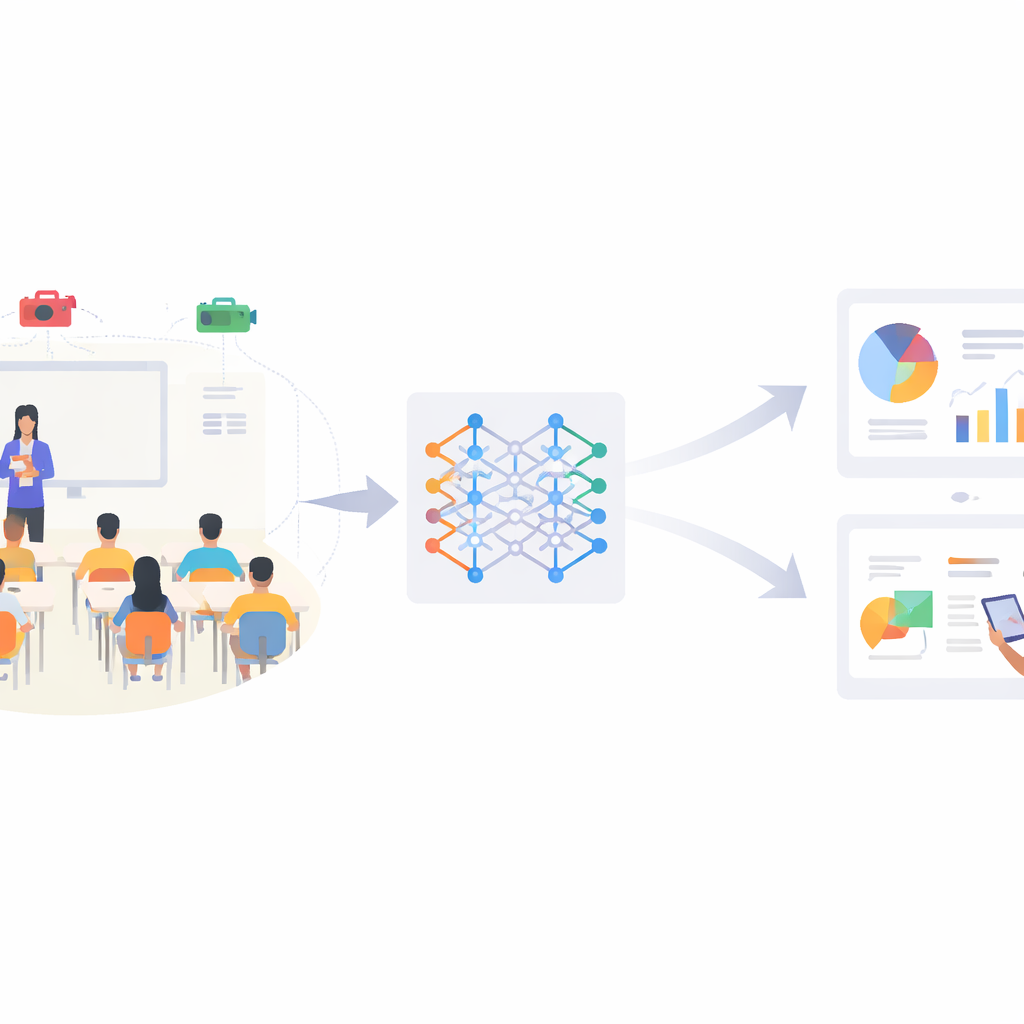

Watching the Classroom in a New Way

Teachers have always relied on their eyes and instincts to judge whether students are listening, reading, or quietly zoning out. But in today’s packed classrooms and data-driven schools, it is almost impossible for one person to track every child’s behavior in real time. This paper presents SCB-YOLO, a compact artificial-intelligence system that can automatically detect key student behaviors—such as raising a hand, reading, or writing—from ordinary classroom video, even under poor lighting, crowding, and visual clutter. The goal is not to replace teachers, but to give them a steady, objective stream of information about how students are engaging, opening the door to more personalized and responsive teaching.

Why Student Actions Matter

Simple classroom actions carry a surprising amount of information. Frequent hand-raising, steady reading, and focused writing are strongly linked to how well students learn and how engaged they feel. Traditionally, teachers or observers tried to record these behaviors by hand, a process that is slow, subjective, and hard to scale beyond a few lessons. Early attempts to automate this used wearable sensors or special hardware in the room, but these devices were intrusive, costly, and raised privacy concerns. In contrast, modern computer vision can work from ordinary video streams already found in many schools, turning raw pixels into a record of how students behave without disrupting the class.

From Raw Video to Recognized Behavior

SCB-YOLO builds on a popular family of vision models known as YOLO, which can spot and locate objects in an image in a single fast pass. The authors adapt the lightweight YOLOv11n variant and reshape it specifically for elementary school classrooms, where lighting is uneven, desks and walls are cluttered, and students often block one another from view. Their dataset, SCB-Dataset3-S, contains more than 5,000 real classroom images labeled with three core behaviors: hand-raising, reading, and writing. These categories were chosen because they are both educationally important and visually challenging—especially distinguishing writing from reading, which can differ only by subtle changes in hand and head position.
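As a rough illustration of what a detector's output looks like once a frame has been processed, the sketch below tallies the three behaviors from a list of detections. The `Detection` record, the `tally_behaviors` helper, and the 0.5 confidence cutoff are hypothetical names and values chosen for this example. They are not taken from the paper or from any YOLO library.

```python
from collections import Counter
from dataclasses import dataclass

# The three behavior classes named in the article.
BEHAVIORS = ("hand-raising", "reading", "writing")


@dataclass(frozen=True)
class Detection:
    """One detected behavior (hypothetical record layout for illustration)."""
    label: str          # one of BEHAVIORS
    confidence: float   # detector score in [0, 1]
    box: tuple          # (x1, y1, x2, y2) in pixels


def tally_behaviors(detections, min_confidence=0.5):
    """Count confident detections per behavior; the cutoff is an assumed value."""
    counts = Counter({behavior: 0 for behavior in BEHAVIORS})
    for det in detections:
        if det.label in BEHAVIORS and det.confidence >= min_confidence:
            counts[det.label] += 1
    return dict(counts)


# Made-up detections for a single frame.
frame = [
    Detection("reading", 0.91, (40, 60, 120, 180)),
    Detection("reading", 0.77, (200, 58, 280, 175)),
    Detection("hand-raising", 0.84, (330, 20, 400, 170)),
    Detection("writing", 0.32, (450, 70, 520, 190)),  # below the cutoff, ignored
]
summary = tally_behaviors(frame)
```

A per-frame summary like this is the kind of record that could later be aggregated over a lesson, though how the authors post-process detections is not described here.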

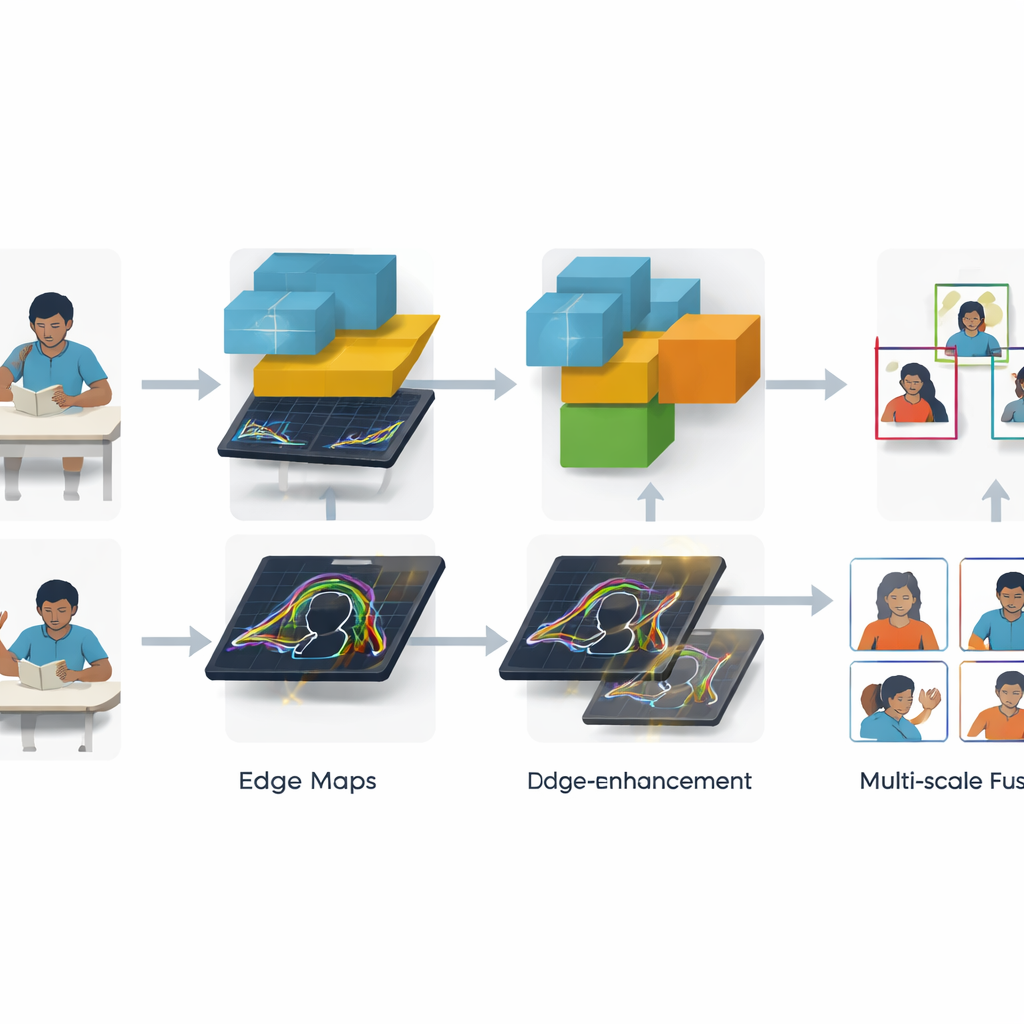

Sharpening Edges and Blending Scales

Two key innovations help SCB-YOLO cope with messy real-world scenes. First, a Global Edge Information Transfer module concentrates on outlines and contours, such as the border of an arm held up in the air or the edge between a hand and a notebook. It applies classic edge filters to early network features rather than to the raw image, then feeds these refined edges into deeper layers. As a result, the system becomes better at drawing tight boxes around behaviors like hand-raising and writing, even when students are small or partly hidden. Second, a new MANet_Star fusion module combines information from different image scales more intelligently. It sends features through several lightweight branches that mimic attention, boosting the most informative patterns while keeping the overall model compact enough for real-time use.
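To give a feel for what an edge filter does to a feature map, here is a minimal sketch that applies a Sobel operator to a small 2D grid. Sobel is assumed here as a representative "classic edge filter"; the article does not say which filter the authors use. The real module works on learned multi-channel features inside the network, not on a toy grid like this one.

```python
# Sobel kernels: an assumed stand-in for the paper's "classic edge filters".
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1],
           [0, 0, 0],
           [1, 2, 1]]


def correlate3x3(feature_map, kernel):
    """Slide a 3x3 kernel over a 2D grid without padding."""
    rows, cols = len(feature_map), len(feature_map[0])
    out = []
    for r in range(rows - 2):
        row = []
        for c in range(cols - 2):
            acc = 0.0
            for i in range(3):
                for j in range(3):
                    acc += kernel[i][j] * feature_map[r + i][c + j]
            row.append(acc)
        out.append(row)
    return out


def edge_magnitude(feature_map):
    """Combine horizontal and vertical responses into one edge-strength grid."""
    gx = correlate3x3(feature_map, SOBEL_X)
    gy = correlate3x3(feature_map, SOBEL_Y)
    return [
        [(x * x + y * y) ** 0.5 for x, y in zip(row_x, row_y)]
        for row_x, row_y in zip(gx, gy)
    ]


# Toy "feature map": flat on the left, flat on the right, one vertical boundary.
toy_features = [[0, 0, 0, 1, 1, 1] for _ in range(3)]
edges = edge_magnitude(toy_features)
```

The response is zero in the flat regions and large only where the values change, which is the property that makes edge maps useful for tightening a box around an arm or a notebook.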

How Well the System Works

On the SCB-Dataset3-S benchmark, SCB-YOLO outperforms a wide range of other streamlined YOLO models. It improves a standard accuracy measure (mAP@0.5) by 2.6 percentage points over its YOLOv11n starting point, reaching 71.8 percent while still operating at video speeds. The gains are especially large for the hardest case—writing—where accuracy jumps more than in any other category and confusion with reading is sharply reduced. Visual analyses of the network’s internal heat maps show that, compared with the baseline, SCB-YOLO focuses more precisely on books, hands, and heads, particularly for small or distant students. Tests on devices ranging from a powerful desktop graphics card to a compact Jetson edge module show that the system can run comfortably above real-time rates in realistic deployments.
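The "@0.5" in mAP@0.5 refers to an overlap threshold. A predicted box counts as correct only if it carries the right label and its intersection-over-union (IoU) with a ground-truth box is at least 0.5. Average precision is then computed per class and averaged across classes. The sketch below shows only the overlap check. The function names and example boxes are illustrative and are not drawn from the paper's evaluation code. Taken together, the reported figures imply a baseline of roughly 69.2 percent (71.8 minus 2.6).

```python
def iou(box_a, box_b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def is_true_positive(pred_box, pred_label, gt_box, gt_label, threshold=0.5):
    """A prediction is a hit when the labels match and IoU meets the threshold."""
    return pred_label == gt_label and iou(pred_box, gt_box) >= threshold


# Made-up boxes for illustration.
ground_truth = (0, 0, 10, 10)
half_overlap = (0, 0, 10, 5)     # covers the top half of the ground truth
corner_overlap = (5, 5, 15, 15)  # touches only one quadrant

hit = is_true_positive(half_overlap, "writing", ground_truth, "writing")
miss = is_true_positive(corner_overlap, "writing", ground_truth, "writing")
wrong_label = is_true_positive(half_overlap, "reading", ground_truth, "writing")
```

The last case is the one the article highlights: a well-placed box still counts as an error if a writing student is labeled as reading.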

What This Means for Future Classrooms

For non-specialists, the main takeaway is that it is now feasible to build classroom cameras that do more than record—they can understand, in a basic way, what students are doing and how engaged they seem. SCB-YOLO shows that with carefully designed modules that sharpen edges and blend information across scales, a relatively small AI model can reliably spot key learning behaviors in crowded, imperfect conditions. In the near future, such systems could feed into learning analytics and tutoring platforms, alerting teachers when attention wanes, highlighting which lessons lose students, and supporting more tailored instruction. Used responsibly and with strong privacy safeguards, this technology could become a quiet but powerful ally in helping every child get the attention they need.

Citation: Guo, C., Yuan, B., Xie, J. et al. SCB-YOLO: a lightweight adaptive attention-enhanced network for student behavior detection in complex classroom settings. Sci Rep 16, 13309 (2026). https://doi.org/10.1038/s41598-026-43753-9

Keywords: smart classroom, student engagement, computer vision, behavior detection, lightweight deep learning