Clear Sky Science

AI-enabled RF data synthesis for breast ultrasound: efficacy in quantitative ultrasound tissue characterization

Why This Matters for Breast Cancer Care

Breast cancer screening often relies on ultrasound images that look like grainy black‑and‑white clouds. Hidden behind those images are rich raw signals that could reveal far more about whether a lump is harmless or dangerous, but these signals are usually thrown away because they are too large to store. This study explores whether artificial intelligence can recreate those lost signals from ordinary ultrasound pictures, opening the door to more accurate, affordable breast cancer assessment without changing the scanners already used in clinics.

From Simple Pictures to Hidden Signal Details

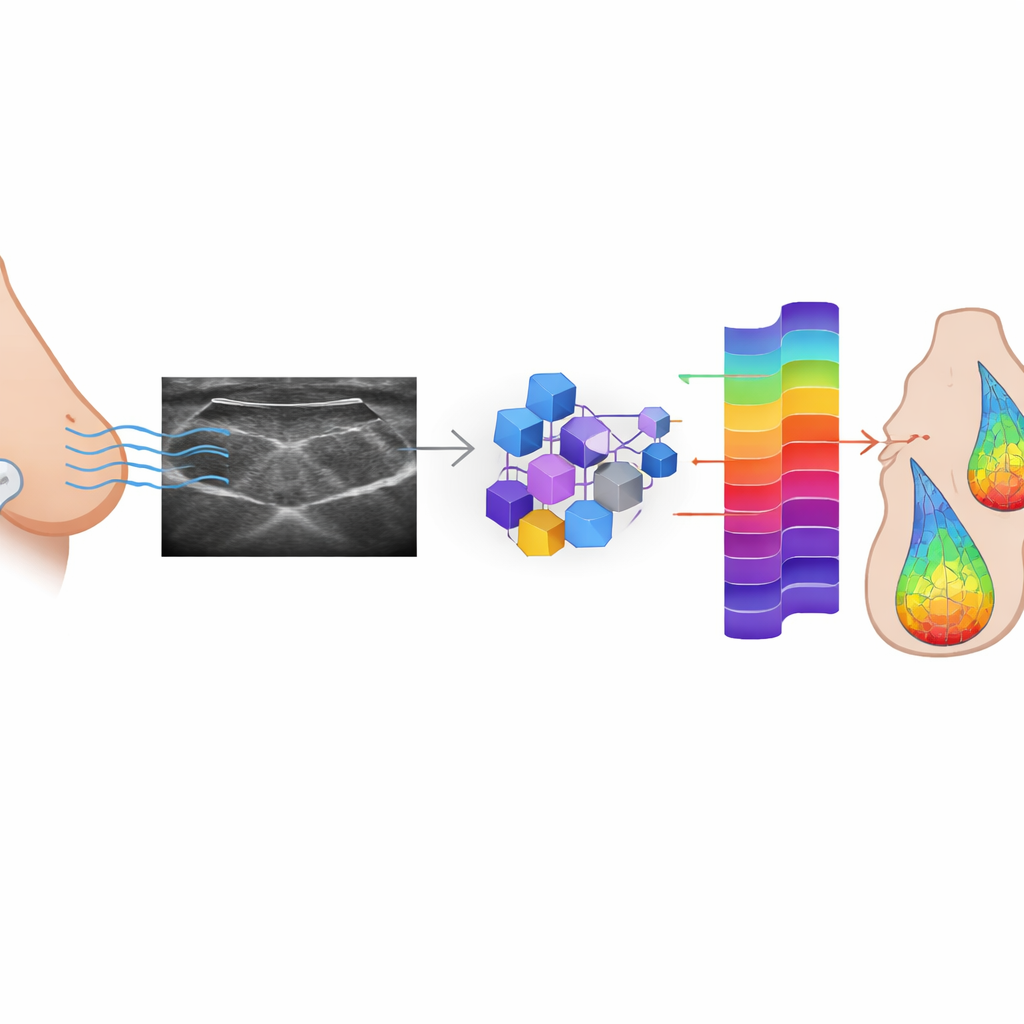

Today’s breast ultrasound exams typically use so‑called B‑mode images: grayscale slices that show the shape and overall brightness of tissue. Before these images appear on the screen, the machine first records raw echo signals, known as radiofrequency (RF) data. Quantitative ultrasound (QUS) techniques can analyze these raw signals to estimate properties related to the size, arrangement, and concentration of tiny structures inside tissue. Prior research has shown that such quantitative maps can help distinguish benign from malignant lesions and even track how tumors respond to chemotherapy. The problem is that RF data are huge, difficult to store and transmit, and often inaccessible on commercial scanners, which has kept QUS largely confined to research labs.
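To see why a saved B‑mode image holds less information than the RF data behind it, it helps to sketch the conventional conversion. The Python example below is a minimal illustration, assuming the textbook chain of envelope detection followed by log compression; the function names, the 60 dB dynamic range, and the toy tone burst are assumptions for illustration and are not taken from the study or from any particular scanner.

```python
import numpy as np

def analytic_signal(rf):
    """Analytic signal along axis 0 (depth) for a 2-D frame, via the FFT method."""
    n = rf.shape[0]
    spectrum = np.fft.fft(rf, axis=0)
    # Keep DC (and Nyquist for even n), double positive frequencies, zero negatives.
    h = np.zeros(n)
    if n % 2 == 0:
        h[0] = h[n // 2] = 1.0
        h[1:n // 2] = 2.0
    else:
        h[0] = 1.0
        h[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(spectrum * h[:, None], axis=0)

def rf_to_bmode(rf, dynamic_range_db=60.0):
    """Envelope-detect and log-compress an RF frame of shape (depth, scan lines)."""
    envelope = np.abs(analytic_signal(rf))      # the oscillating carrier and its phase are dropped here
    envelope = envelope / envelope.max()        # normalise so the brightest echo sits at 0 dB
    bmode_db = 20.0 * np.log10(envelope + 1e-12)
    return np.clip(bmode_db, -dynamic_range_db, 0.0)  # assumed display dynamic range

# Toy RF frame: 256 depth samples x 4 scan lines, each a Gaussian-windowed tone burst.
depth = np.arange(256)
pulse = np.cos(2 * np.pi * 0.2 * depth) * np.exp(-((depth - 128) ** 2) / (2 * 15.0 ** 2))
rf = np.tile(pulse[:, None], (1, 4))
bmode = rf_to_bmode(rf)
print(bmode.shape, bmode.min(), bmode.max())
```

Taking the magnitude and compressing it cannot be undone exactly, which is why recovering RF data from a B‑mode image is a learning problem rather than a simple inversion.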

Teaching AI to Rebuild the Lost Signals

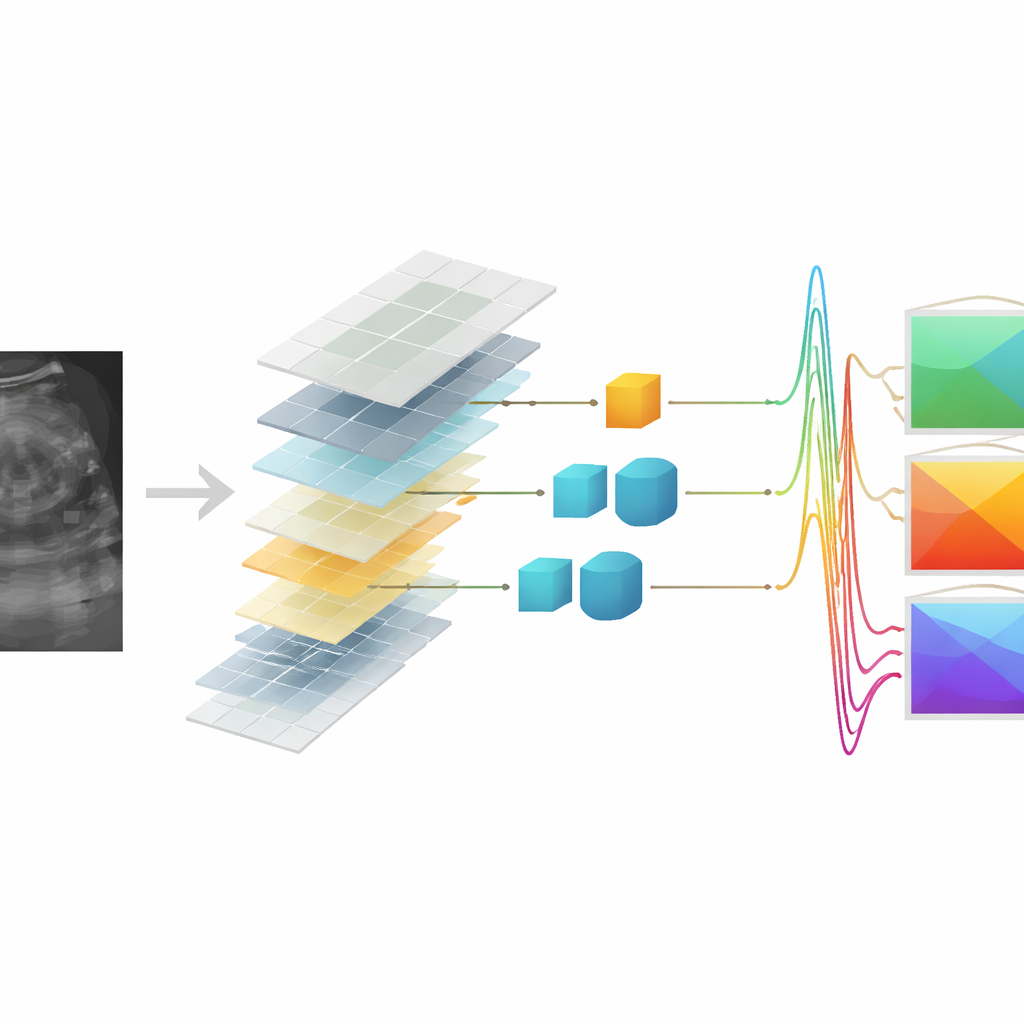

The researchers set out to see whether AI could learn to reconstruct RF data from the B‑mode images that are routinely saved. They trained three kinds of conditional generative adversarial networks (cGANs): a widely used Pix2Pix model and two versions based on vision transformers, one shallow and one deeper. All three were trained on a large dataset of paired RF and B‑mode frames from 152 patients with suspicious breast lesions. In this adversarial setup, a generator network tries to create realistic RF signals from each B‑mode image, while a discriminator network learns to tell real from synthetic signals. Over time, the generator improves until its outputs become hard to distinguish from the originals. The models were trained not only to fool the discriminator but also to keep each generated RF frame numerically close to its true counterpart.
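The two goals given to the generator, fooling the discriminator and staying numerically close to the true RF frame, are commonly combined into a single loss. The sketch below follows the Pix2Pix‑style formulation of an adversarial term plus a weighted L1 term. The function name `generator_loss` and the weight `lam=100.0` (the default from the original Pix2Pix paper) are assumptions; the summary does not state the exact loss or weighting used in the study.

```python
import numpy as np

def generator_loss(disc_logits_on_fake, fake_rf, real_rf, lam=100.0):
    """Pix2Pix-style generator objective (hypothetical helper, not the study's code).

    disc_logits_on_fake: raw discriminator scores for the generated RF frames.
    fake_rf, real_rf:    generated and ground-truth RF frames with the same shape.
    lam:                 assumed weight of the L1 term.
    """
    # Adversarial term: -log(sigmoid(logit)), written stably as softplus(-logit).
    # It is small when the discriminator scores the synthetic frames as real.
    adversarial = float(np.mean(np.logaddexp(0.0, -disc_logits_on_fake)))
    # Reconstruction term: mean absolute error between synthetic and true RF samples.
    reconstruction = float(np.mean(np.abs(fake_rf - real_rf)))
    return adversarial + lam * reconstruction, adversarial, reconstruction

# Toy check: an undecided discriminator (logit 0) and a constant 0.5 offset in the RF samples.
real = np.zeros((8, 8))
fake = real + 0.5
total, adversarial, reconstruction = generator_loss(np.zeros(4), fake, real)
print(total, adversarial, reconstruction)
```

With a large weight on the L1 term, the reconstruction error dominates early training, and the adversarial term mainly pushes the output toward realistic fine texture.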

Putting Synthetic Signals to the Test

To judge how well the AI‑generated RF data matched the originals, the team used several image‑quality scores and then asked a tougher question: can the synthesized signals support the same kind of lesion characterization as real RF data? On a held‑out test set of thousands of frames, all three models produced synthetic RF that was structurally very similar to the real signals and led to B‑mode reconstructions that closely preserved lesion shape and the speckled appearance of ultrasound. The deeper transformer cGAN achieved the best overall fidelity. When QUS maps were computed from the synthetic RF data, their numerical values and distribution patterns strongly resembled those derived from the original signals, suggesting that key tissue information had been retained.

Can Synthetic Data Still Spot Cancer?

The decisive test was whether these synthetic‑based QUS maps could help distinguish benign from malignant breast lesions. The authors built a classification pipeline that first selected the most informative QUS features and then trained a support vector machine to separate the two groups. Using only features from real RF data, the system correctly classified lesions with about 82% accuracy. When trained and tested purely on QUS features derived from synthetic RF, the deep transformer and Pix2Pix models reached about 81% accuracy, matching the original performance within statistical variation. In some scenarios, when a classifier trained on real‑data features was tested on synthetic features, the deep transformer model even slightly exceeded the baseline, underscoring the robustness of the learned mapping.
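The shape of that pipeline, including the cross‑test of a classifier trained on real‑data features and evaluated on synthetic ones, can be sketched in a few lines of Python. Everything here is a stand‑in: the data are random toy features rather than QUS measurements, Fisher‑score ranking replaces whatever selection method the authors used, and a linear SVM trained by subgradient descent replaces their (unspecified) support vector machine configuration.

```python
import numpy as np

def fisher_scores(X, y):
    """Per-feature Fisher score: squared class-mean gap over summed class variances."""
    m0, m1 = X[y == 0].mean(axis=0), X[y == 1].mean(axis=0)
    v0, v1 = X[y == 0].var(axis=0), X[y == 1].var(axis=0)
    return (m0 - m1) ** 2 / (v0 + v1 + 1e-12)

def train_linear_svm(X, y, reg=0.01, lr=0.1, epochs=200):
    """Full-batch subgradient descent on the L2-regularised hinge loss."""
    t = 2.0 * y - 1.0                      # map labels {0, 1} to {-1, +1}
    w, b = np.zeros(X.shape[1]), 0.0
    for _ in range(epochs):
        active = t * (X @ w + b) < 1.0     # samples inside the margin or misclassified
        w -= lr * (reg * w - (t[active, None] * X[active]).sum(axis=0) / len(y))
        b -= lr * (-t[active].sum() / len(y))
    return w, b

def accuracy(X, y, w, b):
    return float(np.mean((X @ w + b > 0).astype(int) == y))

# Toy data (NOT study data): 200 "lesions", 10 features, only the first two informative.
rng = np.random.default_rng(0)
n, n_features, n_train, k = 200, 10, 140, 2
y = rng.integers(0, 2, size=n)
X_real = rng.normal(size=(n, n_features))
X_real[:, :2] += 2.0 * y[:, None]
# Stand-in for features computed from synthesized RF: real features plus a small error.
X_syn = X_real + rng.normal(scale=0.1, size=X_real.shape)

# Fit scaling and feature selection on the training split only.
mu, sigma = X_real[:n_train].mean(axis=0), X_real[:n_train].std(axis=0)

def standardize(X):
    return (X - mu) / sigma

top = np.argsort(fisher_scores(standardize(X_real[:n_train]), y[:n_train]))[::-1][:k]
w, b = train_linear_svm(standardize(X_real[:n_train])[:, top], y[:n_train])

acc_real = accuracy(standardize(X_real[n_train:])[:, top], y[n_train:], w, b)
acc_syn = accuracy(standardize(X_syn[n_train:])[:, top], y[n_train:], w, b)
print(f"trained on real, tested on real features:      {acc_real:.2f}")
print(f"trained on real, tested on synthetic features: {acc_syn:.2f}")
```

Scaling and feature selection are fitted on the training split only, so the held‑out accuracies are not inflated by information from the test lesions.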

Looking Ahead to Smarter Scans

This work shows that AI can effectively “fill in” the missing raw ultrasound signals from standard images while preserving the key quantitative cues that help separate harmless from dangerous breast lesions. In practical terms, a hospital could one day run QUS analyses directly from routine B‑mode data, without needing to record or store massive RF datasets on every scanner. The study is an early proof of concept, limited to one type of ultrasound system and focused on breast lesions. Even so, it points toward a future in which existing ultrasound machines can be enhanced by software to deliver deeper, more objective insight into tissue health, potentially improving cancer diagnosis and treatment decisions without adding new hardware or invasive procedures.

Citation: Sheibani-Asl, N., Osapoetra, L.O., Czarnota, G.J. et al. AI-enabled RF data synthesis for breast ultrasound: efficacy in quantitative ultrasound tissue characterization. Sci Rep 16, 12544 (2026). https://doi.org/10.1038/s41598-026-43319-9

Keywords: breast ultrasound, artificial intelligence, quantitative ultrasound, medical image synthesis, cancer diagnosis