Clear Sky Science

A comprehensive evaluation of lightweight deep learning models for tomato disease classification on edge computing environments

Smarter Eyes for Tomato Plants

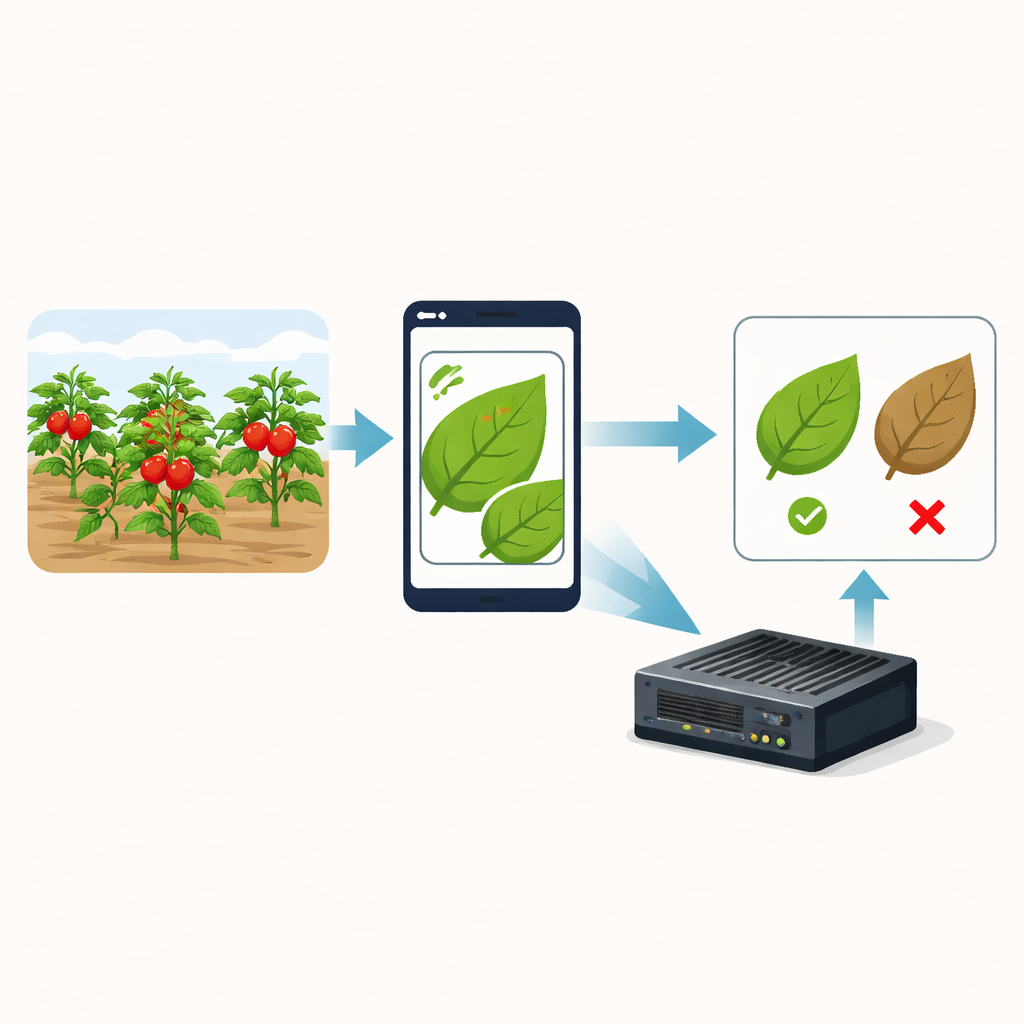

Tomato crops around the world lose a large share of their potential harvest to leaf diseases. Today, farmers can snap photos of leaves with a phone or field camera, letting artificial intelligence (AI) flag problems early. But there is a catch: many of the most accurate AI models are too heavy to run on low-cost devices in fields and greenhouses. This study asks a practical question: which compact AI models can both spot tomato diseases accurately and run fast on small, inexpensive hardware close to the plants themselves?

Why Tomato Leaf Photos Matter

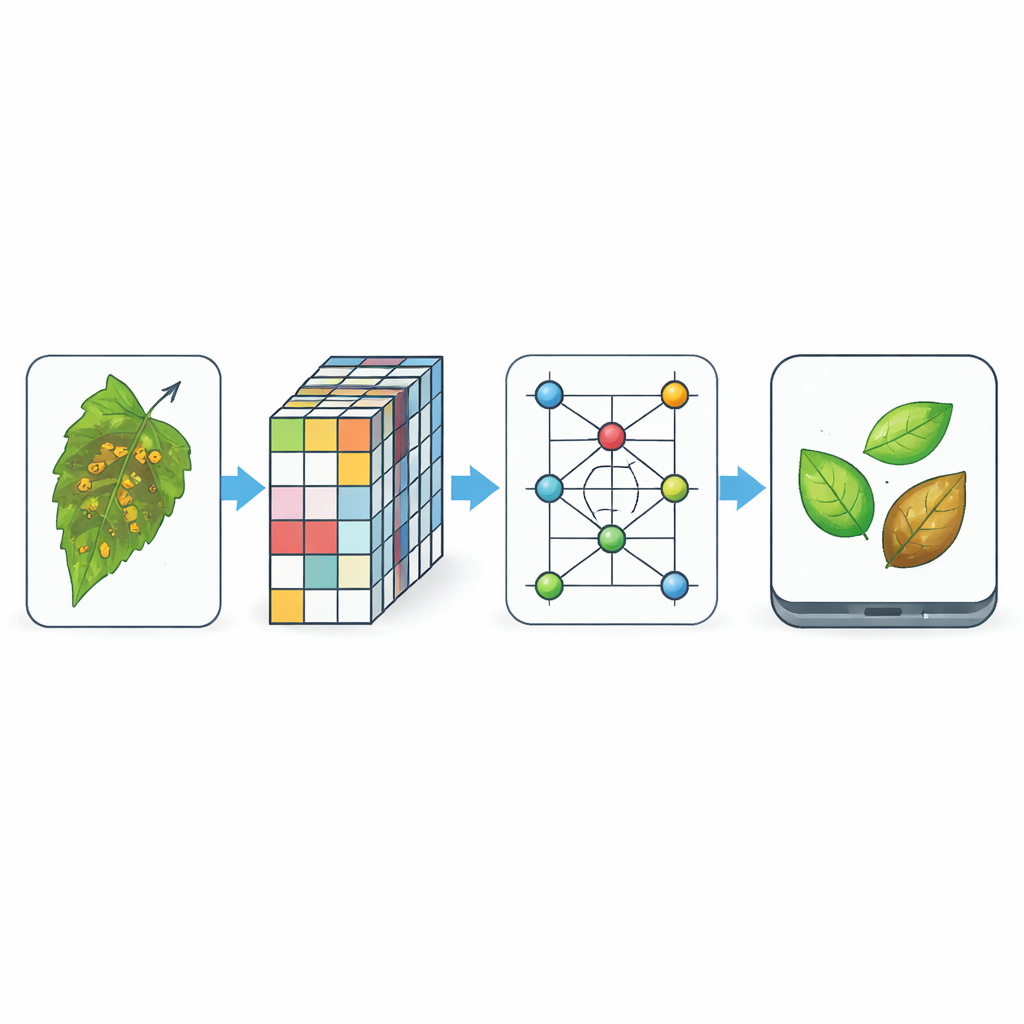

Tomato diseases often show up first as spots, molds, or discoloration on the leaves, and catching them early can prevent serious yield losses. The authors build on a popular public image collection called PlantVillage, focusing on over 18,000 photos of tomato leaves covering nine diseases plus healthy plants. All images are standardized and lightly altered during training to mimic real-world changes such as lighting, zoom, and small shifts in position. This lets the researchers test how well different AI models learn to recognize visual patterns that distinguish, for example, early blight from late blight, or a healthy leaf from one infected by a virus.
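The kind of on-the-fly alteration described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' pipeline. The helper name `augment`, the parameter ranges, the centered-crop zoom and the zero-filled shift are all assumptions chosen for clarity.

```python
import numpy as np

def augment(image, rng, max_brightness=0.2, max_zoom=0.15, max_shift=10):
    """Return a randomly altered copy of an H x W x C image with values in [0, 1].

    Hypothetical helper; the ranges are illustrative, and max_shift must be
    smaller than the image height and width.
    """
    h, w = image.shape[:2]

    # Lighting: scale every pixel by one random factor, then clip back into [0, 1].
    factor = 1.0 + rng.uniform(-max_brightness, max_brightness)
    out = np.clip(image * factor, 0.0, 1.0)

    # Zoom: crop a centered region of random size, then stretch it back to
    # H x W by nearest-neighbor indexing.
    scale = 1.0 - rng.uniform(0.0, max_zoom)
    ch, cw = max(1, int(h * scale)), max(1, int(w * scale))
    top, left = (h - ch) // 2, (w - cw) // 2
    crop = out[top:top + ch, left:left + cw]
    rows = np.arange(h) * ch // h   # output row -> source row in the crop
    cols = np.arange(w) * cw // w   # output column -> source column in the crop
    out = crop[rows][:, cols]

    # Shift: move the image by (dy, dx) pixels and fill the uncovered border with zeros.
    dy, dx = (int(v) for v in rng.integers(-max_shift, max_shift + 1, size=2))
    shifted = np.zeros_like(out)
    shifted[max(0, dy):h + min(0, dy), max(0, dx):w + min(0, dx)] = \
        out[max(0, -dy):h - max(0, dy), max(0, -dx):w - max(0, dx)]
    return shifted

rng = np.random.default_rng(0)
leaf = rng.random((224, 224, 3))     # stand-in for a normalized leaf photo
print(augment(leaf, rng).shape)      # (224, 224, 3)
```

Because a fresh random alteration is drawn every time an image is used, the model rarely sees exactly the same picture twice, which is what pushes it toward patterns that survive changes in lighting, framing and position.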

Comparing Big and Small Brain-Inspired Models

Rather than simply proposing yet another new model, the team sets up a fair race among seven deep-learning architectures, ranging from large classic networks to compact mobile designs. Three are classic image-recognition networks that have proven successful in many tasks: VGG16, ResNet50, and DenseNet121. Three others were created specifically to run well on phones and embedded devices: MobileNetV3-Small, ShuffleNetV2, and SqueezeNet. The final contender, MobilePlantViT, is a hybrid that combines two ideas: traditional convolutional layers that capture fine details in small regions of an image, and transformer-style attention that links distant parts of a leaf to understand the overall pattern of disease.
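To make the "two ideas" concrete, the toy sketch below applies a local 3×3 convolution to a small single-channel image, cuts the resulting map into patches, and lets every patch attend to every other patch with single-head scaled dot-product attention. It is not MobilePlantViT. The weights are random, there is one channel and one attention head, and the helpers `conv3x3`, `to_patches` and `self_attention` are hypothetical names used only for illustration.

```python
import numpy as np

def conv3x3(x, kernel):
    """Valid 3x3 convolution (cross-correlation, as deep-learning libraries compute it)
    of a single-channel H x W image: each output pixel sees only a 3x3 neighborhood."""
    h, w = x.shape
    out = np.zeros((h - 2, w - 2))
    for i in range(h - 2):
        for j in range(w - 2):
            out[i, j] = np.sum(x[i:i + 3, j:j + 3] * kernel)
    return out

def to_patches(fmap, p):
    """Cut an H x W map into non-overlapping p x p patches, each flattened into one token."""
    h, w = fmap.shape
    h, w = h - h % p, w - w % p                       # drop any ragged border
    blocks = fmap[:h, :w].reshape(h // p, p, w // p, p)
    return blocks.transpose(0, 2, 1, 3).reshape(-1, p * p)

def self_attention(tokens, wq, wk, wv):
    """Single-head scaled dot-product attention: every token can draw on every other token."""
    q, k, v = tokens @ wq, tokens @ wk, tokens @ wv
    scores = q @ k.T / np.sqrt(k.shape[1])
    scores -= scores.max(axis=1, keepdims=True)       # numerical stability before exp
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)     # each row now sums to 1
    return weights @ v, weights

rng = np.random.default_rng(0)
leaf = rng.random((18, 18))                           # toy single-channel "leaf" image
local = conv3x3(leaf, rng.standard_normal((3, 3)))    # 16 x 16 map of local detail
tokens = to_patches(local, 4)                         # 16 patches, 16 values each
d = tokens.shape[1]
wq, wk, wv = (rng.standard_normal((d, d)) for _ in range(3))
mixed, attn = self_attention(tokens, wq, wk, wv)
print(tokens.shape, mixed.shape, attn.shape)          # (16, 16) (16, 16) (16, 16)
```

Each row of `attn` says how strongly one patch draws on every other patch, including distant ones, whereas the convolution only ever looks at a 3×3 neighborhood. Real hybrid models stack many such layers with learned weights and many channels.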

Seeing Inside the AI’s Decisions

For farmers and agronomists to trust these models, accuracy is not enough: the models must also be understandable. The authors therefore use three popular “explainable AI” techniques, Grad-CAM, LIME, and SHAP, each of which creates a heat map over the leaf image showing which regions most influenced the decision. To move beyond simply eyeballing these colorful maps, the team introduces a new measure called the Perturbation Stability Score: they add a little random noise to an image many times and compare how much the resulting explanation maps change, which indicates how stable and reliable each explanation method is. Across models, SHAP tends to give the most stable explanations, while Grad-CAM produces clear, well-focused highlights of diseased areas that align well with human expectations.
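The summary does not give the exact formula for the Perturbation Stability Score, so the sketch below shows one plausible way such a score could be computed, assuming Gaussian pixel noise and Pearson correlation as the similarity between heat maps. The function `perturbation_stability`, its defaults, and the two toy explainers are hypothetical stand-ins, not the paper's definition and not Grad-CAM, LIME or SHAP themselves.

```python
import numpy as np

def perturbation_stability(explain, image, n_trials=20, noise_std=0.05, seed=0):
    """Hypothetical stability score for an explanation method (an assumption, not the paper's formula).

    `explain` maps an H x W x C image in [0, 1] to an H x W heat map. The score is the
    mean Pearson correlation between the heat map of the clean image and the heat maps
    of `n_trials` Gaussian-noised copies; 1.0 means the explanation did not change.
    Assumes the heat maps are not constant, since correlation is undefined then.
    """
    rng = np.random.default_rng(seed)
    base = explain(image).ravel()
    scores = []
    for _ in range(n_trials):
        noisy = np.clip(image + rng.normal(0.0, noise_std, image.shape), 0.0, 1.0)
        scores.append(np.corrcoef(base, explain(noisy).ravel())[0, 1])
    return float(np.mean(scores))

# Toy data: a smooth 32 x 32 RGB "leaf" and two stand-in explainers.
x = np.linspace(0.2, 0.8, 32)
leaf = np.repeat((np.add.outer(x, x) / 2)[:, :, None], 3, axis=2)

def steady(img):
    return img.mean(axis=2)                 # depends only on the image content

jitter_rng = np.random.default_rng(1)
def jittery(img):
    # adds its own random noise on every call, so repeated explanations disagree
    return img.mean(axis=2) + jitter_rng.normal(0.0, 0.2, img.shape[:2])

print(perturbation_stability(steady, leaf))   # high: the map barely changes
print(perturbation_stability(jittery, leaf))  # much lower: the map shifts on every call
```

Other similarity measures (rank correlation, overlap of the top-scoring pixels, or a normalized distance between maps) would fit the same loop; which one the authors chose is not stated here.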

Speed and Power on Real Devices

Because farm tools often rely on low-cost processors without dedicated graphics chips, the researchers measure how quickly each model runs on standard desktop CPUs and on a Raspberry Pi 5, a small, affordable computer comparable to the hardware inside smart cameras. They track model size, the number of basic math operations needed per image, and the time to process a single photo under different threading settings (that is, how many parallel threads the model may use). Standard networks like VGG16 deliver excellent accuracy but are huge and slow, while tiny models like SqueezeNet run quickly but lose some accuracy, especially on noisier images and under more challenging, field-like conditions. MobilePlantViT stands out: it exceeds 99% accuracy on clean images and remains competitive in more realistic tests, yet needs only a fraction of the memory and computation of the larger networks, achieving near real-time performance even on constrained hardware.
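The bookkeeping behind "model size" and "basic math operations" can be illustrated for a single convolutional layer, together with a simple single-image timing loop. This is a hedged sketch: the helper names `conv2d_cost` and `median_latency_ms` are hypothetical, the layer shape in the example is arbitrary, and counting multiply-accumulates (MACs) is an assumption about how operations are tallied (many papers report MACs under the label FLOPs, while others count roughly two FLOPs per MAC).

```python
import statistics
import time

def conv2d_cost(c_in, c_out, k, h_out, w_out, bytes_per_param=4):
    """Parameters, multiply-accumulates (MACs) and storage in MB (float32 by default)
    for one k x k convolutional layer with a bias term."""
    params = k * k * c_in * c_out + c_out          # weights plus one bias per output channel
    macs = k * k * c_in * c_out * h_out * w_out    # one MAC per weight per output position
    size_mb = params * bytes_per_param / 1e6
    return params, macs, size_mb

def median_latency_ms(predict, sample, warmup=5, runs=50):
    """Median wall-clock time in milliseconds for a single-sample call to `predict`."""
    for _ in range(warmup):                        # let caches and lazy initialization settle
        predict(sample)
    times = []
    for _ in range(runs):
        start = time.perf_counter()
        predict(sample)
        times.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(times)

params, macs, size_mb = conv2d_cost(64, 64, 3, 112, 112)
print(params, macs, round(size_mb, 3))             # 36928 462422016 0.148

# Toy stand-in for a model's forward pass.
print(median_latency_ms(lambda xs: sum(v * v for v in xs), list(range(10_000))))
```

Summing `conv2d_cost` over every layer gives a whole-network estimate, and the median is used rather than the mean so that occasional slow runs do not dominate. To compare threading settings, a PyTorch user could wrap a model's forward pass in `predict` and call `torch.set_num_threads(n)` before each measurement; that setup is our assumption, not a description of the authors' benchmarking scripts.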

What This Means for Future Smart Farms

Overall, the study shows that carefully designed lightweight models can bring high-end image recognition to the edge of the field, where connectivity and power are limited. Among all the tested architectures, MobilePlantViT offers the best combination of sharp diagnosis, efficient use of computing resources, and clear visual explanations of what the model “looks at” when it predicts a disease. For growers and technology developers, this points toward a future in which small, inexpensive hardware built into phones or mounted on drones and greenhouse rails can continuously scan tomato plants, highlight suspicious leaves, and justify its warnings in ways that agronomists can verify and trust.

Citation: Hoang, TM., Bui, VH., Nguyen, VS. et al. A comprehensive evaluation of lightweight deep learning models for tomato disease classification on edge computing environments. Sci Rep 16, 12320 (2026). https://doi.org/10.1038/s41598-026-42439-6

Keywords: tomato disease detection, edge AI, lightweight deep learning, vision transformer, explainable AI