Clear Sky Science

SiaCon-DetNet with HySHO: a cutting-edge transformer-based deep learning framework for emotion-aware facial recognition

Why teaching computers to read feelings matters

From video calls to virtual tutors and health apps, we increasingly meet machines through screens. Yet most of these systems are still emotionally “blind”: they cannot tell whether we are confused, stressed, or delighted. This paper introduces a new artificial intelligence framework that reads human facial expressions more accurately and more efficiently than earlier methods, aiming to make digital tools more understanding, fair, and helpful in everyday life.

How faces give machines an emotional window

Our faces continuously broadcast information about how we feel, often more honestly than our words. Smiles, frowns, widened eyes, and subtle muscle twitches help people navigate conversations, build trust, and detect distress. Researchers in psychology, neuroscience, and computer science have long tried to teach computers to read these cues, a field known as facial emotion recognition. Such technology already appears in education platforms that track student engagement, game systems that adapt to a player’s mood, medical tools that monitor pain or depression, and security systems that watch for signs of agitation. But real-world conditions are messy: lighting changes, faces are partly covered, and expressions differ across individuals and cultures, making reliable emotion reading a tough problem.

Why older emotion systems fall short

Early computer systems relied on hand-designed rules, measuring simple features such as wrinkles, edges, or the shape of the mouth and eyes. These struggled with changes in pose, lighting, or individual differences. Deep learning brought progress by letting neural networks automatically learn useful patterns from face images, but common architectures still had blind spots. Convolutional networks excel at spotting local details, yet they have trouble linking distant parts of the face, such as how the eyes and mouth move together in a mixed expression. Newer transformer models capture these long-range relationships, but they can be computationally heavy, data-hungry, and less adept at picking up very fine, low-level details. Many existing systems also need careful manual tuning of hundreds of internal settings and often generalize poorly beyond the data they were trained on.

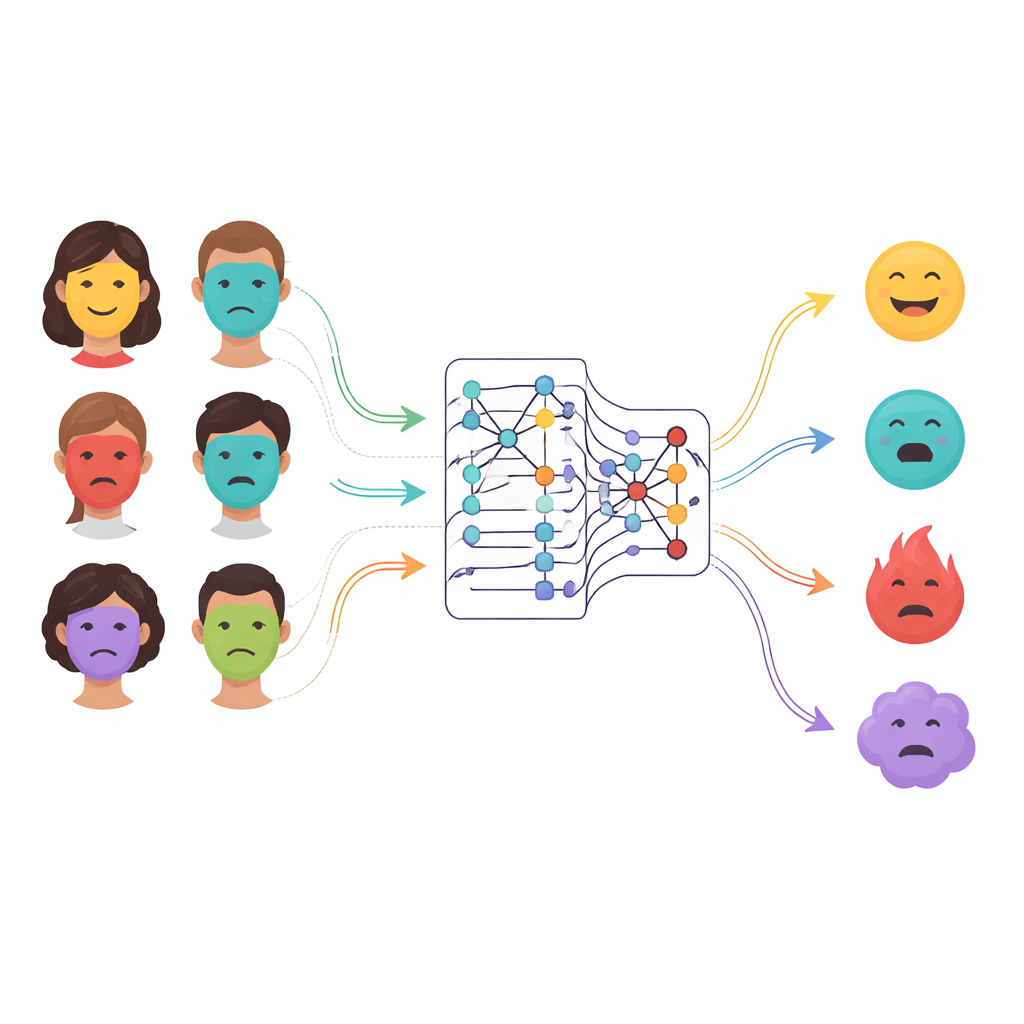

A new twin-eyed and attention-focused approach

The authors propose SiaCon-DetNet, a hybrid network that combines the strengths of several ideas. First, it uses a Siamese structure, in which two identical processing branches each examine one face image from a pair, to learn what really distinguishes one emotion from another. This twin design helps the model notice tiny differences between, for example, fear and surprise, which can involve similar muscles. Within each branch, convolutional layers capture fine-grained textures and shapes, such as eyebrow curves or mouth tension. On top of this, a transformer-based module acts like an attention spotlight, learning how distant regions of the face relate to each other and focusing on the most informative zones. Together, these components let the system build a rich, context-aware picture of each expression, even when faces are partially hidden or unevenly lit.
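The summary does not give SiaCon-DetNet's actual layers, so the following is only a toy sketch of the general pattern it describes: one shared encoder (a local convolution followed by global self-attention) applied to both images of a pair, with the two resulting embeddings compared by distance. The single Sobel-style kernel, the identity query/key/value projections, the mean pooling, and the contrastive loss are all assumptions chosen for illustration; none of the function names come from the paper.

```python
import math

# Hypothetical 3x3 edge kernel; a real network learns many such filters.
KERNEL = [[-1.0, 0.0, 1.0],
          [-2.0, 0.0, 2.0],
          [-1.0, 0.0, 1.0]]

def conv2d(img, kernel):
    """'Valid' 2-D cross-correlation: each output value depends only on a
    small local neighbourhood, which is how convolutions capture fine detail."""
    kh, kw = len(kernel), len(kernel[0])
    h, w = len(img), len(img[0])
    return [[sum(img[i + a][j + b] * kernel[a][b]
                 for a in range(kh) for b in range(kw))
             for j in range(w - kw + 1)]
            for i in range(h - kh + 1)]

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Scaled dot-product self-attention with identity Q/K/V projections
    (a simplification): every token can draw on every other token,
    however far apart they are in the image."""
    d = len(tokens[0])
    out = []
    for q in tokens:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        weights = softmax(scores)
        out.append([sum(w * v[c] for w, v in zip(weights, tokens)) for c in range(d)])
    return out

def encode(img):
    """Shared branch: local convolution, then global attention, then mean-pool."""
    tokens = conv2d(img, KERNEL)  # each feature-map row is treated as one token
    mixed = self_attention(tokens)
    n = len(mixed)
    return [sum(tok[c] for tok in mixed) / n for c in range(len(mixed[0]))]

def siamese_distance(img_a, img_b):
    """Both images pass through the *same* encoder (shared weights)."""
    ea, eb = encode(img_a), encode(img_b)
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(ea, eb)))

def contrastive_loss(dist, same_emotion, margin=1.0):
    """Assumed objective: pull same-emotion pairs together and push
    different-emotion pairs at least `margin` apart."""
    if same_emotion:
        return dist ** 2
    return max(0.0, margin - dist) ** 2

if __name__ == "__main__":
    # Toy 6x6 grids standing in for face crops: one with a vertical edge, one flat.
    edge = [[0.0] * 3 + [1.0] * 3 for _ in range(6)]
    flat = [[0.0] * 6 for _ in range(6)]
    print(siamese_distance(edge, edge))            # 0.0
    print(round(siamese_distance(edge, flat), 3))  # 5.657
```

Because both branches call the same `encode`, identical inputs always map to identical embeddings, so any distance the model reports must come from genuine differences between the two images.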

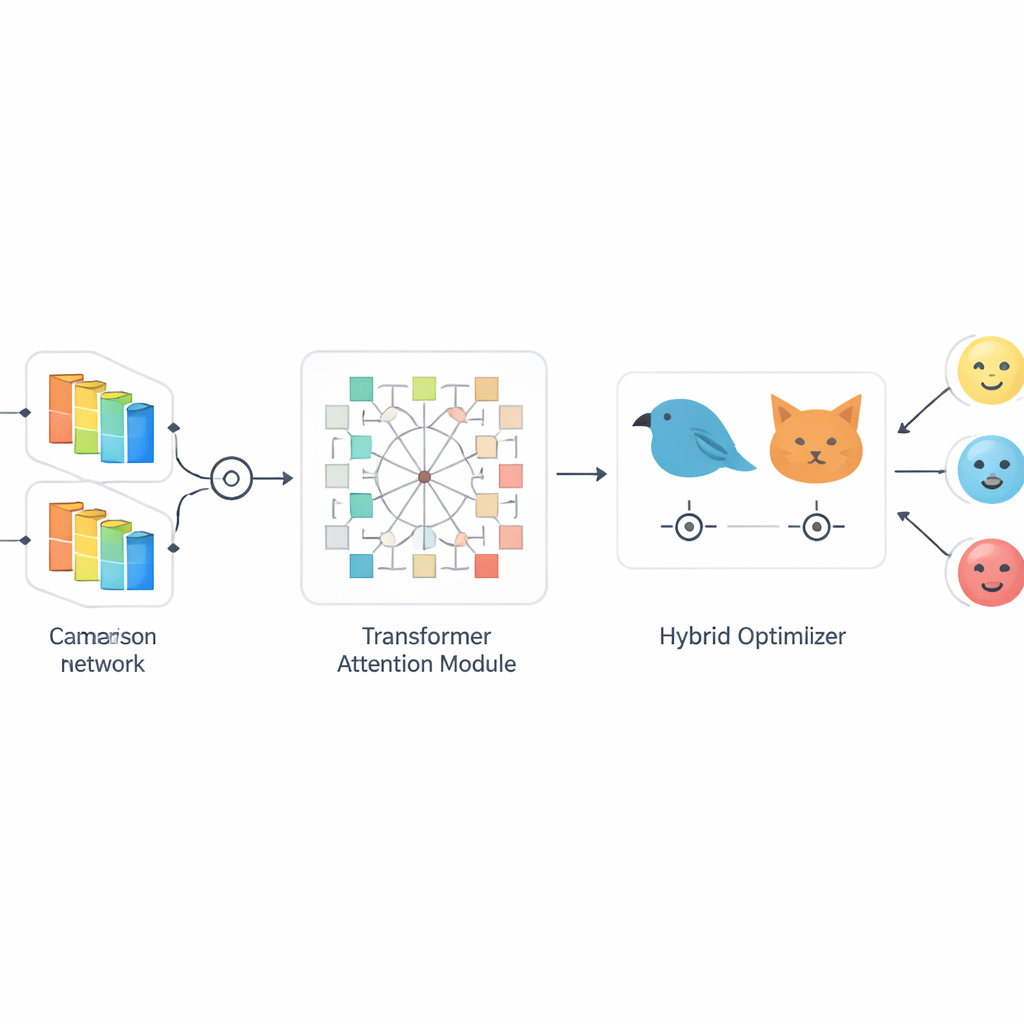

Nature-inspired tuning for sharper and faster learning

Designing a powerful model is only half the battle; it must also be tuned so it learns quickly without overfitting. To handle this, the paper introduces HySHO, a “bio-inspired” optimization scheme that blends strategies modeled on a hunting bird (the northern goshawk) and a desert cat. One part explores a wide range of settings, such as learning rates and filter sizes, preventing the system from getting stuck in poor solutions. The other part performs fine-grained adjustments in promising regions, speeding up convergence. This dynamic tuning is tied to how much facial expressions vary in a given dataset, letting the model adjust itself when it encounters subtle, mixed, or noisy emotions. As a result, training becomes both faster and more robust, supporting real-time or near-real-time applications.
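HySHO's actual update rules are not given in this summary, so the sketch below illustrates only the general explore-then-exploit idea with a generic population search; it is not the goshawk or desert-cat equations. The two-dimensional search space (log learning rate and filter size), the `surrogate_loss` stand-in for validation loss, and all function names are hypothetical. It also ignores the paper's link between tuning and expression variability.

```python
import random

# Hypothetical search space (not from the paper):
# dimension 0 = log10(learning rate), dimension 1 = convolution filter size.
BOUNDS = [(-5.0, -1.0), (3.0, 7.0)]

def surrogate_loss(x):
    """Stand-in for validation loss. A real tuner would train and evaluate the
    network here; this invented bowl has its minimum at lr = 1e-3, filter size 5."""
    log_lr, k = x
    return (log_lr + 3.0) ** 2 + 0.5 * (k - 5.0) ** 2

def clip(x):
    """Keep a candidate inside the search box."""
    return [min(max(v, lo), hi) for v, (lo, hi) in zip(x, BOUNDS)]

def hybrid_search(f, pop_size=12, iters=60, seed=0):
    """Generic two-phase population search: wide exploratory moves early,
    small exploitative moves around the best solution later."""
    rng = random.Random(seed)
    pop = [[rng.uniform(lo, hi) for lo, hi in BOUNDS] for _ in range(pop_size)]
    scores = [f(p) for p in pop]
    best_i = min(range(pop_size), key=lambda i: scores[i])
    best, best_score = pop[best_i][:], scores[best_i]
    history = [best_score]

    for t in range(iters):
        explore_prob = 1.0 - t / iters  # decays from 1 towards 0
        for i in range(pop_size):
            x = pop[i]
            if rng.random() < explore_prob:
                # Exploration: a large move relative to a randomly chosen peer.
                peer = pop[rng.randrange(pop_size)]
                cand = [xi + rng.uniform(-1.0, 2.0) * (pi - xi)
                        for xi, pi in zip(x, peer)]
            else:
                # Exploitation: a small Gaussian step around the best-so-far,
                # with a radius that shrinks as the search matures.
                cand = [bi + rng.gauss(0.0, 0.1 * (hi - lo) * explore_prob + 1e-3)
                        for bi, (lo, hi) in zip(best, BOUNDS)]
            cand = clip(cand)
            c_score = f(cand)
            if c_score < scores[i]:  # greedy replacement keeps only improvements
                pop[i], scores[i] = cand, c_score
                if c_score < best_score:
                    best, best_score = cand[:], c_score
        history.append(best_score)
    return best, best_score, history

if __name__ == "__main__":
    best, loss, _ = hybrid_search(surrogate_loss)
    print(f"lr ~ {10 ** best[0]:.1e}, filter size ~ {round(best[1])}, loss {loss:.4f}")
```

The decaying `explore_prob` is one simple way to hand control from the wide-ranging phase to the fine-tuning phase; a real tuner would also round the filter size to an integer before building the network.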

Putting the system to the test

To evaluate their framework, the researchers tested it on three widely used emotion datasets that differ in size and difficulty. These collections include posed and more natural expressions across several basic emotions: anger, fear, happiness, sadness, disgust, surprise, and neutral. The new system reached around 99 percent accuracy on the best-known benchmark and achieved similarly high precision, recall, and F1-scores across nearly all emotion categories. Importantly, it did this while training faster than many popular deep learning models built on well-known image architectures. Analyses of how different emotions correlate in each dataset showed that the model handled tricky pairs, like anger versus disgust or fear versus sadness, without large drops in performance, suggesting it captures the fine structure of expressions rather than memorizing obvious cases.
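Accuracy alone can hide weak categories, which is why per-class precision, recall, and F1 are reported alongside it. As a hedged illustration, the sketch below computes these metrics with the textbook one-vs-rest definitions and counts which emotion pairs get confused; it is not code from the study, and the labels and predictions in the example are invented.

```python
from collections import Counter

def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def per_class_metrics(y_true, y_pred):
    """Return {label: (precision, recall, f1)}, treating each class one-vs-rest."""
    labels = sorted(set(y_true) | set(y_pred))
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted p, but it was wrong
            fn[t] += 1  # missed the true class t
    out = {}
    for c in labels:
        prec = tp[c] / (tp[c] + fp[c]) if tp[c] + fp[c] else 0.0
        rec = tp[c] / (tp[c] + fn[c]) if tp[c] + fn[c] else 0.0
        f1 = 2 * prec * rec / (prec + rec) if prec + rec else 0.0
        out[c] = (prec, rec, f1)
    return out

def macro_f1(metrics):
    """Unweighted mean of per-class F1, so rare emotions count as much as common ones."""
    return sum(f1 for _, _, f1 in metrics.values()) / len(metrics)

def confusion_pairs(y_true, y_pred):
    """Count (true, predicted) pairs for mistakes only, e.g. anger read as disgust."""
    return Counter((t, p) for t, p in zip(y_true, y_pred) if t != p)

if __name__ == "__main__":
    # Invented toy predictions, for illustration only.
    y_true = ["anger", "anger", "disgust", "disgust", "fear", "sadness"]
    y_pred = ["anger", "disgust", "disgust", "disgust", "fear", "fear"]
    m = per_class_metrics(y_true, y_pred)
    print(round(accuracy(y_true, y_pred), 3))  # 0.667
    print(confusion_pairs(y_true, y_pred))
```

In this toy run, sadness is never predicted correctly, so its F1 is zero even though overall accuracy is two thirds; per-class scores expose exactly the kind of weakness that a single accuracy figure can mask.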

What this means for everyday technology

In plain terms, the study shows that an AI can be designed to “look” at faces in a more human-like way—comparing subtle differences, understanding context across the whole face, and adjusting its own learning strategy on the fly. The proposed SiaCon-DetNet with HySHO framework delivers extremely high accuracy while remaining relatively light and quick to train, making it a strong candidate for future tools in mental health screening, interactive tutoring, customer service, and assistive technologies for people with communication difficulties. Although important questions remain about privacy, consent, and fairness, this work moves emotion-aware systems closer to reading our feelings reliably enough to respond with sensitivity instead of guesswork.

Citation: M, S., M, U., K, T. et al. SiaCon-DetNet with HySHO: a cutting-edge transformer-based deep learning framework for emotion-aware facial recognition. Sci Rep 16, 14131 (2026). https://doi.org/10.1038/s41598-026-41890-9

Keywords: facial emotion recognition, deep learning, transformer models, human–computer interaction, affective computing