Clear Sky Science

Evaluation of cross-ethnic emotion recognition capabilities in multimodal large language models using the reading the mind in the eyes test

Why this matters for everyday life

Imagine a computer program that can look at a person’s eyes and guess what they are feeling—sometimes more accurately than most people can. This study asks whether such systems can do that fairly for people from different ethnic backgrounds. As artificial intelligence (AI) tools move into health care, education, and everyday apps, knowing if they treat different groups of people equally is crucial for trust, safety, and ethics.

Looking for feelings in the eyes

The researchers focused on a well-known psychological test called “Reading the Mind in the Eyes.” In this task, only the eye region of a face is shown, and the viewer must choose which emotion or mental state the eyes express. The study used three versions of the test: one with photos of White individuals, one with Black individuals, and one with Korean individuals. People often find it harder to judge emotions from the faces of another ethnic group, a pattern known as the “other-race effect.” The study asked whether advanced AI systems show a similar weakness or whether they can recognize emotions equally well across these different sets of faces.

Putting three AI systems to the test

The team evaluated three popular multimodal large language models—systems that can process both images and text. They tested an older GPT-4-based model, a newer GPT-4o-based model, and a competing system called Claude 3 Opus. Each model completed all three versions of the eye test twice, so the researchers could check both accuracy and consistency over time. The AI models saw each eye image with four possible answers and had to pick one, just as a human test taker would. The scientists then compared the AI scores to those of large groups of people who had previously taken the same tests.

How well the machines did

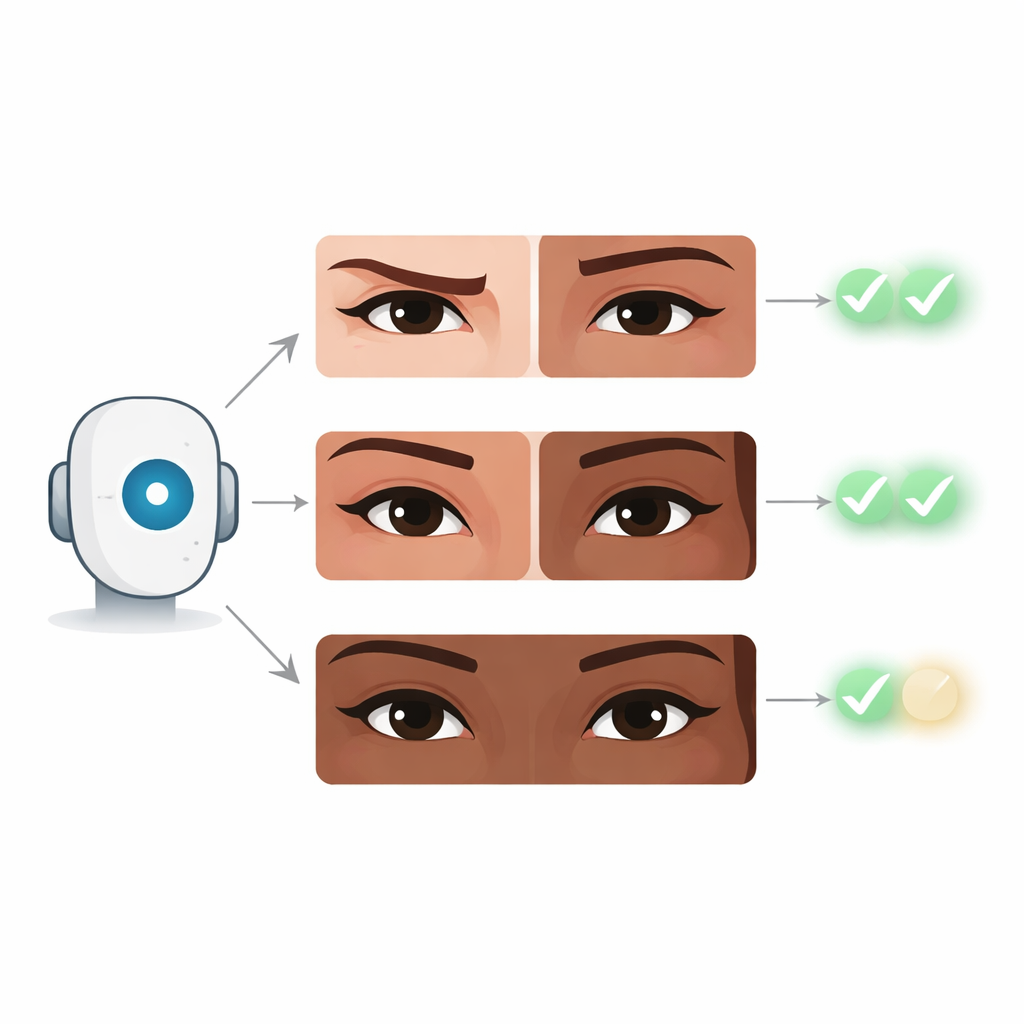

The newer GPT-4o model stood out. It answered about 83% of items correctly for White faces, 94% for Black faces, and 86% for Korean faces. These scores placed it roughly between the 85th and 94th percentiles of human performance, meaning it did better than most people who have taken these tests. Importantly, its success was similar across all three ethnic groups, suggesting that it did not display the kind of ethnic bias people often show on such tasks. The older GPT-4 model did better than random guessing but scored closer to average human levels, while Claude 3 Opus hovered near chance (25% when there are four answer options), performing like someone who was mostly guessing.

What the AI found easy and hard

To move beyond overall scores, the authors examined which kinds of emotions the models handled well or poorly. Across systems, the models tended to recognize inner states such as being worried, uneasy, or thoughtful with high accuracy. In contrast, they struggled more with positive, socially rich expressions that carry interpersonal meaning, such as being playful, friendly, or flirtatious. The newer GPT-4o system made fewer of these errors than the others, hinting that newer models may be getting better at picking up subtle social signals that earlier ones miss.

What this could mean for people

The findings raise both exciting possibilities and important cautions. On the one hand, a system that can read emotions from faces as well as, or better than, many humans—and do so similarly across ethnic groups—could one day assist psychologists, doctors, or teachers by offering a more stable second opinion about social cues. On the other hand, the eye test itself has serious scientific limits and may not reflect real-life social understanding, which depends on body language, tone of voice, and context. The authors stress that these results do not prove that AI has genuine empathy or that it is free from bias in other settings. Instead, the work offers an early benchmark: for a narrow, controlled task focused on the eye region, at least one modern AI appears highly accurate and relatively even-handed across different ethnic groups, but much more research is needed before such tools should influence real-world decisions.

Citation: Refoua, E., Elyoseph, Z., Piterman, D. et al. Evaluation of cross-ethnic emotion recognition capabilities in multimodal large language models using the reading the mind in the eyes test. Sci Rep 16, 9975 (2026). https://doi.org/10.1038/s41598-026-39292-y

Keywords: emotion recognition, artificial intelligence, social cognition, cross-ethnic bias, mental health