Clear Sky Science

Community-based fact-checking reduces the spread of misleading posts on X (formerly Twitter)

Why this matters for everyday users

Every day, millions of people scroll through social media feeds filled with breaking news, hot takes, and rumors. Some of that information is misleading or flat-out wrong, and it can shape views on elections, health, and public safety. This study asks a practical question that affects anyone who uses X (formerly Twitter): when ordinary users add fact-checking notes to suspicious posts, does it actually slow the spread of misleading information?

From experts to the crowd

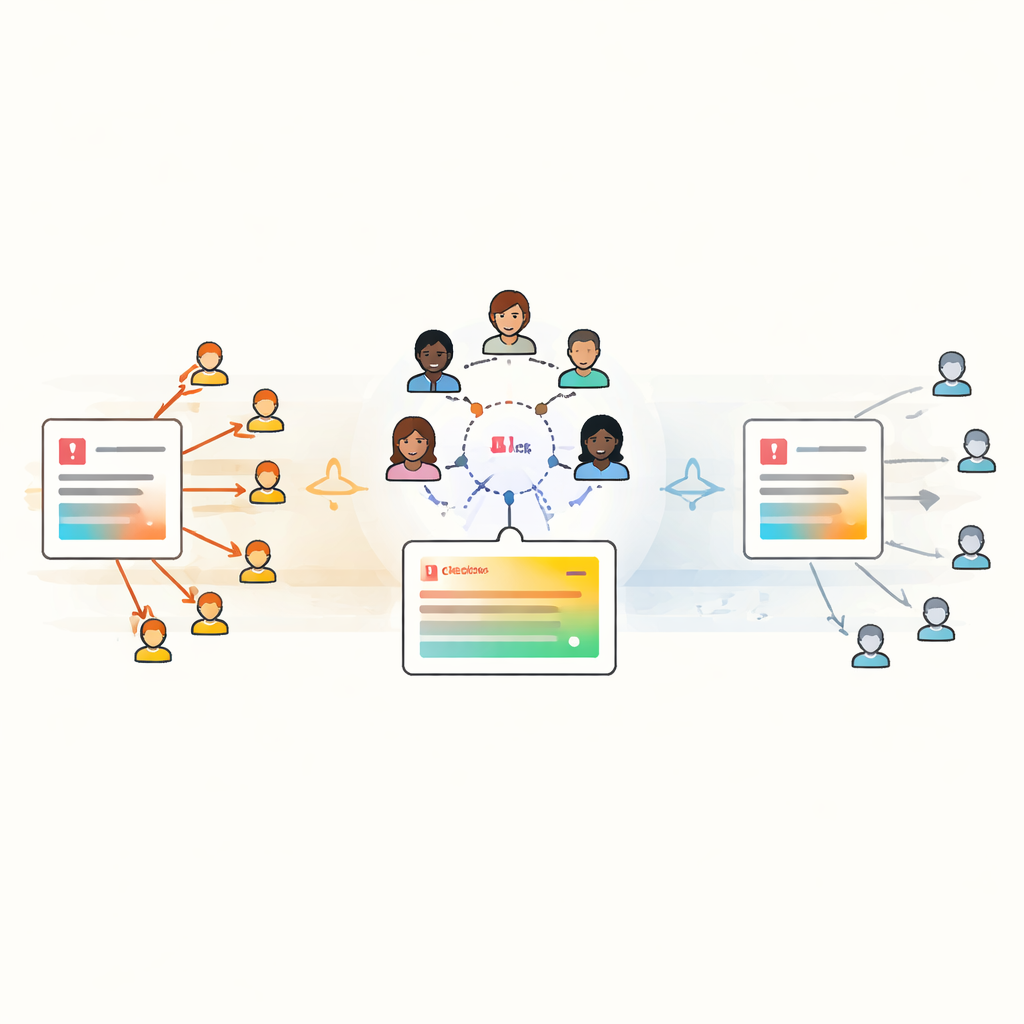

Traditional fact-checking relies on trained professionals who carefully investigate claims and publish corrections. Powerful as it is, this approach faces three big problems: there is far more content than experts can check, many people never see professional fact-checks, and large parts of the public do not fully trust them. In response, the platform introduced “community notes,” a system that lets regular users flag questionable posts, write short explanations, and rate each other’s notes for helpfulness. Once enough raters, including people who have tended to disagree with one another in the past, judge a note helpful, it appears directly below the original post, giving readers an instant counterpoint while they browse.

Measuring impact at massive scale

The researchers analyzed more than 237,000 posts on X that attracted community notes over roughly 20 months, posts that together drew more than 431 million reposts. They focused on posts that the community had flagged as misleading and compared those whose note was displayed with very similar posts that never received a displayed note. To make the comparison fair, they matched posts based on author characteristics (such as follower counts) and content features (such as topics and tone). Using a statistical approach called difference-in-differences, they tracked repost activity before and after a note appeared, and contrasted the shift in the “treated” posts with the shift in their matched “control” posts. Because the control posts went through the same period without a visible note, their shift serves as a stand-in for what would have happened to the treated posts had no note appeared.
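To make the difference-in-differences logic concrete, here is a minimal Python sketch of the textbook two-group, two-period version of the estimator. The function name `did_estimate`, the use of log(1 + reposts), and the repost counts are all hypothetical illustrations, not the study's data or code; the researchers' actual model, with matching and many time points, is more involved.

```python
import math
from statistics import mean


def did_estimate(treated_before, treated_after, control_before, control_after):
    """Textbook 2x2 difference-in-differences on log(1 + reposts).

    Each argument is a list of repost counts, one per post, for a fixed
    time window before or after the note (or the matched time point for
    control posts). Returns the estimated percent change in reposts for
    treated posts relative to the counterfactual implied by the controls.
    """

    def log_mean(counts):
        # log1p keeps windows with zero reposts defined
        return mean(math.log1p(c) for c in counts)

    # Change over time within each group
    treated_shift = log_mean(treated_after) - log_mean(treated_before)
    control_shift = log_mean(control_after) - log_mean(control_before)

    # The control shift stands in for what would have happened without a note
    did = treated_shift - control_shift

    # Convert the log-scale difference into a percent change
    return 100 * (math.exp(did) - 1)


if __name__ == "__main__":
    # Hypothetical counts: noted posts fall from 99 to 19 reposts per window,
    # while matched control posts fall from 99 to 49 over the same period.
    effect = did_estimate([99, 99], [19, 19], [99, 99], [49, 49])
    print(round(effect, 1))  # -60.0
```

In this made-up example both groups lose reposts over time, as older posts naturally fade, but the noted posts lose more. Crediting the note only with the extra decline is what keeps ordinary fading from being mistaken for a note effect.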

What happens once a note appears

The core finding is striking: once a community note is displayed, the misleading post’s further spread falls sharply. On average, reposting drops by about 61 percent compared with what would have been expected without the note. This effect becomes stronger over the first twelve hours after the note appears. The pattern holds across many types of users and topics, including people who frequently engage with questionable content and users on different sides of the political spectrum. However, the notes work somewhat less well for posts from very large or verified accounts and for political or health-related content, suggesting that devoted audiences and sensitive topics can blunt the impact of community corrections.

Timing is everything

Despite their strong local effect, community notes often arrive late in the life of a post. Most resharing on X happens quickly: half of a post’s 36-hour repost total is typically reached within a few hours. Helpful notes, by contrast, are usually displayed considerably later, often after much of the viral wave has already passed. When the researchers estimated how many reposts were prevented overall, they found that community notes reduced total reposts of misleading posts by about 15 percent. Simulations suggest that if notes appeared much earlier, say within two hours of the post being published, the total reduction in reposts could exceed 50 percent. The study also shows that posts with displayed notes are almost twice as likely to be deleted by their authors, while there is no sign that the notes themselves trigger the platform’s own enforcement (such as account suspensions).
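The gap between the 61 percent local effect and the roughly 15 percent overall effect comes down to how much of a post’s spread is still ahead of it when the note appears. The toy Python model below makes that arithmetic explicit under loudly stated assumptions: repost activity decays exponentially, with the hypothetical parameter `half_hours` setting its half-life (roughly the time needed to reach half of the 36-hour total), and a displayed note cuts every later repost by a constant 61 percent, even though the study reports that the effect builds up over the first twelve hours. It is not the researchers’ simulation and will not reproduce their figures; it only shows why the same note prevents a shrinking share of total reposts the later it arrives.

```python
import math


def total_reduction(note_hour, local_effect=0.61, half_hours=3.0, window=36.0):
    """Toy model: share of a post's reposts within `window` hours that a note
    displayed at `note_hour` would prevent.

    Assumes repost activity decays exponentially with half-life `half_hours`
    and that the note removes a constant fraction `local_effect` of every
    repost after it appears. All parameters are illustrative assumptions.
    """
    if not 0 <= note_hour <= window:
        raise ValueError("note_hour must lie within the observation window")

    decay = math.log(2) / half_hours

    def cumulative(t):
        # Reposts accumulated by hour t, up to a constant factor
        return 1 - math.exp(-decay * t)

    # Fraction of the window's reposts that would arrive after the note
    share_after = (cumulative(window) - cumulative(note_hour)) / cumulative(window)
    return local_effect * share_after


if __name__ == "__main__":
    for hour in (0, 2, 6, 12, 24):
        print(f"note at {hour:>2} h -> {100 * total_reduction(hour):.0f}% fewer reposts")
```

Changing `half_hours` changes the numbers but not the direction: in this model a later note always prevents a smaller share of total reposts, because the share of reposts still to come shrinks as `note_hour` grows.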

What this means for the fight against falsehoods

For everyday users, the message is twofold. First, community-based fact-checking can work: when clearly visible corrections from fellow users appear on misleading posts, people share those posts far less, and some authors even remove them. Second, speed is crucial. Because misinformation travels fast, even an effective correction has limited reach if it arrives too late. The study suggests that surfacing community notes more quickly and widely could turn a modest system-wide impact into a much stronger brake on viral falsehoods. That is an important consideration as social media and AI make it easier than ever for misleading content to spread.

Citation: Chuai, Y., Pilarski, M., Renault, T. et al. Community-based fact-checking reduces the spread of misleading posts on X (formerly Twitter). Nat Commun 17, 4070 (2026). https://doi.org/10.1038/s41467-026-72597-0

Keywords: misinformation, social media, fact-checking, community notes, online trust