Clear Sky Science

Predicting new research directions in materials science using large language models and concept graphs

Why letting machines read science matters

Every year, scientists publish far more papers than any human can possibly read, even within a narrow specialty. Hidden in this flood of information are unexpected connections—ideas that could spark better batteries, tougher alloys or more efficient solar cells, but that no one has thought to combine. This article explores how artificial intelligence, in particular large language models, can scan vast libraries of materials research papers and suggest fresh, plausible research directions that human experts may otherwise overlook.

Turning scattered ideas into a map of knowledge

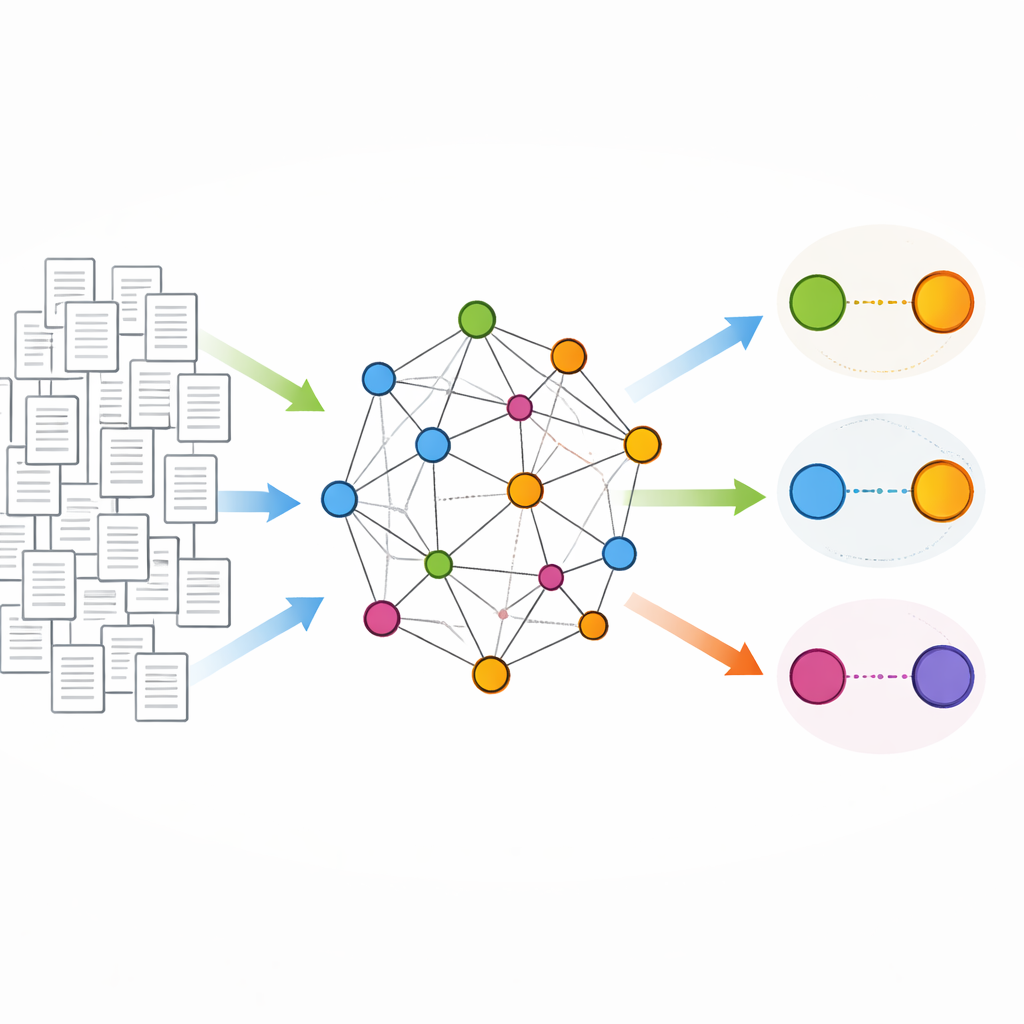

The authors begin by treating each research abstract as a compact description of what a paper is really about. They fine-tune a large language model so that, instead of just predicting words, it reliably extracts the main “concepts” from these abstracts: short, meaningful phrases such as “mechanical property,” “graphene oxide,” or “organic solar cell.” Unlike simple keyword algorithms, the tuned model can clean up grammar, merge synonyms and even infer concepts that an abstract implies but never states in those exact words. The result is a high-quality list of the core ideas in each paper that needs minimal human correction.

Building a concept network for materials science

With concepts in hand, the team constructs a huge network in which each node is a distinct concept and links are drawn whenever two concepts appear together in the same abstract. From 221,000 materials science papers, this yields about 137,000 concepts connected by around 13 million links. Most concepts connect to only a few others, but some, like common measurement techniques, form busy hubs. Over time, as more papers are written, new links appear and the network becomes more interconnected. Using advanced language encoders specialized for materials science, every concept is also assigned a numerical fingerprint that captures its meaning, allowing similar ideas to sit near each other in an abstract “map of materials science.”
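The construction described above can be sketched in a few lines of Python. This is a minimal illustration, assuming each abstract has already been reduced to a list of concept strings; the function name `build_concept_graph` and the toy abstracts are hypothetical and are not taken from the paper.

```python
from collections import defaultdict
from itertools import combinations


def build_concept_graph(abstract_concepts):
    """Return {concept: set of co-occurring concepts} from per-abstract lists."""
    graph = defaultdict(set)
    for concepts in abstract_concepts:
        unique = sorted(set(concepts))
        for concept in unique:
            graph[concept]  # make sure concepts without partners still become nodes
        # Link every pair of concepts that share an abstract.
        for a, b in combinations(unique, 2):
            graph[a].add(b)
            graph[b].add(a)
    return dict(graph)


# Toy input (hypothetical): one list of extracted concepts per abstract.
abstracts = [
    ["graphene oxide", "mechanical property", "thin film"],
    ["organic solar cell", "thin film"],
    ["graphene oxide", "thin film"],
]

graph = build_concept_graph(abstracts)
# Each link is stored in both directions, so halve the total neighbor count.
num_links = sum(len(neighbors) for neighbors in graph.values()) // 2
print(len(graph), "concepts,", num_links, "links")
```

In this toy example "thin film" ends up as the busiest node, a small-scale analogue of the hub concepts mentioned above. Repeated co-occurrences collapse into a single link here; a weighted variant could count them instead.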

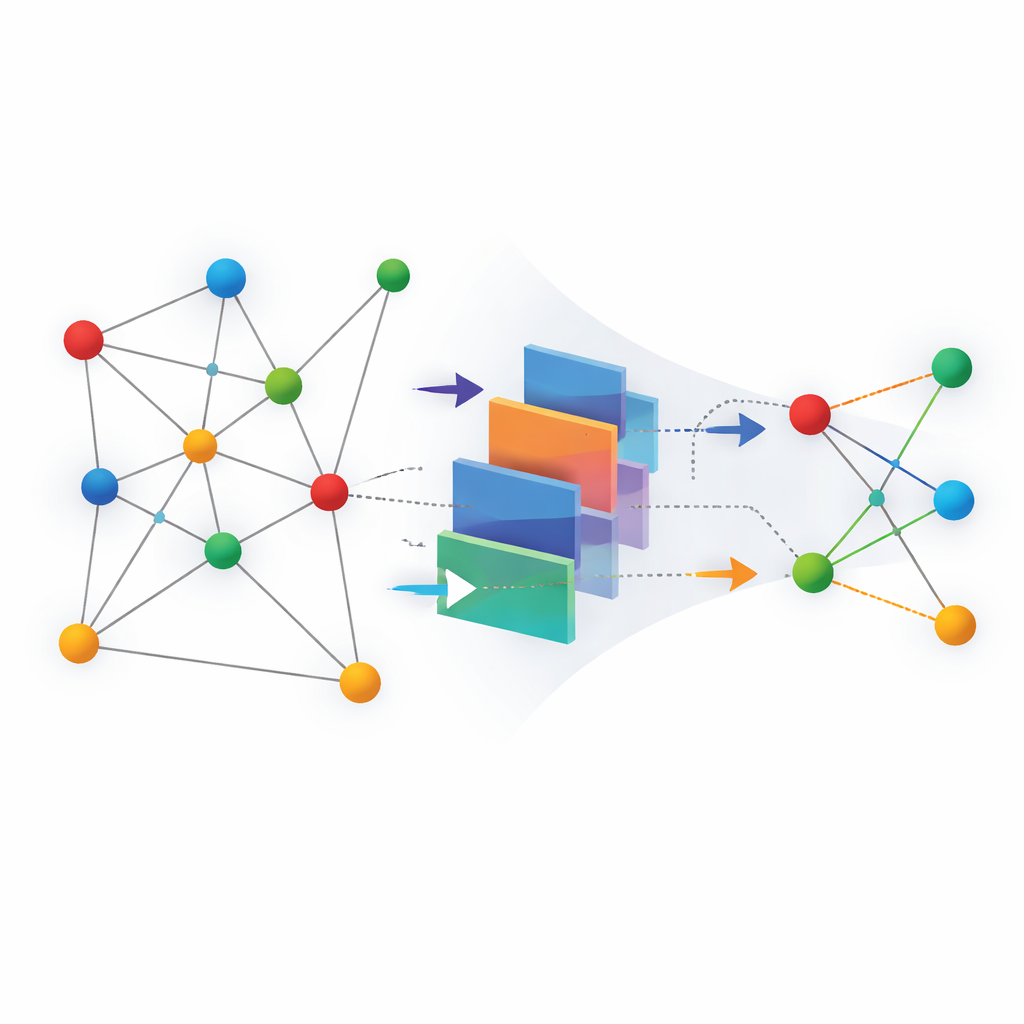

Teaching machines to spot tomorrow’s connections

The heart of the study is a prediction task: given the network up to a certain year, can a machine learning model guess which pairs of concepts will be linked in future papers? Each possible pair becomes a yes-or-no question: will these two ideas appear together in a later paper? The authors test several approaches. Some use only the network’s structure, such as how many neighbors two concepts share. Others draw solely on the semantic fingerprints of the concepts. Hybrid models combine both. A graph neural network that learns from the network layout, blended with semantic information from language models, performs best at distinguishing future links from non-links in a highly imbalanced, realistic setting where true new combinations are rare needles in a haystack.
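To make the idea of structural, semantic and hybrid scoring concrete, here is a deliberately simple baseline sketch. It is not the authors' graph neural network: it swaps in a shared-neighbor count and a cosine similarity, blended by a hypothetical `weight` parameter. The toy graph and the two-dimensional "fingerprints" are invented for illustration only.

```python
import math
from itertools import combinations


def common_neighbors(graph, a, b):
    """Structural feature: how many neighbors two concepts share."""
    return len(graph.get(a, set()) & graph.get(b, set()))


def cosine(u, v):
    """Semantic feature: cosine similarity of two embedding vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def hybrid_score(graph, embeddings, a, b, weight=0.5):
    """Blend both features; `weight` is an assumed mixing parameter."""
    shared = common_neighbors(graph, a, b)
    structural = shared / (1 + shared)  # squash the count into [0, 1)
    semantic = cosine(embeddings[a], embeddings[b])
    return weight * structural + (1 - weight) * semantic


def rank_unlinked_pairs(graph, embeddings, weight=0.5):
    """Score every pair that is not yet linked, best candidates first."""
    scored = []
    for a, b in combinations(sorted(graph), 2):
        if b in graph[a]:
            continue  # already linked, so nothing to predict
        scored.append(((a, b), hybrid_score(graph, embeddings, a, b, weight)))
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Toy network "up to a certain year" (hypothetical data).
past_graph = {
    "graphene oxide": {"thin film", "mechanical property"},
    "mechanical property": {"graphene oxide", "thin film"},
    "thin film": {"graphene oxide", "mechanical property", "organic solar cell"},
    "organic solar cell": {"thin film"},
}
# Made-up 2-D fingerprints; real encoders produce far longer vectors.
embeddings = {
    "graphene oxide": [1.0, 0.0],
    "mechanical property": [0.0, 1.0],
    "thin film": [1.0, 1.0],
    "organic solar cell": [0.8, 0.6],
}

ranked = rank_unlinked_pairs(past_graph, embeddings)
for pair, score in ranked:
    print(pair, round(score, 2))
```

Both unlinked pairs share exactly one neighbor, so in this toy case the semantic term alone decides the ranking. Evaluating such a scorer would mean checking the ranked pairs against links that actually appear in later years.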

From model scores to suggestions for real scientists

To see whether these predictions are actually useful, the researchers generate personalized reports for ten materials scientists. For each scientist, they identify the concepts that describe that person’s own work and then ask the model which new concept pairings involving those ideas look most promising. They also apply simple filters to avoid overly generic concepts and use a language model to draft short, human-readable explanations for a subset of suggestions. In interviews, the experts classify each suggestion as already known, trivial, nonsensical or genuinely interesting and inspiring.
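The article does not spell out the filters, so the following is only a sketch under stated assumptions: it treats a concept as "overly generic" when its number of links exceeds a hypothetical `max_degree` threshold, and it keeps only pairs that involve at least one of the scientist's own concepts. All names, scores and degree values are invented for illustration.

```python
def suggest_for_scientist(own_concepts, scored_pairs, degree, max_degree, top_k=3):
    """Pick the top-scoring pairs that touch the scientist's own concepts.

    scored_pairs: list of ((concept_a, concept_b), score) tuples.
    degree: {concept: number of links}; used as an assumed genericness filter.
    """
    own = set(own_concepts)
    suggestions = []
    for (a, b), score in sorted(scored_pairs, key=lambda item: item[1], reverse=True):
        if a not in own and b not in own:
            continue  # unrelated to this scientist's work
        if degree.get(a, 0) > max_degree or degree.get(b, 0) > max_degree:
            continue  # one side is too generic to be an interesting suggestion
        suggestions.append((a, b, score))
        if len(suggestions) == top_k:
            break
    return suggestions


# Hypothetical model output and link counts.
scored_pairs = [
    (("perovskite", "characterization"), 0.95),
    (("perovskite", "ionic liquid"), 0.80),
    (("solid electrolyte", "high-entropy oxide"), 0.70),
    (("perovskite", "shape memory alloy"), 0.40),
]
degree = {
    "perovskite": 120,
    "characterization": 9000,
    "ionic liquid": 300,
    "solid electrolyte": 250,
    "high-entropy oxide": 80,
    "shape memory alloy": 150,
}

report = suggest_for_scientist(["perovskite"], scored_pairs, degree, max_degree=1000, top_k=2)
for a, b, score in report:
    print(f"{a} + {b}: {score:.2f}")
```

The highest-scoring pair is dropped here because "characterization" is linked to almost everything, which is the kind of generic suggestion a filter of this sort is meant to remove.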

How well the system sparks new ideas

The interviews reveal that about a quarter of all suggested combinations fall into the “interesting or inspiring” category. While that fraction may sound modest, each half-hour conversation still yields several concrete, fresh ideas that the scientists consider worth thinking about. Notably, the most intriguing suggestions often link concepts that were only distantly related in the original network—connections that are harder to spot by eye. Adding semantic information from language models is especially helpful in uncovering these more adventurous pairings, and the explanatory paragraphs generated by the AI make it easier for experts to assess whether an unfamiliar combination could be realistic and worthwhile.
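One simple way to quantify how "distantly related" two concepts are is the number of hops between them in the network. The sketch below uses a breadth-first search for that purpose; whether the authors measure distance in exactly this way is an assumption, and the toy graph is hypothetical.

```python
from collections import deque


def graph_distance(graph, source, target):
    """Return the fewest hops between two concepts, or None if unreachable."""
    if source == target:
        return 0
    seen = {source}
    queue = deque([(source, 0)])
    while queue:
        node, dist = queue.popleft()
        for neighbor in graph.get(node, ()):
            if neighbor == target:
                return dist + 1
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, dist + 1))
    return None


# Toy chain a - b - c - d, plus an isolated concept e (hypothetical data).
chain_graph = {
    "a": {"b"},
    "b": {"a", "c"},
    "c": {"b", "d"},
    "d": {"c"},
    "e": set(),
}

print(graph_distance(chain_graph, "a", "b"))
print(graph_distance(chain_graph, "a", "d"))
print(graph_distance(chain_graph, "a", "e"))
```

Pairs two hops apart already share a neighbor, so a shared-neighbor count can flag them. Pairs three or more hops apart share none, which is one plausible reason semantic information helps with the more adventurous suggestions.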

What this means for the future of research

In clear terms, the paper shows that AI can act as a kind of idea scout for scientists. By reading hundreds of thousands of abstracts, turning them into a network of concepts, and then forecasting which pairs of ideas are likely to meet in future papers, the system points researchers toward plausible but unexplored directions. It does not replace human creativity or judgment; instead, it offers a curated shortlist of surprising connections that scientists can evaluate, refine and test. Although this study focuses on materials science, the same recipe could be applied to many fields, helping researchers everywhere navigate the growing sea of scientific knowledge and discover promising paths they might otherwise miss.

Citation: Marwitz, T., Colsmann, A., Breitung, B. et al. Predicting new research directions in materials science using large language models and concept graphs. Nat Mach Intell 8, 535–544 (2026). https://doi.org/10.1038/s42256-026-01206-y

Keywords: scientific discovery, materials science, large language models, knowledge graphs, research ideation