Clear Sky Science

Teaching multimodal LLMs to comprehend 12-lead electrocardiographic images

Why Teaching Computers to Read Heart Traces Matters

Every day, millions of people have their heart activity recorded with an electrocardiogram, or ECG. Doctors usually see these recordings as printed or digital graphs full of squiggly lines. In many places, especially clinics with limited resources, only these images are available: no raw digital signals and no advanced analysis software. This study shows how a new kind of artificial intelligence (AI), one that can process both images and text, can learn to “read” ECG images directly, offering help to clinicians in exactly these image-only settings around the world.

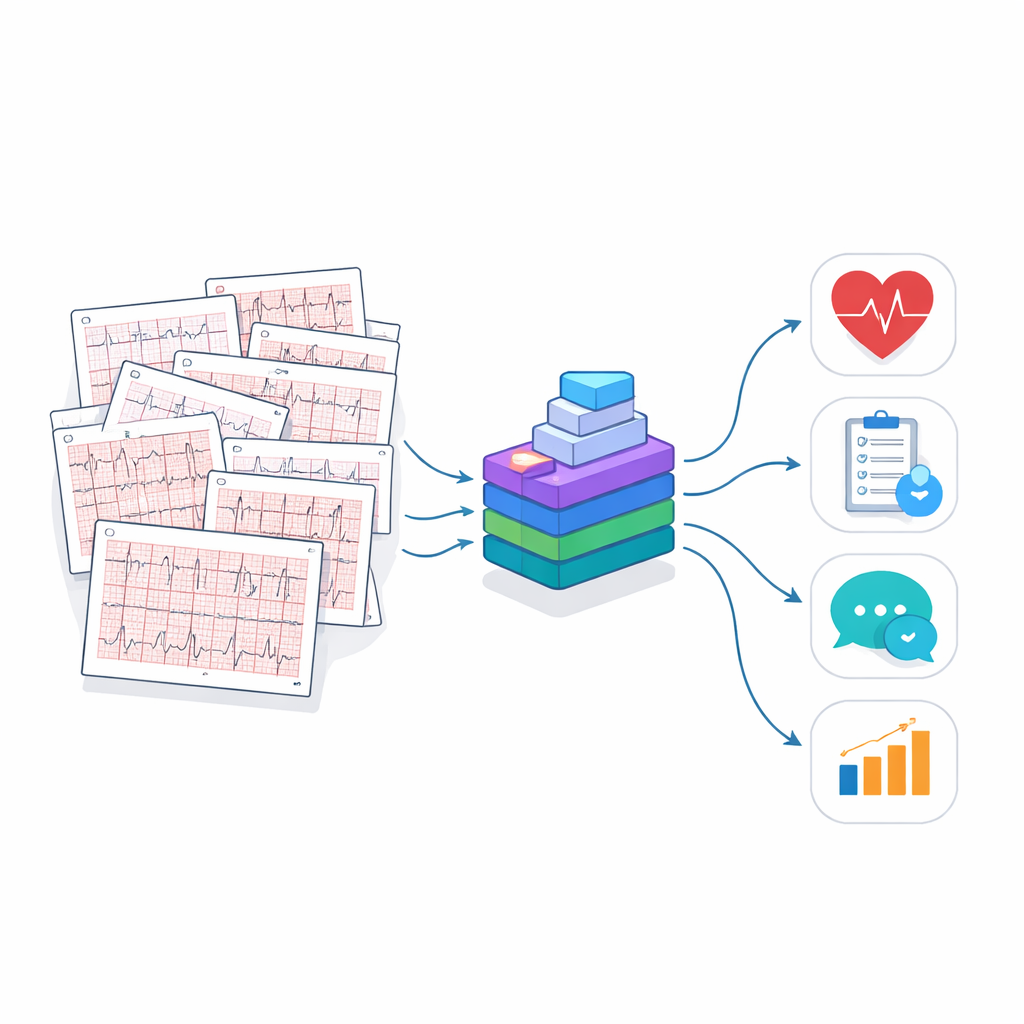

Building a Massive Library of Heart Pictures

To teach an AI system to understand ECG images, the researchers first had to create a huge, realistic training library. Most existing ECG databases store raw electrical signals instead of the familiar paper-like images doctors use. The team converted these signals into lifelike 12-lead ECG pictures, complete with gridlines and standard layouts. They also added realistic imperfections, such as wrinkles, rotations, faint lines, color changes, and even simulated camera photos, to mimic what happens when ECGs are printed, scanned, or photographed in real clinics. The underlying recordings came from several large patient cohorts in Europe, North America, and South America, helping the system learn patterns that hold across different populations and hospital setups.
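
For readers curious how a stored signal becomes a picture on grid paper, here is a minimal sketch. It is not the authors' rendering pipeline, which the summary does not describe in detail. It assumes the standard ECG paper conventions of 25 mm per second horizontally and 10 mm per millivolt vertically, and the function names (`signal_to_paper`, `rotate`, `jitter`, `augment`) are hypothetical. The two "imperfections" shown, a slight rotation and small random displacement, are crude stand-ins for the much richer effects the study describes.

```python
import math
import random

# Assumed standard ECG paper conventions (not taken from the study):
# 25 mm of paper per second, 10 mm of height per millivolt.
PAPER_SPEED_MM_PER_S = 25.0
GAIN_MM_PER_MV = 10.0


def signal_to_paper(samples_mv, fs_hz, x_offset_mm=0.0, y_baseline_mm=0.0):
    """Map voltage samples (mV) recorded at fs_hz to (x, y) points in mm on ECG paper."""
    points = []
    for i, v in enumerate(samples_mv):
        t = i / fs_hz  # time of this sample in seconds
        x = x_offset_mm + t * PAPER_SPEED_MM_PER_S
        y = y_baseline_mm + v * GAIN_MM_PER_MV
        points.append((x, y))
    return points


def rotate(points, angle_deg, cx=0.0, cy=0.0):
    """Rotate points about (cx, cy), mimicking a slightly skewed scan or photo."""
    a = math.radians(angle_deg)
    cos_a, sin_a = math.cos(a), math.sin(a)
    out = []
    for x, y in points:
        dx, dy = x - cx, y - cy
        out.append((cx + dx * cos_a - dy * sin_a, cy + dx * sin_a + dy * cos_a))
    return out


def jitter(points, sigma_mm, rng):
    """Add small Gaussian displacement, a crude stand-in for print or camera noise."""
    return [(x + rng.gauss(0.0, sigma_mm), y + rng.gauss(0.0, sigma_mm)) for x, y in points]


def augment(points, rng, max_angle_deg=3.0, sigma_mm=0.1):
    """Apply a random small rotation followed by jitter (hypothetical augmentation)."""
    angle = rng.uniform(-max_angle_deg, max_angle_deg)
    return jitter(rotate(points, angle), sigma_mm, rng)


if __name__ == "__main__":
    rng = random.Random(0)
    # A toy 1 mV step sampled at 500 Hz.
    clean = signal_to_paper([0.0, 0.0, 1.0, 1.0], fs_hz=500)
    print(clean)
    print(augment(clean, rng))
```

With these conventions, a 1 mV step is drawn 10 mm (two large grid boxes) tall, and each sample at 500 Hz advances the trace by 0.05 mm. Keeping the conversion and the distortions as separate steps makes it easy to generate many differently degraded copies of the same underlying recording.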

Teaching an AI to Understand What It Sees

Simply showing the AI millions of ECG pictures is not enough; it also has to learn how to respond to meaningful questions. The team created ECGInstruct, a collection of more than a million image-and-text pairs. Each pair links an ECG image to a task: spotting basic features of the heartbeat, recognizing abnormal rhythms, identifying signs of disease, or writing a short clinical-style report. To scale this up, the researchers used a powerful language model to help draft questions and answers, then filtered and refined them with automatic checks and expert review. This gave the AI not just raw images, but a rich set of examples of how clinicians think and talk about ECGs.
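
To make the idea of an image-and-text pair more concrete, the sketch below shows one way such a record and a simple automatic check might look. It is an illustration under stated assumptions, not the actual ECGInstruct format: the field names, the four task labels, and the rule that a drafted answer must mention every label from the original database are all hypothetical. The expert review step the study describes cannot be captured in code like this.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical labels for the four kinds of tasks described in the summary.
TASK_TYPES = {"basic_features", "rhythm", "disease", "report"}


@dataclass(frozen=True)
class InstructionPair:
    image_path: str                    # rendered ECG image
    task: str                          # one of TASK_TYPES
    question: str                      # question drafted by a language model
    answer: str                        # drafted answer awaiting checks
    reference_labels: tuple[str, ...]  # labels from the original signal database


def passes_checks(pair: InstructionPair) -> bool:
    """Hypothetical automatic filter: known task, non-empty text, consistent with labels."""
    if pair.task not in TASK_TYPES:
        return False
    if not pair.question.strip() or not pair.answer.strip():
        return False
    answer = pair.answer.lower()
    # Keep the pair only if every reference label appears in the answer,
    # a crude guard against the drafting model dropping a known finding.
    return all(label.lower() in answer for label in pair.reference_labels)


def filter_pairs(pairs: list[InstructionPair]) -> list[InstructionPair]:
    """Return only the pairs that pass the automatic checks."""
    return [p for p in pairs if passes_checks(p)]


if __name__ == "__main__":
    good = InstructionPair("ecg_001.png", "rhythm", "What is the rhythm?",
                           "The rhythm is atrial fibrillation.", ("atrial fibrillation",))
    bad = InstructionPair("ecg_002.png", "rhythm", "What is the rhythm?",
                          "Sinus rhythm.", ("atrial fibrillation",))
    print([p.image_path for p in filter_pairs([good, bad])])
```

A rule-based filter like this is cheap enough to run over a million drafted pairs, which is why automatic checks are a natural first pass before scarcer expert time is spent on review.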

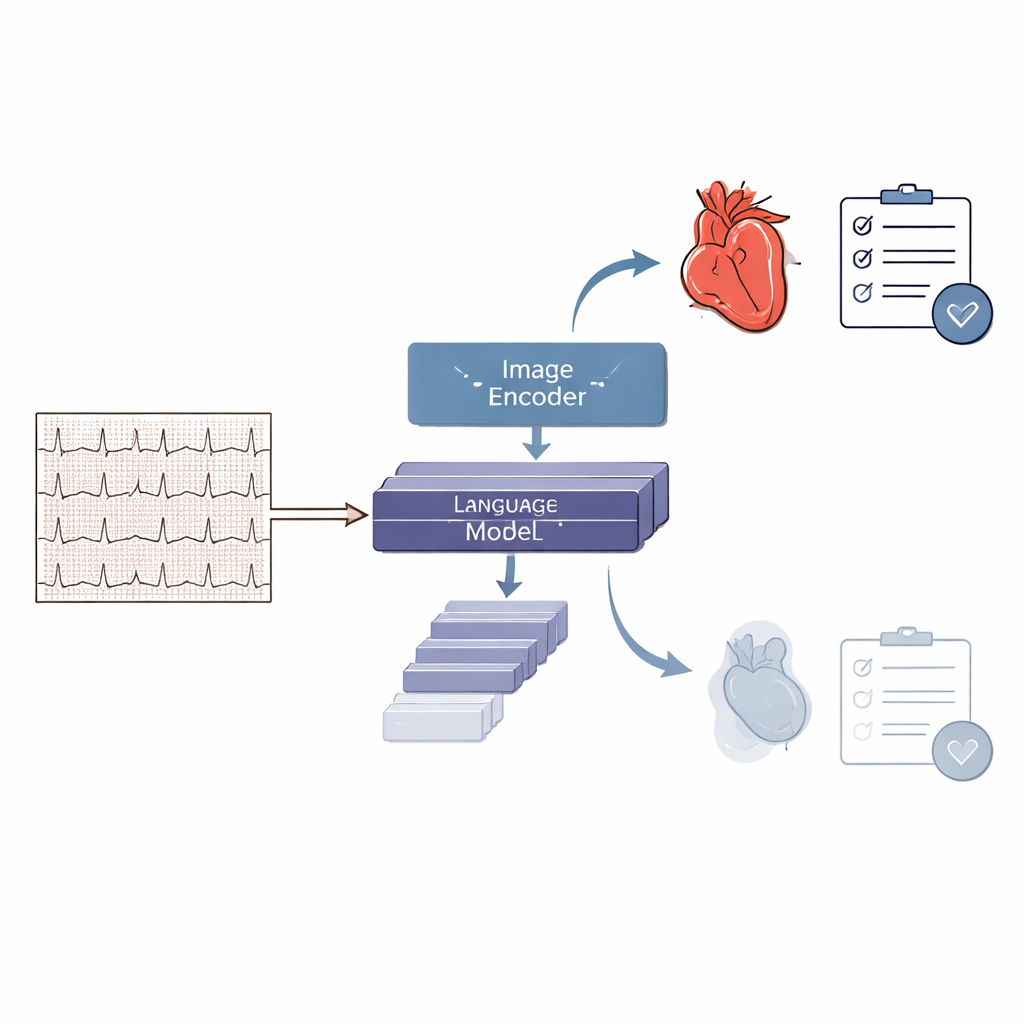

Introducing PULSE, a Specialized Heart-Reading Model

Using this large and carefully prepared dataset, the team trained PULSE, a multimodal AI model that can look at an ECG image and produce text-based interpretations. PULSE combines an image-processing module with a language module so it can connect visual patterns to written explanations and decisions. Unlike earlier systems that were restricted to a few fixed diagnoses or needed clean numerical signals, PULSE is designed to handle many types of questions, from “Is this ECG normal or abnormal?” to “Describe the rhythm and key findings.” It can also engage in multi-step conversations about a single ECG, mirroring how a clinician might reason through a difficult case.

Putting the System to the Test

To see how well PULSE works, the researchers built ECGBench, a broad test suite for ECG image understanding. ECGBench includes standard diagnosis tasks, report generation, multiple-choice questions on real-world cases, and multi-turn question–answer sessions that feel like a dialogue with a specialist. Across datasets resembling its training data as well as entirely new ones, PULSE outperformed general-purpose AI models, including widely used commercial systems, by 21–33 percentage points in accuracy. It also beat earlier ECG-focused tools that rely on raw signals, especially on tasks requiring open-ended reasoning or working from printout-style images alone. In side-by-side examples, PULSE typically produced reports closer to expert interpretations than those from leading general AI models.

What This Could Mean for Everyday Care

The study suggests that a carefully trained, open-source AI like PULSE could become a versatile assistant wherever ECG images are used. Because it works directly on pictures, it can support clinics that only have scanned or photographed printouts, and it can go beyond simple yes-or-no labels to provide richer explanations and multi-step reasoning. At the same time, the authors emphasize that the system is not yet a replacement for cardiologists. It still falls short of expert performance and must be tested carefully in real hospital settings, with attention to safety, bias, and proper oversight. Even so, this work marks an important step toward AI tools that can help clinicians make better sense of the squiggly lines that reveal the health of the human heart.

Citation: Liu, R., Bai, Y., Yue, X. et al. Teaching multimodal LLMs to comprehend 12-lead electrocardiographic images. npj Digit. Med. 9, 349 (2026). https://doi.org/10.1038/s41746-026-02551-3

Keywords: electrocardiogram, medical AI, multimodal models, cardiac diagnosis, clinical decision support