Clear Sky Science

A frequency analysis of filterbank initialisation and noise augmentation for LEAF

Why Smart Listening Machines Matter

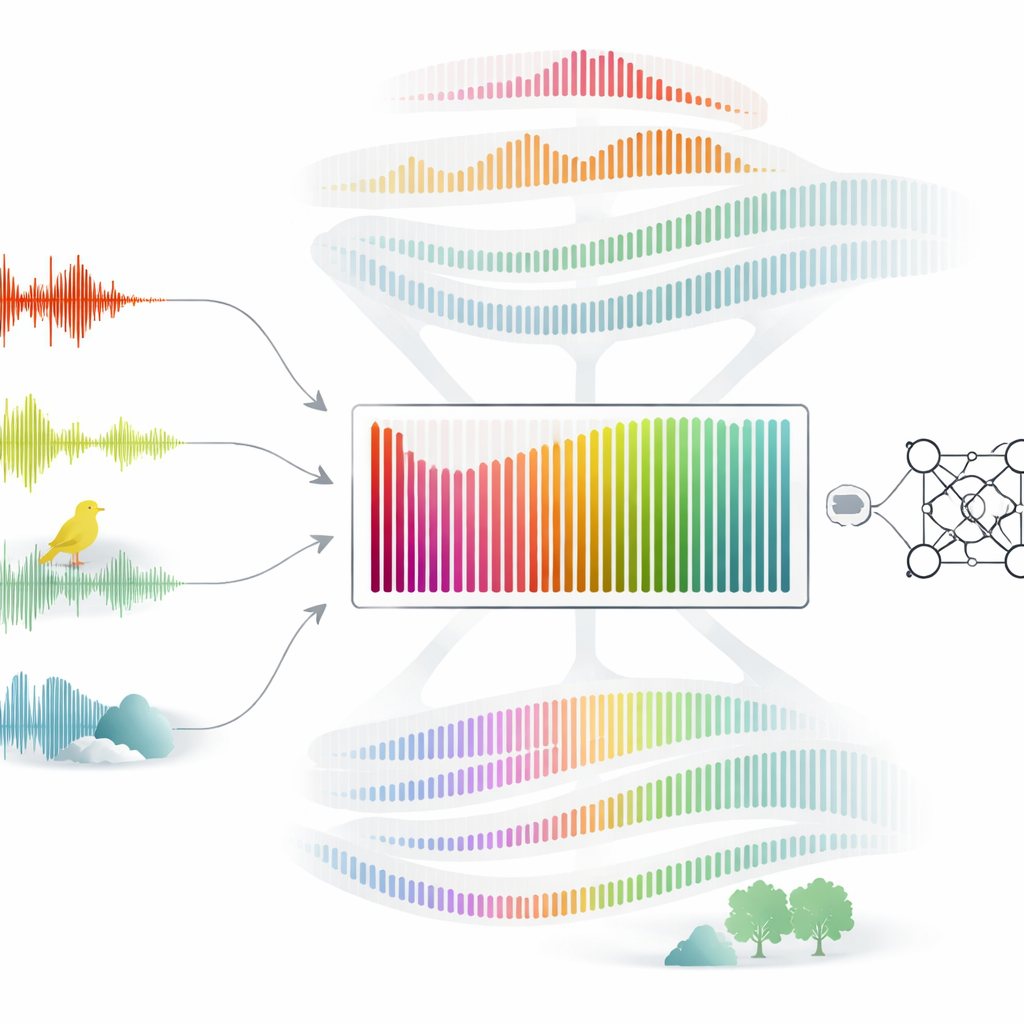

From voice assistants to bird-song monitors, modern life depends on machines that can listen. Behind the scenes, these systems transform raw sound waves into numbers that algorithms can understand. A new study examines a popular “smart ear” module called LEAF, which promises to learn the best way to represent sound for many tasks. The researchers ask a simple but important question: does this smart ear actually adapt to different listening jobs, or is it mostly locked into its starting design?

How Machines Usually Listen

Most audio-based AI systems do not work directly with raw sound. Instead, they first pass the signal through a fixed set of filters that split it into low, middle, and high frequency bands, producing time-by-frequency pictures called spectrograms. These filters are usually spaced according to how the human ear perceives pitch, most commonly on the so-called Mel scale. This approach has a long track record of success, but it bakes in assumptions about human hearing and leaves little room for the system to discover new, task-specific ways of listening.
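To make the Mel idea concrete, here is a minimal Python sketch. It assumes the widely used HTK-style formula mel = 2595 · log10(1 + f/700); other Mel variants exist, and the function names and the example of 10 filters between 0 and 8000 Hz are illustrative choices, not taken from the study. Placing filter centres evenly on the Mel scale means that, measured in hertz, neighbouring centres sit close together at low frequencies and spread out at high frequencies.

```python
import math

# Illustrative sketch; function names are hypothetical, not from the study.


def hz_to_mel(f_hz: float) -> float:
    """Convert a frequency in Hz to Mel (HTK-style formula, an assumption)."""
    return 2595.0 * math.log10(1.0 + f_hz / 700.0)


def mel_to_hz(m: float) -> float:
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)


def mel_centres(n_filters: int, f_min: float, f_max: float) -> list[float]:
    """Centre frequencies (Hz) of n_filters filters spaced evenly in Mel.

    f_min and f_max act as the outer edges; the centres are the n interior points.
    """
    m_lo, m_hi = hz_to_mel(f_min), hz_to_mel(f_max)
    step = (m_hi - m_lo) / (n_filters + 1)
    return [mel_to_hz(m_lo + step * (k + 1)) for k in range(n_filters)]


if __name__ == "__main__":
    centres = mel_centres(10, 0.0, 8000.0)
    gaps = [b - a for a, b in zip(centres, centres[1:])]
    print([round(c) for c in centres])
    print([round(g) for g in gaps])  # gaps between neighbours grow with frequency
```

The growing gaps are exactly the "human hearing assumption" the paragraph mentions: fine resolution where human pitch perception is sharp, coarse resolution higher up.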

A Promising New Kind of Digital Ear

LEAF was introduced as a compromise between rigid, hand-crafted filters and fully end-to-end systems that learn everything from scratch. It mimics classic signal-processing steps, but makes key parameters, such as the filters' positions (centre frequencies) and widths (bandwidths), learnable during training. In principle, this should let the system learn different “hearing profiles” for speech recognition, emotion detection, urban sound scenes, or bird activity. Earlier work, however, hinted that in practice only a later normalisation step in LEAF changes much, while the filterbank itself barely moves away from a Mel-based starting point.
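In the original LEAF design, each filter is a Gabor filter: a bell-shaped (Gaussian) window multiplied by a complex sinusoid, with the centre frequency and the window width as trainable parameters. The plain-Python sketch below is not the LEAF library's code; it builds one such filter using hypothetical names `eta` (centre frequency as a fraction of the sampling rate, between 0 and 0.5) and `sigma` (window width in samples), and measures where its frequency response peaks. In LEAF these two numbers would be updated during training along with the rest of the network; here they are fixed floats.

```python
import cmath
import math

# Illustrative sketch of a Gabor filter; names are hypothetical, not LEAF's API.


def gabor_impulse_response(eta: float, sigma: float, length: int) -> list[complex]:
    """Complex Gabor filter taps, centred on t = 0.

    eta:   centre frequency as a fraction of the sampling rate (0 <= eta <= 0.5)
    sigma: width of the Gaussian window, in samples
    """
    half = length // 2
    # Normalisation keeps the peak gain close to 1 when sigma is a few samples or more
    norm = 1.0 / (math.sqrt(2.0 * math.pi) * sigma)
    taps = []
    for t in range(-half, length - half):
        envelope = norm * math.exp(-(t * t) / (2.0 * sigma * sigma))
        taps.append(envelope * cmath.exp(2j * math.pi * eta * t))
    return taps


def magnitude_response(taps: list[complex], freq: float) -> float:
    """Magnitude of the filter's frequency response at freq (fraction of sampling rate)."""
    half = len(taps) // 2
    total = sum(h * cmath.exp(-2j * math.pi * freq * (n - half))
                for n, h in enumerate(taps))
    return abs(total)


if __name__ == "__main__":
    taps = gabor_impulse_response(eta=0.1, sigma=8.0, length=101)
    for f in (0.05, 0.1, 0.15):
        # The response is largest at the centre frequency eta = 0.1
        print(f, round(magnitude_response(taps, f), 3))
```

Moving `eta` slides the whole response along the frequency axis, while a wider window in time (larger `sigma`) gives a narrower band in frequency. These two knobs correspond roughly to the "positions and widths" whose changes the study tracks.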

Putting LEAF to the Test Across Many Sounds

The authors systematically probe LEAF’s behaviour on four very different listening tasks: recognising spoken keywords, detecting emotion in children’s speech, classifying everyday acoustic scenes, and detecting bird vocalisations. They repeat each experiment with several starting filter layouts: Mel and Bark scales (both inspired by human hearing), evenly spaced filters across frequency, and an extreme “constant” setup where all filters initially listen to the same narrow band. They track both performance and how much the filter positions and widths actually change. The result: as long as the initial filters already span the full frequency range, the system reaches high accuracy and the filters barely move, regardless of whether they follow Mel, Bark, or simple linear spacing.
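The four starting layouts can be pictured as lists of initial centre frequencies. The Python sketch below rests on stated assumptions: Mel uses the HTK formula, Bark uses Traunmüller's (1990) approximation without its low- and high-end corrections, the “constant” layout puts every filter at a single hypothetical frequency chosen by the caller, and `mean_relative_shift` is a hypothetical drift measure, not necessarily the one used in the study. All function names are illustrative.

```python
import math

# Illustrative sketch; all names and the drift measure are hypothetical.


def hz_to_mel(f: float) -> float:
    # HTK-style Mel formula (assumption; other variants exist)
    return 2595.0 * math.log10(1.0 + f / 700.0)


def mel_to_hz(m: float) -> float:
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)


def hz_to_bark(f: float) -> float:
    # Traunmüller (1990) approximation, without its end-range corrections
    return 26.81 * f / (1960.0 + f) - 0.53


def bark_to_hz(z: float) -> float:
    # Algebraic inverse of hz_to_bark
    return 1960.0 * (z + 0.53) / (26.28 - z)


def spaced_centres(to_scale, from_scale, n, f_min, f_max):
    """n centres evenly spaced on a given scale, strictly inside (f_min, f_max)."""
    lo, hi = to_scale(f_min), to_scale(f_max)
    step = (hi - lo) / (n + 1)
    return [from_scale(lo + step * (k + 1)) for k in range(n)]


def initial_centres(layout, n, f_min, f_max, constant_hz=None):
    """Initial filter centre frequencies (Hz) for one of four layouts."""
    if layout == "mel":
        return spaced_centres(hz_to_mel, mel_to_hz, n, f_min, f_max)
    if layout == "bark":
        return spaced_centres(hz_to_bark, bark_to_hz, n, f_min, f_max)
    if layout == "linear":
        return spaced_centres(lambda f: f, lambda f: f, n, f_min, f_max)
    if layout == "constant":
        # Every filter starts at the same frequency (the value is hypothetical)
        if constant_hz is None:
            raise ValueError("the constant layout needs constant_hz")
        return [float(constant_hz)] * n
    raise ValueError(f"unknown layout: {layout}")


def mean_relative_shift(before, after):
    """Hypothetical drift measure: mean of |after - before| / before over filters.

    Assumes all initial centres are positive.
    """
    return sum(abs(a - b) / b for b, a in zip(before, after)) / len(before)


if __name__ == "__main__":
    for name in ("mel", "bark", "linear"):
        print(name, [round(c) for c in initial_centres(name, 8, 0.0, 8000.0)])
    print("constant", initial_centres("constant", 8, 0.0, 8000.0, constant_hz=1000.0))
```

Mel, Bark, and linear spacing all cover the whole 0 to 8000 Hz range and differ only in how densely they sample low versus high frequencies, whereas the constant layout covers a single point. Comparing a layout before and after training with a measure like `mean_relative_shift` gives one simple way to quantify how far the filters moved.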

When the Starting Point Is Bad on Purpose

Things look different when LEAF begins from the constant setup, where every filter hears the same slice of the spectrum. Here, the system is forced to reshape its filters to cover a wider range, and the positions and widths do change noticeably. Even then, the final layout settles into a smooth, S-shaped spread across frequency, and performance never fully catches up with the better initialisations. To dig deeper, the authors create heavily modified versions of the speech-recognition data: in one case, only a narrow band of frequencies is kept; in others, low- or high-pitched noise is added to mask parts of the spectrum. Surprisingly, even when important frequencies are removed or flooded with noise, the learned filters still drift toward a similar S-shaped pattern that extends into regions with little or no useful information.

What This Means for Interpreting Machine Hearing

These findings suggest that LEAF’s filterbank is much more stubborn than its “learnable” label implies. Once the filters start with reasonable coverage of the spectrum, they have little incentive to adapt to the specific frequency patterns of birds, human emotion, or city sounds. Instead, the heavy lifting appears to be done by later parts of the network. This weakens one of LEAF’s advertised advantages: that inspecting its filters would reveal how the model tunes itself to different tasks. The authors argue that future work should adjust the training procedure, for example by giving the early filter layers their own learning rates or by using dedicated optimisation techniques, to encourage more meaningful changes in these first listening stages.

Take-Home Message for Non-Experts

In everyday terms, this study shows that giving an AI a “flexible ear” does not guarantee that it will actually listen differently when its job changes. LEAF performs well across several audio tasks, but mostly by keeping a broad, generic way of splitting sound rather than inventing new task-specific hearing strategies. For now, its strength lies in solid performance, not in the promise of giving us clear, interpretable insight into what information the system finds important in different types of sounds.

Citation: Milling, M., Triantafyllopoulos, A., Rampp, S.D.N. et al. A frequency analysis of filterbank initialisation and noise augmentation for LEAF. Sci Rep 16, 13410 (2026). https://doi.org/10.1038/s41598-026-49403-4

Keywords: audio deep learning, learnable frontends, filterbank initialisation, speech and sound recognition, training dynamics