Clear Sky Science

Predictive analysis of student engagement in university physical education courses based on a multimodal transformer algorithm

Why this matters for students and teachers

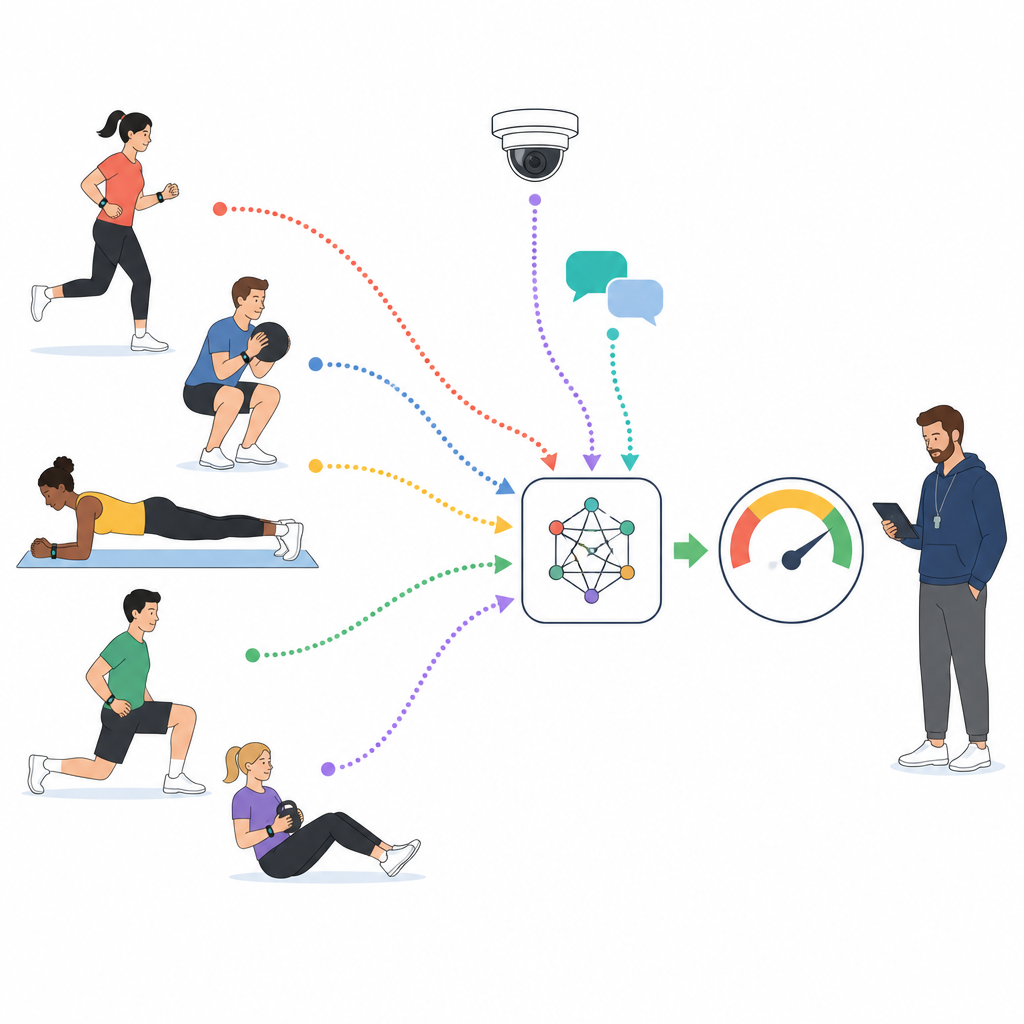

University sports classes are supposed to boost fitness, build good exercise habits, and lift mood, yet many gyms and fields still see low attendance and half‑hearted participation. This study shows how data from wearables, classroom cameras, and short written feedback can be combined to automatically estimate how engaged students really are during physical education classes, offering teachers quicker and more objective insight than traditional checklists or end‑of‑term surveys.

Turning sports classes into rich data streams

In modern physical education courses, students often wear devices that track heart rate, steps, and movement, while cameras capture group activities and online platforms collect short messages and comments. The researchers tap into a large national dataset that brings these streams together for 1,000 university students over thousands of hours of class time. Each ten‑minute slice of class is tagged by trained experts as showing low, medium, or high participation, based on how students move, how hard their bodies are working, and what they say about the lesson. These labeled slices become the training ground for a computer model that learns to read engagement from raw data rather than from scattered impressions.
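As a rough illustration of the ten-minute slicing described above, the sketch below groups timestamped heart-rate samples into fixed windows and averages each one. The sample values, the function names, and the choice of a simple mean are assumptions made for illustration; the study's actual preprocessing is not described here.

```python
from collections import defaultdict

WINDOW_SECONDS = 600  # ten-minute slices, matching the labeling unit in the text


def slice_into_windows(samples, window_seconds=WINDOW_SECONDS):
    """Group (timestamp_seconds, heart_rate) pairs by window index."""
    windows = defaultdict(list)
    for timestamp, heart_rate in samples:
        windows[int(timestamp // window_seconds)].append(heart_rate)
    return dict(windows)


def mean_per_window(windows):
    """Average the readings inside each window, in window order."""
    return {idx: sum(vals) / len(vals) for idx, vals in sorted(windows.items())}


# Hypothetical readings: seconds since class start, beats per minute.
samples = [(0, 90), (300, 110), (600, 130), (900, 150), (1200, 100)]

windows = slice_into_windows(samples)
summary = mean_per_window(windows)
print(summary)
```

Each window index would then be paired with the expert label (low, medium, or high) assigned to that slice.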

Teaching a model to read body, face, and words

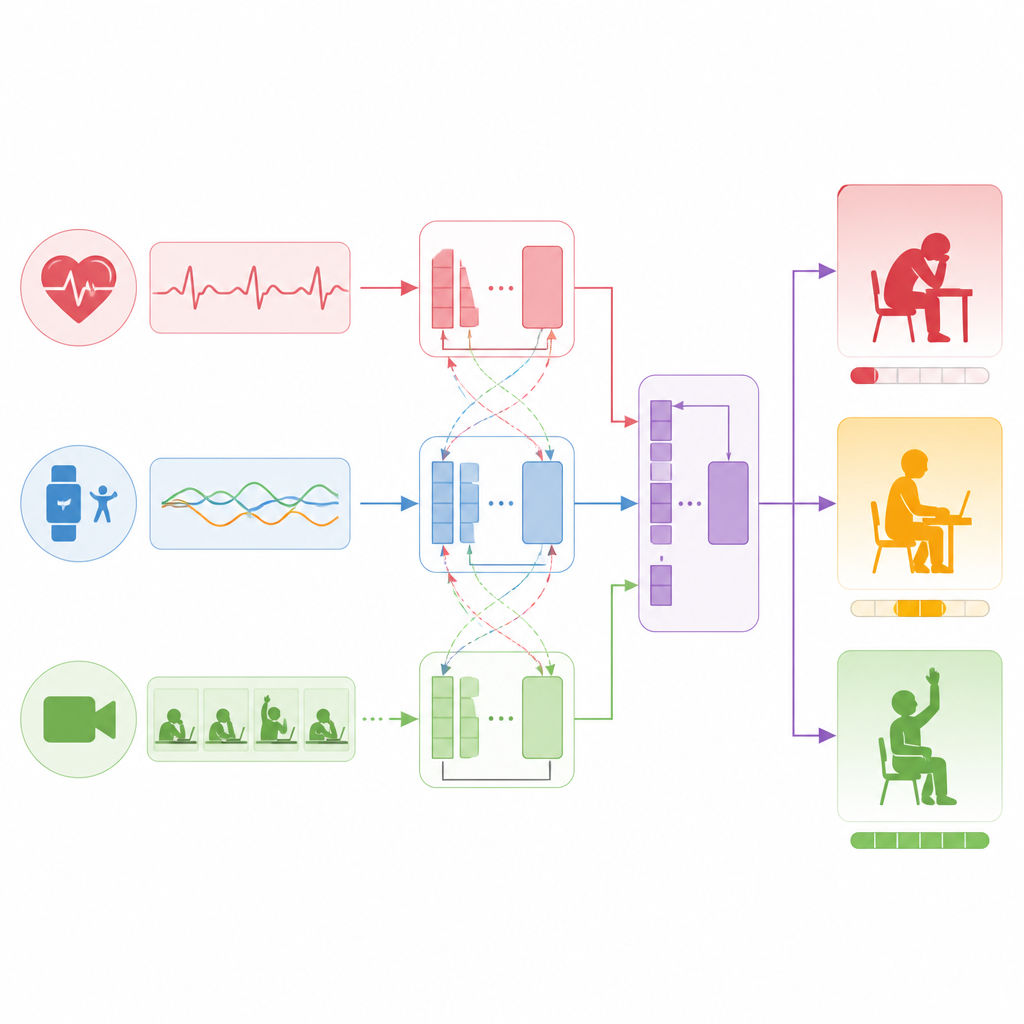

Instead of relying on a single source of information, the study builds a layered model that treats sensors, text, and video as equal partners. For sensor signals such as heart rate and acceleration, a sequence‑processing network learns to spot patterns like sustained effort or repeated bursts of activity. For student comments and short reflections, a language model distills whole sentences into compact representations that encode attitude and tone. For video clips, another network breaks each frame into patches and learns how facial expressions, body posture, and movement patterns unfold over time. All three streams are then translated into a shared numerical space so that the model can compare and combine them effectively.
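The idea of a shared numerical space can be sketched with one linear projection per stream, each mapping that stream's features to a common length. The feature sizes, the shared size, and the random weights below are placeholders, not values from the study; in a trained model the weights would be learned.

```python
import random


def make_projection(in_dim, out_dim, rng):
    """Build an out_dim x in_dim weight matrix with small random entries."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(in_dim)] for _ in range(out_dim)]


def project(weights, vector):
    """Multiply a weight matrix by a feature vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in weights]


rng = random.Random(0)
SHARED_DIM = 3  # assumed size of the shared space
feature_dims = {"sensor": 4, "text": 6, "video": 8}  # assumed per-stream sizes

projections = {name: make_projection(dim, SHARED_DIM, rng)
               for name, dim in feature_dims.items()}

# Dummy feature vectors standing in for the encoder outputs of each stream.
features = {name: [1.0] * dim for name, dim in feature_dims.items()}

shared = {name: project(projections[name], features[name]) for name in feature_dims}
for name, vec in shared.items():
    print(name, len(vec))  # every stream now has SHARED_DIM numbers
```

Once all streams have the same length, they can be compared or combined directly.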

How the model connects signals to engagement

The heart of the approach is a technique that allows different data streams to pay attention to one another. First, the model strengthens each stream individually, learning internal structure such as trends in heart rate or key moments in a video. Next, it links the streams, asking questions such as which time periods in the sensor data match written mentions of feeling tired, or which video segments line up with language that suggests excitement. By learning these cross‑links, the system builds a fused picture of what is happening with each student during a ten‑minute window. Finally, this combined picture feeds a simple output layer that produces both a continuous engagement score and a three‑level category.
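The cross-linking step can be illustrated with scaled dot-product attention, the standard mechanism in transformer models. This is a minimal sketch that assumes text embeddings act as queries and sensor time steps act as keys and values; the vectors are invented, and the study's exact formulation may differ.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries, keys, values):
    """For each query, weight the values by query-key similarity."""
    dim = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim) for k in keys]
        weights = softmax(scores)
        fused = [sum(w * v[j] for w, v in zip(weights, values))
                 for j in range(len(values[0]))]
        outputs.append(fused)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical embeddings: one text query (say, a comment about feeling tired)
# and three sensor time steps in the same two-dimensional shared space.
text_queries = [[0.0, 4.0]]
sensor_steps = [[1.0, 0.0], [0.0, 1.0], [0.0, 2.0]]

fused, weights = cross_attention(text_queries, sensor_steps, sensor_steps)
print(weights[0])  # the third sensor step receives the largest weight
```

The attention weights show which sensor time steps the text query lines up with, which is the kind of cross-link the paragraph above describes.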

How well the system works in practice

When the researchers compare their multimodal model with a range of existing methods that use only sensors, only video, or just two types of data, they find clear gains. The new system cuts prediction error by more than a fifth compared with a strong sensor‑only baseline and reaches over 90 percent accuracy in classifying engagement levels. Importantly, it does so quickly enough to be useful during class, needing about two tenths of a second to process ten minutes of data for one student. Tests that remove one data type at a time show that all three sources are valuable, with video contributing the most, followed by text and then sensors. Extra analysis of the model’s internal attention patterns suggests that it focuses on sensible cues, such as linking rising heart rate with active movement and later fatigue.
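Two of the figures above lend themselves to quick arithmetic. The sketch below shows how a relative error reduction is computed and how the per-student processing time scales to a whole class. The error values and the class size are hypothetical, chosen only to illustrate the calculation; only the figure of roughly 0.2 seconds per student per ten-minute window comes from the text.

```python
def relative_reduction(baseline_error, model_error):
    """Fraction by which the model lowers error relative to the baseline."""
    return (baseline_error - model_error) / baseline_error


def class_processing_seconds(students, seconds_per_student=0.2):
    """Time to process one ten-minute window for a class, one student at a time."""
    return students * seconds_per_student


# Hypothetical error values, not taken from the study.
reduction = relative_reduction(baseline_error=0.50, model_error=0.39)
print(f"relative error reduction: {reduction:.0%}")

# Hypothetical class size; 0.2 s per student is the figure quoted in the text.
total = class_processing_seconds(students=40)
print(f"seconds per ten-minute window for the class: {total:.1f}")
```

Under these assumptions, even sequential processing of a full class takes a few seconds per window, far less than the ten minutes the window itself covers.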

What this could mean for future sports classes

The authors conclude that a carefully designed multimodal system can provide timely and fairly accurate pictures of student involvement in physical education, moving evaluation away from rough impressions toward continuous, data‑driven insight. While the approach depends on cameras and wearables and raises privacy and fairness questions, it points to a future in which teachers receive real‑time feedback about when students are focused, excited, or drifting, and can adjust activities on the spot rather than waiting for end‑of‑semester surveys.

Citation: Li, J. Predictive analysis of student engagement in university physical education courses based on a multimodal transformer algorithm. Sci Rep 16, 15123 (2026). https://doi.org/10.1038/s41598-026-45928-w

Keywords: student engagement, physical education, multimodal learning, transformer model, wearable sensors