Clear Sky Science

Automated kidney stone segmentation from flexible ureteroscopy videos using a U-net model: A preliminary feasibility study

Helping Surgeons See Hidden Kidney Stones

Kidney stones are a painful and increasingly common problem, and one of the main ways to treat them is to thread a tiny camera into the kidney and break the stones apart with a laser. But inside the body, the view can be messy: blood, dust from shattered stone, and motion from breathing all make it hard for surgeons to clearly see what they are doing. This study explores whether artificial intelligence (AI) can act like a real‑time highlighter for kidney stones during surgery, making them stand out more clearly on the screen and potentially making treatment faster and safer.

Why Kidney Stones Are Hard to Treat

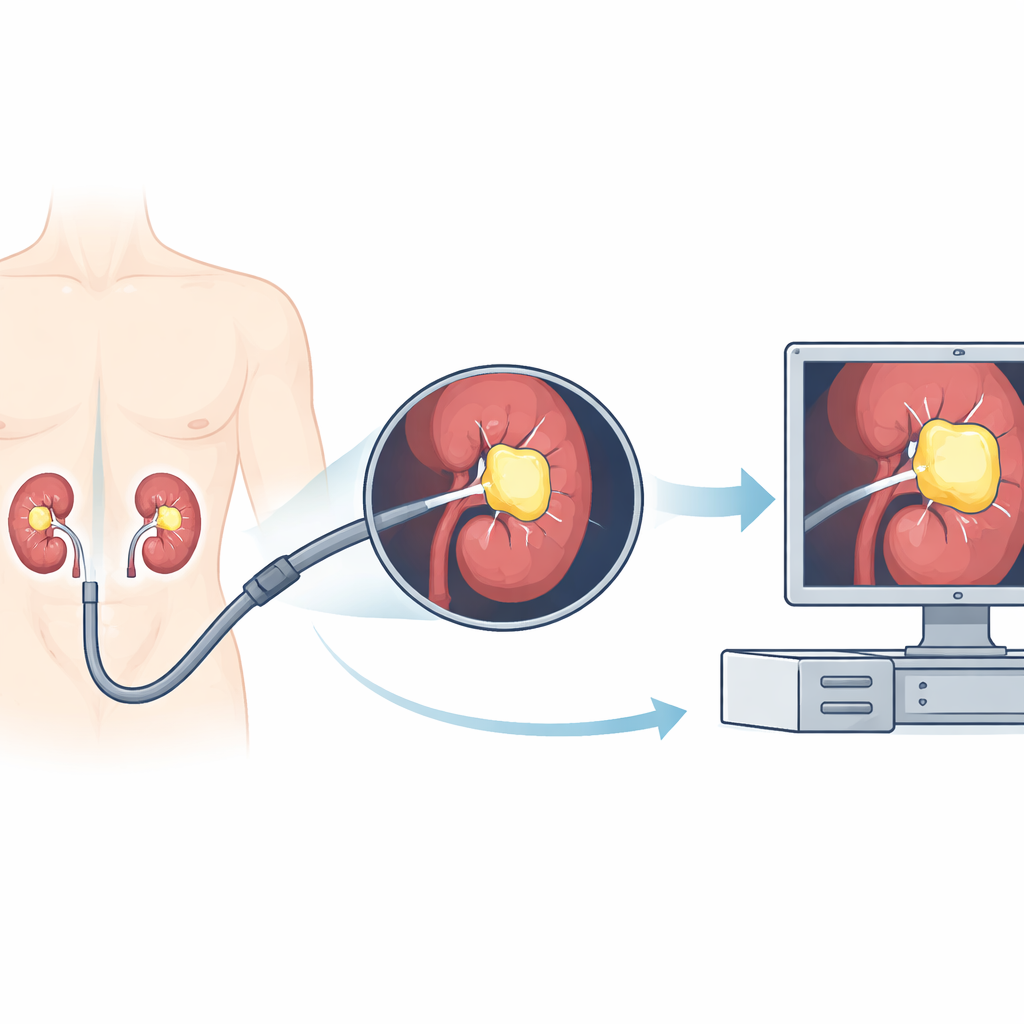

Kidney stones affect a large share of adults worldwide and can cause severe pain, infections, and even kidney failure. Modern treatment often uses flexible ureteroscopy, where doctors guide a bendable scope with a camera through the urinary tract into the kidney. Once there, they use a laser to break stones into smaller pieces. However, the camera image is far from perfect. Sudden bleeding, swirling dust from the laser, changes in lighting, and rapid motion can all blur the view. Surgeons must constantly decide where the stone ends and the surrounding tissue begins. If they miss fragments, patients may need repeat procedures; if they misjudge the field, there is a higher risk of complications.

Teaching a Computer to Spot Stones

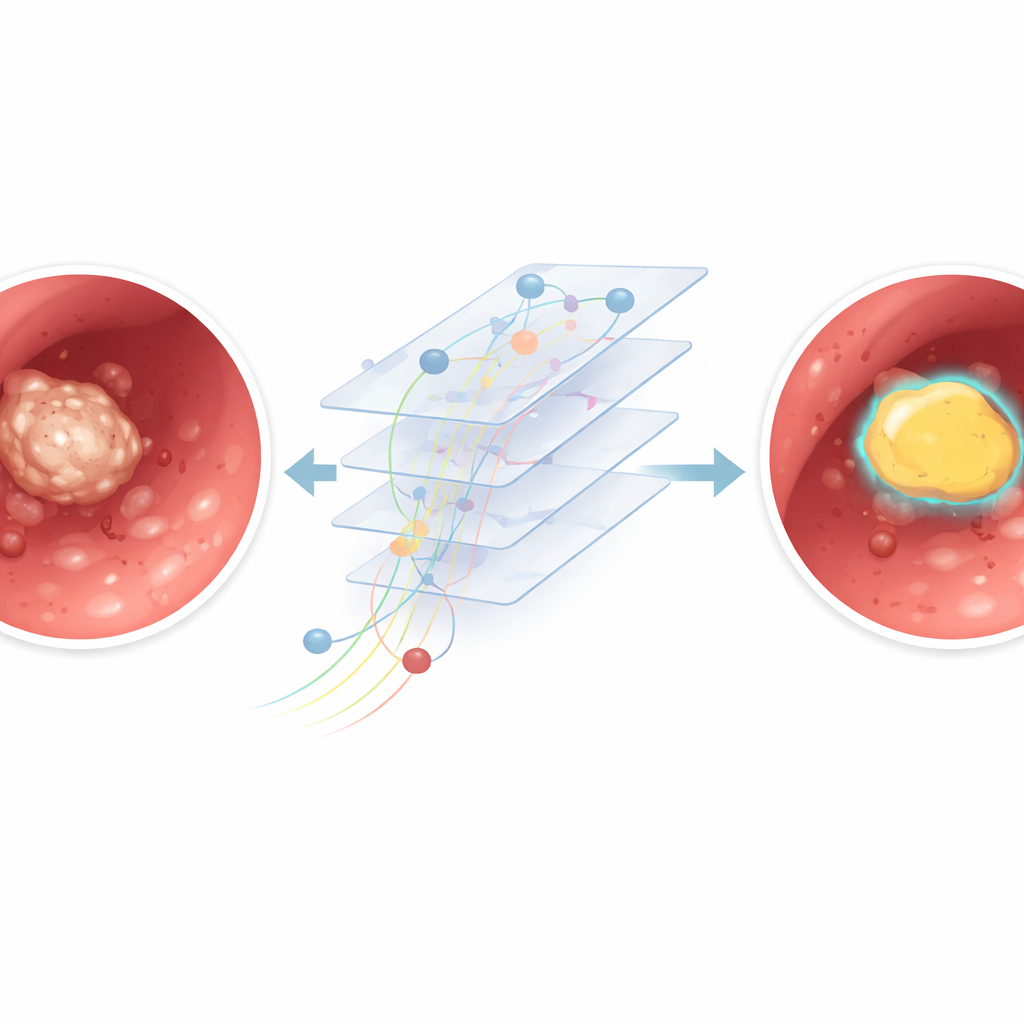

To tackle this challenge, the researchers trained a computer program to automatically outline kidney stones in the video stream from the endoscope. They collected 12 full surgery recordings from different patients, adding up to about 11 hours of real‑world footage. From these videos, they pulled out thousands of individual frames and built a semi‑automatic system to mark where the stones were. First, human reviewers drew simple boxes around visible stones. Then a separate tool generated more precise shapes inside those boxes, and medical experts checked that these matched the true stone areas. These paired images and outlines were used to train a deep learning model based on the U‑Net architecture, a widely used type of network for image segmentation that labels each pixel in a frame as either stone or background.
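To give a feel for how a rough box can constrain a finer outline, here is a minimal sketch in Python. It is not the tool used in the study, which this summary does not name. The function names, the toy 6x6 frame, and the idea of reporting a fill ratio for expert review are all assumptions made for illustration, and plain nested lists stand in for image arrays.

```python
def clip_mask_to_box(mask, box):
    """Zero out every mask pixel outside box = (x0, y0, x1, y1), end-exclusive."""
    x0, y0, x1, y1 = box
    return [
        [1 if value and x0 <= x < x1 and y0 <= y < y1 else 0
         for x, value in enumerate(row)]
        for y, row in enumerate(mask)
    ]


def box_fill_ratio(mask, box):
    """Fraction of the box area covered by the clipped mask."""
    x0, y0, x1, y1 = box
    area = (x1 - x0) * (y1 - y0)
    if area <= 0:
        return 0.0
    covered = sum(sum(row) for row in clip_mask_to_box(mask, box))
    return covered / area


# Toy 6x6 frame (made-up data): a candidate shape that spills outside the box.
candidate = [[1 if 1 <= x < 5 and 1 <= y < 5 else 0 for x in range(6)]
             for y in range(6)]
box = (2, 2, 6, 6)  # hypothetical reviewer-drawn box

refined = clip_mask_to_box(candidate, box)
ratio = box_fill_ratio(candidate, box)
print(sum(sum(row) for row in refined), ratio)
```

A very low or very high fill ratio could be one simple signal that a generated shape deserves a closer look from an expert, although the study's actual review criteria are not described here.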

How Well the AI Model Performed

The team carefully split their data so that some surgeries were used to teach the model, some to tune its settings during development, and some were kept completely separate to test how well it would work on new, unseen operations. On these held‑out videos, the AI correctly classified most pixels in each frame and showed a strong overlap between its stone outlines and the expert markings. In simple terms, when the stone was clearly visible and the camera image was sharp, the system drew a tight, accurate boundary around it. The model was also fast: on a standard graphics card, it processed around 30 frames per second, similar to the speed of a live video feed, suggesting that real‑time use in the operating room is technically feasible.
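Splitting by surgery rather than by individual frame matters because neighboring frames from the same operation look almost identical, so mixing them across groups would make the test too easy. The sketch below shows one way such a split could be done in Python. The number of surgeries (12) comes from the study, but the group sizes (2 for tuning, 2 for testing), the random seed, and all names are assumptions; this summary does not state the actual split.

```python
import random


def split_by_surgery(frames, n_val=2, n_test=2, seed=0):
    """Assign whole surgeries to train/val/test so no surgery spans two groups.

    frames: list of (surgery_id, frame_name) pairs.
    n_val, n_test: assumed group sizes, not taken from the study.
    """
    surgery_ids = sorted({sid for sid, _ in frames})
    rng = random.Random(seed)
    rng.shuffle(surgery_ids)

    test_ids = set(surgery_ids[:n_test])
    val_ids = set(surgery_ids[n_test:n_test + n_val])

    splits = {"train": [], "val": [], "test": []}
    for sid, name in frames:
        if sid in test_ids:
            splits["test"].append((sid, name))
        elif sid in val_ids:
            splits["val"].append((sid, name))
        else:
            splits["train"].append((sid, name))
    return splits


# Made-up frame list: 12 surgeries with 5 frames each.
frames = [(f"surgery_{s:02d}", f"frame_{i:04d}.png")
          for s in range(12) for i in range(5)]
splits = split_by_surgery(frames)

for name, items in splits.items():
    surgeries = sorted({sid for sid, _ in items})
    print(name, len(items), "frames from", len(surgeries), "surgeries")
```

Because every frame from a given operation lands in exactly one group, the test score reflects how the model handles patients it has never seen.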

When the View Gets Messy

Performance dropped when the video looked more like a snowstorm than a clear picture. In frames with heavy bleeding, thick dust clouds from the laser, or strong motion blur, the model sometimes confused bright debris with actual stone or missed parts of the stone altogether. A closer analysis compared “clear” frames with minimal clutter to “blurry” frames full of artifacts. The AI did noticeably better in the clear group, confirming that poor visibility makes precise outlining harder even for a trained algorithm. Because the model analyzes each frame on its own, it cannot yet use motion over time to distinguish a stable stone from fleeting dust or blood streaks.
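A comparison like this can be sketched by scoring each frame and then averaging the scores within each group. The example below uses the Dice coefficient, one common measure of overlap between a predicted outline and an expert outline. Which overlap measure the study reported is not stated in this summary, so treat Dice as an assumption, along with the function names and the tiny made-up masks.

```python
def dice(pred, truth):
    """Dice overlap between two binary masks given as nested lists of 0/1.

    Returns 2 * |pred AND truth| / (|pred| + |truth|), or 1.0 if both are empty.
    """
    p = [v for row in pred for v in row]
    t = [v for row in truth for v in row]
    intersection = sum(1 for a, b in zip(p, t) if a and b)
    total = sum(1 for a in p if a) + sum(1 for b in t if b)
    return 1.0 if total == 0 else 2.0 * intersection / total


def mean_dice_by_group(records):
    """Average the per-frame Dice score within each visibility group.

    records: list of (group_label, predicted_mask, expert_mask).
    """
    sums, counts = {}, {}
    for group, pred, truth in records:
        sums[group] = sums.get(group, 0.0) + dice(pred, truth)
        counts[group] = counts.get(group, 0) + 1
    return {group: sums[group] / counts[group] for group in sums}


# Made-up 3x3 masks: the "clear" prediction matches the expert outline,
# the "blurry" one misses part of the stone and marks a stray pixel.
truth = [[0, 1, 1],
         [0, 1, 1],
         [0, 0, 0]]
clear_pred = [[0, 1, 1],
              [0, 1, 1],
              [0, 0, 0]]
blurry_pred = [[0, 1, 0],
               [0, 1, 0],
               [1, 0, 0]]

scores = mean_dice_by_group([
    ("clear", clear_pred, truth),
    ("blurry", blurry_pred, truth),
])
print(scores)
```

A score of 1.0 means the two outlines match pixel for pixel, and lower values mean the prediction missed stone, marked non-stone areas, or both.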

What This Could Mean for Future Surgeries

This work is an early step rather than a ready‑to‑use hospital tool. The study used a modest number of videos from a single center, with a limited set of camera types, and the annotation process may still contain human and algorithmic imperfections. The system has not yet been embedded into a full surgical workflow, where reliability, safety checks, and hardware limits matter greatly. Still, the results show that an AI model can learn to find and outline kidney stones directly from real surgical videos and keep up with live speeds. With larger and more diverse datasets, smarter use of information across consecutive frames, and careful testing in real operating rooms, similar systems could eventually assist surgeons by making hidden stones stand out more clearly, reducing missed fragments and improving patient care.

Citation: El Hajj, A., Bou Mrad, A., Malik, E. et al. Automated kidney stone segmentation from flexible ureteroscopy videos using a U-net model: A preliminary feasibility study. Sci Rep 16, 14542 (2026). https://doi.org/10.1038/s41598-026-45143-7

Keywords: kidney stones, surgical AI, endoscopy video, medical imaging, deep learning