Clear Sky Science

Artificial intelligence technology for music teaching reform mode under DCNN algorithm

Smarter Music Lessons for Everyday Students

Imagine practicing piano or singing and getting instant, objective feedback on whether your notes are in tune, your rhythm is steady, and your expression matches the mood of the piece—without waiting for the next lesson. This study explores how artificial intelligence can play the role of an ever-present, tireless assistant in music education, helping both teachers and students move beyond guesswork toward data-driven, personalized learning.

Why Traditional Music Classes Fall Short

Conventional music teaching depends heavily on a teacher’s ear, time, and attention. In crowded classrooms or busy studios, it is hard to give every student detailed, continuous feedback. Assessments can be subjective, and tailoring practice plans to each learner’s needs is time-consuming. The authors argue that computers, which excel at spotting patterns in sound, can help fill these gaps. By analyzing short audio clips—about ten seconds long—an AI system can track pitch, rhythm, and tone, and translate this information into concrete suggestions that support more precise and fair evaluation.

How the New Listening Engine Works

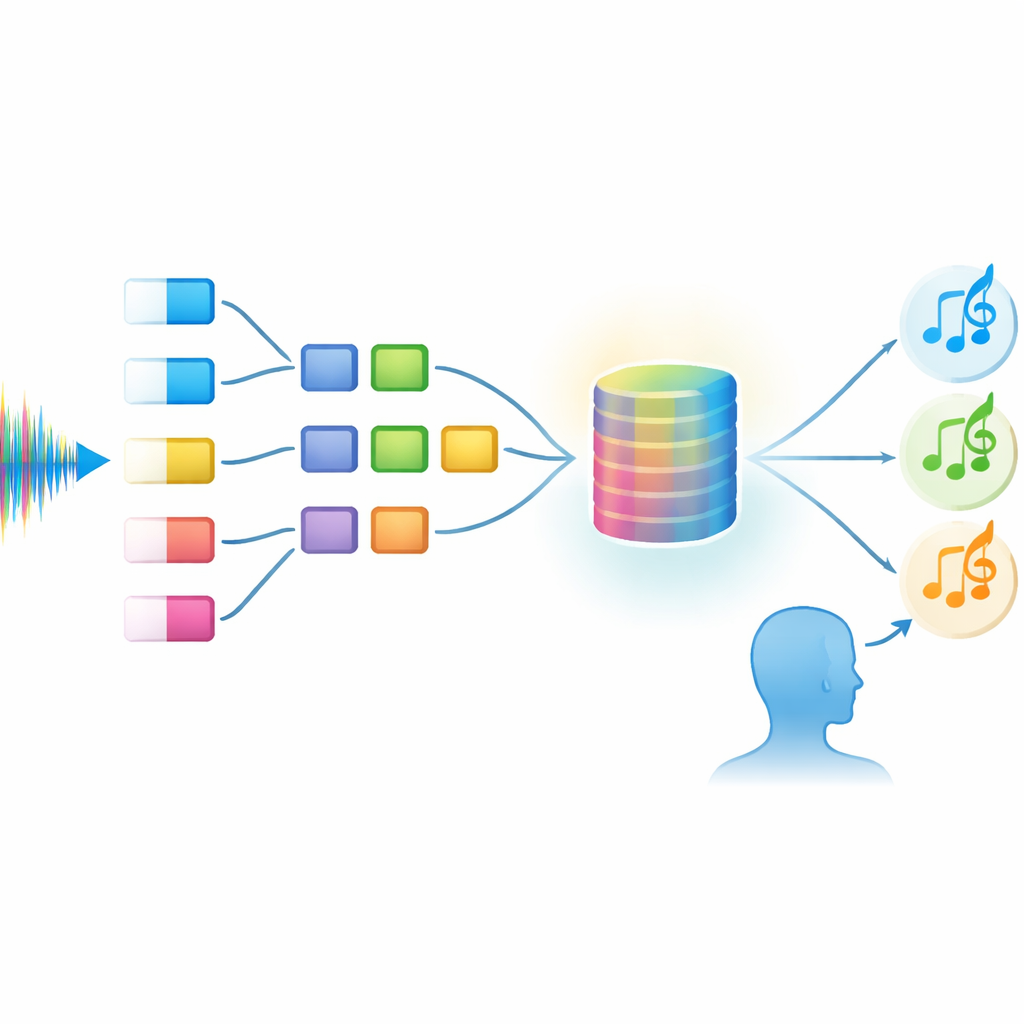

At the heart of the study is a new audio recognition model with a complex name: MBFN-DCAM. In simple terms, it is a digital "ear" built from several cooperating parts. First, the system breaks incoming sound into many parallel streams so it can pay attention to different aspects of the music, such as timbre and contour, at the same time. Special filters called dilated convolutions, which sample their input at widening gaps, allow the system to "listen" across the full ten-second span without a matching growth in model size, so it can understand not just isolated notes but whole musical phrases. An attention mechanism then highlights the most informative slices of sound, helping the system stay focused despite classroom noise or recording imperfections.
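To make the point about dilated convolutions concrete, the short sketch below counts how many stacked filter layers are needed before one output can "hear" a whole ten-second clip. It is an illustration under stated assumptions, not the MBFN-DCAM architecture: the frame rate of 100 frames per second, the kernel size of 3, and the doubling dilation schedule are all chosen here for the example and do not come from the study.

```python
def receptive_field(kernel_size, dilations):
    """Frames seen by one output of stacked stride-1 1D convolutions.

    Each layer with dilation d widens the view by (kernel_size - 1) * d frames.
    """
    return 1 + sum((kernel_size - 1) * d for d in dilations)


def layers_needed(target_frames, kernel_size, dilation_for_layer):
    """Add layers until the receptive field covers target_frames."""
    dilations = []
    while receptive_field(kernel_size, dilations) < target_frames:
        dilations.append(dilation_for_layer(len(dilations)))
    return len(dilations)


# Assumed values for illustration only (not taken from the study).
FRAMES_PER_SECOND = 100   # e.g. one spectrogram frame every 10 ms
CLIP_SECONDS = 10         # clip length mentioned in the article
KERNEL_SIZE = 3

target = FRAMES_PER_SECOND * CLIP_SECONDS

# Ordinary convolutions: every layer has dilation 1.
plain_layers = layers_needed(target, KERNEL_SIZE, lambda i: 1)

# Dilated convolutions: dilation doubles at each layer (1, 2, 4, ...).
dilated_layers = layers_needed(target, KERNEL_SIZE, lambda i: 2 ** i)

print(f"frames to cover: {target}")
print(f"layers without dilation: {plain_layers}")
print(f"layers with doubling dilation: {dilated_layers}")
```

Under these assumptions, the undilated stack needs 500 layers to span 1,000 frames, while the doubling schedule needs 9. This is the sense in which dilation lets a model cover a long span without growing in proportion.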

From Raw Sound to Practical Teaching Tips

Unlike many technical papers that stop at reporting accuracy numbers, this study follows the full journey from recognition to real teaching impact. The model is trained and tested on 5,000 carefully selected clips from a large public audio collection, augmented to mimic real classrooms with echoes and background noise. After the system learns to reliably identify different musical events, the researchers design a direct mapping from what the AI hears to what a student should do next. For example, if the system detects frequent pitch errors, it can trigger specific pitch-matching exercises; if a student’s timing is uneven, it recommends beat training; if emotional expression seems flat, it suggests model performances and targeted repetition.
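The mapping from what the system hears to what a student should practice can be pictured as a small set of rules. The sketch below is a hypothetical illustration of that idea, not the authors' implementation: the `ClipAnalysis` fields, the threshold values, and the `recommend` function are all invented for this example, and only the three exercise types come from the description above.

```python
from dataclasses import dataclass


@dataclass
class ClipAnalysis:
    """Hypothetical summary of one analyzed practice clip."""
    pitch_error_rate: float     # fraction of notes judged off-pitch, 0.0 to 1.0
    timing_deviation_ms: float  # average distance from the beat, in milliseconds
    expression_score: float     # 0.0 (flat) to 1.0 (expressive)


# Illustrative thresholds; a real system would need to calibrate these.
PITCH_ERROR_LIMIT = 0.20
TIMING_LIMIT_MS = 40.0
EXPRESSION_FLOOR = 0.50


def recommend(analysis):
    """Return the practice suggestions triggered by one clip's analysis."""
    suggestions = []
    if analysis.pitch_error_rate > PITCH_ERROR_LIMIT:
        suggestions.append("pitch-matching exercises")
    if analysis.timing_deviation_ms > TIMING_LIMIT_MS:
        suggestions.append("beat training")
    if analysis.expression_score < EXPRESSION_FLOOR:
        suggestions.append("model performances and targeted repetition")
    return suggestions


# Example: a student who is in tune but rushes the beat and plays flatly.
clip = ClipAnalysis(pitch_error_rate=0.05,
                    timing_deviation_ms=65.0,
                    expression_score=0.30)
print(recommend(clip))
```

Keeping the rules separate from the recognition model means a teacher could adjust the thresholds or the suggested exercises without retraining anything.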

Proof That AI Help Really Changes Learning

To see whether this digital ear does more than crunch numbers, the authors run a randomized controlled trial with 60 university music students across instrumental, vocal, and ensemble settings. Half of the students receive traditional instruction, while the other half also get real-time AI feedback. Those using the AI system show far larger improvements in pitch accuracy, report higher confidence in their musical abilities, and practice longer on their own. The AI’s scores closely match those of experienced teachers, and the system runs fast enough—about 82 milliseconds per clip on a small, low-power device—to be practical in everyday classrooms or practice rooms.

What This Means for Future Music Lessons

Overall, the study shows that a well-designed AI listener can do more than label sounds: it can power a teaching loop that links what the machine hears to what the student should practice next. The model outperforms several leading audio recognition systems while using fewer computing resources, making it suitable for affordable hardware. For learners, this could mean more objective grading, clearer guidance, and greater motivation. For teachers, it offers a tool that handles routine evaluation so they can focus on musicality and creativity. The work points toward a future where personalized, emotionally aware music coaching is available anytime, not just during a weekly lesson.

Citation: Liu, C., Shi, N. & Jiang, S. Artificial intelligence technology for music teaching reform mode under DCNN algorithm. Sci Rep 16, 14178 (2026). https://doi.org/10.1038/s41598-026-45027-w

Keywords: music education, audio recognition, artificial intelligence, personalized learning, deep learning