Clear Sky Science · en

Prototype-oriented contrastive mean-teacher for unsupervised domain adaptive object detection

Teaching Computers to Spot Objects in New Places

Modern AI systems can find cars, people, and street signs in photos with impressive accuracy—until the scenery changes. A detector trained on sunny city streets can stumble in fog, at night, or in stylized artwork. This paper introduces a new way to “teach the teacher” inside these systems so that they can adapt themselves to new conditions without requiring any new hand‑drawn boxes from humans.

Why Object Detectors Struggle When the World Changes

Object detection relies on vast collections of labeled images where every car, bus, or bike is carefully boxed. But real‑world cameras rarely match those training conditions. Different weather, lighting, or camera types shift the appearance of objects—a phenomenon known as a domain shift. When that happens, detectors trained on one domain, such as clear daytime traffic scenes, may misfire badly on another, such as foggy highways or nighttime drives. Collecting fresh labels for each new condition is expensive, so researchers seek methods that adapt detectors using only unlabeled data from the new domain.

A Self‑Teaching System with a Built‑In Guide

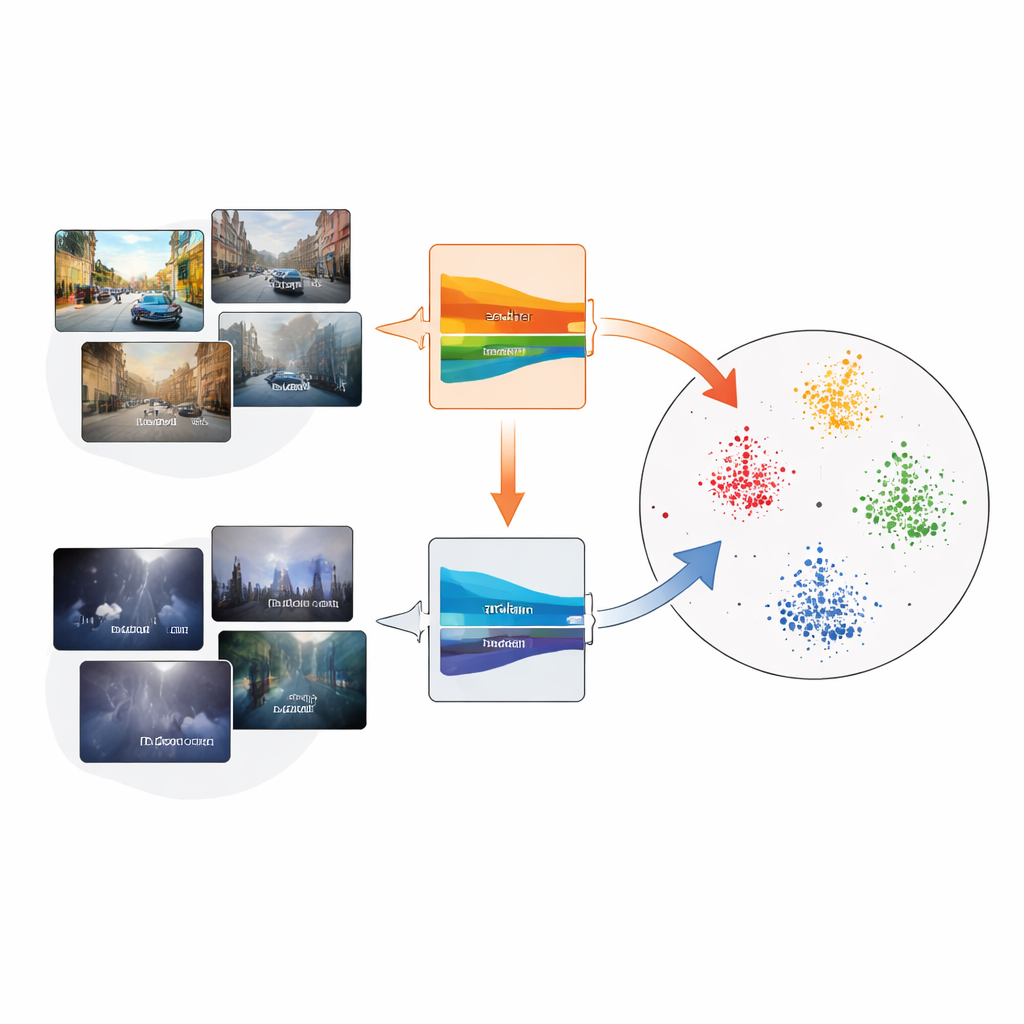

A popular strategy lets the model teach itself. A “teacher” network, built as a smoothed version of a “student” network, predicts boxes on unlabeled target images; these predictions, called pseudo‑labels, then train the student. Over time, the student improves and the teacher is updated as a moving average of the student’s weights. However, if early pseudo‑labels are wrong—missing objects in thick fog, for instance—errors can compound. The authors show that three ideas can be combined to stabilize this self‑training: a mean‑teacher setup, contrastive learning (which pulls related features together and pushes others apart), and compact “prototypes” that summarize each object category.

Prototypes as Landmarks in Feature Space

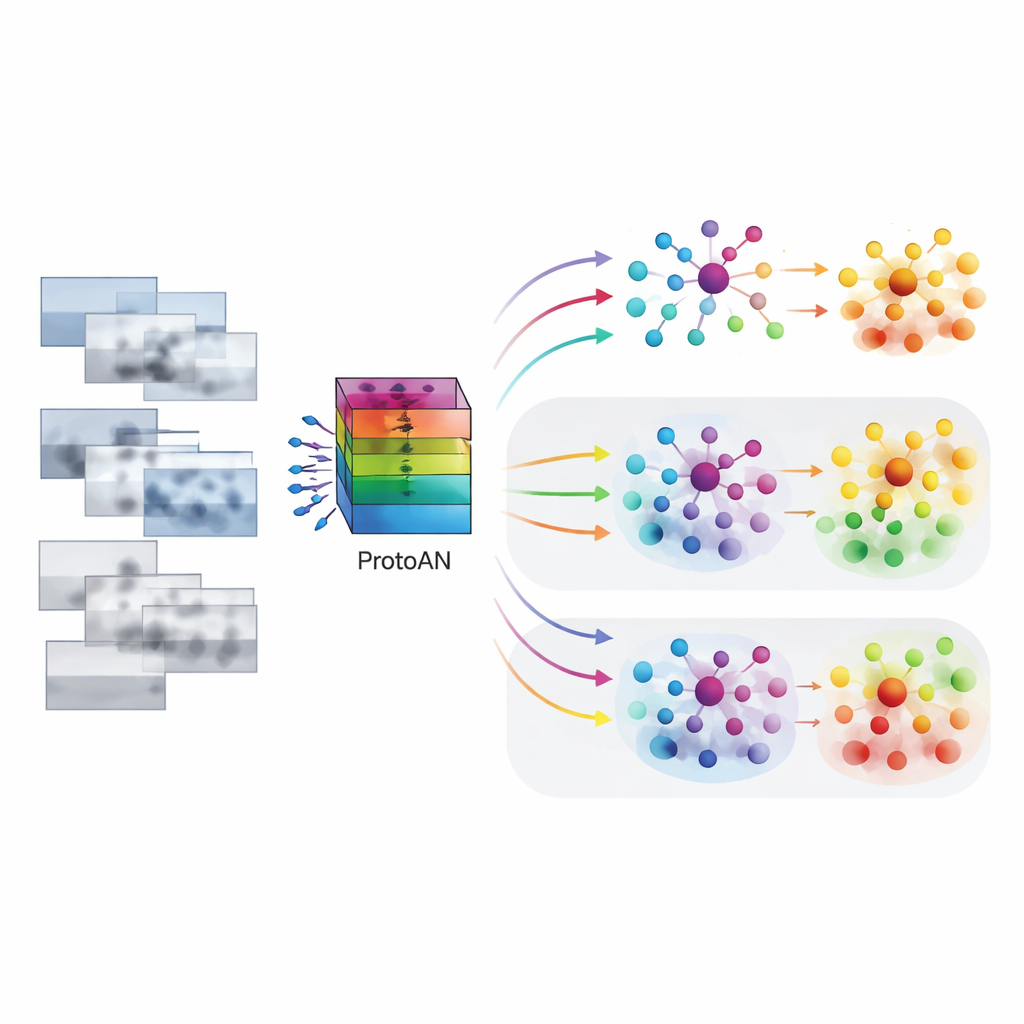

The core of the proposed PoCoMT framework is the Prototype Alignment Network, or ProtoAN. Instead of comparing every object to every other object, ProtoAN learns a small set of representative points—prototypes—for each category, such as car or pedestrian. Features extracted from image regions are mapped into a special space where examples of the same category from different domains gather around their shared prototype, while different categories are pushed apart. A contrastive loss encourages this clustering, both within a single domain and across source and target domains. Crucially, this mechanism treats even the background as its own category, helping the system distinguish true objects from clutter.

Making Better Use of Unlabeled Data

PoCoMT improves the teacher’s pseudo‑labels in two ways. First, an “information maximization” objective nudges predictions on target images to be both confident for each object and diverse across categories, avoiding the trivial behavior of labeling everything as the same class. Second, ProtoAN refines pseudo‑labels by comparing features to prototypes rather than trusting raw predictions. If a region’s predicted class does not match the nearest prototype, the label can be adjusted. This makes the system more tolerant to noise: even when the authors deliberately corrupted many pseudo‑labels during training, PoCoMT degraded more gracefully than competing methods.

Stronger Detectors for Tough Real‑World Scenes

Tested on a broad suite of benchmarks—including clear‑to‑foggy streets, synthetic‑to‑real traffic, day‑to‑dusk driving, and realistic‑to‑artistic imagery—PoCoMT consistently surpassed existing unsupervised domain adaptation techniques, often by several percentage points in detection accuracy. In some cases it even outperformed models trained directly on labeled target data, thanks to its ability to leverage both labeled source images and abundant unlabeled target images. For non‑specialists, the message is straightforward: by letting an object detector organize its own internal “landmarks” for each category and by carefully guiding how the teacher and student exchange information, this approach makes AI vision systems more robust when the world looks different from their training data.

Citation: Cao, Q., Tao, J., Dan, Y. et al. Prototype-oriented contrastive mean-teacher for unsupervised domain adaptive object detection. Sci Rep 16, 10869 (2026). https://doi.org/10.1038/s41598-026-44991-7

Keywords: unsupervised domain adaptation, object detection, self-training, contrastive learning, prototype learning