Clear Sky Science

Deep maximum margin matrix factorization

Why smarter suggestions matter

From movie nights to online shopping, we rely on digital platforms to guess what we might like next. These guesses come from recommendation algorithms that learn from our past choices and ratings. But people’s tastes are messy and rarely follow simple patterns. This paper introduces a new way to model those messy tastes, called Deep Maximum Margin Matrix Factorization (Deep MMMF), which aims to turn scattered, partial rating data into sharper, more reliable recommendations.

How today’s recommenders usually work

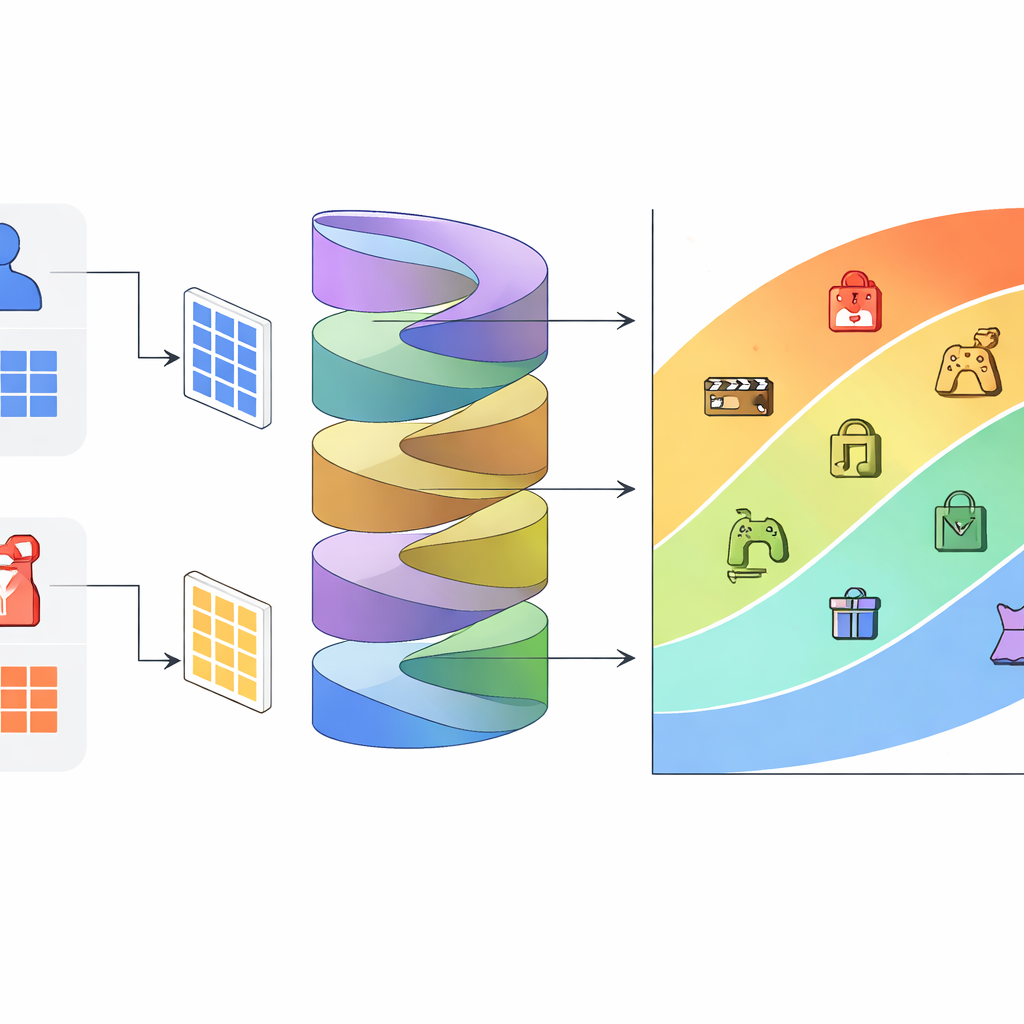

Many modern recommenders are built around a technique called matrix factorization. Imagine a giant table where rows are users, columns are items, and each cell holds a rating if it exists. Matrix factorization compresses this huge, sparse table into two smaller maps: one describes hidden preferences of users, and the other describes hidden traits of items. When the system wants to predict how a user might rate an unseen item, it combines their hidden preference vector with the item’s hidden trait vector and turns that into a score. Classic variations of this idea have powered well-known systems for films, products, and music.
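The prediction step described above can be sketched in a few lines. This is a minimal illustration, not the paper's code: the sizes, the random stand-in values, and the names `U`, `V`, and `predict_score` are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: 4 users, 5 items, 3 hidden dimensions.
n_users, n_items, k = 4, 5, 3

# In a real system these maps are learned from observed ratings;
# random values stand in for them here.
U = rng.normal(size=(n_users, k))  # one hidden-preference vector per user
V = rng.normal(size=(n_items, k))  # one hidden-trait vector per item

def predict_score(U, V, user, item):
    """Combine one user's vector with one item's vector via a dot product."""
    return float(U[user] @ V[item])

# All user-item scores at once: a dense (n_users, n_items) table.
scores = U @ V.T
```

The two small maps hold `(n_users + n_items) * k` numbers, yet together they yield a score for every cell of the full table, including cells that never had a rating.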

From straight lines to curved preferences

One influential method, Maximum Margin Matrix Factorization (MMMF), goes a step further by treating ratings as ordered categories: one star is worse than two, which is worse than three, and so on. MMMF imagines item representations as points in space and users as flat cutting surfaces (hyperplanes) that divide this space into rating zones. Each zone corresponds to a different rating level, and the method pushes these dividing surfaces apart as much as possible to reduce confusion between neighboring ratings. However, because these surfaces are required to be flat and parallel, the model can only express simple, roughly linear taste patterns.
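To make the idea of rating zones concrete, here is a small sketch of how a score and a set of per-user thresholds could map to a star rating, together with an all-threshold hinge loss of the kind commonly used in the MMMF literature. The summary above does not give the exact formula, so the loss form, the function names, and the example thresholds are assumptions.

```python
import numpy as np

def predict_rating(score, thresholds):
    """Rating = 1 + number of thresholds that the score exceeds."""
    return 1 + int(np.sum(score > thresholds))

def all_threshold_hinge(score, thresholds, rating):
    """Penalize every threshold that sits on the wrong side of the score,
    or on the right side but closer than a margin of 1."""
    levels = np.arange(1, len(thresholds) + 1)
    # Thresholds at or above the true rating should lie above the score (+1);
    # thresholds below the true rating should lie below it (-1).
    signs = np.where(levels >= rating, 1.0, -1.0)
    return float(np.sum(np.maximum(0.0, 1.0 - signs * (thresholds - score))))

# Hypothetical thresholds for one user on a 5-star scale (4 boundaries).
thresholds = np.array([-2.0, -1.0, 1.0, 2.0])
score = 0.0

predicted = predict_rating(score, thresholds)
loss_if_true_is_3 = all_threshold_hinge(score, thresholds, rating=3)
loss_if_true_is_5 = all_threshold_hinge(score, thresholds, rating=5)
```

With these numbers the score falls in the third zone, so the loss is zero when the true rating is 3 and grows when the true rating is 5, because two thresholds then sit on the wrong side of the score.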

A deeper view of tastes and items

Real preferences are rarely that simple. Consider three viewers: one who likes balanced, moderate-action films, another who wants high action but low intensity, and a third who enjoys any high-action movie. Such behaviors carve out curved and irregular boundaries in the space of movies, not neat parallel slices. Deep MMMF tackles this by keeping the familiar MMMF backbone but placing deep neural networks on top of its user, item, and rating-related representations. First, the model builds standard compact embeddings for users, items, and rating thresholds, just like MMMF. Then, separate neural modules transform each of these embeddings through layers of nonlinear functions, bending the rating boundaries into richer shapes without increasing the number of hidden dimensions.
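The two-stage idea (compact embeddings first, nonlinear modules second) might look roughly like the forward pass below. The summary does not specify the architecture, so the layer sizes, the `tanh` activation, the random weights, and every name here are assumptions for illustration only. The one property the sketch preserves from the text is that each module returns a vector of the same size it received.

```python
import numpy as np

rng = np.random.default_rng(0)
k, n_levels = 3, 5  # hypothetical: 3 hidden dimensions, 5-star scale

def make_mlp(dim, hidden, rng):
    """Weights for a two-layer network whose output size equals its input size."""
    return {
        "W1": rng.normal(scale=0.5, size=(dim, hidden)), "b1": np.zeros(hidden),
        "W2": rng.normal(scale=0.5, size=(hidden, dim)), "b2": np.zeros(dim),
    }

def mlp(params, x):
    """Nonlinear transform: linear layer, tanh, linear layer."""
    h = np.tanh(x @ params["W1"] + params["b1"])
    return h @ params["W2"] + params["b2"]

# Separate modules for users, items, and rating thresholds.
user_net = make_mlp(k, 8, rng)
item_net = make_mlp(k, 8, rng)
thr_net = make_mlp(n_levels - 1, 8, rng)

def deep_forward(u, v, theta):
    """Transform each embedding, then score and count thresholds exceeded."""
    u2, v2, t2 = mlp(user_net, u), mlp(item_net, v), mlp(thr_net, theta)
    score = float(u2 @ v2)
    rating = 1 + int(np.sum(score > t2))
    return score, t2, rating

# Stand-ins for learned MMMF-style embeddings of one user and one item.
u = rng.normal(size=k)
v = rng.normal(size=k)
theta = np.array([-2.0, -1.0, 1.0, 2.0])

score, transformed_thresholds, rating = deep_forward(u, v, theta)
```

Because the item vector passes through a nonlinear function before the dot product, the set of raw item embeddings that land in a given rating zone no longer has to be bounded by flat, parallel surfaces. This sketch does not train anything and does not force the transformed thresholds to stay in sorted order.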

What the experiments reveal

The authors test Deep MMMF on three popular datasets: MovieLens 1M, EachMovie, and an Amazon Prime Pantry product set. They examine two situations. In weak generalization, the system predicts ratings for user–item pairs that are held out but where both users and items were seen during training. In strong generalization, the system faces entirely new users whose past ratings were not used to fit the main model, so it must infer their tastes from only the few ratings they supply. The authors report that, across both situations and all three datasets, Deep MMMF produces lower prediction errors than a broad collection of leading methods, including classic MMMF, probabilistic factorization models, and several deep-learning recommenders. Statistical tests indicate that these improvements are unlikely to be due to chance.
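The difference between the two evaluation settings comes down to what is held out. The sketch below shows one plausible way to build each split from a list of (user, item, rating) rows; the paper's exact protocol is not given here, so the function names, the one-rating-per-user choice, and the toy data are assumptions. The weak split guarantees that every test user also appears in training, but it does not check the same for items.

```python
import numpy as np

def weak_split(ratings, rng):
    """Hold out one random rating per user; the user's other ratings stay in training."""
    test_idx = []
    for user in np.unique(ratings[:, 0]):
        rows = np.flatnonzero(ratings[:, 0] == user)
        if len(rows) >= 2:  # keep at least one rating for training
            test_idx.append(rng.choice(rows))
    mask = np.zeros(len(ratings), dtype=bool)
    mask[np.array(test_idx, dtype=int)] = True
    return ratings[~mask], ratings[mask]

def strong_split(ratings, rng, test_user_frac=0.25):
    """Hold out every rating of a random subset of users."""
    users = np.unique(ratings[:, 0])
    n_test = max(1, int(len(users) * test_user_frac))
    test_users = rng.choice(users, size=n_test, replace=False)
    mask = np.isin(ratings[:, 0], test_users)
    return ratings[~mask], ratings[mask]

# Toy data: 4 users, 3 items each, columns are (user, item, rating).
ratings = np.array([[u, i, 1 + (u + i) % 5] for u in range(4) for i in range(3)])

rng = np.random.default_rng(0)
weak_train, weak_test = weak_split(ratings, rng)
strong_train, strong_test = strong_split(ratings, rng)
```

In the strong setting, a held-out user's ratings would typically be divided again at test time: some are shown to the system so it can estimate that user's tastes, and the rest are used to score the predictions.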

Why this approach is promising

By keeping the original size of the hidden representations while adding flexible, nonlinear transformations, Deep MMMF cleanly separates “more dimensions” from “more expressive functions.” This makes it easier to attribute the gains to better modeling of complex taste patterns rather than to a wider set of hidden dimensions. The results suggest that recommendation systems can better respect the natural ordering of ratings and the tangled nature of human preferences without sacrificing interpretability or efficiency. In practical terms, future recommenders built on ideas like Deep MMMF could deliver more accurate, personalized suggestions in movies, shopping, and beyond, especially when user tastes do not follow simple straight lines.

Citation: Kumar, S., Kagita, V.R., Kumar, V. et al. Deep maximum margin matrix factorization. Sci Rep 16, 14518 (2026). https://doi.org/10.1038/s41598-026-44839-0

Keywords: recommender systems, collaborative filtering, deep learning, matrix factorization, user ratings