Clear Sky Science

ROI-guided relational YOLO–SegNet transformer for lightweight bone tumor segmentation and classification from X-ray images

Sharper X-rays for Earlier Answers

Bone tumors are uncommon but serious, and in many clinics the first and sometimes only imaging test is a simple X-ray. Unfortunately, tumors can be faint, irregular and easily missed, especially in busy or low-resource hospitals. This paper presents a streamlined artificial intelligence (AI) system that cleans up noisy X-rays, zooms in on suspicious regions and outlines possible tumors with high precision, aiming to support radiologists rather than replace them.

Why Bone Tumors Are Hard to Spot

On an X-ray, a bone tumor does not always stand out as a neat, round spot. Its edges can blur into normal bone, its brightness may resemble healthy tissue, and other structures can overlap it in confusing ways. Standard X-rays also offer less contrast than CT or MRI scans, which makes these problems worse. Many existing AI tools either decide only “tumor” versus “no tumor” for an entire image or demand computing power that smaller hospitals may not have. Precise outlining of the tumor, which is crucial for planning surgery and tracking disease over time, has remained difficult to automate efficiently.

A Three-Step AI Helper for X-rays

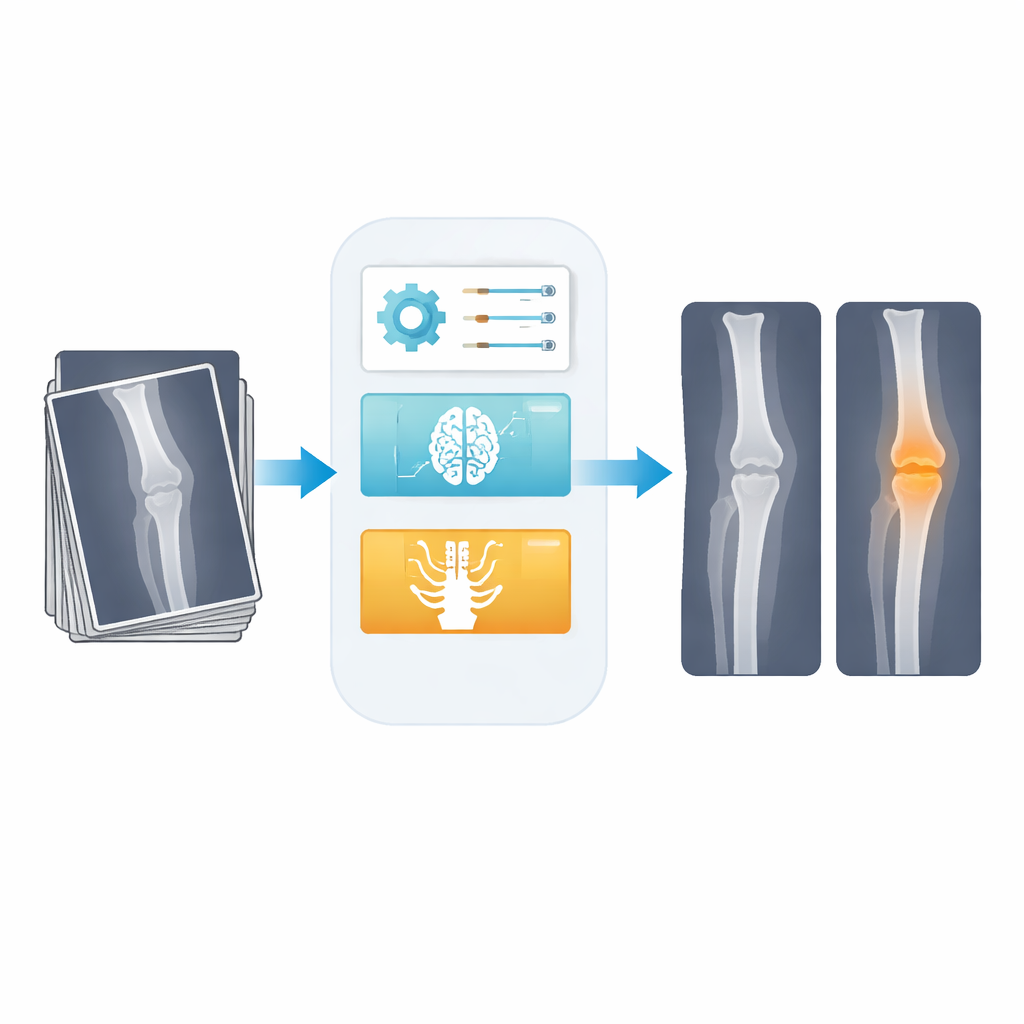

The authors design a three-stage pipeline tailored to this challenge. First, the raw X-ray is denoised. Instead of a fixed blur, which can wash out detail, they use an adaptive digital filter whose settings are chosen automatically by an optimization routine. This raises the signal-to-noise ratio from about 21 to nearly 30 decibels: the image is noticeably less noisy, while the edges of bones and tumors stay sharp. Second, a compact detection network, derived from the popular YOLO family of models, draws a rough bounding box around any region likely to contain a tumor. Third, only this narrowed region is passed to a lightweight segmentation network that traces the tumor’s boundaries pixel by pixel, and the final “normal” versus “tumor” decision is made using information from the segmented region.
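The sketch below shows what the decibel figures mean in practice. It assumes the standard power-ratio definition, SNR(dB) = 10·log10(P_signal / P_noise). The paper may use a different variant, such as peak SNR. The helper names `snr_db`, `power_ratio_from_db` and `amplitude_ratio_from_db` are hypothetical. If “nearly 30” is treated as 30 dB and the signal level stays fixed, the 9 dB gain corresponds to roughly an eightfold drop in noise power. That is about a 2.8-fold drop in noise amplitude.

```python
import math


def snr_db(signal_power: float, noise_power: float) -> float:
    """Signal-to-noise ratio in decibels, using the power-ratio definition."""
    return 10 * math.log10(signal_power / noise_power)


def power_ratio_from_db(delta_db: float) -> float:
    """Convert a change in decibels back into a plain power ratio."""
    return 10 ** (delta_db / 10)


def amplitude_ratio_from_db(delta_db: float) -> float:
    """Express the same change as an amplitude (standard-deviation) ratio."""
    return 10 ** (delta_db / 20)


# Approximate figures from the summary; 30 dB rounds "nearly 30" up.
before_db, after_db = 21.0, 30.0
gain_db = after_db - before_db
print(f"gain: {gain_db:.0f} dB")
print(f"noise power lower by about {power_ratio_from_db(gain_db):.1f}x")
print(f"noise amplitude lower by about {amplitude_ratio_from_db(gain_db):.1f}x")
```

Because decibels are logarithmic, a gain of “only” 9 dB is a large improvement in image quality.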

Focusing the AI’s Attention Where It Matters

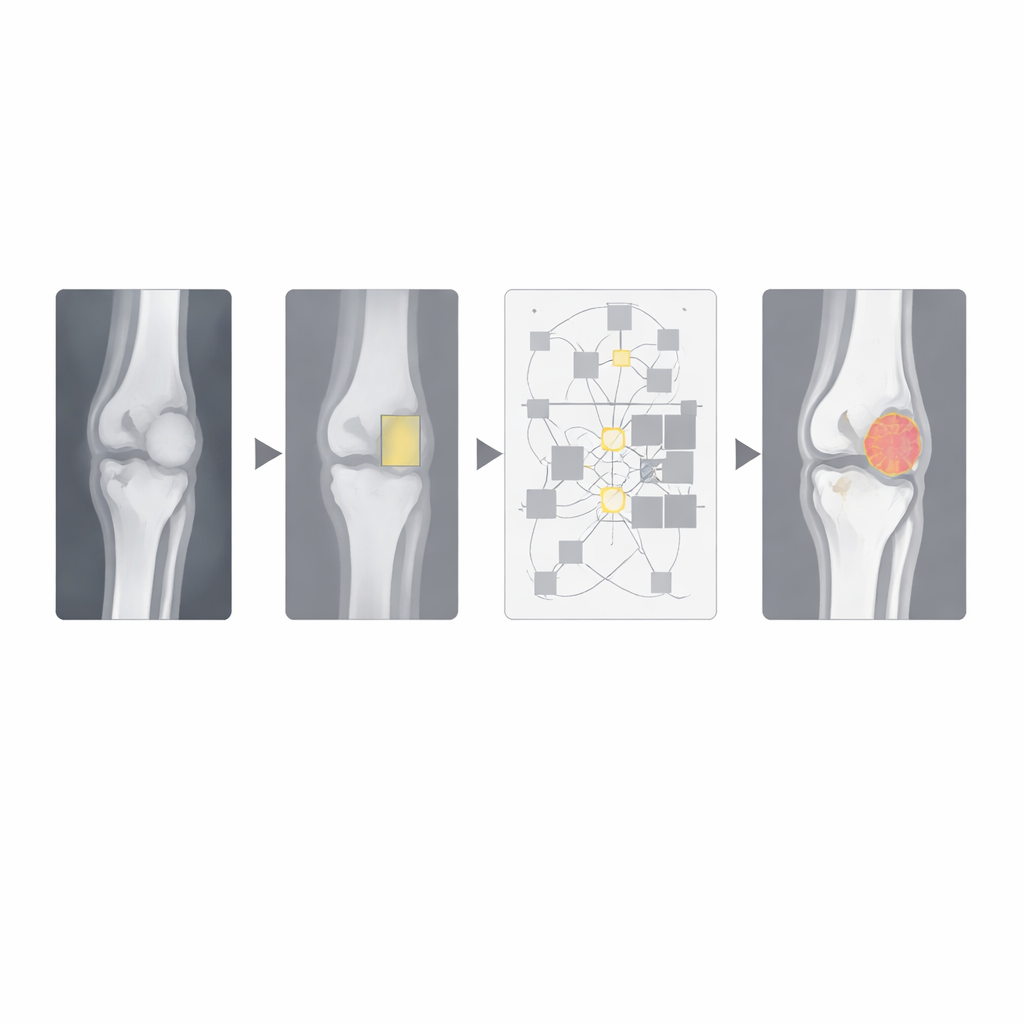

A key innovation is how the system uses “relational” attention. Many modern vision models rely on transformer blocks that compare every part of an image with every other part. This is powerful, but the cost grows with the square of the number of image patches. On large medical images it becomes expensive and can slow down real-time use. Here, the transformer is applied only inside the tumor box found by the detector, plus a margin of nearby bone. Within this smaller area, the model learns how different spots relate to one another, which helps it tell fuzzy tumor tissue from normal bone. Because it ignores the rest of the image, the approach cuts computation dramatically while still capturing long-range context inside the clinically relevant region.
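A back-of-the-envelope sketch shows why restricting attention to the detected region is cheaper. Full self-attention computes a score for every pair of image patches, so its cost scales with the square of the patch count. The values below are hypothetical, chosen only for illustration and not taken from the paper: a 512×512 image, 16-pixel patches, a 96×96 detector box and a 16-pixel margin. The helper functions are also illustrative.

```python
import math


def expand_box(box, margin, height, width):
    """Grow a detector box (x0, y0, x1, y1) by `margin` pixels on each side,
    clipped to the image, so the region also covers nearby bone."""
    x0, y0, x1, y1 = box
    return (max(0, x0 - margin), max(0, y0 - margin),
            min(width, x1 + margin), min(height, y1 + margin))


def num_tokens(h, w, patch):
    """Number of patch tokens needed to tile an h x w region (edges rounded up)."""
    return math.ceil(h / patch) * math.ceil(w / patch)


def attention_scores(n_tokens):
    """Full self-attention computes one score per (query, key) pair."""
    return n_tokens * n_tokens


H = W = 512                 # hypothetical image size in pixels
PATCH = 16                  # hypothetical patch size in pixels
box = (200, 180, 296, 276)  # hypothetical 96 x 96 detector box

x0, y0, x1, y1 = expand_box(box, margin=16, height=H, width=W)
full = attention_scores(num_tokens(H, W, PATCH))
roi = attention_scores(num_tokens(y1 - y0, x1 - x0, PATCH))
print(f"ROI: {x1 - x0} x {y1 - y0} px")
print(f"full image: {full:,} scores, ROI only: {roi:,} scores, {full // roi}x fewer")
```

The saving depends entirely on how large the detected box is relative to the whole image. A box covering most of the bone would save far less.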

Letting Nature-Inspired Search Tune the Knobs

The pipeline also uses two nature-inspired search methods to choose important settings automatically. One algorithm adjusts how strongly the filter smooths the X-ray, balancing noise removal against edge clarity. A second, hybrid “fire hawk election” optimizer searches for good training settings such as learning rate, batch size and dropout. These metaheuristics are general-purpose search strategies that explore many candidate settings. They reduce manual trial-and-error and help the model converge quickly without overfitting the relatively small dataset of 809 expert-labeled X-rays, which includes both healthy and tumor cases.
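A toy problem can illustrate how a search routine picks the smoothing strength. This sketch is not the paper's optimizer. It swaps in three simpler stand-ins:

- plain random search, in place of the nature-inspired methods the authors use;
- a 1-D moving-average filter, in place of their adaptive 2-D filter;
- a synthetic step “edge” with Gaussian noise, in place of a real X-ray.

All names and values are hypothetical. The objective captures the trade-off described above: wider windows remove more noise but blur the edge. Unlike a real pipeline, it scores candidates against the known clean signal, which is only possible because the data are synthetic.

```python
import random


def moving_average(signal, k):
    """Average each sample with up to k neighbours on each side (window 2k+1),
    truncating the window at the ends of the signal."""
    n = len(signal)
    out = []
    for i in range(n):
        lo, hi = max(0, i - k), min(n, i + k + 1)
        window = signal[lo:hi]
        out.append(sum(window) / len(window))
    return out


def mse(a, b):
    """Mean squared error between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def random_search(objective, low, high, trials, seed=0):
    """Evaluate `trials` random integer settings in [low, high]; keep the best."""
    rng = random.Random(seed)
    best_k, best_score = None, float("inf")
    for _ in range(trials):
        k = rng.randint(low, high)
        score = objective(k)
        if score < best_score:
            best_k, best_score = k, score
    return best_k, best_score


# Synthetic "bone edge": a step from 0 to 1, plus Gaussian noise (sigma = 0.2).
rng = random.Random(42)
clean = [0.0] * 100 + [1.0] * 100
noisy = [c + rng.gauss(0.0, 0.2) for c in clean]


def objective(k):
    """Error of the smoothed signal against the clean one: too little
    smoothing leaves noise, too much smears the edge."""
    return mse(moving_average(noisy, k), clean)


best_k, best_score = random_search(objective, low=0, high=15, trials=20)
print(f"no smoothing: {objective(0):.4f}, best k={best_k}: {best_score:.4f}, "
      f"k=15: {objective(15):.4f}")
```

The chosen window lands between the two extremes, which is the balance a real tuner tries to strike automatically.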

Fast, Accurate, but Not Yet a Clinic-Ready Tool

Tested with five-fold cross-validation, the system correctly distinguishes normal from tumor-bearing X-rays about 98.5% of the time. It achieves a Dice score of 0.97 (97%), indicating close agreement between the AI’s tumor outlines and expert drawings. It uses roughly a third of the parameters of common segmentation networks such as UNet. It runs in about 48 milliseconds per image (roughly 20 images per second) on a modern graphics card, supporting near real-time use. However, the authors stress that their results come from a single public dataset and do not yet reflect the full variety of machines, hospitals and patients in real-world practice. They position their framework as a promising research-support tool that could eventually help radiologists spot and measure bone tumors more consistently, once it is validated across multiple centers and clinical conditions.
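The headline metrics are easy to reproduce on toy data. This minimal sketch assumes binary masks stored as flat 0/1 lists. The Dice score is 2|A∩B| / (|A| + |B|), where A is the predicted tumor mask and B is the expert mask. Five-fold cross-validation splits the 809 images into five disjoint test folds, so every image is tested exactly once. The function names, the “both masks empty gives 1.0” convention and the unshuffled, contiguous fold assignment are illustrative assumptions. In practice, folds are usually shuffled, and often stratified by class, before splitting.

```python
def dice(pred, truth):
    """Dice similarity between two binary masks given as flat 0/1 sequences.
    Two empty masks are treated as perfect agreement (a common convention)."""
    intersection = sum(1 for p, t in zip(pred, truth) if p and t)
    total = sum(pred) + sum(truth)
    return 1.0 if total == 0 else 2 * intersection / total


def k_fold_indices(n_items, k):
    """Split indices 0..n_items-1 into k contiguous, near-equal test folds."""
    base, extra = divmod(n_items, k)
    folds, start = [], 0
    for i in range(k):
        size = base + (1 if i < extra else 0)
        folds.append(list(range(start, start + size)))
        start += size
    return folds


pred = [1, 1, 1, 0, 0]
truth = [1, 1, 0, 0, 1]
print(f"Dice on toy masks: {dice(pred, truth):.3f}")

folds = k_fold_indices(809, 5)
print("fold sizes:", [len(f) for f in folds])

# 48 ms per image corresponds to roughly 1000 / 48 images per second.
print(f"throughput: {1000 / 48:.1f} images/s")
```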

Citation: Natarajan, C., Rajendran, S., Vinmathi, M.S. et al. ROI-guided relational YOLO–SegNet transformer for lightweight bone tumor segmentation and classification from X-ray images. Sci Rep 16, 14603 (2026). https://doi.org/10.1038/s41598-026-44297-8

Keywords: bone tumors, medical X-rays, deep learning, tumor segmentation, computer-aided diagnosis