Clear Sky Science

Adaptive multi-objective optimization of microgrid energy management using deep reinforcement learning considering battery degradation and renewable uncertainty

Smarter Local Power for Everyday Life

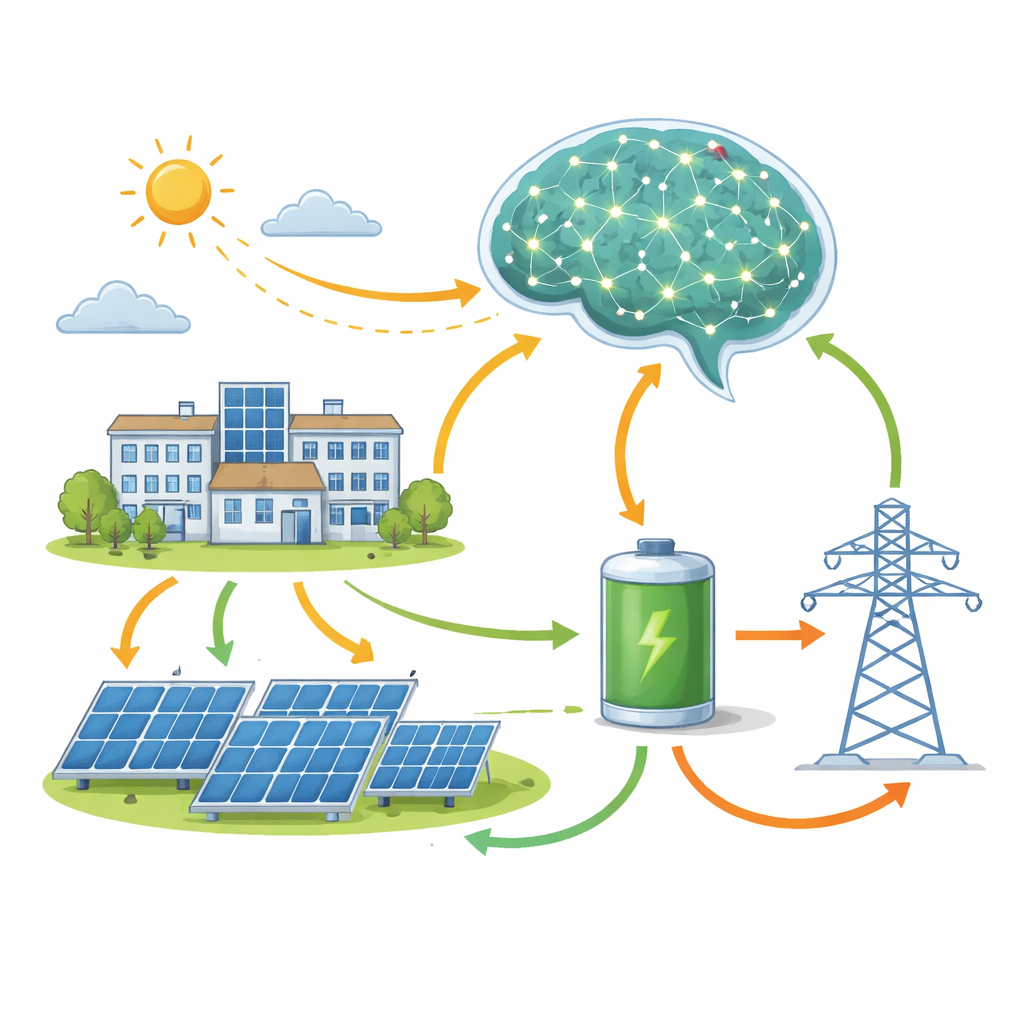

As more homes, campuses, and businesses install solar panels and batteries, managing when to buy, store, or use electricity becomes a high‑stakes juggling act. Done well, it cuts bills and pollution; done badly, it wastes clean power and wears out costly batteries. This study explores how a form of artificial intelligence called deep reinforcement learning can act as a "digital operator" for a solar‑and‑battery microgrid, learning day by day how to keep costs low, use more renewable energy, and protect the battery from premature aging—even when the weather forecast is wrong.

Why Small Power Networks Matter

Microgrids are compact power systems that tie together solar panels, battery storage, controllable appliances, and a connection to the larger grid. They can power a campus or neighborhood, and they can keep critical loads running when the main grid is stressed. But they are hard to operate: sunlight and demand change by the minute, electricity prices vary through the day, and every charge–discharge cycle slowly degrades the battery. Traditional control schemes rely on mathematical optimization and accurate forecasts, so they often perform poorly when conditions deviate from expectations or when the system becomes too complex to model precisely.

A Learning Controller That Trains on Experience

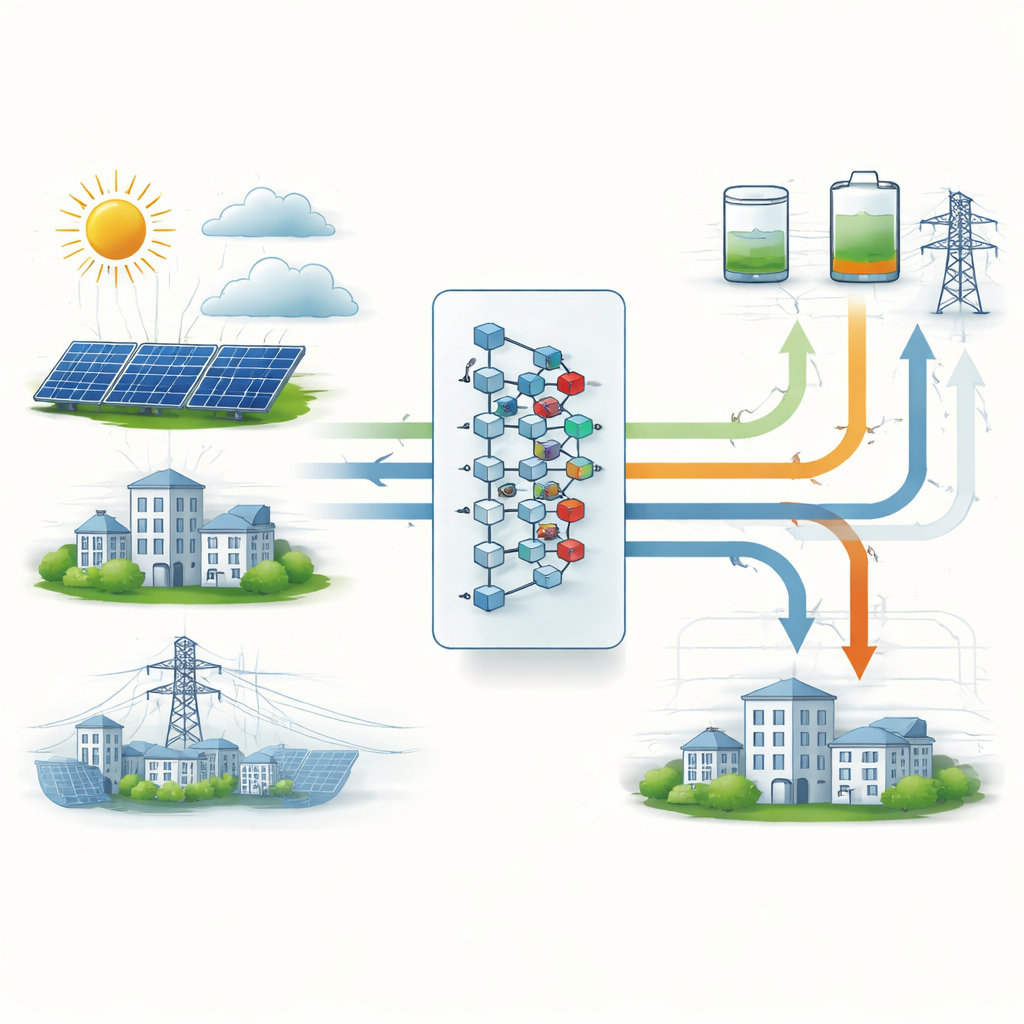

The researchers built a detailed digital twin of a campus‑scale microgrid in western Saudi Arabia, including 300 kW of solar capacity, a large lithium‑ion battery, flexible and inflexible loads, and a two‑way link to the main grid. They then trained a deep reinforcement learning agent, specifically an advanced Deep Q‑Network, to operate this virtual system in 15‑minute steps over many simulated years. At each step the agent observed the battery's state of charge, short‑term forecasts for solar output and demand, electricity prices, and temperature, and chose actions such as how fast to charge or discharge the battery and whether to run shiftable loads. It then received a reward that blended three goals: paying less for electricity, slowing battery wear, and relying less on fossil‑fueled grid power.
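A blended reward of this kind is often written as a weighted sum of penalties. The Python sketch below shows one such form for a single 15‑minute step. It is an illustration under stated assumptions, not the authors' reward: the `StepOutcome` fields, the weights `w_cost`, `w_deg`, and `w_grid`, the flat `deg_cost_per_kwh` wear charge, and all tariffs are hypothetical.

```python
from dataclasses import dataclass

STEP_HOURS = 0.25  # one 15-minute control step, as in the study


@dataclass
class StepOutcome:
    """What happened during one control step (all fields hypothetical)."""
    grid_import_kw: float          # average power bought from the grid
    grid_export_kw: float          # average power sold back to the grid
    price_per_kwh: float           # import tariff for this step (hypothetical)
    feed_in_per_kwh: float         # export tariff for this step (hypothetical)
    battery_throughput_kwh: float  # energy moved into or out of the battery


def step_reward(o: StepOutcome,
                w_cost: float = 1.0,
                w_deg: float = 1.0,
                w_grid: float = 0.5,
                deg_cost_per_kwh: float = 0.05) -> float:
    """Hypothetical weighted reward: each goal enters as a penalty, so higher is better."""
    # Goal 1: net electricity bill for this step.
    energy_cost = (o.grid_import_kw * o.price_per_kwh
                   - o.grid_export_kw * o.feed_in_per_kwh) * STEP_HOURS
    # Goal 2: battery wear, crudely priced per kWh of throughput
    # (a stand-in for a real aging model).
    degradation_cost = deg_cost_per_kwh * o.battery_throughput_kwh
    # Goal 3: reliance on grid energy, used here as a proxy for fossil-fueled generation.
    grid_energy_kwh = o.grid_import_kw * STEP_HOURS
    return -(w_cost * energy_cost + w_deg * degradation_cost + w_grid * grid_energy_kwh)


# Usage: importing twice as much power at the same price earns a lower reward.
quiet = StepOutcome(grid_import_kw=40, grid_export_kw=0, price_per_kwh=0.10,
                    feed_in_per_kwh=0.04, battery_throughput_kwh=5)
heavy = StepOutcome(grid_import_kw=80, grid_export_kw=0, price_per_kwh=0.10,
                    feed_in_per_kwh=0.04, battery_throughput_kwh=5)
print(step_reward(quiet), step_reward(heavy))
```

Because the three terms have different units (currency and kWh), the weights also act as conversion factors onto one scale. Raising `w_deg` relative to `w_cost` would push a learned policy toward gentler battery use at some cost in electricity bills.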

Balancing Cost, Battery Health, and Clean Energy

To reflect real‑world trade‑offs, the team wove a physics‑based battery aging model directly into the learning signal. The reward function penalized both short‑term costs and long‑term capacity loss, and it harshly penalized violations of safety limits such as over‑charging or over‑discharging the battery. Over about 10,000 simulated days of practice, the agent discovered strategies that practitioners often recommend, even though it was never explicitly taught them. It learned to favor many moderate‑depth charge cycles over a few deep ones, to keep the battery in a mid‑range state of charge where aging is slower, and to leave enough headroom in the battery before sunny hours so that upcoming solar energy could be stored instead of wasted.
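Why many moderate cycles can beat a few deep ones is easiest to see with a simple depth‑of‑discharge stress model. The sketch below uses a common empirical power‑law cycle‑life curve, N(d) = N_100 × d^(−k), where d is cycle depth, together with linear (Miner's‑rule style) damage accumulation. It is a stand‑in chosen for illustration, not the physics‑based aging model used in the study, and the values N_100 = 3000 cycles and k = 1.5 are assumptions.

```python
# Hypothetical power-law cycle-life model (not the study's physics-based model):
# a cycle of depth d (fraction of capacity) is assumed survivable N_100 * d**(-k) times.
N_100 = 3000.0  # assumed cycle life at 100% depth of discharge
K = 1.5         # assumed stress exponent; k > 1 makes deep cycles disproportionately harmful


def cycle_damage(depth: float, n_100: float = N_100, k: float = K) -> float:
    """Fraction of total battery life consumed by one cycle of the given depth."""
    if not 0.0 < depth <= 1.0:
        raise ValueError("depth must be in (0, 1]")
    cycle_life = n_100 * depth ** (-k)
    return 1.0 / cycle_life


def schedule_damage(depths: list[float]) -> float:
    """Sum the damage of independent cycles (linear, Miner's-rule style accumulation)."""
    return sum(cycle_damage(d) for d in depths)


# Same energy throughput (0.8 of capacity) delivered two ways:
deep = schedule_damage([0.8])           # one deep cycle
moderate = schedule_damage([0.4, 0.4])  # two moderate cycles
print(f"moderate/deep damage ratio: {moderate / deep:.3f}")
# prints: moderate/deep damage ratio: 0.707
```

Under these assumed numbers, two half‑depth cycles moving the same energy consume about 71 percent of the life used by one deep cycle. With k = 1 the two schedules would cost the same, so the benefit of shallow cycling in this sketch depends entirely on the exponent being above one. Fuller aging models typically also account for average state of charge, temperature, and charge rate, which this sketch ignores.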

Beating a Conventional Smart Controller

The new learning‑based controller was tested head‑to‑head against a strong benchmark, model predictive control (MPC), which is widely used in industry and assumes access to good forecasts. Over a full year of weather and demand data, the learned policy cut overall operating costs by about 12 percent relative to MPC, reduced battery capacity loss by just over 8 percent, and increased the use of locally generated solar power by roughly 10 percent. It also trimmed peak power drawn from the grid and cut the carbon emissions associated with grid electricity by almost 14 percent. Perhaps most strikingly, once trained, the AI controller made each decision in a few milliseconds, far faster than the seconds‑long optimization the conventional method requires, which makes it suitable for inexpensive on‑site hardware.

Staying Robust When the Weather Is Wrong

Real microgrids must cope with cloudy surprises and imperfect forecasts. The researchers stressed both controllers with increasingly inaccurate solar predictions. As forecast errors grew to severe levels, the traditional controller’s costs rose by more than one fifth, while the learning‑based controller’s costs rose by less than one tenth. The AI agent also handled rapid swings in sunlight more gracefully, using the battery to smooth power flows without resorting to damaging deep discharges. It scheduled flexible loads to coincide with solar production and low‑price hours, further easing strain on the grid.
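A forecast‑error stress test of this kind can be set up by corrupting the forecast a controller sees while the simulator keeps using the true solar output. The sketch below assumes multiplicative Gaussian error with standard deviation `sigma` and an idealized half‑sine clear‑sky profile peaking at the site's 300 kW; the profile shape, the error model, and the `sigma` levels are assumptions, not the study's protocol.

```python
import math
import random


def solar_profile(steps_per_day: int = 96, peak_kw: float = 300.0) -> list[float]:
    """Idealized clear-sky PV output: a half-sine from 06:00 to 18:00 (hypothetical shape)."""
    profile = []
    for i in range(steps_per_day):
        hour = i * 24 / steps_per_day
        if 6.0 <= hour <= 18.0:
            profile.append(peak_kw * math.sin(math.pi * (hour - 6.0) / 12.0))
        else:
            profile.append(0.0)
    return profile


def perturb_forecast(actual: list[float], sigma: float, z: list[float],
                     capacity_kw: float = 300.0) -> list[float]:
    """Forecast = actual * (1 + sigma * z), clipped to the physical range [0, capacity]."""
    return [min(max(a * (1.0 + sigma * e), 0.0), capacity_kw) for a, e in zip(actual, z)]


def mean_abs_error(forecast: list[float], actual: list[float]) -> float:
    return sum(abs(f - a) for f, a in zip(forecast, actual)) / len(actual)


rng = random.Random(42)
actual = solar_profile()                     # 96 quarter-hour steps in one day
z = [rng.gauss(0.0, 1.0) for _ in actual]    # one fixed noise draw, rescaled per level
for sigma in (0.0, 0.1, 0.2, 0.4):
    forecast = perturb_forecast(actual, sigma, z)
    print(f"sigma={sigma:.1f}  MAE={mean_abs_error(forecast, actual):.1f} kW")
```

Reusing one noise draw and only rescaling it means a higher `sigma` never moves any forecast point closer to the truth, so the error levels are directly comparable. In a full experiment, each controller would plan against `forecast` while the simulated microgrid evolves with `actual`, and the resulting cost increase would be recorded for each level.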

What This Means for Future Power Systems

In everyday terms, the study shows that a trained AI can run a solar‑and‑battery system like a savvy operator who cares about today’s bill, tomorrow’s battery health, and the climate impact of each kilowatt‑hour. By learning from experience in simulation rather than relying on perfect forecasts and simplified formulas, the controller delivered cheaper, cleaner power while extending the life of expensive batteries. Although the work was done in simulation for a campus microgrid in Saudi Arabia, the same approach could be adapted to other sites and expanded to networks of microgrids, offering a promising path toward more resilient, efficient, and sustainable local energy systems.

Citation: Altimania, M.R.M., Basem, A., Saydullaev, B. et al. Adaptive multi-objective optimization of microgrid energy management using deep reinforcement learning considering battery degradation and renewable uncertainty. Sci Rep 16, 14296 (2026). https://doi.org/10.1038/s41598-026-44179-z

Keywords: microgrids, deep reinforcement learning, battery health, renewable energy, energy management