Clear Sky Science

Enhancing IELTS writing automated scoring with M-LoRA fine-tuned LLAMA-3 and human feedback-driven PPO reinforcement learning

Why Smarter Essay Help Matters

For millions of people each year, the IELTS exam can open doors to study, work, or immigration abroad. Yet many test takers struggle most with the writing section, where getting clear, reliable feedback is hard and paying human tutors can be expensive. This paper explores a new way to use artificial intelligence not just to score IELTS essays, but also to give detailed, human-like suggestions that help writers actually improve, while remaining closely aligned with how real examiners think.

The Challenge of Judging Writing

Assessing the quality of an essay is more complicated than checking spelling or counting words. Human examiners look at how well the writer answers the question, how clearly ideas are organized, how rich and accurate the vocabulary is, and how correct and varied the grammar is. Existing automated scoring systems often work well only on narrow, fixed question sets and can “forget” how to judge earlier types of essays when exposed to new ones. Large language models such as GPT-4 have shown promise, but when used off the shelf, without task-specific training, they still struggle to match human scores and tend to give generic, one-size-fits-all feedback.

Building a Rich IELTS Writing Dataset

To push beyond these limits, the authors first created a new private dataset of 5,088 real IELTS Writing Task 2 essays written by Chinese learners. Each essay came with scores from experienced IELTS teachers on the four official criteria: Task Response, Coherence and Cohesion, Lexical Resource, and Grammatical Range and Accuracy. Importantly, teachers also provided fine-grained feedback pointing out problems such as unclear ideas, awkward links between sentences, or weak vocabulary, plus suggested rewrites. This rich annotation goes far beyond typical public datasets and serves as the foundation for training and testing the new system.
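To make the shape of each annotated record concrete, here is a minimal sketch of one possible representation. The class and field names are hypothetical, not the authors' schema. The only rules it enforces are the ones the description implies: a teacher score for each of the four official criteria on the 0 to 9 band scale, and feedback items that each belong to one criterion and may carry a suggested rewrite.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# The four official IELTS Writing criteria named in the text.
CRITERIA = (
    "Task Response",
    "Coherence and Cohesion",
    "Lexical Resource",
    "Grammatical Range and Accuracy",
)


@dataclass
class FeedbackItem:
    """One fine-grained teacher comment (hypothetical structure)."""
    criterion: str               # which criterion the comment concerns
    comment: str                 # e.g. "awkward link between sentences 2 and 3"
    suggested_rewrite: str = ""  # optional teacher-proposed rewrite


@dataclass
class AnnotatedEssay:
    """One Task 2 essay with teacher scores and feedback (hypothetical structure)."""
    essay_text: str
    scores: Dict[str, float]  # criterion name -> teacher band score
    feedback: List[FeedbackItem] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Every record must score all four criteria, each on the 0-9 band scale.
        missing = [c for c in CRITERIA if c not in self.scores]
        if missing:
            raise ValueError(f"missing scores for: {missing}")
        for name, score in self.scores.items():
            if name not in CRITERIA or not 0.0 <= score <= 9.0:
                raise ValueError(f"invalid score {score!r} for {name!r}")
        for item in self.feedback:
            if item.criterion not in CRITERIA:
                raise ValueError(f"unknown criterion {item.criterion!r}")
```

Keeping feedback as a list of per-criterion items, rather than one free-text blob, mirrors the description of comments that point at specific problems and propose specific rewrites.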

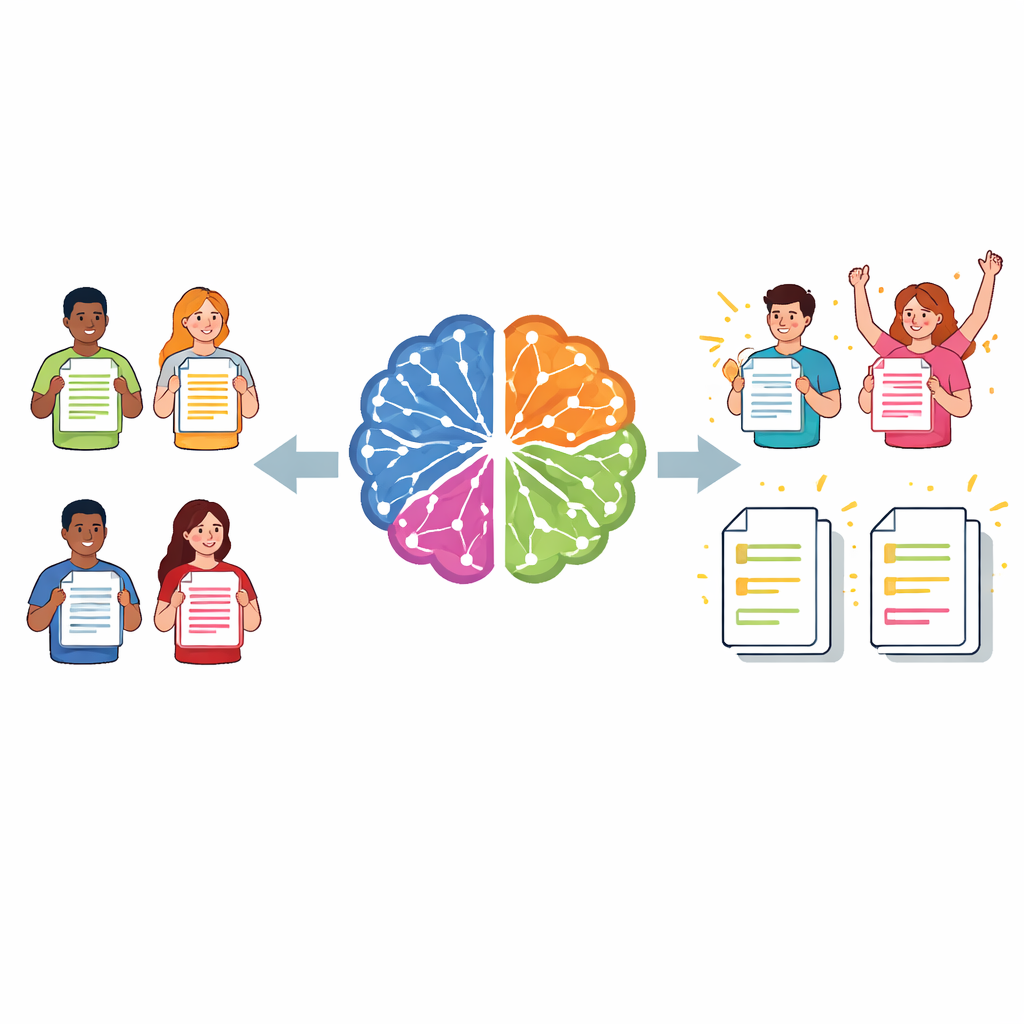

A Three-Step Intelligent Writing Coach

The proposed system is built on LLaMA-3, a modern large language model, adapted with a lightweight fine-tuning method called Multi-task LoRA (Low-Rank Adaptation), or M-LoRA. In the first step, the model is trained on several tasks at once: for any given essay, it predicts a band score for each of the four IELTS criteria and generates targeted comments for each area. Separate “heads” specialize in each trait while sharing a common representation of the text, which helps the model avoid “catastrophic forgetting” when it encounters many different essay prompts.
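The summary does not describe M-LoRA's internals, so the toy sketch below is only an illustration of the two ideas the paragraph combines. The first is a frozen weight matrix adapted by a trainable low-rank update, as in standard LoRA. The second is one lightweight scoring head per criterion on top of shared features. The names `LoRALinear` and `MultiTaskScorer` are hypothetical, the dimensions are tiny, and feedback generation is omitted entirely; it is not the authors' architecture.

```python
import random
from typing import Dict, List

Vector = List[float]
Matrix = List[List[float]]

CRITERIA = (
    "Task Response",
    "Coherence and Cohesion",
    "Lexical Resource",
    "Grammatical Range and Accuracy",
)


def matvec(m: Matrix, x: Vector) -> Vector:
    """Multiply a matrix (stored as a list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in m]


class LoRALinear:
    """Frozen base weight W0 plus a trainable low-rank update (alpha / r) * B @ A."""

    def __init__(self, w0: Matrix, rank: int, alpha: float, seed: int = 0) -> None:
        rng = random.Random(seed)
        d_out, d_in = len(w0), len(w0[0])
        self.w0 = w0  # pretrained weight, kept frozen during fine-tuning
        self.scale = alpha / rank
        # A (rank x d_in) gets a small random init; B (d_out x rank) starts at zero,
        # so before any training the adapted layer reproduces the base layer exactly.
        self.a = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.b = [[0.0] * rank for _ in range(d_out)]

    def forward(self, x: Vector) -> Vector:
        base = matvec(self.w0, x)
        delta = matvec(self.b, matvec(self.a, x))
        return [h + self.scale * d for h, d in zip(base, delta)]


class MultiTaskScorer:
    """Hypothetical multi-task model: shared LoRA-adapted features, one linear head per criterion."""

    def __init__(self, shared: LoRALinear, heads: Dict[str, Vector]) -> None:
        self.shared = shared
        self.heads = heads  # criterion name -> head weights over the shared features

    def predict(self, x: Vector) -> Dict[str, float]:
        features = self.shared.forward(x)  # computed once, reused by every head
        return {
            name: sum(w * f for w, f in zip(weights, features))
            for name, weights in self.heads.items()
        }


if __name__ == "__main__":
    # Toy 2-dimensional "essay embedding" and untrained weights, for illustration only.
    layer = LoRALinear([[1.0, 0.0], [0.0, 1.0]], rank=1, alpha=2.0)
    scorer = MultiTaskScorer(layer, {c: [1.0, 1.0] for c in CRITERIA})
    print(scorer.predict([3.0, 2.5]))
```

Because only the small `A` and `B` matrices (plus the heads) are trained while `W0` stays frozen, this style of tuning touches far fewer parameters than full fine-tuning, which is what makes it "lightweight".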

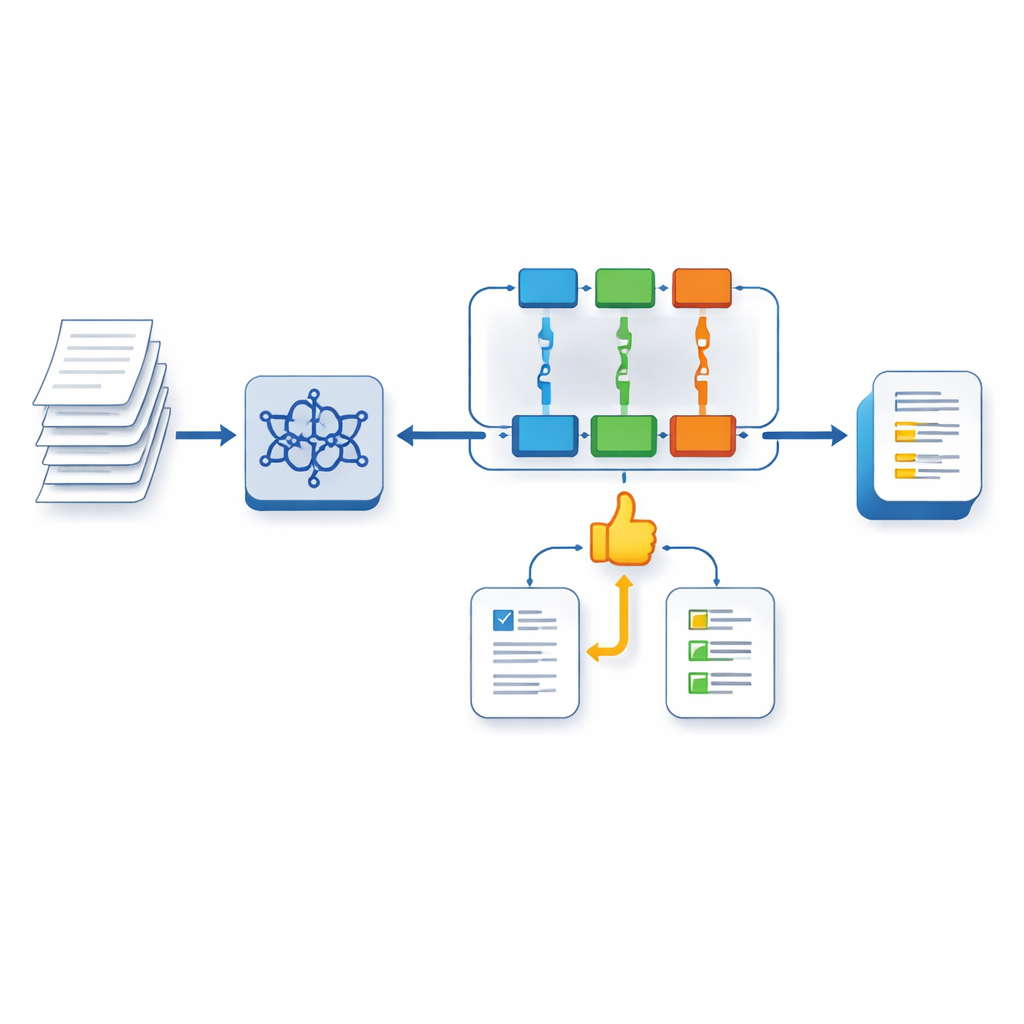

Teaching the AI to Value Good Feedback

In the second step, the authors train a separate reward model that learns to judge the quality of the feedback itself by comparing model-generated comments with teacher-written ones. This reward model acts as a stand-in for human examiners during training. In the third step, the main system is further refined with a reinforcement learning method called Proximal Policy Optimization (PPO). Here, the model generates feedback, the reward model scores how well that feedback aligns with expert preferences, and the system adjusts its behavior over many cycles toward higher-quality, more examiner-like responses.
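The summary does not spell out the training objectives, so the sketch below shows the standard forms these two steps often take in RLHF-style pipelines, under stated assumptions. It includes a Bradley-Terry style pairwise loss for the reward model, assuming teacher-written feedback is treated as preferred over a model-generated alternative for the same essay. It also includes a reward shaped with a KL penalty toward the step-one model, which is a common practice but is assumed here, and PPO's clipped surrogate objective. Function names and defaults (`beta=0.1`, `clip_eps=0.2`) are illustrative, not values from the paper.

```python
import math
from typing import Sequence


def softplus(z: float) -> float:
    """Numerically stable log(1 + exp(z))."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def pairwise_reward_loss(r_preferred: float, r_rejected: float) -> float:
    """Bradley-Terry loss -log(sigmoid(r_preferred - r_rejected)) for one comparison.

    Assumption: r_preferred scores the teacher-written feedback and r_rejected
    a model-generated alternative for the same essay.
    """
    return softplus(-(r_preferred - r_rejected))


def shaped_reward(rm_score: float, logp_policy: Sequence[float],
                  logp_ref: Sequence[float], beta: float = 0.1) -> float:
    """Reward-model score minus a KL penalty keeping the policy near a reference model."""
    # Sum of per-token log-prob differences: a sample estimate of the KL divergence.
    kl_estimate = sum(p - r for p, r in zip(logp_policy, logp_ref))
    return rm_score - beta * kl_estimate


def ppo_clip_loss(logp_new: Sequence[float], logp_old: Sequence[float],
                  advantages: Sequence[float], clip_eps: float = 0.2) -> float:
    """PPO clipped surrogate objective, negated so that lower is better."""
    if not (len(logp_new) == len(logp_old) == len(advantages)) or not advantages:
        raise ValueError("inputs must be non-empty and of equal length")
    total = 0.0
    for new, old, adv in zip(logp_new, logp_old, advantages):
        ratio = math.exp(new - old)  # pi_new(action) / pi_old(action)
        clipped_ratio = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        # Take the pessimistic (smaller) of the unclipped and clipped terms.
        total += min(ratio * adv, clipped_ratio * adv)
    return -total / len(advantages)
```

The clipping keeps each update close to the policy that generated the feedback being scored, which is the main design choice that distinguishes PPO from a plain policy-gradient step.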

What the Results Mean for Learners and Teachers

When tested, the new system achieved higher agreement with human scores than strong alternatives, including GPT-4 prompted in various ways, and produced feedback that both automatic measures and human judges rated as closer to expert comments. While the numeric gains in scoring accuracy are modest, the system’s real strength lies in delivering detailed, rubric-based, personalized advice that resembles what a skilled teacher might write. For IELTS candidates, this approach points toward affordable, always-available writing support that does more than assign a band score: it explains why the essay earned that score and how to do better next time.
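The summary does not name the statistic behind "agreement with human scores". Quadratic weighted kappa (QWK) is a common choice in automated essay scoring, so the sketch below computes it for band scores, assuming half-band steps on a 0 to 9 scale. Whether the paper reports QWK, a correlation, or another measure is not stated here, and the function name is hypothetical.

```python
from typing import List, Sequence


def quadratic_weighted_kappa(human: Sequence[float], model: Sequence[float],
                             min_band: float = 0.0, max_band: float = 9.0) -> float:
    """Quadratic weighted kappa between two lists of band scores in half-band steps."""
    if len(human) != len(model) or not human:
        raise ValueError("score lists must be non-empty and of equal length")

    def to_index(score: float) -> int:
        # Map a half-band score such as 6.5 onto an integer category (0.0 -> 0, 0.5 -> 1, ...).
        if not min_band <= score <= max_band:
            raise ValueError(f"score {score!r} outside [{min_band}, {max_band}]")
        return int(round((score - min_band) * 2))

    n_cat = to_index(max_band) + 1
    observed: List[List[float]] = [[0.0] * n_cat for _ in range(n_cat)]
    for h, m in zip(human, model):
        observed[to_index(h)][to_index(m)] += 1.0

    n = float(len(human))
    hist_human = [sum(row) for row in observed]
    hist_model = [sum(observed[i][j] for i in range(n_cat)) for j in range(n_cat)]

    weighted_observed = 0.0
    weighted_expected = 0.0
    for i in range(n_cat):
        for j in range(n_cat):
            weight = (i - j) ** 2 / (n_cat - 1) ** 2  # quadratic disagreement penalty
            weighted_observed += weight * observed[i][j]
            # Expected count if the two raters' score distributions were independent.
            weighted_expected += weight * hist_human[i] * hist_model[j] / n
    if weighted_expected == 0.0:
        raise ValueError("kappa is undefined when both raters give one identical score")
    return 1.0 - weighted_observed / weighted_expected
```

QWK is 1 for perfect agreement and about 0 for chance-level agreement, and its quadratic weights penalize a two-category miss four times as heavily as a one-category miss.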

Citation: Xu, W., Kassim, M.S.S. & Mahmud, R. Enhancing IELTS writing automated scoring with M-LoRA fine-tuned LLAMA-3 and human feedback-driven PPO reinforcement learning. Sci Rep 16, 10865 (2026). https://doi.org/10.1038/s41598-026-43318-w

Keywords: automated essay scoring, IELTS writing, large language models, educational feedback, reinforcement learning