Clear Sky Science

Comparative assessment of machine learning models for polymer solution viscosity prediction in enhanced oil recovery

Why predicting fluid thickness in oil fields matters

Getting the last drops of oil out of an aging field is surprisingly tricky. Engineers often thicken injected water with special polymers so it pushes oil smoothly instead of fingering through it and leaving valuable fuel behind. But tuning how “thick” these polymer solutions should be usually demands hundreds of lab tests under different temperatures, salt levels, flow rates, and chemical doses. This study shows how modern machine learning can learn from those experiments and then instantly predict polymer thickness (viscosity), helping design more efficient and lower‑risk oil recovery projects.

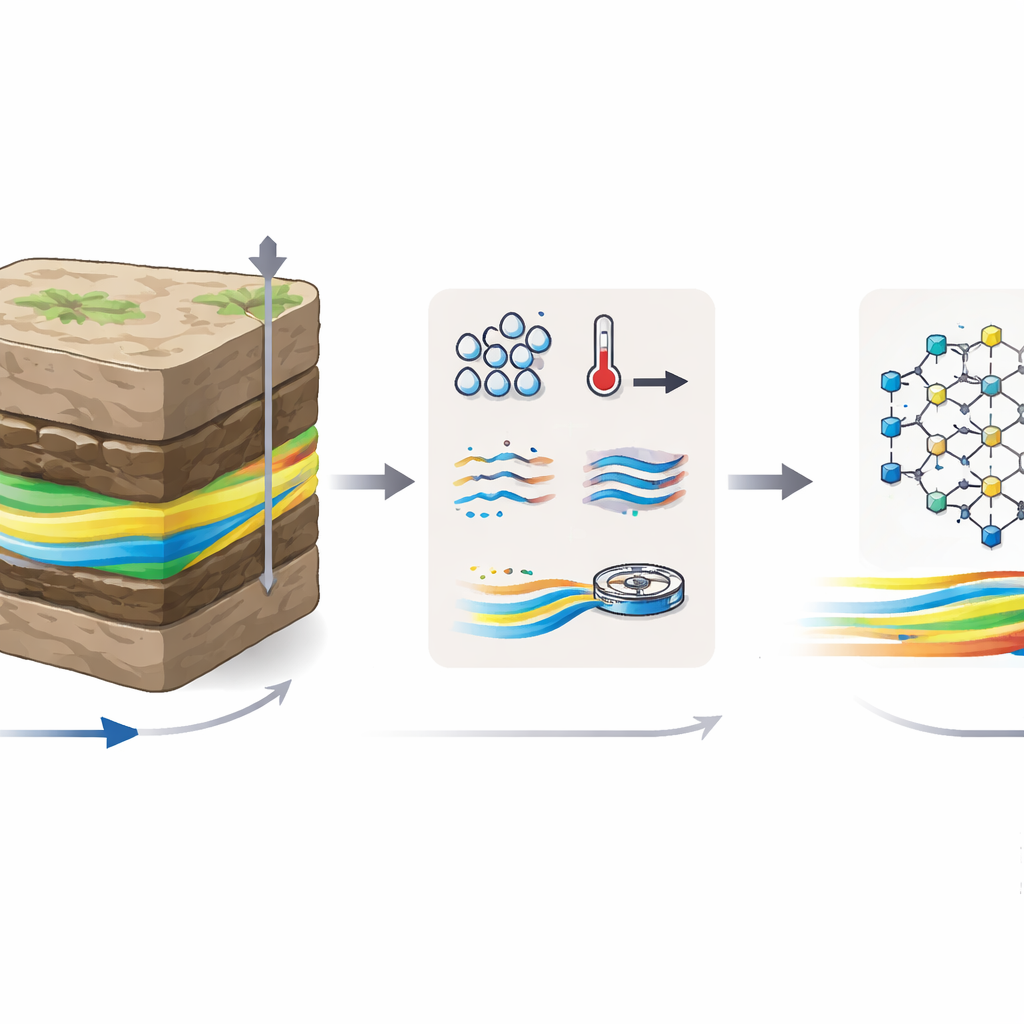

How thick fluids help move stubborn oil

When a reservoir is first tapped, oil flows relatively easily. Over time, what remains is trapped in rock pores and does not move well under normal waterflooding. If the injected water is too thin compared to the oil, it snakes through the easiest paths, bypassing much of the remaining crude. Adding polymers makes the injected water thicker, allowing it to push more evenly, improving “sweep” of the rock and raising total recovery. The catch is that polymer behavior is very sensitive to conditions: higher concentration generally thickens the fluid, higher temperature and stronger shear (faster flow) usually thin it, and dissolved salts can either weaken or reshape the polymer chains. Because these factors interact in complex, non‑linear ways, simple formulas often fail.

Building a rich dataset from lab experiments

The authors focused on a widely used polymer (SAV10, a type of partially hydrolyzed polyacrylamide, or HPAM) and collected 1,079 measurements of its viscosity under realistic oil‑field conditions. They varied four factors: polymer concentration (from 1,000 to 4,000 parts per million), temperature (25–80 °C), salinity (mimicking Arabian seawater and its dilutions), and flow rate, expressed as shear rate. As expected, the thickest solutions appeared at low temperature, low salinity, high concentration, and gentle flow; all samples showed “shear‑thinning” behavior, becoming less viscous as they were stirred or pumped faster. Before modeling, the team cleaned and standardized the data, checked for outliers, and examined correlations. Concentration showed the strongest positive link to viscosity, while temperature, shear, and salinity tended to reduce it, though not in a simple straight‑line fashion.
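The standardization and correlation check described above can be sketched in a few lines of plain Python. The grid of conditions and the viscosity formula below are invented purely for illustration (they are not the study's data or a fitted model), and the helper names `toy_viscosity`, `zscore`, and `pearson` are hypothetical.

```python
import math
from itertools import product

# Hypothetical toy grid of test conditions (NOT the study's measurements).
concentrations = [1000, 2000, 3000, 4000]   # ppm
temperatures = [25, 50, 80]                 # deg C
shear_rates = [1, 10, 100]                  # 1/s

def toy_viscosity(conc, temp, shear):
    """Made-up shear-thinning formula, used only to generate example numbers."""
    return 1e-5 * conc ** 1.6 * shear ** (-0.4) * math.exp(-0.01 * (temp - 25))

# One row per combination: (concentration, temperature, shear rate, viscosity).
rows = [(c, t, s, toy_viscosity(c, t, s))
        for c, t, s in product(concentrations, temperatures, shear_rates)]

def zscore(values):
    """Standardize a column to zero mean and unit (population) standard deviation."""
    mean = sum(values) / len(values)
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))
    return [(v - mean) / std for v in values]

def pearson(xs, ys):
    """Pearson correlation: the mean product of the two standardized columns."""
    zx, zy = zscore(xs), zscore(ys)
    return sum(a * b for a, b in zip(zx, zy)) / len(xs)

columns = list(zip(*rows))   # transpose rows into four columns
names = ["concentration", "temperature", "shear_rate"]
correlations = {name: pearson(col, columns[3]) for name, col in zip(names, columns)}

for name, r in correlations.items():
    print(f"{name:>13}: r = {r:+.2f}")
```

Pearson correlation only measures straight-line association, so a modest coefficient does not imply a weak effect. That is one reason the non-linear relationships noted above call for more flexible models.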

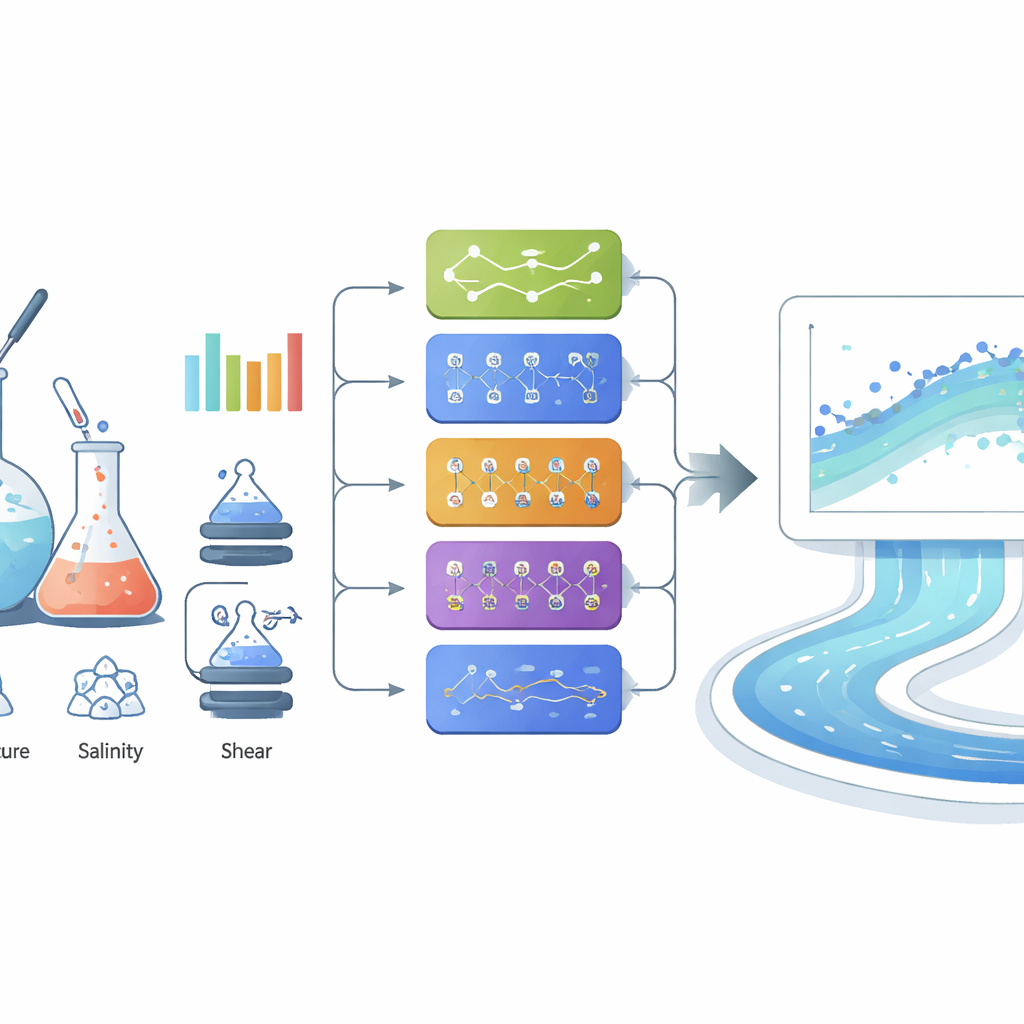

Putting many learning methods to the test

The researchers then treated viscosity prediction as a data‑driven task and systematically compared different machine learning methods. They started with familiar tools—linear regression, support vector machines, decision trees, and several neural networks—and found that more flexible models did far better than simple straight‑line fits. A “wide” neural network with a single hidden layer of 100 neurons already predicted viscosities with excellent accuracy, closely matching measured values across the full range. Next, they evaluated a suite of more advanced methods known as ensemble models, which combine the strengths of many decision trees or boosted learners, along with Gaussian process regression, a probabilistic method that also provides uncertainty estimates. Several of these advanced models performed extremely well, with very small prediction errors even at high viscosities, where many traditional approaches break down.
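To see why straight-line fits struggle on shear-thinning data, the sketch below compares an ordinary linear fit with a fit that is linear only in log-log space, scoring both with R² and root-mean-square error (RMSE). The data are synthetic and noise-free (a made-up power law, not the study's measurements), and the log-log fit is only a simple stand-in for a more flexible model; it is not one of the methods the authors tested.

```python
import math

def fit_line(xs, ys):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def r2(actual, predicted):
    """Coefficient of determination."""
    mean_y = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean_y) ** 2 for a in actual)
    return 1 - ss_res / ss_tot

def rmse(actual, predicted):
    """Root-mean-square error."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))

# Synthetic shear-thinning curve (assumed constants 500 and -0.6 are made up).
shear = [10 ** (i / 10) for i in range(31)]   # 1 to 1000 1/s, log-spaced
visc = [500 * g ** (-0.6) for g in shear]

# Model A: straight line in the raw variables.
slope, intercept = fit_line(shear, visc)
pred_linear = [slope * g + intercept for g in shear]

# Model B: straight line in log-log space, i.e. a power law in raw space.
b, a = fit_line([math.log(g) for g in shear], [math.log(v) for v in visc])
pred_power = [math.exp(a + b * math.log(g)) for g in shear]

r2_linear, r2_power = r2(visc, pred_linear), r2(visc, pred_power)
print(f"linear fit:    R2 = {r2_linear:.3f}, RMSE = {rmse(visc, pred_linear):.2f}")
print(f"power-law fit: R2 = {r2_power:.3f}, RMSE = {rmse(visc, pred_power):.2f}")
```

Real measurements also depend on concentration, temperature, and salinity at the same time, which is where methods such as neural networks and tree ensembles earn their keep over any single hand-picked curve.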

Why stacking models gave the sharpest predictions

To squeeze out the last bit of accuracy, the team built a “stacking” ensemble that blends three of the best individual models (Extra Trees, XGBoost, and CatBoost) using a simple meta‑model on top. Each base model learned slightly different aspects of how concentration, shear, temperature, and salinity shape viscosity; the meta‑model then learned how to weight their outputs. This stacked system delivered the strongest performance of all, with a near‑perfect match between predicted and measured viscosities and very low average error, including for the most viscous cases that are most important for field design. Explainable‑AI tools, such as permutation importance and SHAP analysis, confirmed that polymer concentration is the dominant control, followed by shear rate, temperature, and salinity—an ordering that agrees with basic polymer physics.
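The stacking idea can be illustrated with a deliberately small, standard-library-only sketch. Everything specific here is an assumption made for illustration: the data are synthetic, two nearest-neighbour averagers stand in for Extra Trees, XGBoost, and CatBoost, and the meta-model is reduced to a single blend weight. It shows the mechanics (out-of-fold base predictions feeding a meta-model), not the authors' pipeline.

```python
import math
import random

rng = random.Random(0)

def toy_viscosity(conc, shear):
    """Made-up formula with about 5% noise; for illustration only."""
    return 1e-5 * conc ** 1.6 * shear ** (-0.4) * (1 + rng.gauss(0, 0.05))

# Synthetic samples: ((concentration in ppm, shear rate in 1/s), viscosity).
data = []
for _ in range(120):
    conc, shear = rng.uniform(1000, 4000), 10 ** rng.uniform(0, 2)
    data.append(((conc, shear), toy_viscosity(conc, shear)))

def knn_predict(train, x, k):
    """Average the targets of the k nearest training points (log-feature distance)."""
    def dist(row):
        return sum((math.log(p) - math.log(q)) ** 2 for p, q in zip(row[0], x))
    nearest = sorted(train, key=dist)[:k]
    return sum(target for _, target in nearest) / k

base_models = {"knn_1": 1, "knn_10": 10}   # hypothetical base learners: name -> k

# Step 1: out-of-fold predictions, so every sample is predicted by base
# models that did not see it during fitting.
n_folds = 5
oof = {name: [0.0] * len(data) for name in base_models}
for fold in range(n_folds):
    train = [row for i, row in enumerate(data) if i % n_folds != fold]
    for i, (x, _) in enumerate(data):
        if i % n_folds == fold:
            for name, k in base_models.items():
                oof[name][i] = knn_predict(train, x, k)

# Step 2: meta-model, here one least-squares blend weight w such that
# prediction = w * knn_1 + (1 - w) * knn_10.
y = [target for _, target in data]
p1, p2 = oof["knn_1"], oof["knn_10"]
w = (sum((t - b) * (a - b) for t, a, b in zip(y, p1, p2))
     / sum((a - b) ** 2 for a, b in zip(p1, p2)))
stacked = [w * a + (1 - w) * b for a, b in zip(p1, p2)]

def rmse(actual, predicted):
    """Root-mean-square error."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))

print(f"blend weight w = {w:.2f}")
for label, pred in [("knn_1", p1), ("knn_10", p2), ("stacked", stacked)]:
    print(f"{label:>8}: RMSE = {rmse(y, pred):.3f}")
```

Because the blend weight is fitted and scored on the same out-of-fold predictions, the stacked error here cannot be worse than either base model and is somewhat optimistic; an honest comparison would score all three on a separate held-out set. In practice, libraries such as scikit-learn offer a ready-made `StackingRegressor` that handles the cross-validated predictions internally.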

What this means for future oil recovery design

For a lay reader, the bottom line is that the study turns a slow, trial‑and‑error laboratory process into a fast, data‑driven prediction engine. Once trained, the stacked model can instantly estimate how thick a polymer solution will be under new combinations of salt, heat, flow, and concentration, without running new experiments each time. That can speed up screening of recovery options, cut costs, and reduce uncertainty when planning polymer flooding projects. While the current work is based on one specific polymer and lab‑scale data, the same approach could be extended to other chemistries and additives, ultimately helping engineers tailor smart fluids for tougher reservoirs while relying less on guesswork and more on learned patterns in the data.

Citation: Belkhir, S.A., Pakeer, A.A., Shakeel, M. et al. Comparative assessment of machine learning models for polymer solution viscosity prediction in enhanced oil recovery. Sci Rep 16, 10442 (2026). https://doi.org/10.1038/s41598-026-41045-w

Keywords: polymer flooding, viscosity prediction, machine learning, enhanced oil recovery, ensemble models