Clear Sky Science

Improving acute lymphoblastic leukemia diagnosis through CBAM-enhanced VGG19 deep learning

Why faster blood cancer checks matter

Acute lymphoblastic leukemia (ALL) is an aggressive blood cancer that can become life-threatening in a matter of weeks if not caught and treated quickly. Today, doctors often diagnose ALL by looking at bone marrow and blood cells under a microscope—a careful, time‑consuming task that depends heavily on expert judgment. This study explores how a tailored form of artificial intelligence (AI) might help doctors spot ALL and its subtypes more rapidly and consistently from microscope images, potentially speeding up care while still keeping specialists in control.

A closer look at a childhood blood cancer

ALL arises when immature white blood cells, called lymphoblasts, grow out of control in the bone marrow and spill into the bloodstream. These abnormal cells crowd out healthy blood cells and can spread to the nervous system. ALL is the most common childhood cancer, but it also appears in adults. Under the microscope, specialists divide ALL into three main subtypes (L1, L2, and L3) based on the size and shape of the cells, the texture of the genetic material in the nucleus, and the appearance of the cytoplasm, the material that surrounds the nucleus inside the cell. Distinguishing these subtypes, and separating them from healthy bone marrow, is important for choosing treatment, yet even experts can find this difficult when cell appearances are very similar.

Teaching computers to see what experts see

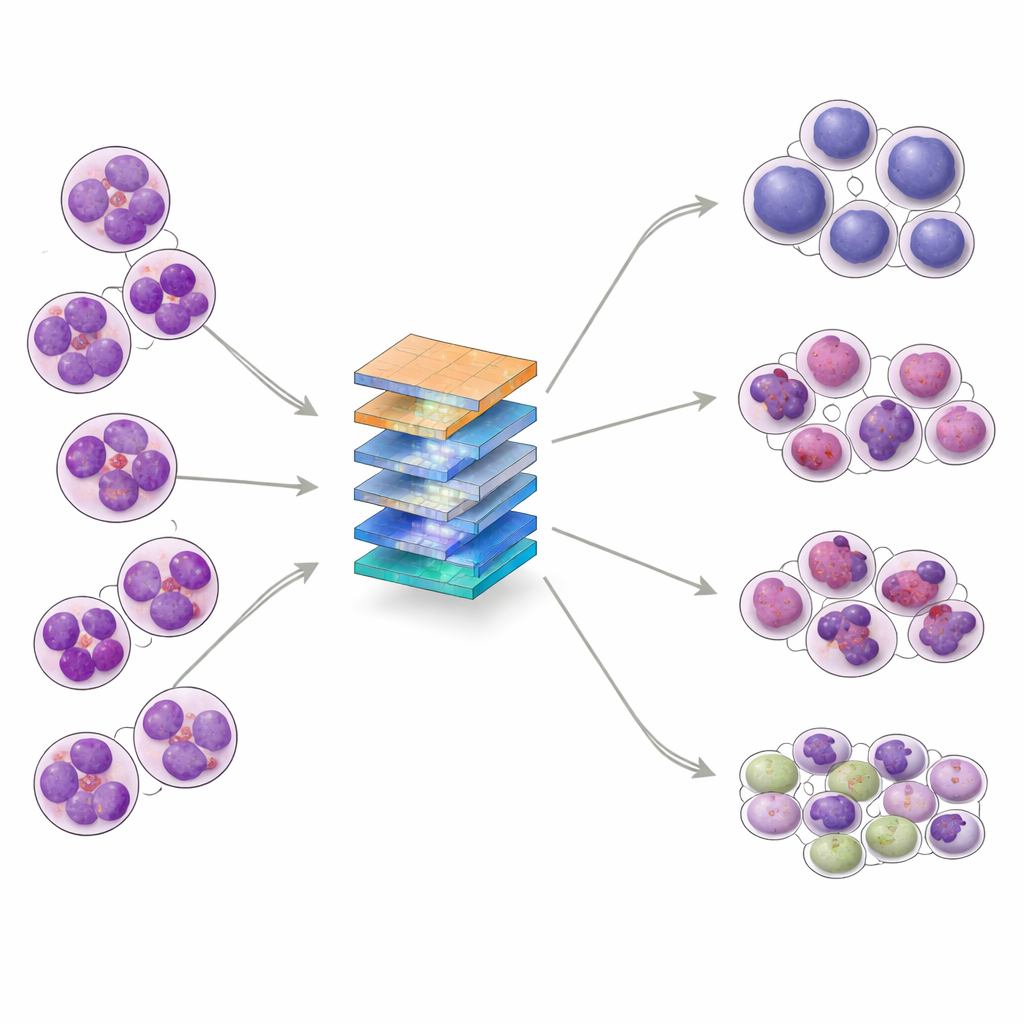

The researchers set out to build an AI system that can sort bone marrow images into four groups: the three ALL subtypes and normal (non‑leukemic) samples. They collected 328 high‑magnification images from a clinical laboratory in Pakistan, covering patients aged 10 to 45, and then used data‑augmentation techniques—such as rotation, flipping, and subtle changes in brightness or contrast—to create 1,585 varied training examples while preserving key cell structures. The dataset was split into training, validation, and test sets, and further examined with statistical tools that showed the classes overlap in complex ways, reinforcing that the task is not trivial even for advanced algorithms.
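To make the augmentation step concrete, here is a minimal sketch in Python with NumPy. It is not the authors' pipeline: the function name `augment`, the ranges for the brightness and contrast changes, and the random stand-in image are all assumptions chosen for illustration.

```python
import numpy as np

def augment(image, rng):
    """Return a few varied copies of one image (height x width x colour)."""
    variants = [
        np.rot90(image, k=1),  # rotate 90 degrees in the image plane
        np.fliplr(image),      # mirror left to right
        np.flipud(image),      # mirror top to bottom
    ]
    # Subtle contrast (gain) and brightness (bias) change; ranges are assumed.
    gain = rng.uniform(0.9, 1.1)
    bias = rng.uniform(-10, 10)
    adjusted = np.clip(image.astype(np.float32) * gain + bias, 0, 255)
    variants.append(adjusted.astype(np.uint8))
    return variants

rng = np.random.default_rng(42)
# Random pixels standing in for a real microscope image.
image = rng.integers(0, 256, size=(64, 48, 3), dtype=np.uint8)
augmented = augment(image, rng)
```

Rotations and flips rearrange pixels without altering them, which is why they leave cell structures intact; the brightness and contrast changes are kept small for the same reason.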

How the new AI model pays attention

At the heart of the study is a modified version of a well‑known image‑recognition network called VGG19. On top of this, the authors added a “convolutional block attention module” (CBAM) after every major pooling step. In simple terms, the model first learns many layered features from the images, then uses attention to decide which features and which regions in each image deserve more weight. One part of the attention mechanism emphasizes the most informative channels—patterns such as nuclear edges or internal texture—while another part highlights the most important locations within the cell. By stacking these attention steps at several depths in the network, the system keeps track of subtle visual cues that help distinguish, for example, between closely related leukemia subtypes.
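The two attention steps can be sketched in a few lines of NumPy. This follows the commonly published CBAM recipe (channel attention first, then spatial attention) rather than the authors' own code; the weights here are random instead of learned, and the reduction ratio `r = 4`, the 7x7 kernel, and the feature-map size are assumptions for illustration.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def channel_attention(feat, w1, w2):
    """Reweight channels. feat: (C, H, W); w1: (C//r, C); w2: (C, C//r)."""
    avg = feat.mean(axis=(1, 2))  # average-pooled descriptor per channel
    mx = feat.max(axis=(1, 2))    # max-pooled descriptor per channel

    def mlp(v):
        # Small shared two-layer network with a ReLU in between.
        return w2 @ np.maximum(w1 @ v, 0.0)

    weights = sigmoid(mlp(avg) + mlp(mx))  # one weight in (0, 1) per channel
    return feat * weights[:, None, None]

def spatial_attention(feat, kernel):
    """Reweight locations. kernel: (2, k, k) with odd k."""
    stacked = np.stack([feat.mean(axis=0), feat.max(axis=0)])  # (2, H, W)
    k = kernel.shape[-1]
    pad = k // 2
    padded = np.pad(stacked, ((0, 0), (pad, pad), (pad, pad)))  # zero padding
    height, width = feat.shape[1:]
    scores = np.zeros((height, width))
    for i in range(height):
        for j in range(width):
            scores[i, j] = np.sum(padded[:, i:i + k, j:j + k] * kernel)
    return feat * sigmoid(scores)[None, :, :]  # one weight per location

def cbam(feat, w1, w2, kernel):
    return spatial_attention(channel_attention(feat, w1, w2), kernel)

rng = np.random.default_rng(0)
C, H, W, r = 8, 6, 6, 4
feat = rng.standard_normal((C, H, W))          # stand-in feature map
w1 = rng.standard_normal((C // r, C)) * 0.1    # random, not trained
w2 = rng.standard_normal((C, C // r)) * 0.1
kernel = rng.standard_normal((2, 7, 7)) * 0.1
out = cbam(feat, w1, w2, kernel)
```

The output has the same shape as the input, so a block like this can be dropped in after a pooling step without changing the rest of the network. Because every attention weight lies between 0 and 1, the module can only scale features down, which is how it suppresses less informative channels and regions.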

How well the system performs

The CBAM‑enhanced VGG19 model was tested against several popular deep‑learning architectures, including DenseNet, Inception, MobileNet, and the original VGG19. Using standard measures of performance, the new model achieved an overall accuracy of 98.73%, about 5.7 percentage points higher than VGG19 without attention and better than all competing models on the same data. Detailed results showed strong precision and recall across all four classes, including the rare L3 subtype, and receiver‑operating‑characteristic curves were close to ideal. The team also performed k‑fold cross‑validation—training and testing on multiple splits of the data—to check that results were stable, and conducted “ablation” experiments demonstrating that using both channel and spatial attention at multiple stages was crucial to the gains.
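For readers who want to see how such figures are computed, the sketch below derives accuracy, per-class precision, and per-class recall from a confusion matrix, and builds simple k-fold splits. The counts are hypothetical and are not the study's results; the function names are illustrative, and the interleaved fold assignment is a simplification (real pipelines usually shuffle and balance classes across folds).

```python
def per_class_metrics(confusion):
    """confusion[i][j] = number of samples of true class i predicted as class j."""
    n = len(confusion)
    total = sum(sum(row) for row in confusion)
    correct = sum(confusion[i][i] for i in range(n))
    metrics = {"accuracy": correct / total, "precision": [], "recall": []}
    for c in range(n):
        predicted_c = sum(confusion[i][c] for i in range(n))  # column sum
        actual_c = sum(confusion[c])                          # row sum
        hits = confusion[c][c]
        metrics["precision"].append(hits / predicted_c if predicted_c else 0.0)
        metrics["recall"].append(hits / actual_c if actual_c else 0.0)
    return metrics

def k_fold_indices(n_samples, k):
    """Yield (train, test) index lists; each sample is tested exactly once."""
    indices = list(range(n_samples))
    for fold in range(k):
        test = indices[fold::k]
        held_out = set(test)
        train = [i for i in indices if i not in held_out]
        yield train, test

# Hypothetical counts; rows and columns are ordered L1, L2, L3, normal.
confusion = [
    [48, 2, 0, 0],
    [1, 47, 1, 1],
    [0, 0, 20, 0],
    [0, 1, 0, 39],
]
metrics = per_class_metrics(confusion)
folds = list(k_fold_indices(10, 5))
```

Precision asks how often a predicted label is right, while recall asks how many true cases of a class were found. Reporting both per class matters for a rare class such as L3, where overall accuracy alone could hide poor performance.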

What this could mean for patients and doctors

While the numbers are promising, the authors stress that this system is not ready to replace human experts or to be used on its own in the clinic. The study relies on a relatively small, single‑center dataset, and there is no independent, multi‑hospital test to prove that the model works equally well in different settings or populations. Still, the work shows that carefully designed attention mechanisms can help AI focus on the same fine visual details that pathologists use when reading bone marrow slides. In the future, similar systems, validated on much larger and more diverse datasets, could serve as decision‑support tools—rapidly flagging suspicious cases, suggesting likely ALL subtypes, and showing heat maps of the regions that influenced their predictions—so that doctors can make faster, more confident diagnoses.

Citation: Rahman, S.I.U., Abbas, N., Ali, S. et al. Improving acute lymphoblastic leukemia diagnosis through CBAM-enhanced VGG19 deep learning. Sci Rep 16, 11027 (2026). https://doi.org/10.1038/s41598-026-40184-4

Keywords: acute lymphoblastic leukemia, medical imaging AI, deep learning diagnosis, attention mechanisms, bone marrow microscopy