Clear Sky Science

A multi-region flexible neural interface for behavioral state decoding in freely moving mice

Listening In On The Brain’s Daily Routine

Everyday activities like resting, wandering around, or grabbing a snack feel effortless, but they emerge from millions of nerve cells firing together deep inside the brain. This study shows how a new flexible sensor system, paired with modern artificial intelligence, can “listen” to many brain regions at once in freely moving mice and reliably tell what the animal is doing. In the long run, such technology could help scientists understand brain disorders and build better brain‑computer interfaces that work outside the lab.

A Soft Window Into A Busy Brain

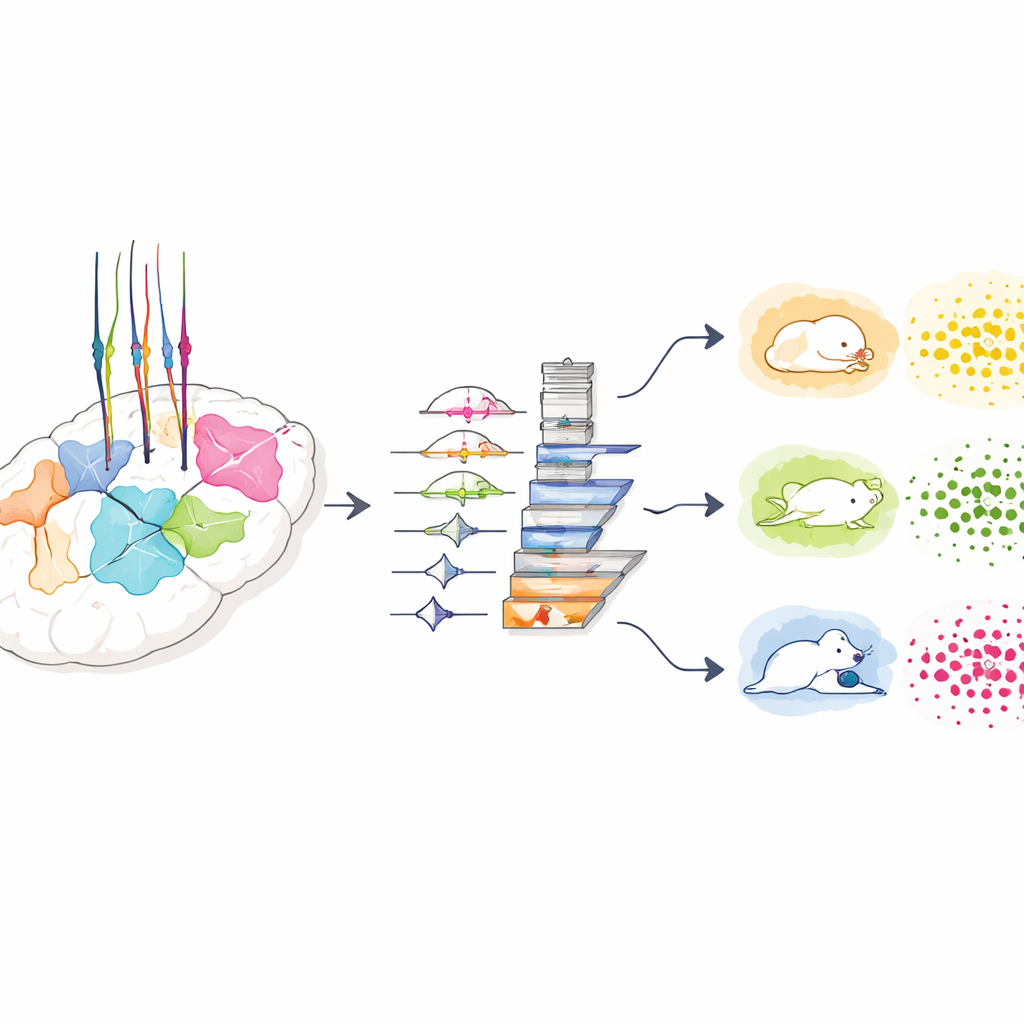

Traditional brain probes are stiff and usually listen to a single area, which can irritate tissue and miss the bigger picture of how different regions cooperate. The team designed a multi‑region flexible probe to address both problems. Each probe carries eight slender arms, or shanks, lined with tiny gold recording pads. Clever omega‑shaped curves built into each shank let the structure stretch and flex with the soft brain, so it can reach distant areas without snapping or pulling on tissue. Tests in gel “phantom” brains and in live mice showed that the device can span more than a centimeter of brain and keep its electrical properties stable for weeks, even as the animals move naturally.

Following Mice As They Rest, Roam, And Feed

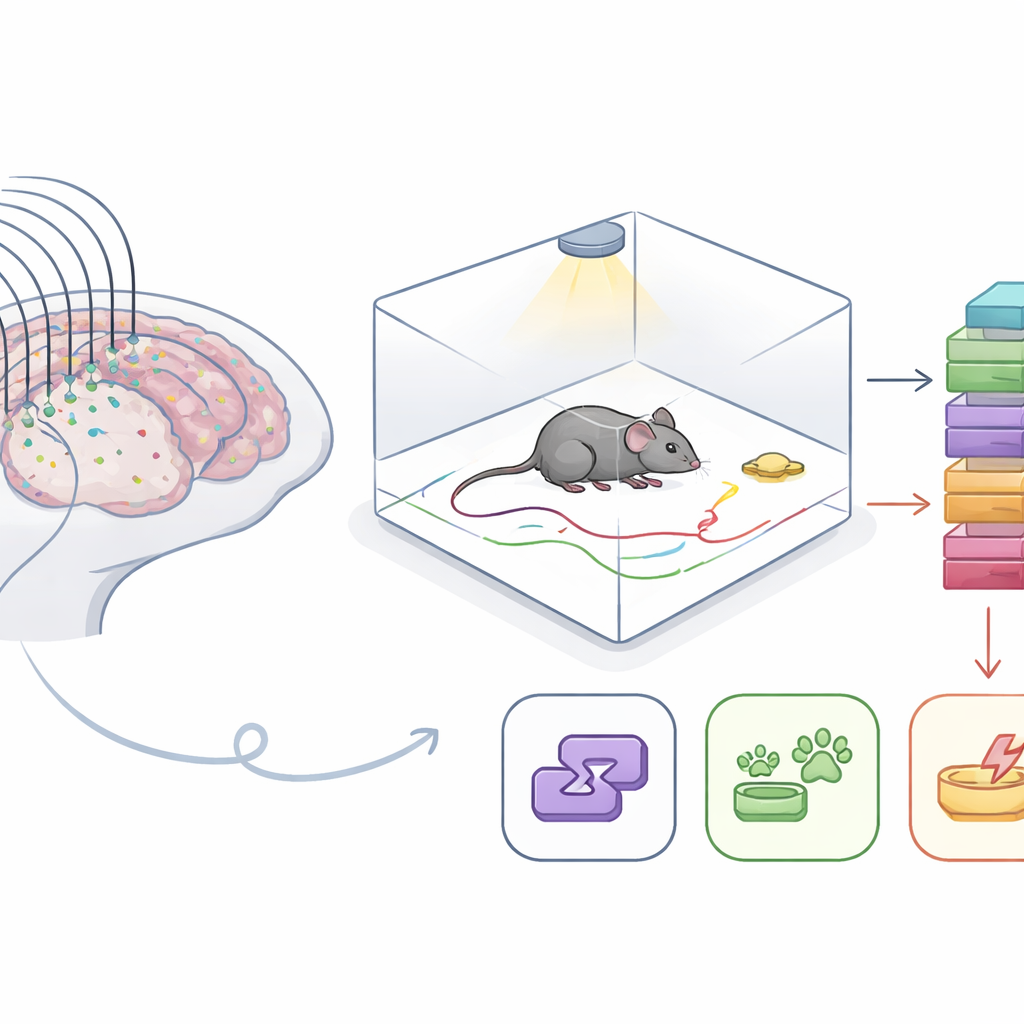

To connect brain activity to real behavior, the researchers built a clear box where mice could move freely, with bedding on the floor, food in a fixed corner, and a light that could deliver brief flashes. Overhead cameras tracked head and tail positions, while the new probe recorded low‑frequency brain signals from up to eight regions, including movement, touch, memory, and vision centers. The team focused on four easily recognized states: resting, roaming around the box, feeding at the food spot, and responding to a rhythmic light flash. By carefully labeling behavior from the videos and matching it to the brain recordings, they assembled a rich dataset spanning about a week of active time across four mice.

Teaching An AI To Read Brain Patterns

Brain signals change rapidly over time and across regions, so the group turned to a deep‑learning model to find patterns humans cannot easily see. Their custom “L‑Conformer” model combines two ideas: one part looks for short‑range shapes in the signal, while another “attention” part tracks how patterns relate over longer stretches of time. By sliding a time window over the recordings, the model learns to link each four‑second chunk of brain activity to one of the four behavioral states. The researchers tested many window lengths and found that four seconds struck the best balance between capturing sustained behavior and avoiding mixtures of different states, reaching nearly 89% accuracy. Competing models drawn from recent brain‑computer‑interface work did not perform as well on this demanding, naturalistic dataset.
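To make the windowing step concrete, here is a minimal Python sketch of cutting a labeled recording into overlapping four‑second windows. It assumes one behavior label per recorded sample, a hypothetical one‑second hop between windows, and a hypothetical rule that a window is kept only if at least 90% of its samples share one state; the study's actual preprocessing may differ. It illustrates the trade‑off described above: longer windows are more likely to straddle a change of state and be discarded as mixtures.

```python
from collections import Counter
from typing import List, Sequence, Tuple


def window_labels(
    labels: Sequence[str],     # one behavior label per recorded sample
    fs: int,                   # sampling rate in Hz (toy value in the usage below)
    win_s: float = 4.0,        # window length in seconds
    step_s: float = 1.0,       # hop between window starts (hypothetical)
    min_purity: float = 0.9,   # hypothetical: share of samples that must agree
) -> List[Tuple[int, str]]:
    """Return (start_sample, label) for every window dominated by a single state.

    The caller can slice the multichannel signal with
    signal[start:start + round(win_s * fs)] to get the matching model input.
    """
    win = int(round(win_s * fs))
    step = max(1, int(round(step_s * fs)))
    kept: List[Tuple[int, str]] = []
    for start in range(0, len(labels) - win + 1, step):
        # Most frequent state inside this window and how many samples carry it.
        label, count = Counter(labels[start:start + win]).most_common(1)[0]
        if count / win >= min_purity:
            kept.append((start, label))
        # Windows that mix states below the purity threshold are skipped.
    return kept


toy = ["rest"] * 10 + ["roam"] * 10          # 10 s of toy labels at fs = 2 Hz
print(window_labels(toy, fs=2))              # [(0, 'rest'), (2, 'rest'), (10, 'roam'), (12, 'roam')]
print(window_labels(toy, fs=2, win_s=8.0))   # []
```

With this toy recording, the four‑second windows that cross the switch from resting to roaming are dropped, and doubling the window to eight seconds leaves no clean windows at all.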

Many Brain Regions Beat One

A key question was whether it is better to pack many electrodes into a single “favorite” region or to spread them across the brain. When the model was trained on signals from one area at a time, performance varied widely and was often modest. Combining all eight regions boosted average accuracy to nearly 88%. The team then made fair, head‑to‑head comparisons by keeping the total number of channels the same while changing how they were placed. With only a few channels, concentrating them in one region worked slightly better. But once signals from five or more regions were included, the distributed layout clearly pulled ahead and kept improving, while the single‑region setup hit a ceiling. This suggests that everyday states like resting, roaming, and feeding are true whole‑brain phenomena, not the product of any lone “center.”
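The channel‑matched comparison can be pictured as two ways of choosing the same number of recording channels. The Python sketch below is an illustration, not the authors' procedure: it assumes a hypothetical layout with generic region names and eight channels per region, takes every channel from one region for the concentrated setup, and deals channels out round‑robin across regions for the distributed one, so both setups give the decoder exactly the same number of inputs.

```python
from typing import Dict, List

Layout = Dict[str, List[int]]  # region name -> channel indices (hypothetical)


def concentrated(layout: Layout, region: str, n: int) -> List[int]:
    """Pick n channels, all from one region."""
    pool = layout[region]
    if n > len(pool):
        raise ValueError(f"region {region!r} has only {len(pool)} channels")
    return pool[:n]


def distributed(layout: Layout, regions: List[str], n: int) -> List[int]:
    """Pick n channels spread as evenly as possible over several regions."""
    picked: List[int] = []
    depth = 0  # how many channels have been taken from each region so far
    while len(picked) < n:
        progressed = False
        for region in regions:
            pool = layout[region]
            if depth < len(pool) and len(picked) < n:
                picked.append(pool[depth])
                progressed = True
        if not progressed:
            raise ValueError("selected regions do not hold n channels")
        depth += 1
    return picked


# Hypothetical layout: regions R0..R7 with 8 channels each, numbered 0..63.
layout = {f"R{i}": list(range(i * 8, i * 8 + 8)) for i in range(8)}
print(concentrated(layout, "R0", 8))                           # 8 channels, 1 region
print(distributed(layout, ["R0", "R1", "R2", "R3", "R4"], 8))  # 8 channels, 5 regions
```

Both calls return eight channel indices; the first stays inside R0, while the second mixes channels from five regions ([0, 8, 16, 24, 32, 1, 9, 17]). Holding the count fixed like this is what lets any accuracy difference be attributed to placement rather than to having more data.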

Stable Decoding Across Days And Different Mice

For any future clinical or assistive use, a decoder must keep working beyond a single recording session or individual. The researchers therefore asked whether their model could handle new days and new animals without constant retraining. When trained on several days of data from one mouse and then tested on later days, accuracy climbed to about 85%, close to same‑day performance, even without re‑tuning the model. In a tougher test, they trained the system on three mice and evaluated it on a fourth. Remarkably, the model still identified that animal’s behavioral state correctly about 70% of the time straight out of the box, and simple fine‑tuning with some of the new mouse’s data pushed accuracy above 80%.
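The two generalization tests differ only in how recordings are split between training and evaluation. A minimal Python sketch of both splits follows, assuming each recording is keyed by a hypothetical (mouse, day) pair; the helper names and mouse labels are illustrative, and model training itself is omitted. The fine‑tuning step is modeled as moving the held‑out mouse's earliest days into a small calibration set, which is one plausible reading of "some of the new mouse's data", not the paper's stated protocol.

```python
from typing import Iterator, List, Tuple

Session = Tuple[str, int]  # (mouse_id, day); hypothetical record key


def cross_day_split(
    sessions: List[Session], mouse: str, n_train_days: int
) -> Tuple[List[Session], List[Session]]:
    """Train on one mouse's earliest days, test on its later days."""
    days = sorted(d for m, d in sessions if m == mouse)
    return ([(mouse, d) for d in days[:n_train_days]],
            [(mouse, d) for d in days[n_train_days:]])


def leave_one_mouse_out(
    sessions: List[Session],
) -> Iterator[Tuple[str, List[Session], List[Session]]]:
    """Yield (held_out, train, test): train on all other mice, test on one."""
    for held_out in sorted({m for m, _ in sessions}):
        train = [s for s in sessions if s[0] != held_out]
        test = [s for s in sessions if s[0] == held_out]
        yield held_out, train, test


def calibration_split(
    test: List[Session], n_calib_days: int
) -> Tuple[List[Session], List[Session]]:
    """Hypothetical fine-tuning split: earliest days adapt, the rest evaluate."""
    ordered = sorted(test, key=lambda s: s[1])
    return ordered[:n_calib_days], ordered[n_calib_days:]


sessions = [(m, d) for m in ["A", "B", "C", "D"] for d in (1, 2, 3)]
print(cross_day_split(sessions, "A", 2))  # ([('A', 1), ('A', 2)], [('A', 3)])
for held_out, train, test in leave_one_mouse_out(sessions):
    calib, evaluate = calibration_split(test, 1)
    print(held_out, len(train), len(calib), len(evaluate))  # e.g. A 9 1 2
```

Keeping whole later days, or a whole mouse, out of training is what makes these tests meaningful: no window from the evaluation data can leak into the model being scored.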

What This Means For Future Brain Interfaces

Put simply, the study shows that a soft, multi‑region “listening net” combined with a powerful learning algorithm can decode what a freely moving mouse is doing with high reliability over weeks and across different animals. For non‑experts, the key idea is that brain states like resting, exploring, and eating are written in large‑scale patterns spread across the brain, and that flexible electronics and AI can read this distributed code without damaging the tissue or starting from scratch each day. In the long term, similar approaches could help monitor internal states in brain disorders, guide therapies, and support brain‑computer interfaces that work in more natural, everyday settings.

Citation: Tian, Y., Li, G., Su, H. et al. A multi-region flexible neural interface for behavioral state decoding in freely moving mice. Microsyst Nanoeng 12, 154 (2026). https://doi.org/10.1038/s41378-026-01258-5

Keywords: brain-computer interface, neural decoding, flexible electrodes, behavioral state, deep learning