Clear Sky Science

A novel quantum convolutional neural network framework for quantum-enhanced classification of pixelated colour images

Seeing More in Fuzzy Pictures

Modern life runs on images, from medical scans and satellite photos to emojis and video game sprites. But as these image collections grow, the computers that analyze them face a dilemma: big, sophisticated models need huge datasets and lots of energy, while many real-world tasks must work with only a handful of tiny, low‑resolution pictures. This paper explores whether the strange rules of quantum physics can help computers spot patterns in such small, noisy images more reliably than today’s standard tools.

Why Tiny Images Are a Big Challenge

Classical convolutional neural networks (CNNs) have transformed image recognition by scanning pictures with small filters and learning layers of patterns. They excel on large, detailed images and massive datasets, such as those used for internet photo tagging. However, in many practical settings—embedded sensors, low‑cost cameras, remote sensing, or icon‑sized displays—only small, 4×4 or 8×8 pixel images are available, often in limited numbers. In this low‑data regime, standard CNNs tend to overfit: they memorize the training examples instead of learning general rules, leading to impressive accuracy on known images but poor performance on new ones.

Bringing Quantum Physics into Vision

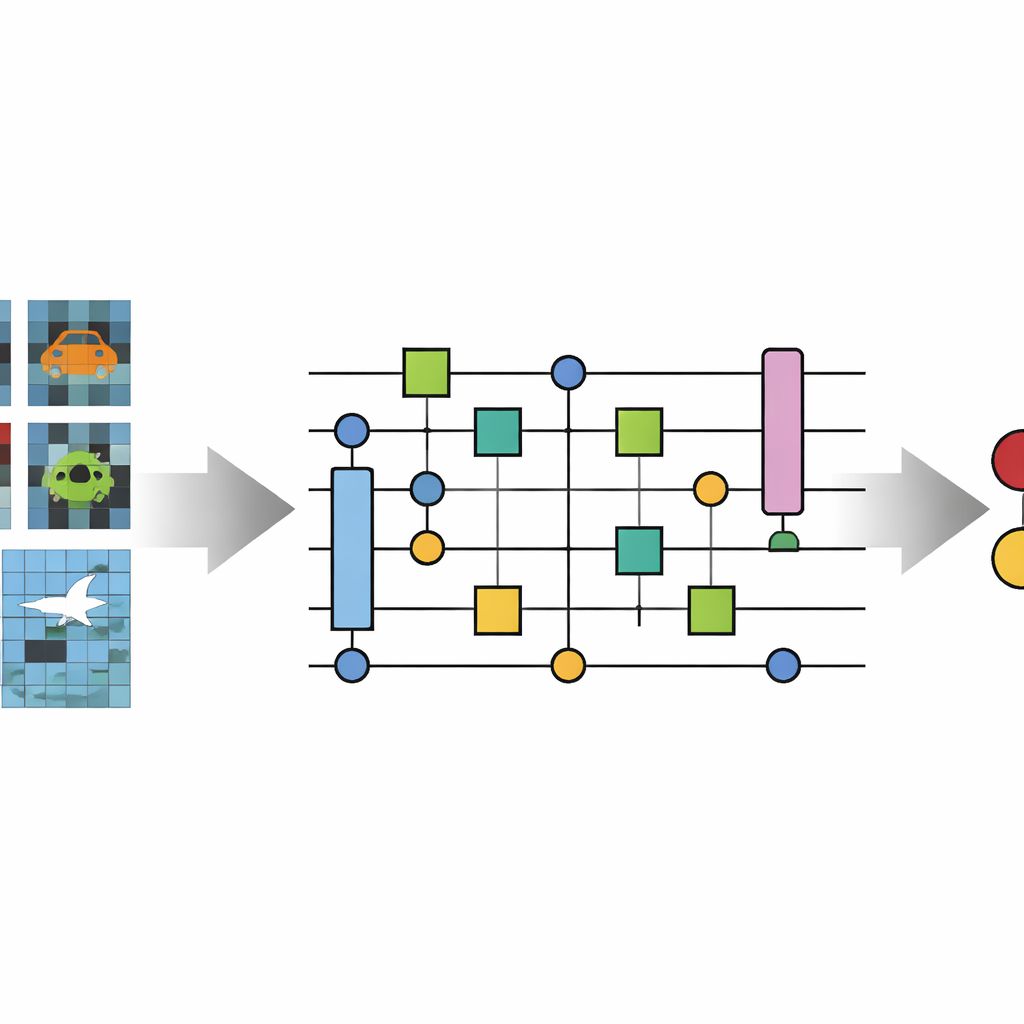

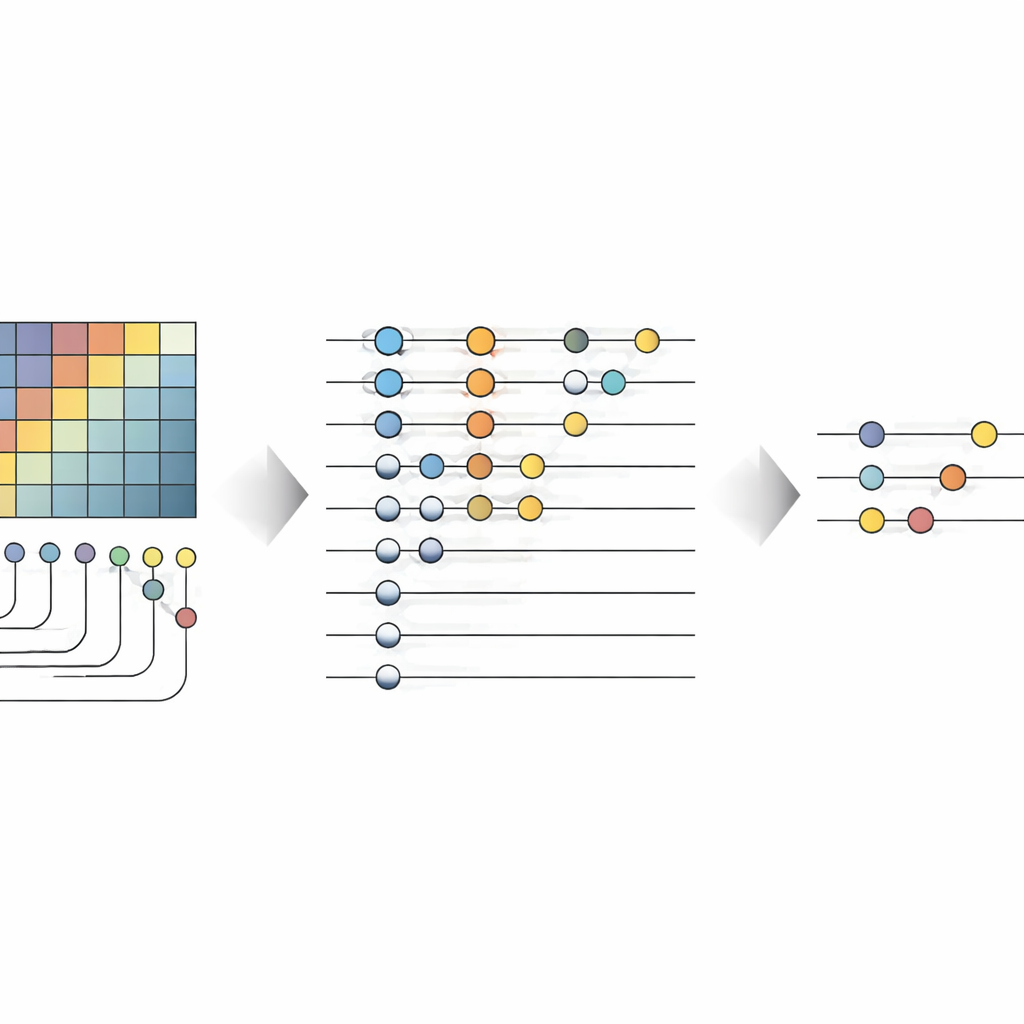

The authors introduce the Novel Quantum Convolutional Neural Network (No-QCNN), a hybrid model that uses both a conventional computer and a simulated quantum device. The core idea is to represent each tiny color image as a collection of quantum bits (qubits). Instead of feeding raw pixel values directly into a network, the method first converts each pixel’s red–green–blue intensities and its position into a compact three‑dimensional block of data. This block is then encoded into a quantum state using carefully chosen rotations and entangling operations between pairs of qubits. Because qubits can exist in superpositions and become entangled, a single quantum state can naturally represent many combinations of pixel colors and positions at once, in principle capturing subtle correlations without very deep networks.
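To make the encoding idea concrete, here is a minimal sketch in plain NumPy. It is not the authors' encoding, whose details this summary does not give. It assumes a generic "angle encoding": each intensity in the range 0 to 1 sets how far a qubit is rotated (the mapping to an angle of π times the intensity is an assumption), and a CNOT gate then entangles the pair. The function name `encode_pair` is hypothetical.

```python
import numpy as np

def ry(theta):
    """Single-qubit rotation about the Y axis by angle theta."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])

# CNOT with the first qubit as control and the second as target.
# Basis order is |00>, |01>, |10>, |11>.
CNOT = np.array([[1, 0, 0, 0],
                 [0, 1, 0, 0],
                 [0, 0, 0, 1],
                 [0, 0, 1, 0]], dtype=float)

def encode_pair(a, b):
    """Encode two intensities in [0, 1] into an entangled 2-qubit state.

    Assumption for illustration: intensity x becomes the angle pi * x.
    """
    state = np.zeros(4)
    state[0] = 1.0                              # start in |00>
    rotations = np.kron(ry(np.pi * a), ry(np.pi * b))
    state = rotations @ state                   # rotate each qubit
    return CNOT @ state                         # entangle the pair

# A half-bright value next to a dark one gives an equal mix of |00> and |11>.
print(np.round(encode_pair(0.5, 0.0), 3))
```

The last line illustrates the point about entanglement: after encoding, the two qubits can no longer be described independently, so the state carries information about the pair rather than about each value alone.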

How the Quantum Network Processes Pictures

Once the image is stored in qubits, No-QCNN processes it through a sequence that mirrors classical CNNs but whose core runs on a quantum circuit. Pairs of qubits undergo small, repeated transformation blocks that act like quantum analogs of convolution filters, mixing information from neighboring "locations" in the image. After each of these quantum "convolution" steps, a quantum pooling operation reduces the effective number of qubits, folding information from two qubits into one. Layer by layer, the circuit narrows down to just a few qubits whose measurement outcomes are interpreted, via a classical post‑processing step, as the predicted class (for example, whether a line is horizontal or vertical, and what color it is). The strengths of these quantum operations are tuned automatically by a classical optimizer that treats the whole setup as a trainable model.
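The convolution-then-pooling pattern can be sketched on the smallest possible case, two qubits. This is a toy illustration under stated assumptions, not the circuit from the paper: the "convolution" block is assumed to be one rotation per qubit followed by a CNOT, and "pooling" is assumed to be a controlled rotation that folds the first qubit into the second, after which the first qubit is ignored. The names `conv_block`, `pool`, and `predict` are hypothetical.

```python
import numpy as np

def ry(theta):
    """Single-qubit rotation about the Y axis by angle theta."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])

def controlled(u):
    """Apply the 2x2 gate u to qubit 1 only when qubit 0 is |1>."""
    gate = np.eye(4)
    gate[2:, 2:] = u
    return gate

CNOT = controlled(np.array([[0.0, 1.0], [1.0, 0.0]]))

def conv_block(state, params):
    """Toy 'convolution': rotate both qubits, then entangle them."""
    local = np.kron(ry(params[0]), ry(params[1]))
    return CNOT @ (local @ state)

def pool(state, phi):
    """Toy 'pooling': fold qubit 0 into qubit 1, then discard qubit 0.

    Returns the probabilities of reading 0 or 1 on the remaining qubit.
    """
    state = controlled(ry(phi)) @ state
    probs = np.abs(state) ** 2          # order: |00>, |01>, |10>, |11>
    return np.array([probs[0] + probs[2], probs[1] + probs[3]])

def predict(state, params):
    """Probability that the surviving qubit reads 1 (the class score)."""
    state = conv_block(state, params[:2])
    return pool(state, params[2])[1]

start = np.array([1.0, 0.0, 0.0, 0.0])  # two qubits in |00>
print(predict(start, [0.4, 1.1, 0.7]))
```

In a trainable model, the three angles passed to `predict` would be the quantities a classical optimizer adjusts, nudging them until the score matches the training labels.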

Testing Quantum vs. Classical Approaches

To see how well No-QCNN works, the researchers created simple but revealing image datasets. In the basic task, each 4×4 image contained either a horizontal or vertical bright line against a noisy background, forming a two‑class (binary) problem. In the more demanding task, 8×8 images contained a line that could be horizontal or vertical and colored red, green, or blue, yielding six possible combinations. For fairness, the quantum model was run on a noise‑free simulator, and its performance was compared with a compact classical CNN of similar complexity. On the binary task, the classical CNN achieved perfect validation accuracy, while No-QCNN reached about 90%, showing that for simple problems with clear structure, the conventional approach still has the edge. On the richer six‑class problem with only 50 images, however, the picture flipped: No-QCNN reached a validation accuracy of about 82%, whereas the classical CNN slumped to 40%, a sign of strong overfitting.
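A dataset like the six-class one is easy to imagine in code. The sketch below is an assumption-laden reconstruction, not the authors' generator: the noise level, the choice of uniform background noise, the random line position, and the label numbering are all made up for illustration, and the function names are hypothetical.

```python
import numpy as np

def make_line_image(size, orientation, channel, rng, noise=0.1):
    """Return a size x size RGB image with one bright colored line.

    Assumptions: background noise is uniform in [0, noise) and the
    line has full intensity 1.0 in a single color channel.
    """
    img = rng.uniform(0.0, noise, size=(size, size, 3))
    idx = rng.integers(size)            # random row or column for the line
    if orientation == "horizontal":
        img[idx, :, channel] = 1.0
    else:
        img[:, idx, channel] = 1.0
    return img

def make_dataset(n, size=8, seed=0):
    """Build n images cycling through 6 classes (2 orientations x 3 colors)."""
    rng = np.random.default_rng(seed)
    images, labels = [], []
    for i in range(n):
        label = i % 6                   # 0-2 horizontal, 3-5 vertical
        orientation = "horizontal" if label < 3 else "vertical"
        channel = label % 3             # 0 red, 1 green, 2 blue
        images.append(make_line_image(size, orientation, channel, rng))
        labels.append(label)
    return np.stack(images), np.array(labels)

X, y = make_dataset(50)
print(X.shape, np.bincount(y))
```

With 50 images spread over six classes, each class has only eight or nine examples, which is the kind of scarcity the comparison in this section is about.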

Where Quantum Vision Helps Most

The experiments revealed both promise and limits. As the authors increased the dataset size and training time, No-QCNN’s performance gradually declined. The fixed number of qubits and shallow circuit depth meant the model could not easily absorb more data, and repeatedly sampling quantum states introduced noise into the training process. Yet in small, correlation‑rich datasets, especially the six‑class task with very few images per class, the quantum model generalized better than the classical CNN. In plain terms, the quantum circuit resisted the temptation to memorize the training images and instead learned a rule that transferred more reliably to new examples.
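The sampling noise mentioned above has a simple statistical origin, which the following sketch illustrates. It is a generic illustration, not a model of the authors' experiments: a quantum measurement yields 0 or 1, so a probability must be estimated from a finite number of repeated runs ("shots"), and the estimate wobbles by roughly the square root of p(1 − p)/shots. The function names and the shot counts are assumptions.

```python
import math
import numpy as np

def shot_estimate(p_true, shots, seed=0):
    """Estimate a measurement probability from a finite number of shots."""
    rng = np.random.default_rng(seed)
    ones = rng.binomial(shots, p_true)  # how many shots returned 1
    return ones / shots

def standard_error(p_true, shots):
    """Typical size of the estimation error for a given shot count."""
    return math.sqrt(p_true * (1.0 - p_true) / shots)

for shots in (100, 10_000):
    print(shots, shot_estimate(0.3, shots), round(standard_error(0.3, shots), 4))
```

Because the error shrinks only with the square root of the number of shots, cutting it tenfold takes a hundred times more measurements, and any optimizer working from these estimates sees a slightly different answer each time it asks.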

What This Means for the Future

For a non‑specialist, the key takeaway is that quantum versions of neural networks are not magic accelerators for all image problems, nor are they ready to replace today’s deep‑learning systems. Instead, this study identifies a realistic niche where quantum hardware could matter first: tiny, low‑data image tasks where patterns are subtle and classical models easily overfit. No-QCNN shows that, at least in the noise‑free simulations used here, carefully designed quantum circuits can compete with, and sometimes surpass, classical CNNs in generalization, albeit with much longer training times. Whether that advantage survives on today’s early, noisy quantum hardware remains to be tested. As quantum processors become more powerful and less error‑prone, architectures like No-QCNN may evolve into practical tools for specialized visual tasks in medicine, remote sensing, and beyond.

Citation: Daka, C., Bhattacharyya, S. A novel quantum convolutional neural network framework for quantum-enhanced classification of pixelated colour images. Sci Rep 16, 10828 (2026). https://doi.org/10.1038/s41598-026-45140-w

Keywords: quantum machine learning, image classification, quantum neural networks, low-resolution images, hybrid quantum-classical models