Clear Sky Science

YOLO-Starfish: fish object detection learning complex underwater features

Why spotting fish underwater is so hard

From climate change to overfishing, understanding what happens beneath the water’s surface is crucial. Scientists and fishery managers increasingly rely on underwater cameras to count and identify fish, but the images they collect are often murky, tinted blue‑green, and filled with overlapping animals. Manually reviewing thousands of hours of video is slow and error‑prone. This paper introduces YOLO‑Starfish, a compact artificial‑intelligence system designed to help underwater robots and cameras automatically find fish in these difficult conditions, along with a new, richly detailed image dataset of freshwater fish.

The underwater world through a camera’s eyes

Underwater object detection is not just regular image analysis with a splash of water added. Light behaves very differently in rivers and lakes: red wavelengths vanish quickly, particles scatter light in many directions, and visibility can change from clear to cloudy within meters. Fish themselves add another layer of difficulty. Different species can look very similar, fish of the same species may range from tiny juveniles to large adults, and they frequently overlap, hide among plants, or swim in and out of shadows. Many existing AI approaches were trained on relatively clean, well‑lit images and rarely see such messy scenes, so they struggle when deployed in the wild.

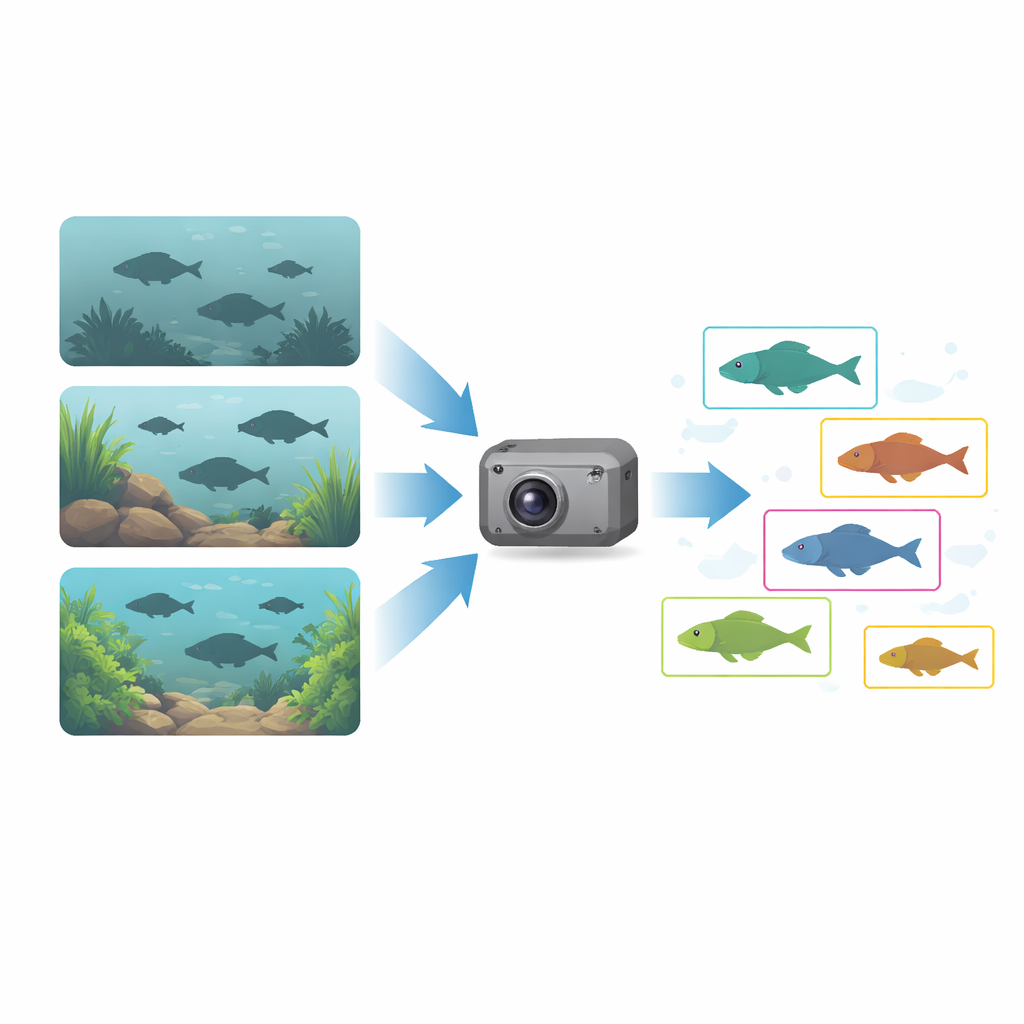

Building a realistic fish photo collection

To tackle this gap, the authors first assembled the Underwater Freshwater Fish Dataset (UFFD), a large collection of real‑world underwater images. They gathered public videos from diverse freshwater habitats, automatically grabbed frames at regular intervals, and then painstakingly selected and labeled high‑quality images. Rather than focusing on just a few famous carp species, they decided to label every recognizable fish, ending up with 19 categories, including an “unknown fish” class for individuals that could not be confidently identified. The final dataset contains 18,594 images (16,904 unique), covering a wide range of water clarity, lighting conditions, camera distances, and fish sizes. Importantly, the species counts follow a “long‑tailed” pattern: a few species are common, while many are rare—much like real ecosystems.
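The frame-grabbing step can be pictured with a minimal sketch. It assumes frames are picked by index at a fixed time spacing; the function name, the parameters, and the example numbers are hypothetical, and the article does not state the interval the authors used.

```python
def sample_frame_indices(total_frames: int, fps: float, every_seconds: float) -> list[int]:
    """Return the indices of frames spaced roughly `every_seconds` apart.

    Hypothetical helper for illustration only; not the authors' pipeline.
    """
    if total_frames < 0 or fps <= 0 or every_seconds <= 0:
        raise ValueError("total_frames must be >= 0; fps and every_seconds must be > 0")
    # Convert the time spacing into a whole number of frames (at least 1).
    step = max(1, round(fps * every_seconds))
    return list(range(0, total_frames, step))


# A 10-second clip at 30 frames per second, sampled every 2 seconds.
print(sample_frame_indices(total_frames=300, fps=30.0, every_seconds=2.0))
# [0, 60, 120, 180, 240]
```

Frames chosen this way would still need the manual selection and labeling described above; the sketch covers only the regular-interval sampling.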

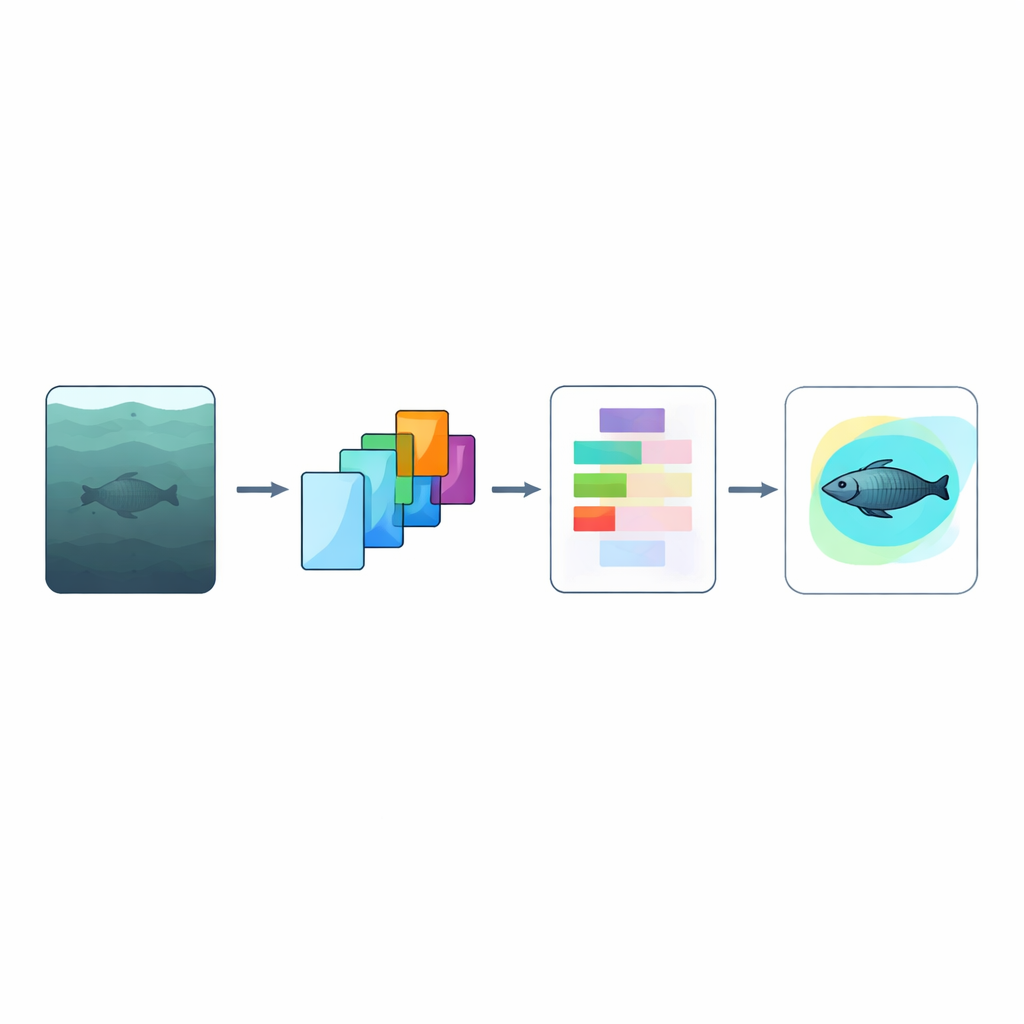

A smarter way to read degraded images

On top of this dataset, the team built YOLO‑Starfish, an improved version of the popular YOLOv8 real‑time detector. Two key ideas drive the upgrade. First, the C2Star module changes how the network combines internal features. Instead of simply adding patterns together, it multiplies them element by element in a so‑called “star operation.” This mirrors how light is actually weakened as it travels through water, where signals are scaled rather than just buried in noise. Mathematically, this multiplication lets the network represent more complex combinations of shapes and colors without becoming bulky, which is vital for battery‑powered underwater robots with limited computing power.
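The difference between adding and multiplying features can be shown with a toy sketch. It assumes two small linear branches applied to the same input vector; the function names and weights are made up for illustration, and the real C2Star module operates on convolutional feature maps inside YOLOv8 rather than on short lists.

```python
def linear(weights: list[list[float]], x: list[float]) -> list[float]:
    """Apply a small weight matrix (given as a list of rows) to a vector."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def sum_fusion(w1, w2, x):
    """Conventional fusion: add the two branch outputs element by element."""
    a, b = linear(w1, x), linear(w2, x)
    return [p + q for p, q in zip(a, b)]


def star_fusion(w1, w2, x):
    """Star operation: multiply the two branch outputs element by element."""
    a, b = linear(w1, x), linear(w2, x)
    return [p * q for p, q in zip(a, b)]


x = [1.0, 2.0]                 # toy input (x1, x2)
w1 = [[1.0, 0.0], [0.0, 1.0]]  # illustrative weights, branch 1
w2 = [[0.0, 1.0], [1.0, 1.0]]  # illustrative weights, branch 2

print(sum_fusion(w1, w2, x))   # [3.0, 5.0]  -> stays linear in x1 and x2
print(star_fusion(w1, w2, x))  # [2.0, 6.0]  -> x1*x2 and x2*(x1 + x2)
```

With addition, each output remains a weighted sum of the inputs. With multiplication, each output contains products of input pairs, which is how the star operation can express richer combinations without extra layers.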

Letting the network decide what really matters

The second innovation, the Attention‑Driven Enhancement Module (ADEM), focuses on what information is trustworthy in each image. Because water often strips away some color channels—especially red—the usual practice of treating each color equally can mislead a detector. ADEM compresses all color‑channel information into a single guiding value that estimates how reliable those channels are overall. It then combines this global cue with spatial attention, which highlights specific regions in the image, using a simple “take the maximum” rule instead of a straightforward sum. In scenes where color cues are strong, the model leans more on channel information; when colors are washed out, it relies more on spatial patterns like shapes and edges. The resulting attention map is finally used to boost or suppress features across the image in a flexible, data‑driven way.
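The "take the maximum" fusion can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the authors' ADEM: plain averages and a sigmoid stand in for whatever learned layers the real module uses, and the function name and data layout (a list of channels, each a grid of values) are hypothetical.

```python
import math


def sigmoid(v: float) -> float:
    return 1.0 / (1.0 + math.exp(-v))


def adem_like_attention(features):
    """Fuse a global channel cue with a spatial map using an element-wise max.

    `features` is a list of C channels, each an H x W nested list of floats.
    Returns (attention, output), where attention is H x W and output is C x H x W.
    """
    channels, height, width = len(features), len(features[0]), len(features[0][0])

    # Global cue: squeeze every channel and pixel into one scalar (assumed: mean + sigmoid).
    total = sum(v for ch in features for row in ch for v in row)
    channel_cue = sigmoid(total / (channels * height * width))

    # Spatial attention: one weight per pixel (assumed: mean across channels + sigmoid).
    spatial = [
        [sigmoid(sum(features[c][i][j] for c in range(channels)) / channels)
         for j in range(width)]
        for i in range(height)
    ]

    # Max fusion: at each pixel, keep whichever cue is stronger instead of summing them.
    attention = [[max(channel_cue, spatial[i][j]) for j in range(width)]
                 for i in range(height)]

    # Rescale every channel by the fused attention map.
    output = [[[features[c][i][j] * attention[i][j] for j in range(width)]
               for i in range(height)]
              for c in range(channels)]
    return attention, output


# Two channels, one row, two pixels: the left pixel is weak, the right one is strong.
attention, output = adem_like_attention([[[0.0, 4.0]], [[0.0, 4.0]]])
print(attention)
```

In this toy input the global cue wins at the weak left pixel and the spatial response wins at the strong right pixel, which is the switching behavior the maximum rule is meant to provide.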

How well does YOLO‑Starfish work?

The authors tested YOLO‑Starfish on three benchmarks: their new UFFD dataset, an existing underwater dataset (RUOD), and the widely used general‑purpose COCO2017 collection. On all three, adding C2Star and ADEM improved the detection scores over baseline YOLOv8, often by several percentage points, while slightly reducing the number of model parameters and computation. The gains were especially notable on difficult cases in UFFD, such as rare “tail” species with few training examples and the catch‑all “unknown fish” category, suggesting better generalization to new or ambiguous appearances. On COCO2017, YOLO‑Starfish also held its own against other state‑of‑the‑art small models, showing that the improvements are broadly useful and not limited to underwater imagery.

What this means for watching the water

In essence, the study shows that thoughtfully designed AI can bridge the gap between clean laboratory images and the messy, color‑distorted world beneath the surface. By pairing a realistic freshwater fish dataset with physically inspired feature processing (C2Star) and adaptive attention (ADEM), YOLO‑Starfish delivers more accurate fish detection without demanding heavyweight hardware. For ecologists, fisheries managers, and roboticists, this kind of tool could make long‑term, large‑scale monitoring of aquatic life far more practical, offering a clearer, automated view of underwater ecosystems and how they are changing over time.

Citation: Gong, R., Xu, J., Zheng, Z. et al. YOLO-Starfish: fish object detection learning complex underwater features. Sci Rep 16, 13964 (2026). https://doi.org/10.1038/s41598-026-44187-z

Keywords: underwater fish detection, computer vision, deep learning, aquatic ecology, robotic monitoring