Clear Sky Science

MM‑FD‑ConvFormer: multimodal frequency‑aware deformable CNN‑transformer network for robust brain tumor classification

Why smarter brain scan reading matters

Brain tumors are among the most feared medical diagnoses, and doctors often rely on MRI scans to spot and characterize them. But reading these images is difficult and time‑consuming, and even experienced specialists can disagree. This study introduces a new artificial intelligence (AI) system, called MM‑FD‑ConvFormer, designed to help classify brain tumors from MRI scans more accurately, more reliably, and in a way that doctors can better interpret.

Seeing tumors from more than one angle

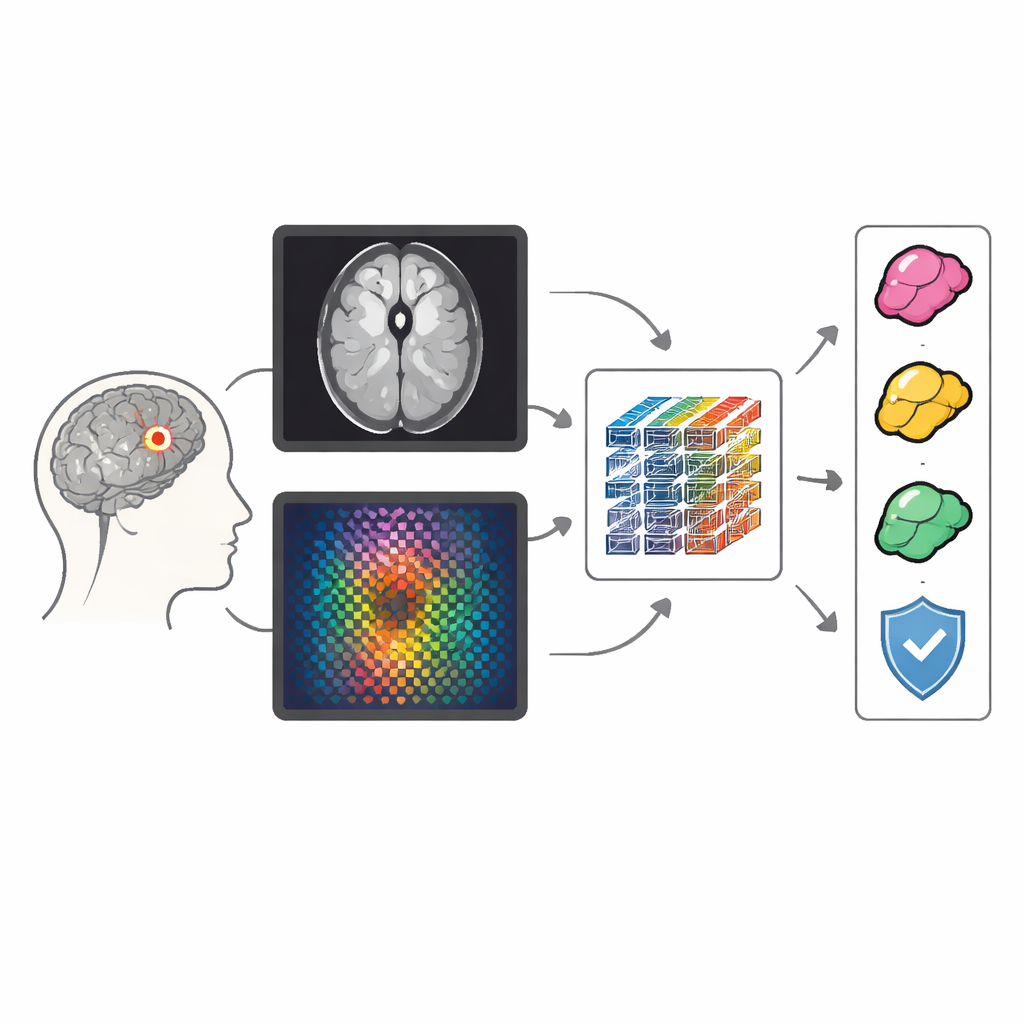

Most existing computer systems look at MRI scans in a straightforward way: they analyze the picture we see on the screen, focusing on shapes, brightness, and edges. MM‑FD‑ConvFormer goes further by treating the same scan as two different but complementary views. One view is the familiar spatial image of the brain; the other is a frequency view created by mathematical transforms that highlight subtle textures and rapid changes in intensity. By combining both views, the model can better capture fine differences between tumors and healthy tissue, especially in cases where the tumor edges are blurry or the appearance varies from one scanner or hospital to another.
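To make the idea of a "frequency view" concrete, here is a minimal sketch in Python. The article does not say which transform the authors use, so the 2D fast Fourier transform below is an assumption chosen for illustration, and the function name `frequency_view` is hypothetical.

```python
import numpy as np

def frequency_view(slice_2d: np.ndarray) -> np.ndarray:
    """Return the log-magnitude spectrum of a 2D image, scaled to [0, 1].

    Assumption: a plain 2D FFT stands in for the unspecified
    "mathematical transforms" mentioned in the text.
    """
    # Move the zero-frequency (average brightness) term to the image center.
    spectrum = np.fft.fftshift(np.fft.fft2(slice_2d.astype(np.float64)))
    # Log scaling keeps weak high-frequency detail visible next to the strong center.
    log_mag = np.log1p(np.abs(spectrum))
    span = log_mag.max() - log_mag.min()
    if span == 0:
        return np.zeros_like(log_mag)
    return (log_mag - log_mag.min()) / span

# Synthetic stand-in for an MRI slice: a bright square on a dark background.
demo_slice = np.zeros((64, 64))
demo_slice[24:40, 24:40] = 1.0
demo_freq = frequency_view(demo_slice)
print(demo_slice.shape, demo_freq.shape)
```

Smooth regions concentrate energy near the center of this view, while sharp edges and fine texture spread energy outward, which is why such a view can complement the ordinary image.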

A layered pathway from scan to decision

The system processes each MRI slice through two parallel pathways. In the first, a modern convolutional network (a refined form of a classic image‑analysis engine) learns patterns of anatomy and tumor shape. In the second, a lighter network analyzes the frequency‑based version of the same slice, which emphasizes texture and boundary clues. These two streams are then brought together and refined by a transformer module, a type of AI architecture originally developed for language but now widely used in vision because it can connect distant regions of an image and understand broader context, such as where a tumor sits within the brain.
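The fusion step can be pictured with a small sketch. It assumes each branch has already turned the slice into a set of feature vectors ("tokens"), and it uses a single round of standard scaled dot-product self-attention over the combined set. The token counts, feature size, and random weights are placeholders, not values from the study, and the real model is certainly larger.

```python
import numpy as np

def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(tokens, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over a token set."""
    q, k, v = tokens @ w_q, tokens @ w_k, tokens @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    weights = softmax(scores, axis=-1)  # each row says where one token "looks"
    return weights @ v, weights

rng = np.random.default_rng(0)
dim = 16  # hypothetical feature size

# Placeholder branch outputs: 49 tokens each, e.g. a 7x7 grid of image patches.
spatial_tokens = rng.normal(size=(49, dim))
frequency_tokens = rng.normal(size=(49, dim))

# Put both streams in one sequence so any token can attend to any other,
# including tokens from the other branch or from distant image regions.
fused_input = np.concatenate([spatial_tokens, frequency_tokens], axis=0)
w_q, w_k, w_v = (rng.normal(scale=dim ** -0.5, size=(dim, dim)) for _ in range(3))
fused, attn = self_attention(fused_input, w_q, w_k, w_v)
print(fused.shape, attn.shape)
```

Because every row of `attn` spans all 98 tokens, a patch near a tumor border can draw on texture cues from the frequency stream and on context from the far side of the brain in one step.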

Adapting to irregular tumor shapes

Many tumors, particularly aggressive gliomas, do not have neat, round outlines. Traditional attention mechanisms in AI look at fixed grid locations, which can miss or blur these irregular structures. MM‑FD‑ConvFormer introduces a deformable cross‑modal attention block that lets the model “bend” its focus to follow the actual tumor shape. Crucially, this block bases its adjustments on a blend of both spatial and frequency information, so structure and texture jointly guide where the model looks. This design improves sensitivity along complex borders and helps align what the two branches have learned, making the final fused representation more informative for classification.
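A much-simplified sketch of the "bending" idea follows. Instead of reading a feature map at fixed grid positions, the code shifts each position by an offset computed from both the spatial and the frequency features, then reads the map at the shifted location with bilinear interpolation. This is an illustrative assumption about how such a block could work: the function names and the tiny offset projection `w_offset` are hypothetical, and a full deformable attention layer would sample several points per location and weight them.

```python
import numpy as np

def bilinear_sample(feature_map, ys, xs):
    """Read a 2D map at fractional (y, x) positions, clamping to the border."""
    h, w = feature_map.shape
    ys = np.clip(ys, 0, h - 1)
    xs = np.clip(xs, 0, w - 1)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, h - 1)
    x1 = np.minimum(x0 + 1, w - 1)
    wy, wx = ys - y0, xs - x0
    top = (1 - wx) * feature_map[y0, x0] + wx * feature_map[y0, x1]
    bottom = (1 - wx) * feature_map[y1, x0] + wx * feature_map[y1, x1]
    return (1 - wy) * top + wy * bottom

def deformable_sample(spatial, frequency, w_offset):
    """Shift each grid position by an offset predicted from both feature maps."""
    h, w = spatial.shape
    grid_y, grid_x = np.meshgrid(np.arange(h), np.arange(w), indexing="ij")
    joint = np.stack([spatial, frequency], axis=-1)  # shape (h, w, 2)
    # tanh keeps every (dy, dx) offset within one pixel of the original position.
    offsets = np.tanh(joint @ w_offset)              # shape (h, w, 2)
    return bilinear_sample(spatial, grid_y + offsets[..., 0], grid_x + offsets[..., 1])

rng = np.random.default_rng(0)
spatial_map = rng.normal(size=(8, 8))
frequency_map = rng.normal(size=(8, 8))
w_offset = rng.normal(scale=0.5, size=(2, 2))  # hypothetical learned projection
bent = deformable_sample(spatial_map, frequency_map, w_offset)
print(bent.shape)
```

With all offsets at zero the function returns the original map unchanged, so the fixed-grid behavior is the special case that the learned offsets depart from.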

Proving reliability across diverse hospitals

To test whether this system would hold up in realistic conditions, the authors trained it on widely used public MRI collections from Kaggle and Figshare and then evaluated it on separate, clinically oriented datasets, including BraTS 2020/2021 and the REMBRANDT collection. MM‑FD‑ConvFormer outperformed strong convolutional, transformer, and hybrid competitors on standard measures like accuracy, F1 score, and area under the ROC curve. It reached about 99.8% accuracy for distinguishing tumor from normal scans and maintained high performance when evaluated on unseen datasets collected with different scanners and protocols. The model also estimates its own uncertainty using repeated, slightly randomized passes, which can flag borderline cases where a human expert’s judgment is especially important.
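The "repeated, slightly randomized passes" can be illustrated with a small sketch. It assumes the randomness comes from dropout left switched on at prediction time (often called Monte Carlo dropout), which the article does not confirm. The toy linear classifier, the function name, and all parameter values are placeholders.

```python
import numpy as np

def softmax(x: np.ndarray) -> np.ndarray:
    """Numerically stable softmax for a 1D score vector."""
    e = np.exp(x - x.max())
    return e / e.sum()

def mc_dropout_predict(features, weights, n_passes=30, drop_rate=0.2, seed=0):
    """Average class probabilities over several randomized passes.

    Returns the mean probabilities and their entropy; higher entropy
    suggests a case worth flagging for human review.
    """
    rng = np.random.default_rng(seed)
    probs = []
    for _ in range(n_passes):
        # Randomly silence a fraction of the features, rescaling the rest.
        keep = rng.random(features.shape) >= drop_rate
        dropped = features * keep / (1.0 - drop_rate)
        probs.append(softmax(dropped @ weights))
    mean_probs = np.stack(probs).mean(axis=0)
    entropy = -np.sum(mean_probs * np.log(mean_probs + 1e-12))
    return mean_probs, entropy

rng = np.random.default_rng(1)
features = rng.normal(size=8)      # placeholder fused features for one scan
weights = rng.normal(size=(8, 4))  # placeholder classifier for four classes
mean_probs, entropy = mc_dropout_predict(features, weights)
print(mean_probs.round(3), round(float(entropy), 3))
```

If the passes agree, the averaged probabilities are sharp and the entropy is low; if they disagree, the entropy rises, which is the kind of signal that could mark a borderline case.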

Making AI decisions visible to clinicians

Beyond raw numbers, the authors focused on whether radiologists could understand and trust the model’s decisions. They used heat‑map techniques such as Grad‑CAM and SHAP to show which parts of the image and which feature stream (spatial or frequency) drove each prediction. These visual explanations aligned well with known tumor regions and boundaries, achieving strong overlap with expert‑drawn masks even though the system was trained only for classification, not segmentation. The frequency branch contributed more in challenging, artifact‑heavy, or cross‑site data, confirming that the dual‑view approach is not just a mathematical trick but genuinely helpful in practice.
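One common way to quantify how well a heat map overlaps an expert-drawn mask is the Dice coefficient, sketched below. The article does not name the overlap measure or the threshold the authors used, so both are assumptions here, and `dice_overlap` is a hypothetical helper.

```python
import numpy as np

def dice_overlap(heatmap: np.ndarray, mask: np.ndarray, threshold: float = 0.5) -> float:
    """Dice coefficient between a thresholded heat map and a binary mask.

    1.0 means perfect overlap; 0.0 means no shared pixels.
    """
    highlighted = heatmap >= threshold
    truth = mask.astype(bool)
    total = highlighted.sum() + truth.sum()
    if total == 0:
        return 1.0  # both empty: treat as trivially matching
    return float(2.0 * np.logical_and(highlighted, truth).sum() / total)

# Toy example: the heat map highlights a 2x2 block, the mask a shifted 2x2 block.
heatmap = np.zeros((4, 4))
heatmap[0:2, 0:2] = 0.9
expert_mask = np.zeros((4, 4), dtype=int)
expert_mask[0:2, 1:3] = 1
print(dice_overlap(heatmap, expert_mask))  # 2 shared pixels of 4 + 4 -> 0.5
```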

What this means for patients and doctors

In simple terms, MM‑FD‑ConvFormer is an AI assistant that looks at brain MRI scans in two complementary ways, flexibly follows the true tumor shape, and can explain where it is “looking” when it makes a call. Across several datasets, it was more accurate and more robust to changes in scanners and hospitals than previous methods, while also offering better visual justification for its decisions and a built‑in sense of when it might be wrong. If validated further in clinical settings and extended to full 3D scans, this kind of technology could support earlier, more consistent tumor detection and help radiologists and neurologists tailor treatment with greater confidence.

Citation: Arockia Selvarathinam, A.X., Lilhore, U.K., Alroobaea, R. et al. MM FD ConvFormer multimodal frequency aware deformable CNN transformer network for robust brain tumor classification. Sci Rep 16, 12669 (2026). https://doi.org/10.1038/s41598-026-43616-3

Keywords: brain tumor MRI, medical imaging AI, deep learning models, tumor classification, model interpretability