Clear Sky Science

A fuzzy-TD3 hybrid reinforcement learning framework for robust trajectory tracking of the Mitsubishi RV-2AJ robotic arm

Smarter Robot Arms for Messy Real-World Jobs

Industrial robot arms are fantastic at repeating the same motion over and over, but they can stumble when the job or environment changes even slightly. This paper presents a new way to give a common factory-style robot arm the steadiness of a traditional controller and the adaptability of artificial intelligence at the same time. The goal is simple but demanding: make the arm follow complex 3D paths precisely, even when its load changes or it is pushed and disturbed, without needing a perfect mathematical model of the machine.

Why Precise Motion Is Hard for Robots

Modern robot arms, like the 5‑joint Mitsubishi RV‑2AJ studied here, are complicated mechanical systems. Their joints influence one another, their motion is highly nonlinear, and in real factories they must cope with friction, vibration, sensor noise, and unknown payloads. Classic control methods, such as PID controllers, are easy to tune and widely used, but they struggle when the robot moves fast, carries different objects, or meets unexpected forces. Deep reinforcement learning, on the other hand, can in principle learn excellent control policies by trial and error, but in practice it can learn slowly, behave erratically at first, and act as a “black box” that engineers find hard to interpret or trust.

Blending Human Rules with Machine Learning

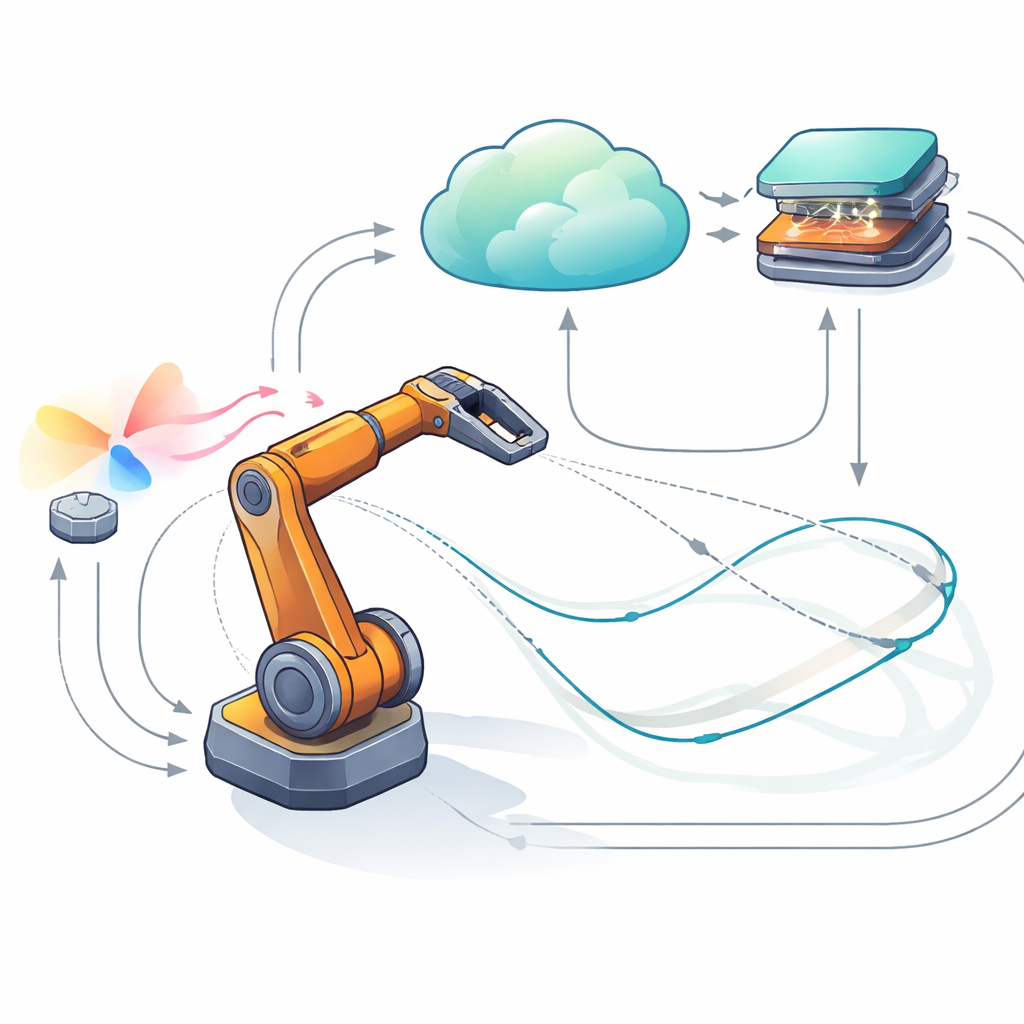

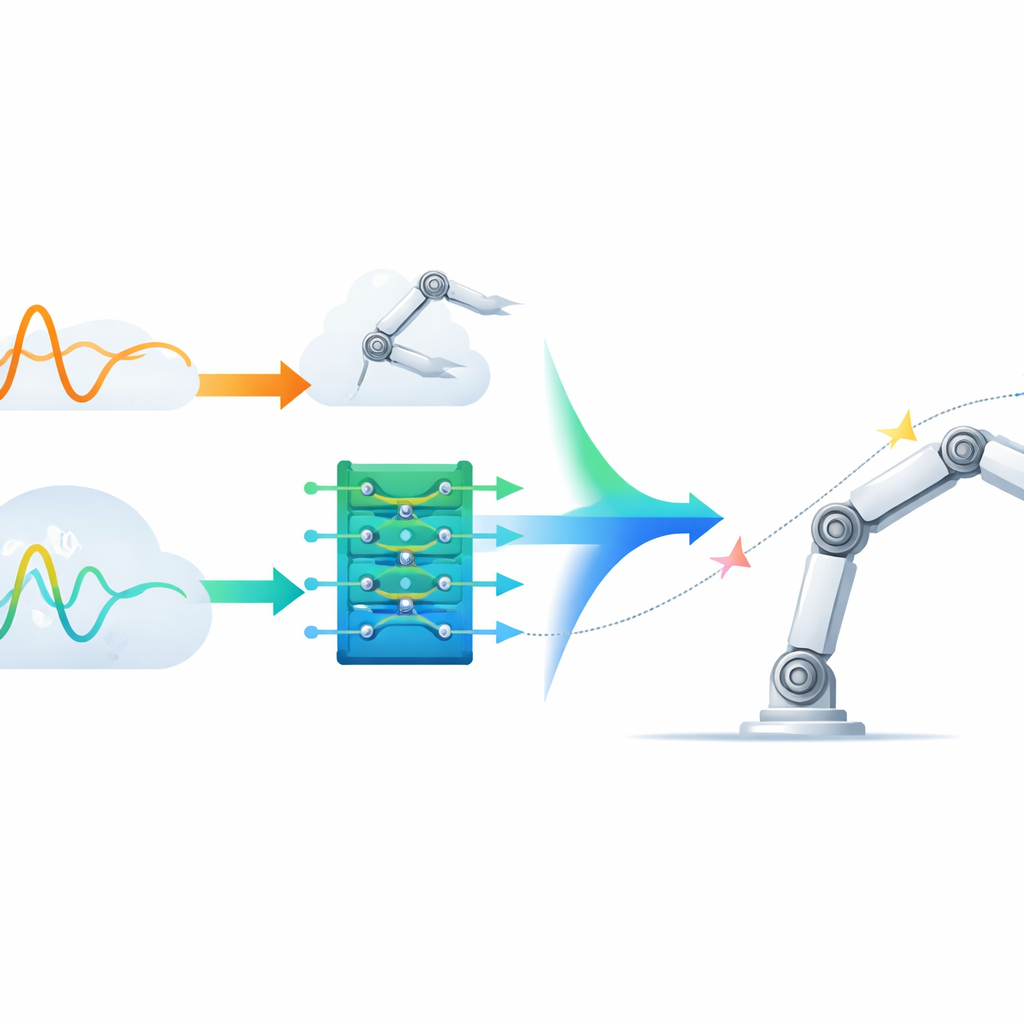

To bridge this gap, the author proposes a hybrid controller that pairs a fuzzy logic system, which encodes expert rules in an interpretable way, with a reinforcement learning method called TD3 (Twin Delayed Deep Deterministic Policy Gradient). In this design, the fuzzy part watches how far each joint deviates from its target and how quickly that error is changing. It then applies immediate corrective torques according to a compact set of “if–then” rules, much as a seasoned operator would. This provides a stable, understandable baseline behavior. At the same time, the TD3 agent learns, through repeated simulation, how to add a smaller “residual” torque that fine‑tunes the motion, compensating for hard‑to‑model effects like nonlinear friction or persistent changes in carried weight. The two torque signals are simply summed at each joint, so the robot is always driven by a partnership between explicit rules and learned adaptation.
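The summed control law described above can be illustrated with a small Python sketch. Everything specific in it is an assumption made for illustration, not the paper's design: the three triangular membership sets, the 3x3 rule table, the normalised error range, the `residual_scale` cap, and the `residual_policy` callable that stands in for a trained TD3 actor.

```python
import numpy as np

def tri(x, a, b, c):
    """Triangular membership with feet at a and c and peak at b."""
    return max(0.0, min((x - a) / (b - a), (c - x) / (c - b)))

# Hypothetical fuzzy sets on a normalised [-1, 1] range: Negative, Zero, Positive.
SETS = {"N": (-2.0, -1.0, 0.0), "Z": (-1.0, 0.0, 1.0), "P": (0.0, 1.0, 2.0)}

# Hypothetical rule table: (error label, error-rate label) -> normalised torque.
RULES = {
    ("N", "N"): -1.0, ("N", "Z"): -0.7, ("N", "P"): 0.0,
    ("Z", "N"): -0.3, ("Z", "Z"): 0.0, ("Z", "P"): 0.3,
    ("P", "N"): 0.0, ("P", "Z"): 0.7, ("P", "P"): 1.0,
}

def fuzzy_torque(e, de, tau_max=1.0):
    """Weighted-average defuzzification over the rule table for one joint."""
    e = float(np.clip(e, -1.0, 1.0))
    de = float(np.clip(de, -1.0, 1.0))
    num = den = 0.0
    for (label_e, label_de), out in RULES.items():
        w = min(tri(e, *SETS[label_e]), tri(de, *SETS[label_de]))  # rule strength
        num += w * out
        den += w
    return tau_max * num / den if den > 0.0 else 0.0

def hybrid_torque(q_ref, q, dq_ref, dq, residual_policy,
                  tau_max=1.0, residual_scale=0.2):
    """Per-joint torque = fuzzy baseline + bounded learned residual."""
    e = np.asarray(q_ref, dtype=float) - np.asarray(q, dtype=float)
    de = np.asarray(dq_ref, dtype=float) - np.asarray(dq, dtype=float)
    tau_fuzzy = np.array([fuzzy_torque(ei, dei, tau_max) for ei, dei in zip(e, de)])
    obs = np.concatenate([e, de])
    # The residual is clipped and scaled so it stays smaller than the baseline.
    tau_res = residual_scale * tau_max * np.clip(residual_policy(obs), -1.0, 1.0)
    return tau_fuzzy + tau_res

# Usage with a placeholder policy that outputs zero residual for 5 joints.
zero_policy = lambda obs: np.zeros(5)
tau = hybrid_torque([0.5] * 5, [0.0] * 5, [0.0] * 5, [0.0] * 5, zero_policy)
```

Capping the residual is one plausible way to keep the rule-based part dominant early in training, when the learned policy is still unreliable.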

A Digital Test Bench for Tough Paths

The hybrid controller is trained and tested in a detailed virtual replica of the Mitsubishi arm built with multibody simulation tools. This environment reproduces the arm’s rigid links, joint limits, and sensor imperfections, letting the learning algorithm explore safely while still facing realistic physics. The author challenges the controller with demanding 3D trajectories (N‑shaped, helical, and spiral paths) that require smooth, coordinated motion of all joints. Uncertainty is injected by altering link masses and inertias and by adding sudden torque pulses that mimic impacts or external pushes. Within this setup, the fuzzy logic component keeps the arm from behaving wildly, while the TD3 agent gradually improves performance by maximizing a reward signal that values accuracy, smoothness, and energy efficiency.
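The two kinds of uncertainty mentioned above, altered link parameters and sudden torque pulses, might be generated along the following lines. This is a minimal sketch under stated assumptions: the uniform scaling range, the rectangular pulse shape, and all numeric values (including the placeholder masses, which are not the RV‑2AJ's real parameters) are hypothetical.

```python
import numpy as np

def perturb_parameters(nominal, rel_spread, rng):
    """Scale each nominal value by a factor drawn uniformly from [1 - s, 1 + s]."""
    nominal = np.asarray(nominal, dtype=float)
    factors = rng.uniform(1.0 - rel_spread, 1.0 + rel_spread, size=nominal.shape)
    return nominal * factors

def torque_pulse(t, t_start, duration, amplitude):
    """Rectangular disturbance: `amplitude` while the pulse is active, else zero."""
    t = np.asarray(t, dtype=float)
    active = (t >= t_start) & (t < t_start + duration)
    return np.where(active, amplitude, 0.0)

# Usage: placeholder link masses (kg) perturbed by up to 20 percent,
# and a 0.1 s pulse of 2.0 N·m starting at t = 1.0 s.
rng = np.random.default_rng(0)
nominal_masses = np.array([1.0, 2.0, 1.5, 0.8, 0.4])  # illustrative values only
perturbed = perturb_parameters(nominal_masses, 0.2, rng)
times = np.array([0.5, 1.0, 1.05, 1.5])
pulse = torque_pulse(times, t_start=1.0, duration=0.1, amplitude=2.0)
```

In a simulation loop, the pulse value would be added to the commanded joint torque at each time step, and the perturbed parameters would replace the nominal ones before an episode starts.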

How the Hybrid Outperforms Its Rivals

Across all tested paths, the hybrid fuzzy‑TD3 controller beats both a pure TD3 controller and a previous hybrid that combined TD3 with a standard PID controller. Error measures that accumulate deviation over time show reductions of around 28–50% compared with TD3 alone and roughly 15–29% compared with the PID‑based hybrid. Even when the robot’s physical parameters are perturbed and external disturbances are applied, the new controller keeps its advantage, cutting errors by about 23–34% versus TD3 and 11–17% versus PID‑TD3. Additional analyses reveal that the learning process converges smoothly, the overall behavior is numerically stable, and the fuzzy rules activate in intuitive patterns—gentle, frequent corrections during normal motion and stronger, rarer interventions when the arm drifts far from its target.
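To make the percentage comparisons concrete, here is a small sketch of how an accumulated error measure and a relative reduction could be computed. The article does not name the exact metric, so the integral of absolute error (IAE) used here is an assumption, and the trajectories are synthetic placeholders rather than results from the paper.

```python
import numpy as np

def integral_abs_error(ref, actual, dt):
    """Integral of absolute error, approximated with a rectangle rule."""
    err = np.abs(np.asarray(ref, dtype=float) - np.asarray(actual, dtype=float))
    return float(err.sum() * dt)

def percent_reduction(baseline, improved):
    """Relative improvement of `improved` over `baseline`, in percent."""
    return 100.0 * (baseline - improved) / baseline

# Usage on synthetic data: a constant offset that is halved by a "better" controller.
ref = np.zeros(10)
iae_baseline = integral_abs_error(ref, np.full(10, 1.0), dt=0.1)
iae_improved = integral_abs_error(ref, np.full(10, 0.5), dt=0.1)
reduction = percent_reduction(iae_baseline, iae_improved)
```

Because the metric sums error over the whole trajectory, a controller that recovers quickly from a disturbance scores better than one with the same peak error but a slower recovery.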

Balancing Precision and Energy Use

The study also shows that the controller can be tuned to trade a little precision for noticeable energy savings. By adjusting a single weight in the reward function, the algorithm learns to reduce average joint torque by more than 20% while only slightly increasing the tracking error. This tunability means the same control scheme can be adapted to tasks where efficiency matters more than microscopic accuracy, or vice versa, without redesigning the whole system.
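One way such a tunable trade-off can be expressed is a reward that penalises tracking error, torque changes, and torque magnitude with separate weights. The quadratic form, the weight names, and the default values below are assumptions chosen for illustration; the article does not give the paper's actual reward function.

```python
import numpy as np

def reward(e, tau, tau_prev, w_track=1.0, w_smooth=0.01, w_energy=0.001):
    """Negative weighted cost: tracking error, torque change, torque magnitude."""
    e, tau, tau_prev = (np.asarray(x, dtype=float) for x in (e, tau, tau_prev))
    tracking = np.sum(e ** 2)               # accuracy term
    smoothness = np.sum((tau - tau_prev) ** 2)  # penalises abrupt torque changes
    energy = np.sum(tau ** 2)               # proxy for actuation effort
    return float(-(w_track * tracking + w_smooth * smoothness + w_energy * energy))

# Usage: the same state and action scored under two energy weights.
e = [0.1] * 5
tau = [1.0] * 5
r_precise = reward(e, tau, tau, w_energy=0.001)
r_frugal = reward(e, tau, tau, w_energy=0.01)
```

Raising `w_energy` makes large torques more costly, so an agent trained under that setting would be pushed toward gentler actuation at the expense of some tracking accuracy.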

What This Means for Future Robots

In everyday terms, this work demonstrates a promising recipe for more dependable and understandable robot arms: let a clear set of human‑readable rules handle fast corrections and safety, while a learning algorithm quietly refines performance over time. The result is a controller that tracks intricate paths more accurately, shrugs off disturbances, uses energy more wisely, and remains explainable to engineers. Such hybrid designs could help bring advanced AI‑driven control out of the lab and into real factories, warehouses, and service robots, where reliability and transparency are just as important as raw intelligence.

Citation: Hazem, Z.B. A fuzzy-TD3 hybrid reinforcement learning framework for robust trajectory tracking of the Mitsubishi RV-2AJ robotic arm. Sci Rep 16, 12269 (2026). https://doi.org/10.1038/s41598-026-42615-8

Keywords: robotic arm control, reinforcement learning, fuzzy logic, trajectory tracking, robust automation